Linkerd2: telemetry: incorrect "meshed" count

I have 3 similar deploys (strest-client-*), each with only one pod. linkerd stat reports 2/2 meshed pods for two of these deploys, which is incorrect.

:; kubectl get po -n strest-new-balance --show-labels -l strest-role=client

NAME READY STATUS RESTARTS AGE LABELS

strest-client-p2c-pending-6bf684f5f4-f6ntr 2/2 Running 0 9m26s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-client-p2c-pending,pod-template-hash=6bf684f5f4,proxy=p2c-pending,strest-role=client

strest-client-p2c-ready-7b6fd8f8c4-ftkn8 2/2 Running 0 9m26s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-client-p2c-ready,pod-template-hash=7b6fd8f8c4,proxy=p2c-ready,strest-role=client

strest-client-p2c-unready-6bf684f5f4-wvnhs 2/2 Running 0 9m26s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-client-p2c-unready,pod-template-hash=6bf684f5f4,proxy=p2c-pending,strest-role=client

:; linkerd stat -n strest-new-balance deploy

NAME MESHED SUCCESS RPS LATENCY_P50 LATENCY_P95 LATENCY_P99 TCP_CONN

strest-client-p2c-pending 2/2 - - - - - -

strest-client-p2c-ready 1/1 - - - - - -

strest-client-p2c-unready 2/2 - - - - - -

strest-server-fast 10/10 100.00% 1989.7rps 19ms 74ms 96ms 28

strest-server-slow 10/10 100.00% 151.6rps 93ms 716ms 949ms 12

Additionally, the "meshed" count for both strest-server deploys is incorrect! linkerd stat reports 10/10 for each, but the fast deploy has 7 pods and the slow deploy has 3:

:; kubectl get po -n strest-new-balance --show-labels

NAME READY STATUS RESTARTS AGE LABELS

strest-client-p2c-pending-6bf684f5f4-f6ntr 2/2 Running 0 11m app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-client-p2c-pending,pod-template-hash=6bf684f5f4,proxy=p2c-pending,strest-role=client

strest-client-p2c-ready-7b6fd8f8c4-ftkn8 2/2 Running 0 11m app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-client-p2c-ready,pod-template-hash=7b6fd8f8c4,proxy=p2c-ready,strest-role=client

strest-client-p2c-unready-6bf684f5f4-wvnhs 2/2 Running 0 11m app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-client-p2c-unready,pod-template-hash=6bf684f5f4,proxy=p2c-pending,strest-role=client

strest-server-fast-67774d96b6-2g2nm 2/2 Running 0 9m31s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-server-fast,pod-template-hash=67774d96b6,strest-role=server

strest-server-fast-67774d96b6-4fb26 2/2 Running 0 9m7s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-server-fast,pod-template-hash=67774d96b6,strest-role=server

strest-server-fast-67774d96b6-64l4g 2/2 Running 0 9m40s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-server-fast,pod-template-hash=67774d96b6,strest-role=server

strest-server-fast-67774d96b6-7wnj8 2/2 Running 0 9m40s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-server-fast,pod-template-hash=67774d96b6,strest-role=server

strest-server-fast-67774d96b6-9d2n8 2/2 Running 0 9m14s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-server-fast,pod-template-hash=67774d96b6,strest-role=server

strest-server-fast-67774d96b6-dcdzc 2/2 Running 0 9m27s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-server-fast,pod-template-hash=67774d96b6,strest-role=server

strest-server-fast-67774d96b6-hss6s 2/2 Running 0 9m18s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-server-fast,pod-template-hash=67774d96b6,strest-role=server

strest-server-slow-78f6868957-bhkr2 2/2 Running 0 7m25s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-server-slow,pod-template-hash=78f6868957,strest-role=server

strest-server-slow-78f6868957-c5pcz 2/2 Running 0 7m32s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-server-slow,pod-template-hash=78f6868957,strest-role=server

strest-server-slow-78f6868957-vtznl 2/2 Running 0 7m32s app=strest,linkerd.io/control-plane-ns=linkerd,linkerd.io/proxy-deployment=strest-server-slow,pod-template-hash=78f6868957,strest-role=server

:; kubectl get deploy -n strest-new-balance --show-labels

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE LABELS

strest-client-p2c-pending 1 1 1 1 12m app=strest,proxy=p2c-pending,strest-role=client

strest-client-p2c-ready 1 1 1 1 12m app=strest,proxy=p2c-ready,strest-role=client

strest-client-p2c-unready 1 1 1 1 12m app=strest,proxy=p2c-unready,strest-role=client

strest-server-fast 7 7 7 7 12m app=strest,strest-role=server,strest-server-profile=fast

strest-server-slow 3 3 3 3 12m app=strest,strest-role=server,strest-server-profile=slow

This is the same as https://github.com/linkerd/linkerd2/issues/2548

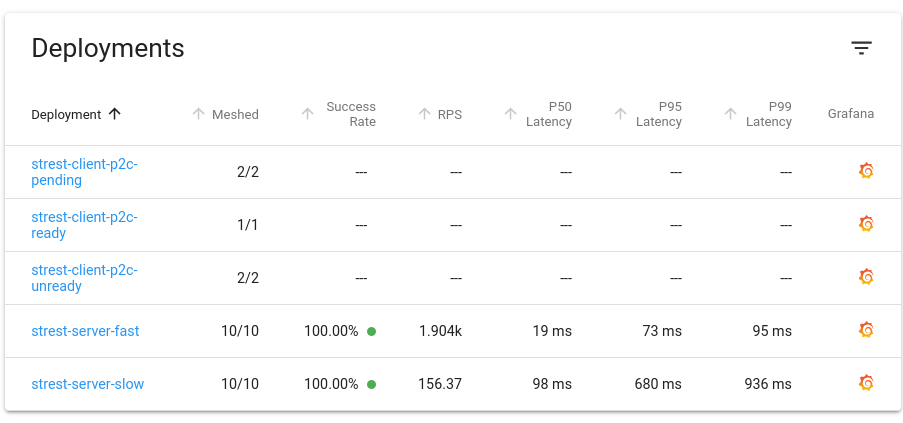

Same behavior on web:

I'd be curious to see what happens in this code section https://github.com/linkerd/linkerd2/blob/ecc4465cd12eebf9d99cb97294ccabc8003b5dfd/controller/api/public/stat_summary.go#L431-L449 when we set up a test that uses the pods described above as a test fixture. It could be that we bundle all pods in a given namespace when we request stats for pods without giving a specific pod name.
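As a rough illustration of that fixture idea, here is a minimal Python sketch that models the pods listed above and counts them two ways: by label-selector match and by the linkerd.io/proxy-deployment label. It is not the logic in stat_summary.go. The deployment selectors are not shown in this thread, so the SELECTORS values are assumptions, guessed so that each selector matches the labels of its own pod. The helper names (make_pod, count_by_selector, count_by_owner) are hypothetical.

```python
def make_pod(deploy, suffix, labels):
    """Build a pod record; linkerd.io/proxy-deployment names the owning deploy."""
    return {
        "name": f"{deploy}-{suffix}",
        "labels": {**labels, "linkerd.io/proxy-deployment": deploy},
    }


CLIENT = {"app": "strest", "strest-role": "client"}
SERVER = {"app": "strest", "strest-role": "server"}

# Pod names and labels are copied from the kubectl output in this thread
# (pod-template-hash is omitted because it plays no role in the sketch).
PODS = [
    make_pod("strest-client-p2c-pending", "6bf684f5f4-f6ntr",
             {**CLIENT, "proxy": "p2c-pending"}),
    make_pod("strest-client-p2c-ready", "7b6fd8f8c4-ftkn8",
             {**CLIENT, "proxy": "p2c-ready"}),
    # The unready pod carries proxy=p2c-pending in the output above.
    make_pod("strest-client-p2c-unready", "6bf684f5f4-wvnhs",
             {**CLIENT, "proxy": "p2c-pending"}),
]
PODS += [make_pod("strest-server-fast", f"67774d96b6-{s}", SERVER)
         for s in ("2g2nm", "4fb26", "64l4g", "7wnj8", "9d2n8", "dcdzc", "hss6s")]
PODS += [make_pod("strest-server-slow", f"78f6868957-{s}", SERVER)
         for s in ("bhkr2", "c5pcz", "vtznl")]

# ASSUMPTION: these selectors are guesses, not taken from the real specs.
SELECTORS = {
    "strest-client-p2c-pending": {**CLIENT, "proxy": "p2c-pending"},
    "strest-client-p2c-ready": {**CLIENT, "proxy": "p2c-ready"},
    "strest-client-p2c-unready": {**CLIENT, "proxy": "p2c-pending"},
    "strest-server-fast": SERVER,
    "strest-server-slow": SERVER,
}


def count_by_selector(pods, selector):
    """Count pods whose labels contain every key/value pair of the selector."""
    return sum(
        all(p["labels"].get(k) == v for k, v in selector.items()) for p in pods
    )


def count_by_owner(pods, deploy):
    """Count pods whose linkerd.io/proxy-deployment label names the deploy."""
    return sum(p["labels"]["linkerd.io/proxy-deployment"] == deploy for p in pods)


if __name__ == "__main__":
    for name, selector in SELECTORS.items():
        print(name, count_by_selector(PODS, selector), count_by_owner(PODS, name))
```

Under these assumed selectors, selector matching gives 2, 1, 2, 10 and 10, which are the MESHED totals that linkerd stat printed, while the owner label gives 1, 1, 1, 7 and 3, which agrees with kubectl get deploy. That is only consistent with overlapping selectors being the cause. It does not confirm it, and the real resource specs would be needed to check.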

@dadjeibaah I would like to work on it. I know the issue is not labeled as a good first issue, but I want to start contributing to the project. I was taking a look at #1967, and both touch a similar part of the codebase.

@olix0r that's probably an intermittent bug, right? Were you able to reproduce it? If yes, could you please provide your Kubernetes resource specs? AFAIK the behavior was not reproducible in #2548.

@jonathanbeber this is the config I last noticed this with: https://gist.github.com/olix0r/25020ab317e7b2cc5bf8cb6fd2de42ff

nice, will work on it
