LightGBM: [CRITICAL BUG][Python] cannot write() UTF-8 strings due to UnicodeEncodeError

Environment info

Operating System: Any

CPU/GPU model: Any

Python version: Any

LightGBM version or commit hash: ce082425

Error message

UnicodeEncodeError: 'ascii' codec can't encode characters in position 0-2: ordinal not in range(128)

Reproducible examples

import lightgbm

import numpy

X = numpy.random.random(size=(100, 3))

y = numpy.random.random(size=100)

dataset = lightgbm.Dataset(X, y)

params = {}

bst = lightgbm.train(params, dataset)

text = '日本語' # This means Japanese :)

open('tmp.txt', 'w').write(text)

The code below, without the LightGBM calls, works fine.

text = '日本語'

open('tmp.txt', 'w').write(text)

Steps to reproduce

- Just run the above code

6b68967d9ee6ab68e353d698cbb32c992b22ee81 (#2891) breaks user locale settings.

LightGBM is just a library, and it must never change the user's locale, which is set in the user's own environment😡

All 11 comments

In Python, the locale is always "C" by default, still as seen in the comment ninia/jep#205 (comment), it is possible to break the model by changing locales.

from #2891.

This is not true!

The default locale depends on the user's environment.

You can see that here:

❯ python -V

Python 3.7.7

~

❯ locale

LANG="ja_JP.UTF-8"

LC_COLLATE="ja_JP.UTF-8"

LC_CTYPE="UTF-8"

LC_MESSAGES="C"

LC_MONETARY="ja_JP.UTF-8"

LC_NUMERIC="ja_JP.UTF-8"

LC_TIME="ja_JP.UTF-8"

LC_ALL=

~

❯ ipython

Python 3.7.7 (default, Mar 15 2020, 18:12:44)

Type 'copyright', 'credits' or 'license' for more information

IPython 7.13.0 -- An enhanced Interactive Python. Type '?' for help.

In [1]: import locale

In [2]: locale.getdefaultlocale()

Out[2]: (None, 'UTF-8')

The default locale is not "C".

Hello @henry0312 ,

Thanks for the report. That is indeed a bug, and I'm sorry about it. It is not supposed to happen: the locale should pop back to your system default after train finishes. Also, this does not override your system locale, only the locale of the running process.

True, this is not correct:

In Python, the locale is always "C" by default, still as seen in the comment ninia/jep#205 (comment), it is possible to break the model by changing locales.

from #2891.

However, as far as the loaded LightGBM shared libraries are concerned, it behaves "as if" that were the case.

Python is very careful in how it handles the locale. Even though it reports your system locale, unless you explicitly pass a locale to it, it definitely does NOT override the locale seen by the loaded shared libraries and the C/C++ standard library functions, which behave as if the locale is "C", the default at process startup:

In [2]: locale.getdefaultlocale()

Out[2]: (None, 'UTF-8')

The default locale is not "C".

Which means, when you call lightgbm through Python, your locale does not matter to the LightGBM code.
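The difference between what the environment requests and what the running process actually uses can be inspected from Python. The sketch below is only an illustration: `current_locales` is a hypothetical helper name, and it relies on the documented behaviour of `locale.setlocale()` that passing a category without a locale string queries the current setting and changes nothing. As far as I know, the Python documentation states that a program starts in the "C" locale except for LC_CTYPE, so LC_NUMERIC would normally print as "C" here even when LANG is set to something else.

```python
import locale


def current_locales():
    """Return the process's current setting for a few locale categories."""
    names = ("LC_CTYPE", "LC_NUMERIC", "LC_ALL")
    # Calling setlocale() with only a category queries it; nothing is changed.
    return {name: locale.setlocale(getattr(locale, name)) for name in names}


for name, value in current_locales().items():
    print(f"{name} = {value}")
```

Running this before and after a library call is one way to see whether that call left the process locale in a different state.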

However, setting the locale in Python will break your model, as you can see in https://github.com/ninia/jep/issues/205, or as reported in https://github.com/microsoft/LightGBM/issues/2890.

The old patch should not be reverted, because without it, apps cannot in some circumstances be deployed robustly across different environments. It should be fixed instead (as I said, I'm sorry; it should have been tested more thoroughly). I don't have time today though to fix it already.

Note that this does not override the system locale, only the locale of this single process for the duration of training (and it would be restored afterwards, if it weren't for the locale not being popped back properly, as you reported).

There is an alternative: rewrite all the code that reads and writes numbers so that it no longer relies on functions that depend on the process locale, such as std::stod(). That would most likely also slow down model save/load and would require even more extensive work and testing, so it is not going to be a quick fix.
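To illustrate the distinction between locale-dependent and locale-independent number parsing, here is a small Python analogy. It is not the C++ change discussed above: `parse_both` is a hypothetical helper, and the sketch assumes only that Python's built-in `float()` ignores the process locale while `locale.atof()` follows LC_NUMERIC. The `pt_PT.UTF-8` locale may not be installed on a given machine, so that case is guarded.

```python
import locale

VALUE = "10.026"


def parse_both(locale_name):
    """Parse VALUE with float() and locale.atof() under the given LC_NUMERIC."""
    saved = locale.setlocale(locale.LC_NUMERIC)  # query only
    try:
        locale.setlocale(locale.LC_NUMERIC, locale_name)
        # float() is locale-independent; locale.atof() follows LC_NUMERIC.
        return float(VALUE), locale.atof(VALUE)
    finally:
        locale.setlocale(locale.LC_NUMERIC, saved)  # always restore


print("C:", parse_both("C"))
try:
    print("pt_PT.UTF-8:", parse_both("pt_PT.UTF-8"))
except locale.Error:
    print("pt_PT.UTF-8 is not installed on this system")
```

Under a locale whose decimal separator is a comma, the first value of the pair stays the same while the second may differ, which is the kind of discrepancy that breaks a text model format written with "." as the decimal point.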

Ping @henry0312 and @guolinke, I would like to hear your opinion on the matter. Reverting the fix is not good, as it breaks systems that might not be able to be reliably configured, although there is the aforementioned alternative, which I'm not sure anyone has time to implement.

By the way, here is a snippet demonstrating the proper implementation https://www.gnu.org/software/libc/manual/html_node/Setting-the-Locale.html#Setting-the-Locale.
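The save-and-restore pattern described on that page (query the current locale, change it, then put the saved value back) can be sketched from the Python side as follows. This is an assumption-laden illustration, not the C++ fix under discussion: `preserve_locale` is a hypothetical helper that exists neither in LightGBM nor in the standard library, and because `setlocale()` affects the whole process, it is not safe to use while other threads depend on the locale.

```python
import contextlib
import locale


@contextlib.contextmanager
def preserve_locale(category=locale.LC_ALL):
    """Hypothetical helper: restore the process locale when the block exits."""
    saved = locale.setlocale(category)  # query only, nothing is changed
    try:
        yield saved
    finally:
        locale.setlocale(category, saved)  # put the saved setting back


before = locale.setlocale(locale.LC_ALL)
with preserve_locale():
    # Stand-in for a call that changes the process locale as a side effect.
    locale.setlocale(locale.LC_ALL, "C")
after = locale.setlocale(locale.LC_ALL)
print(before == after)
```

The `try`/`finally` matters: the saved locale is restored even if the wrapped call raises.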

@AlbertoEAF Thank you for your explanation.

(Maybe) I now understand that this is a bug, and what you wanted to do in https://github.com/microsoft/LightGBM/commit/6b68967d9ee6ab68e353d698cbb32c992b22ee81.

This issue is caused by handling the locale with multi-threading, right?

I googled and consulted Stack Overflow, but I still don't know how to fix it 😢

Which means, when you call lightgbm through Python, your locale does not matter to the LightGBM code.

Oh, I was wrong. Actually, Python seems to ignore my locale settings.

I tried to check what really happens again.

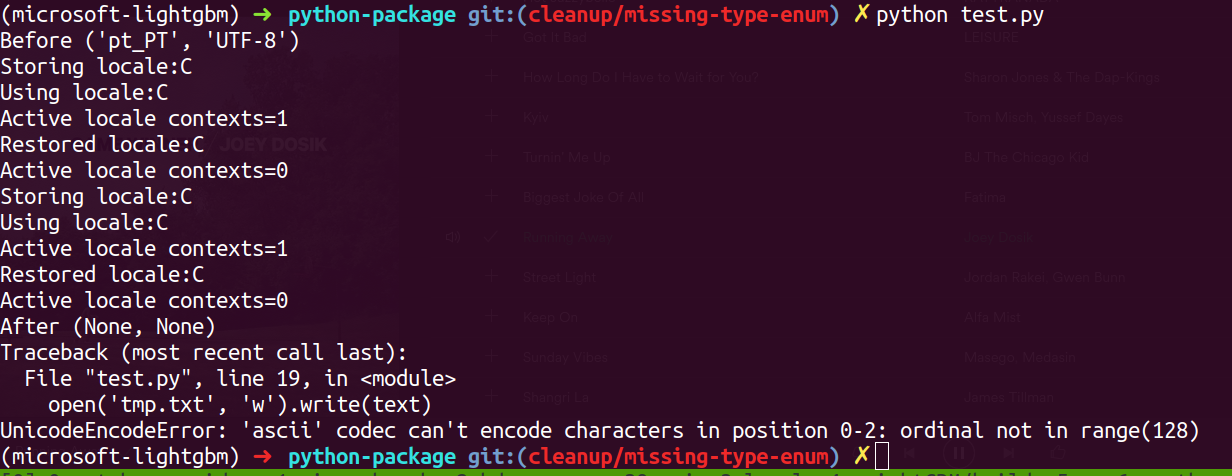

import locale

import lightgbm

import numpy

print('Before', locale.getlocale())

X = numpy.random.random(size=(100, 3))

y = numpy.random.random(size=100)

dataset = lightgbm.Dataset(X, y)

params = {'verbose': -1}

bst = lightgbm.train(params, dataset)

print('After', locale.getlocale())

text = '日本語' # This means Japanese :)

open('tmp.txt', 'w').write(text)

The above code outputs:

❯ python test_locale.py

Before (None, 'UTF-8')

After (None, None)

Traceback (most recent call last):

File "test_locale.py", line 18, in <module>

open('tmp.txt', 'w').write(text)

UnicodeEncodeError: 'ascii' codec can't encode characters in position 0-2: ordinal not in range(128)

The output tells me that the locale becomes None after train.
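As a user-side stopgap, the write itself can be made independent of the process locale by passing an explicit encoding to `open()`. This is only a sketch under that assumption: it does not fix the locale change, and the temporary file name is made up for the example. When `encoding` is omitted, `open()` in text mode falls back to a locale-derived default, which is why the traceback above mentions the 'ascii' codec.

```python
import os
import tempfile

text = '日本語'
# Hypothetical file name, placed in the system temp directory.
path = os.path.join(tempfile.gettempdir(), 'tmp_utf8_example.txt')

# An explicit encoding bypasses the locale-derived default encoding.
with open(path, 'w', encoding='utf-8') as f:
    f.write(text)

with open(path, encoding='utf-8') as f:
    round_trip = f.read()

print(round_trip == text)
```

Using `with` also closes the file deterministically, which the one-line `open(...).write(...)` form does not guarantee.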

I don't have time today though to fix it already.

Do you mean you have already fixed it (or that you already know the solution)?

(I'm sorry, I'm not good at English, so I may not have understood you correctly.)

If it is done, can you send a PR?

There is an alternative which is to rewrite all the code concerned with reading and writing numbers to use functions that don't depend on the process locale like std::stod(), most likely will also slow-down the model save/load and requires even more extensive work and testing, but that is not going to be a quick-fix.

That seems like really hard work...😨

We want to avoid this solution (we also don't have much time).

I'd like to ask you and @guolinke why you tried to fix the SWIG locale problem in the C++ layer.

I think it should be fixed in the SWIG layer, because the problem only exists around that layer.

Ping @henry0312 and @guolinke , I would like to hear your opinion on the matter. Reversing the fix is not good, as it breaks systems that might not be able to be reliably configured, although there is the aforementioned alternative, which I'm not sure who has time to implement.

In my opinion, if it takes a long time to fix, we should revert it for now and then try to fix (and develop) the locale handling in another branch, not in the master branch, because the master branch should stay as usable as possible.

In short, it depends on how long the fix takes.

And I don't know how to fix it yet...

Do you have any idea?

Hello @henry0312 I'm at work but later today I'll reply to you (in summary the fix should not take long, testing takes more time, I have to test your example with Python and never tried compiling the python version - always used the pre-built version from pip).

Okay, I'll wait for your fix. Of course, I'll help with testing :)

Hello again @henry0312 :)

The fix was introduced at the C++ layer because if the process locale is changed outside LightGBM, reading and saving our model format breaks (e.g. after calling locale.setlocale(locale.LC_ALL, "pt_PT.UTF-8"); see https://github.com/ninia/jep/issues/205#issuecomment-544078377 in https://github.com/ninia/jep/issues/205). That is a bug: if you change your locale, our model file should still be read correctly and should not depend on it.

Since calling the train is not a call that occurs in a threaded manner (only the operations in it are threaded), this is safe.

The issue is that I didn't properly implement the locale pop-back state (I should have tested better when developing it).

The solution is very simple to implement: https://www.gnu.org/software/libc/manual/html_node/Setting-the-Locale.html#Setting-the-Locale

I will just take more time testing, because I don't know how to compile from source to test the python version (but will try it now).

Hmm strangely, the locales were being popped back correctly on the C layer already:

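// print() and LocaleContext are helpers of the test program and are not shown here.
int main()
{
    const std::string VALUE = "10.026";

    print("Simulating externally called setlocale()...");
    std::locale::global(std::locale("pt_PT.UTF-8"));
    print(std::stod(VALUE));  // parsed under the pt_PT locale

    {
        // The "C" locale is active only inside this scope.
        LocaleContext withLocaleContext("C");
        print("locale context = ON");
        print(std::stod(VALUE));
    }

    print("locale context = OFF");
    print(std::stod(VALUE));  // back under the pt_PT locale

    return 0;
}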

prints this:

10

locale context = ON

10.026

locale context = OFF

10

Thus the same should happen on the Python side.

I don't understand yet why it's not happening the same on the Python side.

I need to test a build of the python lightgbm package with your example so I can understand.

@henry0312 can you open a terminal and run "env" on your terminal and post here the result?

Ok, I managed to build the Python version and instrument the C code to see what's going on. Using @henry0312 's second snippet:

Unfortunately it seems that there are some incompatibilities with Python's handling of locale even if the underlying C layer correctly sets the locale back.

I believe we should revert my commit until I understand a bit better why Python does this and how to fix it well, so we have a robust solution for this and the model locale-dependent issue.

Do you agree? @henry0312 @guolinke @imatiach-msft

Thanks!

@AlbertoEAF Thank you for your quick response.

The issue is that I didn't properly implement the locale pop-back state (I should have tested better when developing it).

The solution is very simple to implement: https://www.gnu.org/software/libc/manual/html_node/Setting-the-Locale.html#Setting-the-Locale

Though I'm not sure, I guess that the sample implementation isn't thread-safe, either.

I will just take more time testing, because I don't know how to compile from source to test the python version (but will try it now).

You can easily build the python-package by following https://github.com/microsoft/LightGBM/tree/master/python-package.

(BTW, I install Python via pyenv)

@henry0312 can you open a terminal and run "env" on your terminal and post here the result?

Here is the output of env.

env outputs

CLICOLOR=1

COLORFGBG=15;0

COLORTERM=truecolor

FPATH=/Users/henry/.zplug/repos/robbyrussell/oh-my-zsh/plugins/gem:/Users/henry/.zplug/repos/robbyrussell/oh-my-zsh/plugins/pip:/Users/henry/.zplug/repos/docker/compose/contrib/completion/zsh:/Users/henry/.zplug/repos/docker/docker-ce/components/cli/contrib/completion/zsh:/Users/henry/.zplug/repos/zplug/zplug/misc/completions:/Users/henry/.zplug/repos/zplug/zplug/base/sources:/Users/henry/.zplug/repos/zplug/zplug/autoload:/Users/henry/.zplug/repos/zplug/zplug/base/utils:/Users/henry/.zplug/repos/zplug/zplug/base/job:/Users/henry/.zplug/repos/zplug/zplug/base/log:/Users/henry/.zplug/repos/zplug/zplug/base/io:/Users/henry/.zplug/repos/zplug/zplug/base/core:/Users/henry/.zplug/repos/zplug/zplug/base/base:/Users/henry/.zplug/repos/zplug/zplug/autoload/commands:/Users/henry/.zplug/repos/zplug/zplug/autoload/options:/Users/henry/.zplug/repos/zplug/zplug/autoload/tags:/usr/local/share/zsh/site-functions:/Users/henry/.zsh/completions:/usr/share/zsh/site-functions:/usr/share/zsh/5.3/functions:/Users/henry/.zplug/repos/zsh-users/zsh-completions/src

GOPATH=/Users/henry/go

HOME=/Users/henry

ITERM_PROFILE=Default

ITERM_SESSION_ID=w0t4p0:A5176BB6-8CE9-4C41-ACC1-403B69ED3749

LANG=ja_JP.UTF-8

LC_CTYPE=UTF-8

LC_MESSAGES=C

LC_TERMINAL=iTerm2

LC_TERMINAL_VERSION=3.3.9

LOGNAME=henry

OLDPWD=/Users/henry

OMPI_CXX=clang++

PATH=/usr/local/Caskroom/google-cloud-sdk/latest/google-cloud-sdk/bin:/Users/henry/.zplug/repos/zplug/zplug/bin:/Users/henry/.zplug/bin:/usr/local/var/pyenv/shims:/usr/local/var/rbenv/shims:/usr/local/opt/gnu-tar/libexec/gnubin:/Users/henry/.cargo/bin:/Users/henry/local/bin:/usr/local/bin:/usr/local/sbin:/usr/bin:/bin:/usr/sbin:/sbin:/Users/henry/go/bin:/Library/TeX/texbin

PERIOD=30

PROMPT_EOL_MARK=

PWD=/Users/henry

PYENV_ROOT=/usr/local/var/pyenv

PYENV_SHELL=zsh

RBENV_ROOT=/usr/local/var/rbenv

RBENV_SHELL=zsh

SHELL=/bin/zsh

SHLVL=1

TERM=xterm-256color

TERM_PROGRAM=iTerm.app

TERM_PROGRAM_VERSION=3.3.9

TERM_SESSION_ID=w0t4p0:A5176BB6-8CE9-4C41-ACC1-403B69ED3749

TEXINPUTS=:/Users/henry/.TeX

TMPDIR=/var/folders/0x/1003t9zx6qx2hpsfb964c91m0000gn/T/

USER=henry

VIRTUAL_ENV_DISABLE_PROMPT=12

XPC_FLAGS=0x0

XPC_SERVICE_NAME=0

ZPLUG_BIN=/Users/henry/.zplug/bin

ZPLUG_CACHE_DIR=/Users/henry/.zplug/cache

ZPLUG_ERROR_LOG=/Users/henry/.zplug/.error_log

ZPLUG_FILTER=fzf-tmux:fzf:peco:percol:fzy:zaw

ZPLUG_HOME=/Users/henry/.zplug

ZPLUG_LOADFILE=/Users/henry/.zplug/packages.zsh

ZPLUG_LOG_LOAD_FAILURE=false

ZPLUG_LOG_LOAD_SUCCESS=false

ZPLUG_PROTOCOL=HTTPS

ZPLUG_REPOS=/Users/henry/.zplug/repos

ZPLUG_ROOT=/Users/henry/.zplug/repos/zplug/zplug

ZPLUG_THREADS=16

ZPLUG_USE_CACHE=true

ZSH=/Users/henry/.zplug/repos/robbyrussell/oh-my-zsh

ZSH_CACHE_DIR=/Users/henry/.zplug/repos/robbyrussell/oh-my-zsh/cache/

_=/usr/bin/env

_ZPLUG_AWKPATH=/Users/henry/.zplug/repos/zplug/zplug/misc/contrib

_ZPLUG_CONFIG_SUBSHELL=:

_ZPLUG_OHMYZSH=robbyrussell/oh-my-zsh

_ZPLUG_PREZTO=sorin-ionescu/prezto

_ZPLUG_URL=https://github.com/zplug/zplug

_ZPLUG_VERSION=2.4.2

__CF_USER_TEXT_ENCODING=0x0:1:14

I believe we should revert my commit until I understand a bit better why Python does this and how to fix it well, so we have a robust solution for this and the model locale-dependent issue.

Do you agree? @henry0312 @guolinke @imatiach-msft

I agree.

ping @guolinke
