LightGBM: Question about fobj and feval

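    import numpy as np

    # Custom objective (fobj): returns the gradient and Hessian of the log loss
    def loglikelihood(preds, train_data):
        labels = train_data.get_label()
        preds = 1. / (1. + np.exp(-preds))
        grad = preds - labels
        hess = preds * (1. - preds)
        return grad, hess

    # Custom metric (feval): returns (name, value, is_higher_better)
    def binary_error(preds, train_data):
        labels = train_data.get_label()
        return 'error', np.mean(labels != (preds > 0.5)), False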
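For reference, the two values returned by loglikelihood are the first and second derivatives of the binary log loss with respect to the raw score, which is why preds is passed through the sigmoid inside the function. The symbols below ($z$ for the raw score, $y$ for the label, $p$ for the probability) are notation introduced here for illustration, not taken from the example:

```latex
\begin{aligned}
p &= \sigma(z) = \frac{1}{1 + e^{-z}}, \\
\ell(z) &= -\bigl[\, y \log p + (1 - y) \log (1 - p) \,\bigr], \\
\frac{\partial \ell}{\partial z} &= p - y, \\
\frac{\partial^2 \ell}{\partial z^2} &= p\,(1 - p).
\end{aligned}
```

These match `grad = preds - labels` and `hess = preds * (1. - preds)` once preds has been replaced by $\sigma(z)$.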

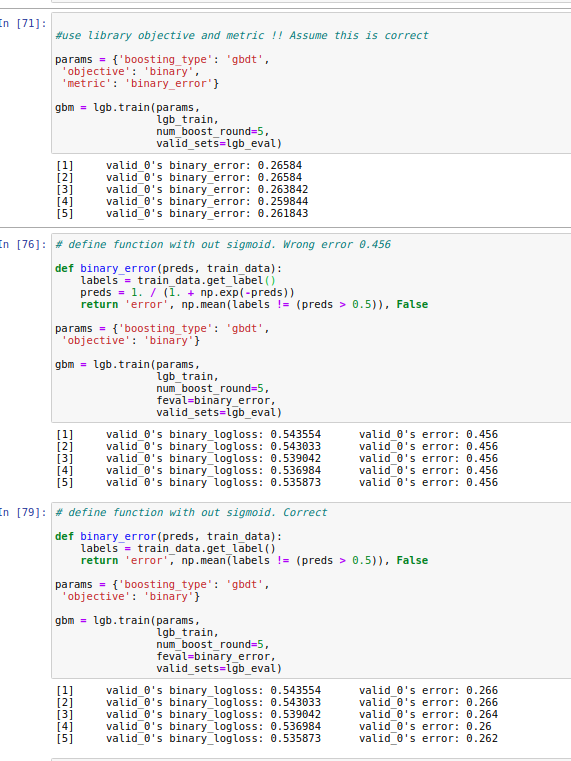

This code is from the advanced example.

Are the preds passed to feval different from the preds passed to fobj?

I see that the code in binary_error doesn't apply the sigmoid before thresholding at 0.5.

All 7 comments

In the last experiment, how would the LightGBM library even know to apply the logit link function (the inverse of the sigmoid function), since no objective was passed? Does it profile the label and, if it sees binary labels, assume a logit link function?

What happens if you take the sigmoid out of both the fobj and feval?

I think it is just a demo for using fobj and feval. But, indeed, the feval is not correct.

A fix is welcome.

@guolinke, I would be happy to update the example, but I don't understand what is happening. How would the model know to boost the linear predictor (i.e. the prediction before applying the sigmoid transform) when a custom loss function is defined? Nowhere in the last example shown above was the model told that the preds being sent into the loglikelihood custom objective function were supposed to be on the linear predictor scale.

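I guess the reason is in the following lines:

https://github.com/microsoft/LightGBM/blob/0a4a7a86f5a1d3146c36c7d8c082154a193d4893/python-package/lightgbm/basic.py#L2519-L2524

https://github.com/microsoft/LightGBM/blob/0a4a7a86f5a1d3146c36c7d8c082154a193d4893/python-package/lightgbm/basic.py#L2547-L2551

https://github.com/microsoft/LightGBM/blob/516bd37a28a0dfa7c2bc4a24587dbbcb8a697eb2/src/boosting/gbdt.cpp#L637-L657

More precisely, here: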

https://github.com/microsoft/LightGBM/blob/516bd37a28a0dfa7c2bc4a24587dbbcb8a697eb2/src/boosting/gbdt.cpp#L645

https://github.com/microsoft/LightGBM/blob/0a4a7a86f5a1d3146c36c7d8c082154a193d4893/src/objective/binary_objective.hpp#L167-L169

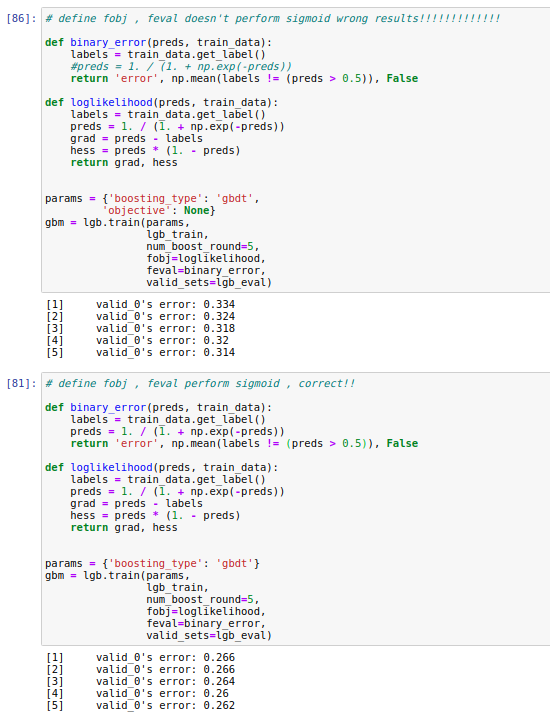

When you use a built-in objective function, preds in a custom metric function (feval) have already been transformed, so you don't need to transform them again. When you use a custom objective function, LightGBM knows nothing about the needed transformation, so you get raw output in preds and have to apply the sigmoid before calculating the metric value inside feval.

@guolinke Please correct me if I'm wrong.

@StrikerRUS thanks! you are very right!
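Following the explanation above, a metric meant to be paired with a custom objective such as loglikelihood could apply the sigmoid itself. The block below is a minimal sketch under that assumption, not the fix that was merged into the example: `binary_error_raw` and `sigmoid` are illustrative names, and `FakeDataset` is a hypothetical stand-in for a LightGBM dataset that only provides `get_label()`, so the snippet runs without LightGBM installed.

```python
import numpy as np


def sigmoid(raw_scores):
    """Map raw scores (log-odds) to probabilities."""
    return 1.0 / (1.0 + np.exp(-raw_scores))


def binary_error_raw(preds, train_data):
    """Metric for use with a custom objective, where preds are raw scores."""
    labels = train_data.get_label()
    probs = sigmoid(preds)  # transform raw scores before thresholding
    return 'error', np.mean(labels != (probs > 0.5)), False


class FakeDataset:
    """Hypothetical stand-in for a LightGBM dataset (assumption for this demo)."""

    def __init__(self, labels):
        self._labels = np.asarray(labels)

    def get_label(self):
        return self._labels


raw = np.array([-2.0, 0.2, 0.3, 3.0])  # raw scores, not probabilities
data = FakeDataset([0, 1, 1, 1])

name, value, is_higher_better = binary_error_raw(raw, data)

# Thresholding the raw scores at 0.5 directly, as in the original binary_error
naive_value = np.mean(data.get_label() != (raw > 0.5))

print(name, value, naive_value)
```

With these made-up scores, the two middle examples have raw scores between 0 and 0.5, so they count as errors when the raw score is thresholded at 0.5 but not once the sigmoid is applied. Thresholding the probability at 0.5 is equivalent to thresholding the raw score at 0.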


Most helpful comment

I guess the reason is in the following lines:

https://github.com/microsoft/LightGBM/blob/0a4a7a86f5a1d3146c36c7d8c082154a193d4893/python-package/lightgbm/basic.py#L2519-L2524

https://github.com/microsoft/LightGBM/blob/0a4a7a86f5a1d3146c36c7d8c082154a193d4893/python-package/lightgbm/basic.py#L2547-L2551

https://github.com/microsoft/LightGBM/blob/516bd37a28a0dfa7c2bc4a24587dbbcb8a697eb2/src/boosting/gbdt.cpp#L637-L657

More precisely, here:

https://github.com/microsoft/LightGBM/blob/516bd37a28a0dfa7c2bc4a24587dbbcb8a697eb2/src/boosting/gbdt.cpp#L645

https://github.com/microsoft/LightGBM/blob/0a4a7a86f5a1d3146c36c7d8c082154a193d4893/src/objective/binary_objective.hpp#L167-L169

When you use a built-in objective function, preds in a custom metric function (feval) have already been transformed, so you don't need to transform them again. When you use a custom objective function, LightGBM knows nothing about the needed transformation, so you get raw output in preds and have to apply the sigmoid before calculating the metric value inside feval.

@guolinke Please correct me if I'm wrong.