Leela-zero: Tune first play urgency?

I tried a lot of modifications to first play urgency (fpu) after learning that AlphaGo Zero probably used the equivalent of 0.5, and after reading #238 and the relevant part of the AlphaGo Zero paper. Many of my modifications were bad or made no improvement. That was expected: I am not an expert in game AI or neural networks, and I don't have a very good computer, so the sample size of my test games is low. My failed experiments suggested that a fpu that is too high hurts exploitation, so to check that hypothesis I simply lowered the fpu by 0.2 in the function UCTNode::get_eval. I got a very surprising result: 5/0 against the standard version (that was on 0.10.1). My tests were run in Sabaki with 1000 playouts, using the c83e network. The parameters used were

--gtp -w leelaz-model-128000.txt --playouts 1000 --noponder

I also switched black/white side every game.

I then tried giving 1600 playouts to the standard version; the results were 1/1. Then version 0.11 arrived, so I applied my modification to that version and ran the tests again, and got 3/0 against the normal version.

At that point I wondered whether these results were simply because the same network was used on both sides. The deeper search caused by the lowered fpu would then be more efficient because it knows the moves the other side is going to make. So I tried pitting the lowered fpu version with the c83e net against the normal version with the latest 7fde net. The results are currently 2/0 for the lowered fpu version with the worse net.

I also tried weaker networks. It seems to make sense that the lowered fpu causes less exploration, and thus that a weaker network would not be as strong with it. So maybe my change is useful only once the network is sufficiently strong.

Using the a1151640 network, one of the earliest and almost playing randomly, the results are indeed 0/2, suggesting that the change may be worse at that level of play.

Using the 4e4d09 network, a slightly stronger one made after the bug fixes, the results are however 2/0.

In total that makes a record of 13/3 for the modification. I know that is not enough to draw complete conclusions, especially with the use of 1000 playouts instead of 1600 and the mess of different networks and versions used in my tests, but I hope by posting this that someone with a stronger computer than me can run extensive tests to confirm or refute this change.

The only change to make is UCTNode.cpp in the function get_eval, change

return eval to return eval-0.2f

I'm not very familiar with GitHub workflows, so I'm not sure whether this warrants a pull request before confirming that it works.


@killerducky You might find this of interest.

Nice experiment. Another way to encourage deeper search instead of wider is to modify cfg_puct. It's currently 0.85f, maybe it should be smaller. This will change the balance for all moves, not just those that have 0 visits. Many code changes have been made since cfg_puct was tuned. I think especially tree reuse could have a large impact on the balance between deep/wide search.

Of course there aren't enough results yet. In addition you said you ran many experiments, that makes the problem of low sample size even worse because it's a form of p-hacking.

Yeah I know that it may have just been me being lucky with the matches. I'm running more games but it is slow. I ran 10 more games, using 1000 playouts for the reduced fpu and 1600 for the normal fpu, so at a handicap, and I got 8/2 for now.

I thought about cfg_puct too, but I thought fpu was more important. For example, if one side is on a bad sequence that looks worse and worse as the search explores deeper, the fpu will be higher than the evals of the explored nodes, hurting exploitation. If a move with net_eval 0.5 has all its explored children at 0.1 except one at 0.4, then instead of exploring the 0.4 move, which is the best explored move so far, the search will keep exploring unexplored nodes, which have fpu 0.5. That seems bad to me. This preference will be the same no matter the value of cfg_puct.

I'm starting to like your idea more. Suppose the parent position has value 0.5 (it's even). The top candidate moves should also have close to 0.5. But as you move down the candidate list, those moves will tend to be worse, and will have smaller and smaller evals when finally evaluated. So it might be interesting to try to model that behavior. Or just use a simple parameter like you have subtracting 0.2.

Edit: Another obvious candidate is to actually test FPU=0.5, since we now think that's what Google did. You didn't explicitly say so, did you test FPU=0.5?

Yes that was one of the things I tried. In particular I tried using get_eval of the parent position as a maximum for the fpu. The results were interesting but not spectacular enough given the sample size, especially compared to just subtracting 0.2 which I quickly realised was almost only winning.

Edit: I also tried 0.5. I didn't do that many tests (3/4? I don't remember, I should have kept my notes for that xD), but it didn't seem very different from the current version. I abandoned the idea when it got caught in a ladder by the normal version.

Edit 2: I saw this reddit post https://www.reddit.com/r/cbaduk/comments/7rqj3k/leelazero_passed_my_ladder_test_finally/ and decided to try the reduced fpu version with c83e on this position. It doesn't get caught in the ladder, while the normal version does.

> If a 0.5 net_eval move has all his explored childs at 0.1, but one at 0.4, instead of exploring the 0.4 move which is the best explored move yet, it will continue exploring unexplored nodes which have fpu 0.5, which seems bad to me. This preference will be the same no matter cfg_puct.

This is not correct. Take a look at the complete formula: https://github.com/gcp/leela-zero/blob/47ddf38327b80ec5f2303344d1d9c65e25f5a3fb/src/UCTNode.cpp#L340

cfg_puct controls the influence of the policy net prior. If this is strong, the other moves will not be explored even if their FPU is higher than the evaluated move.
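To make the interaction concrete, here is a simplified sketch of a PUCT-style child score. It follows the general shape of the linked selection code but is not copied from it; the function and parameter names are illustrative, and the default of 0.85 is the cfg_puct value mentioned earlier in the thread.

```cpp
#include <cmath>

// Simplified PUCT-style score for one child. All names are illustrative.
//   winrate       : the child's eval, or the FPU if the child has no visits
//   prior         : policy network probability for the move
//   child_visits  : visits to this child so far
//   parent_visits : total visits to the parent
inline float puct_value(float winrate, float prior, int child_visits,
                        int parent_visits, float cfg_puct = 0.85f) {
    const float numerator = std::sqrt(static_cast<float>(parent_visits));
    const float exploration =
        cfg_puct * prior * numerator / (1.0f + static_cast<float>(child_visits));
    return winrate + exploration;
}
```

In this sketch, a visited child with eval 0.4 and a strong prior of 0.6 still outranks an unvisited child with FPU 0.5 and prior 0.01, whereas with equal priors of 0.05 the unvisited child wins. That is the sense in which the prior, scaled by cfg_puct, can override a higher FPU.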

> the sample size of my test games is low

A strong word of advice: this is a problem that you must fix first, or else you are completely wasting your time.

Yes, the policy prior has an influence, but in the case where it has the same value for two moves, what I said holds true.

As for more games: I know, and I am running more. But since my computer is slow, I also think it is a better use of time if someone with a strong computer runs 100 games in one day, when the same would take me a week.

> in the case it has the same value for 2 moves then what I said hold true.

If the moves have the same policy prior, then why would the behavior you describe (explore them both) not be exactly what you'd want? The alternative (explore the move we just discovered is bad) makes no more sense, for sure.

> Many code changes have been made since cfg_puct was tuned. I think especially tree reuse could have a large impact on the balance between deep/wide search.

Fair point. Seems worth re-tuning now. (I do think tree reuse is the only change that has an effect here)

> If the moves have the same policy prior, then why would the behavior you describe (explore them both) not be exactly what you'd want? The alternative (explore the move we just discovered is bad) makes no more sense, for sure.

The point is that the move we just discovered is actually not bad. It is worse than we expected given the parent's net_eval, but all the other explored moves are worse. In fact, at that point the parent's get_eval() would be lower than the eval of the move we just discovered. And yet, because of the higher fpu (the parent's net_eval), the search will prefer to explore unexplored nodes. To illustrate the point, imagine the worst case of a flat policy: the search will actually explore ALL unexplored nodes before looking again at the best explored node. In fact, I wonder if that is what happens in failed ladders.
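The flat-policy worst case can be checked with a toy simulation. The sketch below is not Leela Zero code: it assumes a simplified PUCT-style score, a uniform prior, one already-evaluated child (index 0) with the best eval, and every other child evaluating to the same lower value once visited (so `best_eval` must exceed `other_eval`). All names are hypothetical.

```cpp
#include <cmath>
#include <vector>

// Counts how many unexplored children get visited before the best explored
// child (index 0) is selected again, under a flat policy prior.
// Assumes best_eval > other_eval, so only unexplored children can outrank child 0.
inline int unexplored_visits_before_revisit(float fpu, int num_children,
                                            float best_eval, float other_eval,
                                            float cfg_puct = 0.85f) {
    const float prior = 1.0f / static_cast<float>(num_children);  // flat policy
    std::vector<int> visits(num_children, 0);
    std::vector<float> eval(num_children, other_eval);
    visits[0] = 1;  // child 0 has already been evaluated once
    eval[0] = best_eval;
    int parent_visits = 1;
    int count = 0;
    while (true) {
        int best = 0;
        float best_value = -1.0f;
        for (int i = 0; i < num_children; ++i) {
            const float winrate = visits[i] > 0 ? eval[i] : fpu;
            const float value = winrate +
                cfg_puct * prior * std::sqrt(static_cast<float>(parent_visits)) /
                    (1.0f + static_cast<float>(visits[i]));
            if (value > best_value) {
                best_value = value;
                best = i;
            }
        }
        if (best == 0) return count;  // search came back to the best explored child
        ++visits[best];               // an unexplored child gets its first visit
        ++parent_visits;
        ++count;
    }
}
```

With 20 children, a best explored eval of 0.4 and the others at 0.1, an FPU of 0.5 makes the search visit all 19 unexplored children first, while an FPU of 0.3 sends it straight back to the 0.4 move.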

Essentially, because of that I'm quite sure there is a problem with the current fpu. My solution of -0.2 is probably not even the best one (even beyond simply optimizing the value to subtract); it's just something that mitigates the problem.

How can we know that all the unexplored nodes will be even worse without exploring them?


We don't know, but we can't know if they will be better either. There should be a balance, and we probably don't get that balance with a fpu that is higher than the best explored node.

I follow now, but it surely depends on how many nodes you have explored. If it is just 1, and that happens to be worse than the parent, that does not mean much; if it is several, then that may suggest that the parent node was too optimistic.


So as a starting point to test, an idea that comes to me is for the fpu of unknown children to be a weighted average of the parent and the children seen so far. So when we start new, it's the parent's. But the more we explore and see bad children, the more the unknown children will also move toward the average. By the time we have 1 unknown child left, it'll be the weighted average of 1 (for parent) + # of children - 1 (the unknown child), and the parent alone is having very little effect.

@roy7 I like that idea.

It looks very interesting. Perhaps someone with strong machine will help with testing?

> So as a starting point to test, an idea that comes to me is the fpu for unknown children be a weighted average of the parent and the children seen so far. So when we start new, it's the parent's. But the more we explore and see bad children, the more the unknown children will also reduce to the average. By the time we have 1 unknown child left, it'll be the weighted average of 1 (for parent) + # of children - 1 (the unknown child), and the parent alone is having very little effect.

Yeah, that is the idea I had at the very start. It didn't seem to work though, and I think it may be because it causes a problem on the opposite side: when we have a parent node at 0.5 and explored child nodes at 0.5, and then we find an excellent node at 0.9, the average value goes up, thus the fpu goes up, and because of that the excellent node is exploited less...

But to be precise, the tests I did used get_eval of the parent for the fpu of the children, because from what I understand it is already an average of the children and the parent. But it is not weighted right ? So maybe using a weighted average would actually be better. Good idea !

I did 10 more tests of the reduced fpu option, and the record thus far is 13/7. Keep in mind that I do these tests with only 1000 playouts for the reduced fpu option and 1600 for the normal version, just to go faster while taking out possible handicap of using a playout number the normal version wasn't tuned for.

I've not learned this area of the code, but I read all of the discussions and issue and pull requests. :) get_eval might average, I think what I meant was when a new node is made, unknown children are assumed to be the same as the parent, which would be the nn_eval from the parent's node being fed to the GPU? So that's what I mean when I suggested a weighted average. If the parent get_eval is already weighted then I don't want to confuse the discussion further. Although I'm guessing as nodes are explored, the unknown children's values are never changed.

Instead of looping through all of the children each time we explore a new child to update their values, some sort of "unknown child value" could be stored and updated and referenced for any unknown child. So if the get_eval of the parent is an average, it'd sum actual children and # of unknown * unknown val. And unknown_val would be adjusted accordingly every time a new child is resolved?

Simple example of my thinking. Say we open a new node. It has a nn_eval of .5. We have 4 children with unknown values. So:

Parent nn_eval .5. Child 1-4 is each set to .5 based on parent.

We explore child 1. It has a nn_eval of .99. So we update unknown children to be 1 * .99/4 + 3 * .5/4.

So now Child 1 = .99, Child 2-4 are .6225.

As opposed to leaving Child 2-4 to the original .5 which was just a guess anyway. Say we now explore child 2 and it really is a nn_eval of .5.

Without weight: C1 .99, C2-4 still .5

With weight: C1 .99, C2 .5, C3-4 = 1 * .99/4 + 1 * .5/4 + 2 * .5/4 = .6225 still.

In English, if we assume all moves are 50/50 and explore a couple and see one is great and one is 50/50, we'll assume unknown ones might be better than 50/50 since we know a better move exists. If the one move we looked at was terrible, the average will go down and .5 would be our best move. As we explore more unknown children, maybe we discover .5 is the best move. Or maybe we find other better moves to raise the average, and so unknown moves look better than .5 again.

Anyway, just some brainstorming. Hopefully someone with more C++ skills and LZ knowledge might try tweaking the unknown child fpu a bit and run ringmaster on the ideas.

Hmm, I thought about it more, and the weighted average idea may not actually work as is. If I am right, then in the situation with a parent net_eval of 0.5 and an excellent child at 0.9, you want to exploit the 0.9 first. Raising the fpu might therefore be a bad idea, since the search will read the 0.9 less deeply before going to unexplored children. It is similar thinking that led me to just dumbly lower the fpu by 0.2: I wanted to verify whether it was better to reduce the fpu in general. We still need more test matches, as gcp said, but for now it does seem that we want to lower the fpu rather than raise it.

Edit: I realized there is a problem with the -0.2. If m_init_eval is below 0.2, then the fpu is less than 0, and the unexplored nodes will stay unexplored forever, since the explored nodes will never have an eval below 0 and the puct cannot go negative either. I changed the return of get_eval in the case of visits == 0 to std::max(0.05f, m_init_eval-0.2f) and am seeing better results at 1600 playouts. Will do more test games.

@gcp When testing things an SPRT pass is good enough? So like 11-0 in favor of a change can stop there, or do more tests than just what SPRT wants?

@Eddh I'm running a test now. I'll let it run overnight, but let me just say... Get your pull request ready!

I think we can do even better, I'm going to try to code up something soon.

Is the idea to best predict the value of the node once explored? If so, then it seems reasonable to expect that those nodes that have a lower policy % will also have a lower value, i.e. should they default to a function of the parent value and the policy percentage?

Returning eval-0.2 just seems to unfairly favour the first node explored, with no basis for believing that all the other nodes are likely to evaluate much worse than the parent. This causes the search to be deeper than might otherwise be desired: good for ladders, but maybe not good overall. In any case, how wide the search should be ought to be decided elsewhere.


Might be. I have no clue. But it seems redundant since the policy influencing which move to pick among explored and unexplored both is already there and working.

Apart from that, I changed back to eval-0.2, but now I look for the worst explored child, and if the fpu is lower than the worst child's eval I set it to that eval. So it is a slight tune back toward more exploration, and it also takes care of the negative fpu problem. We'll need to sort all these solutions out before doing a pull request, I think x)

@Eddh even if you don't want to do a formal pull request yet, can you push a branch to your fork so we can see the various experiments you try? For example I get the idea of seek the worst child thing but I'd like to see the implementation.

Using the worst child makes a bit more sense. In a way this does what I suggest: those with a lower policy score will be evaluated later, so they will likely be given a lower unexplored value when the time comes to consider them, as it is more likely that a worse child will have been found in the meantime. I like this, though the -0.2 cap still seems arbitrary.

If we want to avoid one bad child setting the FPU too low, how about setting it to the first quartile? (median value of the lowest half of all child nodes)

If we check each child for a NNCache hit to use real nn_eval starting value, there's a chance we'll find a value we already really know. If it's a bad value, that gives you a bad child floor to start with before even needing to explore a random child.

Here is my fork https://github.com/Eddh/leela-zero/tree/tune_fpu_worst_child

I have got 7/1 with 1600p vs 1600p c83e against normal version for now

Thanks for your help with testing :)

@evanroberts85 Yes I think that might be a good idea. It's slightly more complicated to implement though. I was too lazy. And now I am sleep deprived from testing.

@Eddh did you check the performance of that latest idea? It looks to me like it will be very expensive.

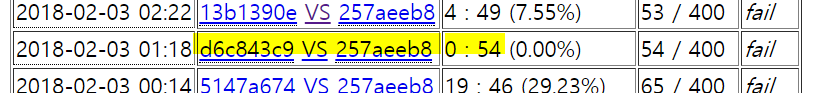

My results for Eddh's first suggestion return eval - 0.2f. This is with latest 6x128 7fde net.

lzbase-p-1600 v lzfpum0p2-p-1600 (68/1000 games)

board size: 19 komi: 7.5

| | wins | black | white | avg cpu |
|---|---|---|---|---|
| lzbase-p-1600 | 8 (11.76%) | 3 (8.82%) | 5 (14.71%) | 435.14 |
| lzfpum0p2-p-1600 | 60 (88.24%) | 29 (85.29%) | 31 (91.18%) | 429.74 |
| | | 32 (47.06%) | 36 (52.94%) | |

I'm testing a version that does a moving average of sibling node net evals. I wrote some code to analyze a few different methods and my goal was a simple algorithm that minimizes the MSE between get_eval when visits=0 (FPU) and when visits=1.

The idea behind using parent's eval as the FPU is to use a better approximation than a constant. Doing a moving average as we get more information is a refinement of that idea.

I'll post more results and some code tomorrow.

How does the FPU=0 version perform? That was arguably the choice for AGZ, and IIRC that performed quite well for the supervised 6x128 net. That could at least serve as the second baseline.

> I wrote some code to analyze a few different methods and my goal was a simple algorithm that minimizes the MSE between get_eval when visits=0 (FPU) and when visits=1.

I wondered if anyone would make this step, it makes perfect sense of course!

A moving average is likely to give too high an evaluation, as unexplored nodes are unexplored for the reason that we can expect them to be slightly worse than our first options, hence their lower policy score. I would suggest that an average of only the weaker half of all sibling nodes would give a lower MSE.

@killerducky wow, I didn't even expect that much because of the negative fpu problem. Again, thanks for running the tests.

As for performance, I admit I didn't really look at it. I don't think it has a strong impact though, because I use an already existing loop to look for the worst child. So at worst it would be 1 comparison and 1 value assignment per child, and then 1 comparison and 1 value assignment for the parent. (If you look at my code, I actually have some code for finding the max child too, but I don't use it xD)

Edit : It doesn't seem to affect performance noticeably.

The moving average idea was also something I thought of. But looking at the AlphaGo Zero paper and the code, get_eval already returns the average of the child nodes and the parent, so in that sense I already tried it. It didn't seem to work. It was a very low sample size, so it could turn out differently with more tests, but I think it isn't that simple because, as evan said, explored nodes have been explored because they were recommended by the policy, and thus the unexplored ones are likely to be worse than the explored ones. I still think this idea is a good starting point, but it is not usable directly.

The idea of roy7 to look for previous evaluations of the move is very good also, I didn't think of that.

@isty2e It seems to me that AGZ's network returned values in the range [-1, 1] and not [0, 1], so 0 for them should be the equivalent of 0.5 for us. Apart from that, I think a fpu of 0 is also the wrong balance, but on the "too deep" side. Imagine the worst case of a flat policy: the search will choose a move at random, which is likely to be bad, so let's say this move has a net_eval of 0.2. The next time the parent node is visited, the search will have to choose between this node and all the unexplored nodes, which have fpu=0. Since puct cannot go negative, the only explored node will always have a value > 0 (unless the network is absolutely sure it will lose, which happens, but very rarely in my experience), and thus the search will never visit the other unexplored nodes, no matter how bad the first pick is.

Obviously, since we have a strong policy and not a flat one, the effect will be mitigated. If your policy is godlike, maybe fpu=0 is even optimal. In general though, I think that even with a strong policy, the search should be sensible with a flat policy too, because the original paper which invented the very concept of fpu didn't seem to have a policy (correct me if I'm wrong), so it was actually flat. In addition, if we do a new start, we will not have a strong policy.

@Eddh Oh, I forgot that again, yes, the range was [-1, 1] for them. For the rest of the analysis, I agree, and I wondered whether such a "too deep" search can be beneficial in the first place.

@killerducky's tests of @Eddh's ideas certainly sound statistically significant to me, and it's surprising just how much improvement potential there is to be derived from UCT parameter tuning only! I hope this is researched thoroughly and implemented soon. This would be a really big strength gain, or speed boost if invested in lowering playouts.

I have 12/3 for now with the fpu lowered then with worst child as minimum. With c83e and 1600p. So that seems like another solution to compare to the other.

Ok I pushed a branch. I implemented the moving average (I think technically it's an exponential decay average or something like that). Preliminary tests show it is also better than base, but worse than just subtracting 0.2. Next I am going to tune cfg_puct.

See below my python script to calculate MSE for a few different methods. Procedure to do this is in the comments of the script.

https://github.com/killerducky/leela-zero/tree/aolsen_fpu2

analyze_first_v.py.txt: https://github.com/gcp/leela-zero/files/1649791/analyze_first_v.py.txt

test.log: https://github.com/gcp/leela-zero/files/1649788/test.log

test.sgf.txt: https://github.com/gcp/leela-zero/files/1649789/test.sgf.txt

So "moving average" seems to be a shift towards the value of the latest explored node, with inertia. Am I right in understanding that in your code MOVING_AVG_WEIGHT is a constant set to 0.1?

@killerducky I think your function is called exponential smoothing. I would guess you will get better results with a higher smoothing factor than 0.1, which seems very low. In a sense this does a similar thing to Eddh using the lowest child, as the latest explored nodes tend to be the weakest ones. Of course, you have applied smoothing too, so there are no big jumps, which makes some sense.

Last time we had this discussion we found out that fpu=0 worked well for the supervised network, but not for the self-play ones. The fpu=parent thing was ok for both. So maybe check with the best 5x64 as well.

This is what I am getting for now (7fde):

Parent-eval v FPU-0 (14/100 games)

board size: 19 komi: 7.5

| | wins | black | white | avg cpu |
|---|---|---|---|---|
| Parent-eval | 3 (21.43%) | 1 (14.29%) | 2 (28.57%) | 717.37 |
| FPU-0 | 11 (78.57%) | 5 (71.43%) | 6 (85.71%) | 717.03 |
| | | 6 (42.86%) | 8 (57.14%) | |

Unfortunately it might take a few days on my hardware, and the eval - 0.2 version currently seems better (though not yet statistically significant).

Yeah, that's why I made that remark, see: https://github.com/gcp/leela-zero/pull/238

The behavior was very different for the supervised network and the self-play networks. That's why it's important to test with 57481001 or c83e1b6e as well.

I thought it was related more to the quality of the policy. Anyway when it reaches 100 games or becomes statistically significant I might test this for 5x64 ones, but hopefully someone with a better graphic card can finish it faster.

> I thought it was related more to the quality of the policy.

I thought this too but I'm not so sure anymore now. I am testing it overnight.

This would have some interesting consequences. I tuned PUCT, and I used the supervised network to do so (because it was strong and we didn't have any strong self-training ones). If the supervised network is very different from the self-play ones in the optimal PUCT value, then right now the matches are being played with a massive advantage to the supervised network.

This could be a potential reason why after the bootstrap all matches are going 0:50.

(After having said that, the supervised networks we are using now had massive problems beating the 5x64, and passed at like 55-60%, so maybe it's not a very good explanation for the 0:50's)

@isty2e Interesting. So it's no surprise that lowering the current version's fpu increases the winrate. I guess there must be a sweet spot for fpu. For now it seems eval-0.2 is closer to it. Maybe killerducky's solution can beat it. There are potentially a few hundred Elo to gain, and then more because of higher self-play quality.

Edit: I have made another variation that seems to work: using get_eval of the parent instead of net_eval, so it is a moving fpu too, except that I still subtract a value from it, which is 0.13. Very arbitrary. I tried 0.2 for 2/3 games against the eval-0.2 version with 1000 playouts; they were lost, and I didn't like the way in which they were lost. So I tried 0.15, which seemed a bit better to my eyes, then 0.13, which got 4/1 against eval-0.2. I am now trying it at 1600 playouts against the normal version; so far it is 4/1 in that matchup too.

So yeah, that is not very hard science. I just went by feel to tune the value to subtract. It needs confirmation with a lot more games, if someone would be kind enough to run them.

There was a reason for subtracting a smaller value though: I think that in the game of Go most moves are "bad" moves, so get_eval should generally be slightly lower than the parent's net_eval, and there is no need to subtract a value as large as 0.2 because the starting point is already lower.

Oh, and I also added a lower and an upper bound: 0.01f for the minimum fpu value, and the maximum value will never exceed the best child, for the reason I already explained.

https://github.com/Eddh/leela-zero/tree/moving_fpu_m0.13_bounded

For the aforementioned match between the current one and FPU=0:

Parent-eval v FPU-0 (38/100 games)

board size: 19 komi: 7.5

| | wins | black | white | avg cpu |
|---|---|---|---|---|
| Parent-eval | 8 (21.05%) | 5 (26.32%) | 3 (15.79%) | 644.61 |
| FPU-0 | 30 (78.95%) | 16 (84.21%) | 14 (73.68%) | 646.79 |
| | | 21 (55.26%) | 17 (44.74%) | |

Due to an occasional kernel panic in OS X when running leelaz, the match was terminated here. Although the result is not statistically significant in terms of SPRT(-13, 13), I would like to start a benchmark with c83e next, though I personally expect nothing different. After all, in terms of the training method, all our networks are trained with supervised learning.

leelaz-5748 v leelaz-fpu0 (155/1000 games)

board size: 19 komi: 7.5

| | wins | black | white | avg cpu |
|---|---|---|---|---|
| leelaz-5748 | 55 (35.48%) | 31 (39.74%) | 24 (31.17%) | 198.14 |
| leelaz-fpu0 | 100 (64.52%) | 53 (68.83%) | 47 (60.26%) | 196.77 |
| | | 84 (54.19%) | 71 (45.81%) | |

leelaz-5748 v leelaz-fpu05 (155/1000 games)

board size: 19 komi: 7.5

| | wins | black | white | avg cpu |
|---|---|---|---|---|
| leelaz-5748 | 115 (74.19%) | 57 (73.08%) | 58 (75.32%) | 184.63 |
| leelaz-fpu05 | 40 (25.81%) | 19 (24.68%) | 21 (26.92%) | 184.96 |
| | | 76 (49.03%) | 79 (50.97%) | |

leelaz-5748 v leelaz-m2 (155/1000 games)

board size: 19 komi: 7.5

| | wins | black | white | avg cpu |
|---|---|---|---|---|
| leelaz-5748 | 33 (21.29%) | 15 (19.23%) | 18 (23.38%) | 209.17 |
| leelaz-m2 | 122 (78.71%) | 59 (76.62%) | 63 (80.77%) | 208.13 |
| | | 74 (47.74%) | 81 (52.26%) | |

leelaz-5748 is the current method, FPU=parent. FPU=0 and FPU=parent-0.2 are fairly close; I'm not sure if they are fundamentally different (how often does the score drop by 20% or more?). But both are better than FPU=parent.

My main concern is that we tested FPU=0 vs FPU=parent before and reached the opposite conclusion (https://github.com/gcp/leela-zero/pull/238#issuecomment-349557312). What makes the difference now? Did I have a bug? Is it because the networks are stronger?

PUCT tuning indicates a maximum between 0.5 and 1.5, and a current best guess of 1.05. This is basically the same as what we have, so this isn't the issue.

The results are good enough that I will make the "minus" a tunable value and do a joint FPU+PUCT tuning run.

The two-proportion z-test says that FPU=parent-0.2 is better than FPU=0 with a p-value of 0.00558. Also, doesn't the quality of the policy prior explain the difference? A better policy allows us to decrease the FPU, where the limiting case is FPU=0 for a perfect policy, and there is a rating difference of 5269 between 6f27 and 5748. Of course tree reuse might have affected this, but my guess is that it is much more related to the strength of the policy.
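The quoted p-value appears to correspond to a two-sided, pooled two-proportion z-test on the two match results above (122 wins in 155 games against 100 wins in 155 games). The sketch below is a generic implementation under that assumption; the function name is hypothetical.

```cpp
#include <cmath>

// Two-sided p-value of a pooled two-proportion z-test.
// Treats the two matches as independent samples of win/loss outcomes.
inline double two_proportion_p_value(int wins1, int games1, int wins2, int games2) {
    const double p1 = static_cast<double>(wins1) / games1;
    const double p2 = static_cast<double>(wins2) / games2;
    const double pooled = static_cast<double>(wins1 + wins2) / (games1 + games2);
    const double se =
        std::sqrt(pooled * (1.0 - pooled) * (1.0 / games1 + 1.0 / games2));
    const double z = (p1 - p2) / se;
    // P(|Z| >= |z|) for a standard normal Z.
    return std::erfc(std::fabs(z) / std::sqrt(2.0));
}
```

Calling `two_proportion_p_value(122, 155, 100, 155)` should give a value close to the 0.00558 quoted above. Note that both versions were measured against the same baseline rather than against each other, so this comparison is indirect.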

By the way, how many methods do we have for the FPU?

- Constant (0, 0.5, etc.)

- Parent

- Parent-constant(>0)

- Parent*constant(<1)

- MSE-based one

If someone has a beefy machine, would it be worthwhile to test all of these?

If this now becomes a tuning with many variable parameters, would it now make sense to implement some way to run distributed tuning tests? For example by implementing different tuning settings and making them callable with an option to leelaz?

Something similar to this http://tests.stockfishchess.org/tests

A Fishtest analogue has already been rejected because of the problems associated with auto-compiling different binaries on clients. But what if the tests could be run with the same binary, just with different parameters?

> For example by implementing different tuning settings and making them callable with an option to leelaz?

This is already implemented.

But as I said several times before, tuning isn't a problem, it's something that only has to happen very occasionally so I can just rent a bunch of boxes on AWS if I run out of local machines.

> Also, doesn't the quality of policy prior explain the difference?

Probably, but that does mean these kinds of things might be problematic for "fresh starts".

Of course it would, but I don't think we are in a position to consider a new run from scratch (especially when #704 is done). Maybe relevant for forked projects though. If that is really a thing, FPU can be set by an external argument and letting autoGTP receive it from server would be fine, I suppose?

> After all, all our networks are trained with supervised learning in terms of the training method.

Not really. The supervised ones all had lower weighting on MSE, presumably required because the datasets are more static. That's why I wasn't sure the behavior was the same. (And it clearly isn't if you look at what's happening with the bootstrap)

> FPU can be set by an external argument

Not so much FPU itself, but the algorithm, I guess yes.

Ugh, I did not care much about the bootstrapping, but was it trained from a single dataset, with all post-0.9 games? Anyway I will run the FPU=0 test for 5x64 and compare.

> Ugh, I did not care much about the bootstrapping, but was it trained from a single dataset, with all post-0.9 games?

See #591.

> Anyway I will run the FPU=0 test for 5x64 and compare.

You mean with a weak 5x64? I already ran it with the best one, see above.

> I already ran it with the best one, see above.

Oh, I am halting it then. Unfortunately 30-8 is not enough to run some z-tests or something (30-8 is better than 100-55 by a p-value of 0.09).

https://github.com/Eddh/leela-zero/tree/moving_fpu_m0.13_bounded

if (worstChildVal = 1000.0f || bestChildVal == -1000.0f) {

I'm pretty sure this is buggy. (Repeat rant about doing proper testing with large enough sample size here)

As far as fresh starts go, I think the FPU = 0 (Q(a) initialized at 0) described in the AGZ paper would work, it wouldn't be the optimal value, but it wouldn't prevent the network from improving, as opposed to the FPU = 1.2 that was initially in LZ, and which made the winrate more and more lopsided.

> My main concern is that we tested FPU=0 vs FPU=parent before and reached the opposite conclusion (#238 (comment)). What makes the difference now? Did I have a bug? Is it because the networks are stronger?

Wasn't there a bug with white side at that point ?

> if (worstChildVal = 1000.0f || bestChildVal == -1000.0f) {
>
> I'm pretty sure this is buggy. (Repeat rant about doing proper testing with large enough sample size here)

Hmm could be. I did it very fast just to see the result. It seemed to work anyway, but I completely agree that it is ugly, I will change it this evening. Also I realized I made a mistake when pushing this branch, it seems to be with a constant of -0.17 and not -0.13. Sorry ><

As for the sample size: I've been considering doing a lot of games, but with around 500 playouts to have reasonable speed. But would it be valid enough to extrapolate to 1600?

> As far as fresh starts go, I think the FPU = 0 (Q(a) initialized at 0) described in the AGZ paper would work

Do you mean FPU=0 or FPU=0.5 (in Leela terms)?

> which made the winrate more and more lopsided.

That was a bug with virtual loss, not FPU.

> Do you mean FPU=0 or FPU=0.5 (in Leela terms)?

Huuuuh, Leela's FPU isn't equal to the Q(a) initialization? What I meant was initializing the Q(a) at 0 as described in the paper, if that means a value other than 0 for Leelaz' FPU, then I've missed something...

> That was a bug with virtual loss, not FPU.

Ah right, but didn't the FPU at 1.2 also cause extremely bad exploration which was flattening the distribution (and thus prevented the policy head from learning)?

> Huuuuh, Leela's FPU isn't equal to the Q(a) initialization? What I meant was initializing the Q(a) at 0 as described in the paper, if that means a value other than 0 for Leelaz' FPU, then I've missed something...

Leela scores internally from 0 to 1. AGZ scores from -1 to 1. When the FPU discussion was had originally, the latter was missed and people interpreted the paper as saying FPU=0. This did not work so well as FPU=parent. Much later in another thread someone pointed out that FPU=0 in the paper is FPU=0.5 in Leela, which coincidentally is closer to FPU=parent for most nodes.

But as you can see above, FPU=0.5 (or FPU=parent) isn't as good as FPU=0 for strong networks.
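To make the two score scales concrete, here is a tiny sketch of the conversion, assuming a plain linear mapping between AGZ's [-1, 1] range and Leela's [0, 1] range. The function names are hypothetical and are not taken from the Leela Zero source.

```python
def agz_to_leela(q):
    """Map an AGZ-style value in [-1, 1] to a Leela-style winrate in [0, 1]."""
    return (q + 1.0) / 2.0

def leela_to_agz(winrate):
    """Map a Leela-style winrate in [0, 1] back to an AGZ-style value in [-1, 1]."""
    return 2.0 * winrate - 1.0

# Q(a) initialized at 0 in the paper corresponds to FPU=0.5 in Leela terms,
# while FPU=0 in Leela terms corresponds to the worst possible AGZ value.
print(agz_to_leela(0.0), leela_to_agz(0.0))
```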

Interesting, I did miss that part of the discussion. Does that mean that in Leelaz, Q(s,a) should be multiplied by a factor of 2 to keep the same "importance" relative to U(s,a), or was that already tuned by reducing cfg_puct (I'm assuming the latter through the tuning process)?

Anyway I meant FPU = 0.5 in Leelaz terms for fresh starts, yes. But it's good to know it can be retuned along the way to maximize performance (I guess if someone has as much computing power as Google, then retuning it after each network promotion would be ideal).

> was that already tuned by reducing cfg_puct (I'm assuming the latter through the tuning process)?

AGZ's PUCT isn't specified, so indeed this would have been compensated by the tuner.
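A small sketch of why the tuner can absorb the scale difference: since an AGZ-style value is 2 * winrate - 1, the same child wins the selection when the exploration constant is halved on the [0, 1] scale. The numbers below (winrates, priors, visit counts and the constant 1.7) are made up for illustration, and the selection rule is the simplified formula quoted in this thread, not the actual Leela Zero code.

```python
import math

def select_child(values, priors, visits, c_puct):
    """Return the index of the child with the highest value + exploration score."""
    parent_visits = sum(visits)
    scores = [
        q + c_puct * p * math.sqrt(parent_visits) / (1 + n)
        for q, p, n in zip(values, priors, visits)
    ]
    return max(range(len(scores)), key=scores.__getitem__)

leela_winrates = [0.55, 0.40, 0.62]                   # hypothetical values on the [0, 1] scale
agz_values = [2.0 * w - 1.0 for w in leela_winrates]  # same values on the [-1, 1] scale
priors = [0.5, 0.3, 0.2]
visits = [10, 3, 1]

c_agz = 1.7  # hypothetical constant for the [-1, 1] scale
choice_agz = select_child(agz_values, priors, visits, c_agz)
choice_leela = select_child(leela_winrates, priors, visits, c_agz / 2.0)
print(choice_agz, choice_leela)
```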

> leelaz-5748 is the current method, FPU=parent. FPU=0 and FPU=parent-0.2 are fairly close, not sure if they are fundamentally different (how often does the score drop by 20% or more?)

The score should drop by 20% or more quite often in the search tree. In capturing races, ladders, suicide moves...

Yeah but most moves are not that.

These moves decide the fate of the game though.

> Oh and I also added a lower and upper bound. 0.01f for the minimum fpu value

I'm not sure the lower bound, i.e. cap to 0.01f is necessary FWIW. The eval gets pushed up by the UCT part of the formula anyway, so negative values will "just work".

Yeah I'm not sure either. With a strong policy, fpu can be negative and the policy can compensate. But what about the case where 2 moves have identical policy, but one is explored and the other isn't? The unexplored one will never be visited, right?

> The unexplored one will never be visited right ?

It will get visited at some point. The UCT formula will force exploration if the other move has had a ton of visits, and that even works if it has a negative value.

this : cfg_puct * psa * (numerator / denom) can have a negative value ?

@Eddh The full formula is: value = winrate + cfg_puct * psa * sqrt(parentvisits) / (1+childvisits)

The part your code modifies is winrate, and even if it is negative, the other term will overwhelm it as parentvisits get large.
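A minimal sketch of that argument, using the formula quoted above. It assumes a simplified node with two children of equal psa where every parent visit so far went to the first child, whose winrate stays fixed; cfg_puct=0.85 is the value mentioned elsewhere in this thread, and the helper names are made up.

```python
import math

def puct_value(winrate, psa, parent_visits, child_visits, cfg_puct=0.85):
    """value = winrate + cfg_puct * psa * sqrt(parentvisits) / (1 + childvisits)"""
    return winrate + cfg_puct * psa * math.sqrt(parent_visits) / (1 + child_visits)

def visits_until_explored(fpu, psa, sibling_winrate, cfg_puct=0.85, limit=100000):
    """Visits the sibling absorbs before the unvisited child scores higher (None if never)."""
    for n in range(1, limit):
        visited = puct_value(sibling_winrate, psa, n, n, cfg_puct)
        unvisited = puct_value(fpu, psa, n, 0, cfg_puct)
        if unvisited > visited:
            return n
    return None

# Even a negative first-play value is eventually overtaken by the growing exploration term;
# it just takes more visits than with FPU = parent - 0.2.
negative_fpu = visits_until_explored(fpu=-0.1, psa=0.3, sibling_winrate=0.5)
reduced_fpu = visits_until_explored(fpu=0.3, psa=0.3, sibling_winrate=0.5)
print(negative_fpu, reduced_fpu)
```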

OK I see I misunderstood that. So yeah lower bound probably not necessary.

Edit : I think from now on I will do a lot more games but with 600 playouts. It seems from this thread https://github.com/gcp/leela-zero/issues/667 that there is some correlation between this range of playouts and 1600 playouts when it comes to networks. I'm hoping there is also a correlation when it comes to fpu.

Edit2 : I have to say my branch moving_fpu_m0.15 is showing promise at 12/8 against eval-0.2f at 600 playouts for now. I took out the dumb shenanigans with lower bound, and maximum to the best child and just use parent's get_eval(color)-0.15f so if you want to test at 1600 playouts a moving average like fpu you can try that. https://github.com/Eddh/leela-zero/tree/moving_fpu_m0.15

I am at 17/11 for moving_fpu_m0.15 at 600 playouts against net_eval-0.2f. Seems more and more promising to me. For fun I tried 5 matches at 600 playouts against normal Leelazero 1600 playouts, result 3/2.

Wow, the 600 playouts one beat the 1600 playouts 3 times out of 5? That's some serious potential ELO gain there even though not statistically significant.

If you can make that result statistically significant, that would be a good reason to lower self-play playouts to something like 800-1000... this would compensate the slowdown we're going to experience with 10 blocks, and provide very fast games with 6 blocks.

I played 4 x 200 games with the variants (FPU=parent-0.2, parent-0.1, your branch, and FPU=max(0, parent-0.2)) and aside from parent-0.1 being weaker than parent-0.2 they were all very close, so no statistically significant decisions. Based on this I will just take the simplest one.

I'm doing a combined tuning run now for PUCT factor + FPU reduction constant.

The earlier PUCT tuning run ended with an optimum between 0.8 and 0.9. We have 0.85 now, so that wasn't a problem.

Tuner is trending towards PUCT=0.8 and FPU-reduction=0.22, so not very far from what we already have.

Are you doing the tuning on a single net, or are you using multiple nets for reference? How confident are you that optimal tuning values for one network are the same as for another?

Nice. I've been very lucky to hit 0.2 on the first try.

Apart from that, I wonder if it is possible to approximate how different fpu methods would perform for a new run from scratch by testing performance on a flat/random policy, using only the network evaluation for guiding the search.

> Are you doing the tuning on a single net, or are you using multiple nets for reference? How confident are you that optimal tuning values for one network are the same as for another?

I'm using 5748 (best 5x64). The "optimum" in the strict sense may differ between networks but I don't even see any reason to care whatsoever! We need a "good enough" value. It's not like I'm going to let the tuner run till infinity either.

It's more likely that the strength of the policy network influences the optimum value. We observed earlier that FPU=0 ain't so good for near random networks.

> Apart from that, I wonder if it is possible to approximate how different fpu methods would perform for a new run from scratch by testing performance on a flat/random policy, using only the network evaluation for guiding the search.

You can take one of the very early networks (say a11516) and compare there. (Just take it early enough that it has no white bias yet - we had a bug with that early on. Winrate should be close to 50% in the beginning).

For Odin I have a similar FPU implementation as in current Leela, discovered independently (although I probably have the same in Valkyria, which is about 10 years old, so I forgot). I started out with FPU=0.5, then I used the parent value (but forgot to adjust for the color change), and when I fixed the bug I got a really huge boost in playing strength. And this boost is also there for pure MCTS without neural network priors, so I would agree with gcp that this implementation detail is not dependent on the network. It is simply a matter of search efficiency.

A rationalization for why this is better: The puct formula assumes that each move returns a value from some sort of distribution (normal? I don't know). So each additional search of the same move only gets a more accurate measure of that move's average value. But in a Go game each additional search goes deeper and can potentially provide much more information.

Suppose you have sixteen moves with the same psa, and you have sixteen playouts left. Current code would search each of those moves once (ignoring a large positive change in winrate found in one). But it might be a better strategy to randomly pick one of those sixteen moves, search it twice. Then pick another move and search it twice. Etc until you searched eight moves twice, and ignored the other eight.

So the parent-0.2 serves as a sort of progressive widening. Until a move with zero playouts overcomes the -0.2 penalty you don't search it. But once it is deemed time to search it once, the penalty is removed, and the search focuses on only that move for awhile. As the penalty is removed from the top candidates the search converges to the classic uct formula. But the parent-0.2 effect is still going on deeper in the tree.

Also once the penalty is removed and it starts to search, suppose the move turns out to be bad after all and gets lower winrates. The search will start to avoid that move again. This can explain why my idea of moving average is not needed.

tldr, over simplified: For every level in the tree, search each candidate N moves deep before moving to another candidate. But for candidates with N moves searched, spread the searches evenly.
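Here is a toy simulation of the sixteen-move thought experiment. It is a sketch under strong assumptions: one node with sixteen children of equal psa, every child always evaluates to the parent's winrate of 0.5, parentvisits is taken as one plus the child visits so far, ties go to the first child, and cfg_puct=0.85. None of this is the actual Leela Zero search code, and the exact split of visits will differ from the idealized "eight moves twice" description.

```python
import math

def distinct_children_visited(fpu, num_children=16, playouts=16,
                              parent_eval=0.5, cfg_puct=0.85):
    """Run `playouts` selections at one node and count how many children got any visit."""
    psa = 1.0 / num_children
    visits = [0] * num_children
    for _ in range(playouts):
        parent_visits = 1 + sum(visits)
        best, best_score = 0, -math.inf
        for i, n in enumerate(visits):
            # Unvisited children use the first-play value, visited ones the (fixed) eval.
            winrate = parent_eval if n > 0 else fpu
            score = winrate + cfg_puct * psa * math.sqrt(parent_visits) / (1 + n)
            if score > best_score:
                best, best_score = i, score
        visits[best] += 1
    return sum(1 for n in visits if n > 0)

wide = distinct_children_visited(fpu=0.5)          # FPU = parent
narrow = distinct_children_visited(fpu=0.5 - 0.2)  # FPU = parent - 0.2
print(wide, narrow)
```

With FPU=parent every playout goes to a fresh child, while the reduction keeps the search on already-visited moves until the exploration term has made up the 0.2 penalty.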

@odinAuthor Do you also use a fpu reduction constant ?

@killerducky I agree, this effect is probably a big part of the reason it works so well. But the idea of moving average, even tho not needed, is at least competitive with the fpu reduction constant from the testing gcp did with my moving_fpu branch. I think more effort on that front can still yield even better results. But for now I agree with keeping things simple since there is no reason to go for something else.

@Eddh No, not yet, but I will most certainly consider testing it. The simplest reason for it is probably that most moves in Go are very bad and easy to predict, and postponing the search of those moves a little bit longer has a huge impact even if the heuristic for doing it is very simple. For Odin it might be even more important because the priors from a network evaluation are added after the node has been visited perhaps 50-100 times or so.

@odinAuthor That will be very interesting.

@gcp @killerducky I have tried a few games with a115, but I don't think I have the right setup to test it with more games. But from the few games I've tried, there seems to be a huge and obvious problem with eval-0.2 when the policy is almost flat, as with a115. In that case, even though the policy is flat, all playouts go into very few moves. I realize now that this is logical from the formula for puct: when psa is <1% for every move, parentvisits needs to get huge to compensate for the fpu reduction. This seems very bad to me, since it means a flatter policy causes a deeper search, which is contradictory. It also has implications for strong networks, which can sometimes hesitate between a few moves and output a lot of < 10% options. Logically this also causes a deeper search to compensate for the fpu reduction, which contradicts the goals of the policy.

To correct this, I'm wondering if it would be possible to use something like the variance of the policy distribution to adjust the fpu reduction for each move. The goal is that a flatter policy, with lower variance, implies a lower fpu reduction value. In a way, the more confident the policy is, the bigger the fpu reduction would be, and vice versa. Any thoughts?

@Eddh For you or others interested, I was looking over the UCT formula on Wikipedia and in the Improvements section it mentions

> One such method assigns nonzero priors to the number of won and played simulations when creating each child node, leading to artificially raised or lowered average win rates that cause the node to be chosen more or less frequently, respectively, in the selection step.[18] A related method, called progressive bias, consists in adding to the UCB1 formula a $\frac{b_i}{n_i}$ element, where $b_i$ is a heuristic score of the i-th move.[5]

The linked references are Combining Online and Offline Knowledge in UCT (2007) for using non-zero priors, and Progressive Strategies for Monte-Carlo Tree Search (2008) for progressive bias.

What we currently do with FPU=parent or parent-0.2 basically sounds like the non-zero prior idea, whereas your comment on using variance or something else to make the priors more dynamic is similar to the progressive bias idea. Perhaps those references (or other newer papers that reference them) can lead to ideas for Leela Zero. The latter paper also has an idea for "progressive unpruning" where they reduce the branching factor up front, then increase it as time allows.

It seems a bit different; those seem to be for using heuristic knowledge on top of Monte-Carlo evaluations. I've been reading a bit of Modifications of UCT and sequence-like simulations for Monte-Carlo Go and Exploration exploitation in Go as well to better understand this. It seems there are many different ways to do this. I don't even think we are limited to using the winrate estimation directly. I made another buggy branch a few days ago which is interesting, but I'm not sure if it is better or worse than eval-0.2 yet. It tweaks the winrate estimation so that the child nodes' action value is mapped to a [0;x] range, and then we can choose fpu to be a constant within that range. Its playstyle is .... different http://eidogo.com/#1F0JL95t7 (I called it normalwinrate) but it depends on the parameters. It would be completely different from Alphago Zero at that point though.

I found a paper on using MCTS to play Hearthstone. In it they mentioned using non-zero priors as "search seeding". I'd not heard of it by that term before. Googling for "MCTS search seeding" led me to A Survey of Monte Carlo Tree Search Methods (2012) which is a bit more recent and talks about both progressive bias and search seeding. Although the main reference in the search seeding section goes back to the 2007 paper above.

From the second paper, "In plain UCT, every node is initialised with zero win and visits. Seeding or “warming up” the search tree involves initialising the statistics at each node according to some heuristic knowledge." So that involves human knowledge or reusing moves seen elsewhere in the tree.

Rather than

value = winrate + cfg_puct * psa * sqrt(parentvisits) / (1+childvisits)

Would something like the following make more sense?

value = (winrate + cfg_puct * psa) * sqrt(parentvisits) / (1+childvisits)

or:

value = (winrate + psa) * cfg_puct * sqrt(parentvisits) / (1+childvisits)

Hmm I'll try the second one. In the third one you could just take out cfg_puct and it would make no difference.

There needs to be some exploration parameter, so this is better:

value = winrate + psa + cfg_puct * sqrt(parentvisits) / (1+childvisits)

Second one seems bad. Only one game, but looking at the spread of the search, it sometimes only searches one move, sometimes all moves. It lost quite fast.

Edit : might also be a parameter thing, who knows.

Yea there needs to be an exploration parameter, see my updated formula. Also cfg_puct would need to be retuned.

value = winrate + psa + cfg_puct * sqrt(parentvisits) / (1+childvisits)

This seems bad to me because as parentvisits gets large the last term will overwhelm both winrate and psa, which will hardly matter anymore. It will end up searching all moves almost evenly.

Edit : I'm not even so sure, it's tricky to imagine the behavior of these formulas xD

But as childvisits increase the third part gets smaller, so winrate and psa becomes more relevant.

So it will first search nodes with high psa+winrate, but then the unvisited nodes will have higher and higher parentvisits while their childvisits stay the same. It seems like with that rise it will force exploration of an unvisited node every 1 or 2 visits of the parent?

Since I have nothing to lose I will probably still try anyway, but tomorrow. I need to sleep now.

Well cfg_puct would need to be retuned, probably lowered quite a bit. But the basic idea of the number of children explored increasing the more the parent is explored is part of the original formula.

I also wonder, the balance between the influence of policy score and winrate currently depends on whether the AI is winning or losing. Would it not be better if they were both scored out of 1? So instead of using winrate you use winrate/best-winrate, where best-winrate is the best child of the root node?

Edit: actually instead of best-winrate, just the root winrate may make more sense

> So it will first search nodes with high psa+winrate, but then the unvisited nodes will have higher and higher parentvisits while their childvisits stay the same. It seems like with that rise it will force exploration of an unvisited node every 1 or 2 visits of the parent?

Ok thinking about it more I can see what you mean, and why the original formula is what it is. Nodes with a very low or 0 policy score should not be selected, so the score needs to be a multiplier, not a constant.
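A quick sketch of that difference, comparing the original formula with the additive variant proposed above for an unvisited move with psa=0. It assumes a simplified two-child node where the other child has psa=1, keeps a winrate of 0.5 and has received every parent visit so far; the 0.3 first-play value and cfg_puct=0.85 are illustrative choices.

```python
import math

def original_value(winrate, psa, parent_visits, child_visits, cfg_puct=0.85):
    # psa multiplies the exploration term.
    return winrate + cfg_puct * psa * math.sqrt(parent_visits) / (1 + child_visits)

def additive_value(winrate, psa, parent_visits, child_visits, cfg_puct=0.85):
    # psa is only an additive constant; exploration no longer depends on it.
    return winrate + psa + cfg_puct * math.sqrt(parent_visits) / (1 + child_visits)

def first_visit_of_zero_prior_move(value_fn, max_visits=10000):
    """Parent visits needed before a psa=0 move outscores the psa=1 move (None if never)."""
    for n in range(1, max_visits):
        good_move = value_fn(0.5, 1.0, n, n)
        zero_prior_move = value_fn(0.3, 0.0, n, 0)
        if zero_prior_move > good_move:
            return n
    return None

never = first_visit_of_zero_prior_move(original_value)
eventually = first_visit_of_zero_prior_move(additive_value)
print(never, eventually)
```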

How about:

value = winrate + cfg_puct * psa/best-sibling-policy-score * sqrt(parentvisits) / (1+childvisits)

That way, a flatter distribution would behave similar to a strongly biased one as 1%/2% is the same as 30%/60% etc.

With this, if you have moves with psa 0.5 ; 0.10 ; 0.10 etc, once divided by the best sibling they will turn into multipliers 1 ; 0.2 ; 0.2 etc. It doesn't seem right either, because once the 0.5 has been explored and the first 0.10 has caught up with the fpu reduction, the second 0.10 move will have to do just as much catching up as the first one in order to be selected, even though it is being compared to a much lower policy value. With the thought experiment @killerducky did, it seems OK to have the second one wait for a few exploitations of the first one, but it shouldn't be too much, and here it seems too much because the difficulty of catching up has been raised just because the 0.5 policy move is raising the bar. But I think I'll test it this evening, if I have time.

The solution I'm thinking of is a bit more complicated, using the standard deviation of the unvisited nodes. That way the balance isn't messed up by a strong policy move. When there is one unvisited node at 0.4 and the others at 0.01 or lower, the standard deviation will be high, and because of that the fpu reduction will be high too (I'm thinking it should be a maximum of around 0.4), but the 0.4 policy move should be able to catch up after a few parentvisits. Then there are only 0.01 or lower policy moves left, meaning a very low standard deviation, which should drag down the fpu reduction, and we're back at the normal formula, though we might still want a minimum for the fpu reduction, seeing the results it has had.
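A rough sketch of what such a rule could look like. Everything here is a guess for illustration: the function name, the linear scaling by 8, and the 0.05 floor are hypothetical; only the idea of using the standard deviation of unvisited priors and a cap of around 0.4 comes from the comment above.

```python
import statistics

def dynamic_fpu_reduction(unvisited_priors, scale=8.0,
                          min_reduction=0.05, max_reduction=0.4):
    """Scale the FPU reduction with the spread of the unvisited children's priors."""
    if not unvisited_priors:
        return min_reduction
    spread = statistics.pstdev(unvisited_priors)
    # Clamp so a very peaked policy cannot push the reduction past the cap,
    # and a flat policy still keeps a small reduction.
    return max(min_reduction, min(max_reduction, scale * spread))

# One strong unvisited candidate among many weak ones: large spread, large reduction.
before = dynamic_fpu_reduction([0.4] + [0.01] * 60)
# After the 0.4 move has been visited only flat priors remain: reduction falls to the floor.
after = dynamic_fpu_reduction([0.01] * 60)
print(before, after)
```

Whether a linear scale, the variance, or something like entropy works best would have to be settled by test games.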

> I also wonder, the balance between the influence of policy score and winrate currently depends on whether the AI is winning or losing. Would it not be better if they were both scored out of 1? So instead of using winrate you use winrate/best-winrate, where best-winrate is the best child of the root node?
>
> Edit: actually instead of best-winrate, just the root winrate may make more sense

This is actually kind of what I do in my normalwinrate branch; you might want to try it, it is fun. But at that point it becomes so different from AlphagoZero that we are deviating from the goals of the project. (Also my normalwinrate branch is buggy, I haven't pushed a correction for a division by 0 when NN eval is absolute 0 xD)

> It doesn't seem right either because once the 0.5 has been explored and the first 0.10 has caught up with the fpu reduction, the second 0.10 move will have to do just as much catching up as the first one in order to be selected, even though he is being compared to a much lower policy value. With the thought experiment

I do not quite understand what you mean here. If both policy scores are 0.1, then as soon as the first node with a 0.1 policy score gets an extra child node its overall value would be less, meaning that the other 0.1 node would be explored, so they should end up with more or less an equal number of child_visits if the win rates also stay equal.

The denominator is equal for all siblings, so the behaviour is the same as the original formula.

If you do test it, I would suggest to set cfg_puct to a third of its current value, on the basis that best-child policy score probably averages around 30% currently and acts as a divisor.

Hmm maybe you're right on that part. I will try when I can.

I looked at the old code in Valkyria and at the more recent code in Odin. It turned out that the Valkyria code is so riddled with weird experiments and features that it is impossible to say what it is doing... In Odin I saw that I did not use the parent node for FPU; instead it uses the win rate of the most visited sibling so far. Both measures are correlated, but the dynamic behavior of my current implementation is probably more unstable/complicated. The nice thing is I have a command line parameter for it already, so maybe I could easily do some quick tests over the weekend. I would like to compare what I have with A) using parent winrate, B) parent winrate - constant, and C) current system - constant.

killerducky tried the most recent sibling but with exponential smoothing. Lowest sibling though seems to make more sense to me, which is what Eddh proposed I believe.

Lowest sibling feels dangerous for Odin at least. In Odin the following could happen: the hardcoded prior (basic local patterns) dominates search and the highest prior is searched 10 times in a row. Sometimes all the simulations are lost for a score of 0% (Leela is different because the WR from the NN value output does not suffer from statistical random noise, just bad bias). In this case no new moves might be searched for a long time compared to using the WR of the parent.

I could see that could be a problem then, exponential smoothing should sort that though and should be used anyway for this kind of thing. It seems at best though this is only a small improvement on just parent - constant.

> killerducky tried the most recent sibling but with exponential smoothing. Lowest sibling though seems to make more sense to me, which is what Eddh proposed I believe.

What I tried was using the lowest sibling as a minimum for fpu (eval-0.2f), so it didn't cause that problem. It didn't seem to make much of a difference either. To this day I don't know if it is better or worse.

I'm doing some math on the current situation. Right now, with a flat policy at 0.01 everywhere, you need around 600 visits to compensate for the fpu reduction of 0.2f (assuming winrate stays around eval, which is likely). So that is exactly why in my test of a115, at 500 playouts, only 1 move was searched despite the flat policy.

For moves with policy of 0.1, which are more interesting and are relevant for our current strong networks, you only need 5 parentvisits. So it might actually not be too much of a problem for strong networks...

Formula I use is N = (fpu_reduction/(psa*cfg_puct))^2
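For reference, a small sketch that evaluates this formula. The cfg_puct=0.85 default is an assumption taken from the tuning comment earlier in the thread (a value of 0.8 gives somewhat larger counts), and the function name is made up.

```python
def parent_visits_to_offset_fpu(psa, fpu_reduction=0.2, cfg_puct=0.85):
    """N = (fpu_reduction / (psa * cfg_puct))^2 for an unvisited child with prior psa."""
    return (fpu_reduction / (psa * cfg_puct)) ** 2

flat_policy = parent_visits_to_offset_fpu(psa=0.01)  # several hundred parent visits
strong_move = parent_visits_to_offset_fpu(psa=0.1)   # only a handful of parent visits
print(round(flat_policy), round(strong_move, 1))
```

Because N scales with 1/psa^2, a prior ten times smaller needs a hundred times more parent visits before the move gets its first playout.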

So almost everything is better than FPU=0.5. This is my understanding now. Just having a constant FPU is bad because when the best move is winning with 0.75 or so, other moves are not explored, but there could be a good move with a low prior that simplifies the game with a high win rate. In this case we want to search wide and not deep. If we are losing, however, with a WR of 0.25, FPU=0.5 will lead us to explore all moves in sequence, but when we are losing we would like to search complicated lines deeply, looking for a tactical combination that actually works. By using the WR of the parent (or anything correlated), the exploration/exploitation tradeoff will be much more stable.
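The deep-when-winning, wide-when-losing effect of a constant FPU=0.5 can be reproduced in a toy setting. This sketch assumes a single node with sixteen equal-prior children that all evaluate to the same fixed winrate, parentvisits taken as one plus the child visits so far, ties going to the first child, and cfg_puct=0.85; it is an illustration, not the Odin or Leela Zero search.

```python
import math

def children_explored(child_winrate, fpu=0.5, num_children=16,
                      playouts=16, cfg_puct=0.85):
    """Count distinct children visited when unvisited children score a constant fpu."""
    psa = 1.0 / num_children
    visits = [0] * num_children
    for _ in range(playouts):
        parent_visits = 1 + sum(visits)
        best, best_score = 0, -math.inf
        for i, n in enumerate(visits):
            winrate = child_winrate if n > 0 else fpu
            score = winrate + cfg_puct * psa * math.sqrt(parent_visits) / (1 + n)
            if score > best_score:
                best, best_score = i, score
        visits[best] += 1
    return sum(1 for n in visits if n > 0)

deep_when_winning = children_explored(child_winrate=0.75)  # stays on the first move
wide_when_losing = children_explored(child_winrate=0.25)   # tries every move once
print(deep_when_winning, wide_when_losing)
```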

Also the statistic is different in traditional MCTS and AGZ style MCTS. The value output of the NN is close to the value returned by a deeper search, but for Odin it is a coin flip. If I remember correctly in Odin WR = (Wins+WRofMostVisited)/(Visits + 1) so that WRofmostVisited is still stabilizing the WR also after the first time it was used. I think that is good to counter random noise, but could perhaps also have a tiny effect on Leela too. Using the WR of the most visited sibling means exploration is encouraged also when a strong move has been found (very important for Odin since at the leaves no NN policy priors are available). But I am still curious to see if making the current search slightly more selective could be good.

Yeah I'm more and more certain that 0.5 is bad now. If they used something like that for Alphago Lee it would explain a lot about why it plays bonkers when it is far ahead or behind. It would not be because it actually thinks those moves are good for winrate, but because the search is messed up, though more when behind than when ahead.

@odinAuthor I think that's a fantastic observation that FPU=0.5 means we go deep when winning and wide when losing. I wonder if that factors into why LZ often loses with a large dead dragon. Watching some of those games live on OGS, her win rate will peak over 99% for a long time. Eventually crashing to resignation within a few moves once she is finally forced to see the capture moves on the dragon. The moves where the opponent will capture the dragon (and thus the win rate estimate shift drastically, so they are the opponent's best moves as far as LZ is concerned once seen) never made it into a PV until the very very end.

This also suggests in self play LZ would resign long before the dragon dies (win chance for opponent < 1%) and thus reinforce its belief that the dragon board state is a winning one, not a losing one. LZ has to somehow see dragons dying in training to learn more about eyes...

> For moves with policy of 0.1, which are more interesting and are relevant for our current strong networks, you only need 5 parentvisits. So it might actually not be too much of a problem for strong networks...

I think you mean child visits.

psa * sqrt(parentvisits) / (1+childvisits)

So if the first node is explored 5 times, we are looking for whether (0.1 * sqrt(parent)/1) - (0.1 * sqrt(parent)/6) becomes greater than the fpu reduction. But parentvisits also depends on the other siblings, so I cannot just put this as 6.

I don't know about LZ. LZ doesn't use FPU=0.5 right now, and it seems like the NN actually has problems understanding very large groups #708. But the behavior of the search will indeed be different when it is at an extreme range of winrate because all winrates are the same, so it will be guided only by the policy probably ? Do you know what kind of shape the policy has in endgame ?

@evanroberts85 I was just looking at the number of visits in the parent node required to make puct = 0.2

It is important because, in case the NN outputs a policy where the 3 best moves are 0.1 and the rest very low, the first 0.1 is going to be picked. Assuming its winrate is at net_eval and stays that way as it is exploited, puct of the second 0.1 node will need to be at least higher than 0.2 for it to be picked, because the first node will have score = net_eval + puct(first node), while the second will have score = net_eval - 0.2 + puct(second node).

I think we'll have to repeat these experiments after the new nets settle. The bug fix gcp made to randomness of positions selected for training should improve the value net. That might have a big impact on these other parameters.

@Eddh I think I was following your thought process, but if puct(node) is going to make up the 0.2 difference, then it is the number of child visits that is the dominant factor. I suppose you could compare a node that has been explored exactly once to one that has not been explored at all, and then look at the number of parent visits required to make up the difference.

@evanroberts85 first node with psa 0.1 has score = net_eval + puct(first node), no matter how much this node is visited, puct(first node) will always be positive.

second node, with psa 0.1 also, has score net_eval-0.2 +puct(second node) since it has not been visited yet.

When can score(secondnode) overcome score(firstnode) ?

net_eval + puct(firstnode) < net_eval -0.2 + puct(secondnode)

puct(firstnode) + 0.2 < puct(secondnode)

Thus, puct(secondnode) needs to be more than 0.2 for the score of the second node to overcome the score of the first one. I'm sorry I explained my thought process badly.

Edit:

This led me to solving puct(secondnode) = 0.2, where puct(node) = psa * cfg_puct * sqrt(parentvisits) / (1 + childvisits)

Since secondnode is unvisited, childvisits = 0

parentvisits is what we want to find

so we have 0.2 = psa * cfg_puct * sqrt(parentvisits)

sqrt(parentvisits) = 0.2 / (psa * cfg_puct)

parentvisits = (0.2 / (psa * cfg_puct))^2
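The derivation above can be checked numerically. This is a minimal Python sketch, assuming the exploration term has exactly the form written above; the function names and the sample values of `psa` and `cfg_puct` are illustrative and not taken from the Leela Zero source.

```python
import math


def puct_term(psa, cfg_puct, parent_visits, child_visits):
    """Exploration term: psa * cfg_puct * sqrt(parentvisits) / (1 + childvisits)."""
    return psa * cfg_puct * math.sqrt(parent_visits) / (1 + child_visits)


def parent_visits_for_unvisited(psa, cfg_puct, target=0.2):
    """Parent visits at which an unvisited child's term reaches `target`."""
    return (target / (psa * cfg_puct)) ** 2


# Illustrative values only: cfg_puct is a tunable, not a fixed constant.
for cfg_puct in (0.8, 1.0):
    n = parent_visits_for_unvisited(psa=0.1, cfg_puct=cfg_puct)
    print(f"cfg_puct={cfg_puct}: parentvisits = {n:.2f}")
```

Note that this only gives a lower bound on when the second node can be picked, since puct(firstnode) is positive and is ignored here.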

@Eddh I understood that and that is reflected in my comments. There are two factors in the value of puct(node) though, child visits and parent visits.

Currently looking through your normalwinrate branch. I am not sure using worstChildValue to create a range is a good idea. Imagine only two sibling nodes where the difference in value between them is infinitesimally small. In that case the policy output should be dominant and the value difference should be all but ignored, but by normalising over the range the value output would have a huge effect.

I think it is better to just use bestChildValue. So for the child node:

winrate=winrate/best-sibling-winrate.
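To make the objection concrete, here is a small Python sketch comparing the two normalisations on two nearly identical siblings. The range formula `(w - worst) / (best - worst)` is only an assumption about what the normalwinrate branch does, and both function names are hypothetical.

```python
def normalise_by_range(winrates):
    """Map winrates onto [0, 1] using the worst and best sibling (assumed form)."""
    worst, best = min(winrates), max(winrates)
    if best == worst:
        return [1.0 for _ in winrates]  # arbitrary choice when all siblings are equal
    return [(w - worst) / (best - worst) for w in winrates]


def normalise_by_best(winrates):
    """Divide each winrate by the best sibling winrate, as suggested above."""
    best = max(winrates)
    if best <= 0.0:
        return list(winrates)  # avoid dividing by zero; leave values untouched
    return [w / best for w in winrates]


siblings = [0.500, 0.501]  # two nearly identical evaluations
print(normalise_by_range(siblings))  # the tiny gap is stretched to the full range
print(normalise_by_best(siblings))   # the tiny gap stays tiny
```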

child visits is 0 for the second node, which is unvisited.

I know my normalwinrate branch is not ideal. One of my goals was to make the search more sensitive to slight evaluation differences and see what it would do. It is actually not bad, at least from the few games I've tried. You can try more if you want.

Indeed, so the unexplored node will evaluate psa * sqrt(parentvisits) instead of half that value if it had one visit, or a third that value if it had 2 visits etc.

I will try to make a few modifications to your branch then do some very limited tests (I have a very slow computer).

Well yeah, it will rise according to the square root. And there will be a point at which it will surpass 0.2, which is the minimum if the second node is to be picked (assuming that the winrate for the first node stays at net_eval). This point is when parentvisits is more than 5. So in the current situation, if the 2 best child nodes have the same policy and the first one is stagnant or rising in terms of winrate, the second node will not be picked until the first one has been visited 5 times (minimum). I'm not sure if this is actually good or bad. It means nodes deep in the tree, or searches at very low playout settings, will sometimes be unbalanced.

It is almost certainly bad for networks producing a flat policy though, and for hypothetical cases where a strong network would be confused and output lots of policies below 5%. I'm not sure how often that happens.
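The scenario above can also be simulated directly, this time keeping the first node's own exploration term instead of ignoring it. This is a rough Python sketch under simplifying assumptions that are mine, not Leela Zero's: only two children matter, both have the same prior, the first child's winrate stays at net_eval, and parent visits equal the first child's visits. The function name and parameter values are illustrative.

```python
import math


def visits_before_second_pick(psa, cfg_puct, fpu_reduction, max_visits=100_000):
    """Count visits to the first child before an equal-prior sibling is selected.

    Assumptions: both children have prior `psa`, the first child's winrate
    stays at net_eval, and parent visits equal the first child's visits.
    """
    for first_visits in range(1, max_visits + 1):
        explore = psa * cfg_puct * math.sqrt(first_visits)
        first_score = explore / (1 + first_visits)  # net_eval cancels on both sides
        second_score = explore - fpu_reduction      # unvisited: net_eval - reduction
        if second_score > first_score:
            return first_visits
    return None  # never selected within max_visits


# Equal priors of 0.1, as in the example above.
print(visits_before_second_pick(psa=0.1, cfg_puct=1.0, fpu_reduction=0.2))
# A flatter policy (prior 0.02) needs considerably more visits.
print(visits_before_second_pick(psa=0.02, cfg_puct=1.0, fpu_reduction=0.2))
```

With no reduction the sibling is picked right after the first visit, which is why the reduction matters most when priors are small.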

@evanroberts85 Your formula scaling the policy seems to not be bad. Just played one match against normal Leela(raw parent net_eval), both using c83e and 600 playouts, it won convincingly, reading out capturing races properly. More testing would be interesting, to know if it is better than eval-0.2f alone.

Here is my branch

That is a good sign. I have got my development tools and Sabaki set up now locally so started doing some testing myself, but I am having to learn c++ as I go along.

Your formula fared well against normal Leela but didn't seem as good against eval-0.2 alone: it got 2/6 (600 playouts, with c83e). That is too few games to be sure, but I also tested a lot of my own ideas and found one which has a record of 21/11 against eval-0.2 at 600 playouts, so I find it more promising. It is this branch.

The basic idea is that the anomaly we are trying to take care of is a case where a 1% policy move which isn't particularly good is taking up all of the visits. We can detect that with simple math: adding up the policies of all visited moves gives a total visited policy, and if it is very low, a lot of the policy's recommendations are being ignored.

So this solution simply multiplies a fpu reduction constant by the total visited policy. If this total is 0.01 then the effective fpu reduction will be very low, which lets the clogged-up policy moves get visited. If it is 0.01 for a good reason, like a very good move was found, the fpu reduction will be weak, but since the very good move has a winrate higher than net_eval it will not be too much of a problem.
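As a rough illustration of the idea, here is a minimal Python sketch that follows the description above literally (a linear scaling of the reduction). The function name, the `(prior, visits)` data layout, and the constant 0.2 are assumptions for the example; the actual branch may compute this differently.

```python
def fpu_for_unvisited(net_eval, children, fpu_reduction_const=0.2):
    """First-play urgency with the reduction scaled by total visited policy.

    `children` is a list of (policy_prior, visits) pairs for one parent node.
    """
    total_visited_policy = sum(prior for prior, visits in children if visits > 0)
    return net_eval - fpu_reduction_const * total_visited_policy


# A 1% policy move has absorbed every visit: the reduction is almost zero.
clogged = [(0.01, 50), (0.40, 0), (0.30, 0)]
print(fpu_for_unvisited(0.5, clogged))

# The high-policy moves have been visited: most of the reduction applies.
healthy = [(0.40, 30), (0.30, 20), (0.01, 0)]
print(fpu_for_unvisited(0.5, healthy))
```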

Edit: current results are, at 600 playouts:

27/15 for my branch against eval-0.2 on network c83e

3/1 for my branch against eval-0.2 on network 714a

I will merge this, after adding the old policy as a tunable (for weak networks);

leelaz v leelaz-tuned (433/1000 games)

board size: 19 komi: 7.5

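| Player | Wins | Win % | Wins as black | % | Wins as white | % | Avg CPU |
|---|---|---|---|---|---|---|---|
| leelaz | 106 | 24.48% | 49 | 22.58% | 57 | 26.39% | 520.75 |
| leelaz-tuned | 327 | 75.52% | 159 | 73.61% | 168 | 77.42% | 487.62 |
| Total by color | | | 208 | 48.04% | 225 | 51.96% | |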

leelaz-m2_2 v leelaz-tuned (432/1000 games)

board size: 19 komi: 7.5

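| Player | Wins | Win % | Wins as black | % | Wins as white | % | Avg CPU |
|---|---|---|---|---|---|---|---|
| leelaz-m2_2 | 189 | 43.75% | 102 | 47.22% | 87 | 40.28% | 511.17 |
| leelaz-tuned | 243 | 56.25% | 129 | 59.72% | 114 | 52.78% | 508.18 |
| Total by color | | | 231 | 53.47% | 201 | 46.53% | |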

PUCT=0.8, reduction=0.22

Network was 5773f44c

Are we staying with 1600 playouts, or are you considering putting some of the tuning gain into speed up by lowering playouts? In general, are there any changes to the plan you posted 5 days ago, before discovering the training data shuffling issue?

@jkiliani There is something to be said for keeping the numerical value of 1600 playouts. It's what AlphaGo Zero used, and it's what people have been using, and it's what people know. Keeping it at that number will prevent a lot of questions about "hey this is different than AGZ".

@WhiteHalmos I'm not arguing that point, and I suppose it would prevent such questions... but at some point we will need to ensure our game rate doesn't drop too much, e.g. when going to 10 blocks. Lowering playouts is the easiest such method that comes to mind.

I have been running tests with Odin at 1000 simulations, with no network, against Gnugo on 9x9. At least 3000 games were played for each experiment.

It turned out that I still used FPU=-0.5 as default, and in my tests it is also the best option. All others are about 2%-4% worse.

FPU = MostVisitedWR - k was worst with k = 0; with k = 0.1 or something near it, it could be almost as good as the default

FPU = AverageWR - k, slightly less bad and effect of k less clear

I am still running some k = 0.06 experiments but so far it looks like FPU=0.5 is rock solid and simply the best for Odin 9x9 MCTS.

Interesting. It would indicate that 0.5 is good for 9x9 but bad for 19x19, possibly because the branching factor is lower. Or it depends on the search algorithm.

@Eddh

I have started on a new approach to balancing Winrate, Policy Score and influence of number of visits (child and parent). It should fix all the issues you have mentioned before.

Winrate and policy_score are converted into points using logarithms. So if 50% is 0 points, 25% is -10 points, 12.5% is -20 points, etc. For scores above 50% I use the distance from 100%, so 75% is +10 points, 87.5% is +20 points, etc.

This means I can separate out the psa from the PUCT algorithm. Now the total value is = win_points + policy_points + puct_points. I just need to find a good balance between the three.

Edit: Realised weighting of policy needs to be related to number of visits otherwise a poor policy score can never be selected, so might add it back into puct.
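The winrate-to-points conversion described above can be sketched in a few lines of Python. Only the winrate mapping is spelled out in the comment, so only that part is shown; the function name, the `points_per_halving` parameter, and the clamping with `eps` are assumptions added so the example runs at 0% and 100%.

```python
import math


def win_points(winrate, points_per_halving=10.0, eps=1e-9):
    """Convert a winrate in [0, 1] to log-scaled points centred on 50%."""
    w = min(max(winrate, eps), 1.0 - eps)  # clamp so log2 never sees 0
    if w <= 0.5:
        # Each halving of the winrate costs `points_per_halving` points.
        return points_per_halving * math.log2(2.0 * w)
    # Above 50%, use the distance from 100% and mirror the sign.
    return -points_per_halving * math.log2(2.0 * (1.0 - w))


for w in (0.125, 0.25, 0.5, 0.75, 0.875):
    print(w, win_points(w))
```

One consequence of this scale is that a change from 98% to 99% is worth as many points as a change from 50% to 75%, so small differences near the extremes carry a lot of weight.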

Yeah, you need to be careful tweaking the puct formula itself. DeepMind obviously didn't make it that way mindlessly. In the AlphaGo Zero paper they say the goal of the formula is that it favors moves recommended by the policy early on, but as the visit count gets higher it is more and more based on pure winrate.

> they say the goal of the formula is that it favors moves recommended by the policy early on, but as the visit count gets higher it is more and more based on pure winrate.

Well that is just the entire idea of UCT itself. There's the original one (using log), the one in the AGZ paper (using sqrt), and there's also a variant (UCT with priors) that's described in another paper the AGZ one refers to.

They are all fairly close in strength as far as I remember.

Is there going to be a release with mandatory update for this? There's a good chance some nets will scale better with FPU reduction than others, and not having a uniform UCT search code over all clients may bias network testing results.

I found this which seems quite interesting : https://ewrl.files.wordpress.com/2011/08/ewrl2011_submission_29.pdf

From what I understand about this "double progressive widening", it seems to me like fpu would disappear. Instead, once the best scored explored node is sufficiently exploited, an unvisited node is picked. We would no longer directly compare unvisited nodes to visited ones, which is what led us to these problems. They use similar reasoning to what @killerducky said:

"it is of little importance to build a very wide tree at the random node level (i.e. to explore many different random occurrences of the events). In this setting, the best strategy is to explore very few random occurrences, and to build a tall tree, with many leaves being final nodes"

I will try to see if I can adapt this to LeelaZero. Potentially we could have a general solution that works for all networks.
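To show what such an adaptation might look like, here is a rough Python sketch of a plain (single) progressive-widening rule applied to move selection. It is a simplification of my own, not the paper's double variant and not Leela Zero code: the widening budget `ceil(c * n^alpha)`, the constants, and the dict layout are all assumptions.

```python
import math


def allowed_children(parent_visits, c=1.0, alpha=0.5):
    """Number of children the search may consider after `parent_visits` visits."""
    return max(1, math.ceil(c * parent_visits ** alpha))


def select_child(children, parent_visits, cfg_puct=0.8, c=1.0, alpha=0.5):
    """Pick a child index without ever scoring unvisited nodes against visited ones.

    `children` is a non-empty list of dicts with keys 'prior', 'visits' and
    'winrate', sorted by descending prior.
    """
    visited = [i for i, ch in enumerate(children) if ch["visits"] > 0]
    unvisited = [i for i, ch in enumerate(children) if ch["visits"] == 0]
    # Widen: open the highest-prior unvisited child once the budget allows it.
    if unvisited and len(visited) < allowed_children(parent_visits, c, alpha):
        return unvisited[0]

    # Otherwise exploit among the visited children with a PUCT-style score.
    def score(i):
        ch = children[i]
        explore = cfg_puct * ch["prior"] * math.sqrt(parent_visits) / (1 + ch["visits"])
        return ch["winrate"] + explore

    return max(visited, key=score)
```

Because unvisited children are only opened by the visit budget, no first play urgency value is needed in this scheme.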

> Well that is just the entire idea of UCT itself. There's the original one (using log), the one in the AGZ paper (using sqrt), and there's also a variant (UCT with priors) that's described in another paper the AGZ one refers to.

If the original UCT algorithm didn't use priors, then going for high-prior moves before going for high-winrate ones is not the entire idea of UCT. It is the entire idea of the modified UCT used by DeepMind though.

this is the original UCT paper.

There are more recent publications describing how to incorporate prior knowledge about arm likelihood into UCT; I don't remember the links offhand though. I just remember that for some reason AGZ did not use that algorithm but a variation. IIRC the difference is that AGZ applies the prior to the entire right-hand side of the equation, whereas in the original algorithm the prior is a separate term.

Here's a paper I just came across. Go-Ahead: Improving Prior Knowledge Heuristics by Using Information Retrieved from Play Out Simulations

Going to be hard to retrieve information from playouts without having playouts.

Other papers were linked earlier in this thread, around this point: https://github.com/gcp/leela-zero/issues/696#issuecomment-360601056. They use the term "search seeding" when setting priors is involved.

Here's also one paper with very similar neural net evaluation as AGZ, but in Hex instead of Go: https://arxiv.org/abs/1705.08439. Chapter 4 and Appendix C are about MCTS.

> Is there going to be a release with mandatory update for this?

This and tree reuse (which we didn't enforce yet as there were bugs with time controls etc)

> There's a good chance some nets will scale better with FPU reduction than others

There's no evidence of this, aside from networks which have a near-uniform policy, which no longer applies. So what makes you say "there's a good chance"?

If this causes a strong bias to network strength we probably need to back it out again ASAP.

> There's no evidence of this, aside from networks which have a near-uniform policy, which no longer applies. So what makes you say "there's a good chance"?

The tests I conducted at low playouts currently show pretty convincingly that scaling with playouts varies very widely between different nets. For recent nets, I'd have to consider the screening at low playouts pretty much a failure, mainly for this reason.

However, if the MCTS playout scaling is very different based on the net, is it really a stretch to think that scaling may also depend on UCT parameters, to more than a minor extent?

> is it really a stretch to think that scaling may also depend on UCT parameters, to more than a minor extent?

It may or may not. I'd suspect likely not as long as the shape of the priors is roughly similar. But there's no evidence and there's no data, so obviously I don't think there's a "good chance". I would never have merged this otherwise.