Leela-zero: Version 0.10 released - Next steps

Version 0.10 is released now. If no major bugs surface in the next few days the server will start enforcing this version.

There is this 1500+ post issue where most plans for the future were posted in the past. It's become rather problematic to read, especially on mobile, and mixed with a lot of theories (most not backed with any data or experiments 😉) so I'll post my plans and thoughts for the near future in this issue.

It looks like we're slowly reaching the maximum what 64x5 is capable of. I will let this run until about 2/3 of the training window is from the same network without improvement, and then drop the learning rate. I expect that's the last time we can do that and (maybe!) see some improvement.

I have been training a 128x6 network starting from the bug-fixed data (i.e. starting around eebb910d) and gradually moving it up to present day. Once 64x5 has completely stalled, I will see if I can get the 128x6 to beat it. If that works out, we can just continue from there and effectively skip the first 6500 Elo and see how much higher we can get (and perhaps do the same with even bigger networks) from continuing the current run.

If that kind of bootstrapping turns out not to work, I'd be interested in doing a new run. My ideas for that right now:

Somewhere between 128x6 and 128x10 sized network. 128x10 would be 8 times slower, but there is a ~2x speed improvement that we could expect to have merged in by then, and the total running time would be around half a year maybe? "Short" enough that people are probably mostly going to stick around. Hopefully also "big" enough that we can see pro level play.

Immediately use new networks for self play (i.e. according to the latest AZ paper). We see very strong strength see-sawing right now. It is possible that using the new network immediately lets the learning figure out why some of those are bad and thus produce faster improvement. It's also possible that this procedure produces no or very slow improvement for our unsynchronized distributed set up and this run ends up being a total failure. But I think we should try to find this out, in the interest of answering the question in case anyone ever tries a "full" 256x20 run on BOINC or an improved version of this project.

Small revision of weights format. There is a redundant bias layer (convolution output before BN) that needs to go, and I want to add a shift layer after the BatchNorm layers. The latter hasn't been generally shown to provide improvements (and I never found any in Go either), but it is computationally almost free and makes the design more generic, so we might as well include it. (Note that scale layers are completely redundant in the AGZ architecture so no point in adding those)

There's been a demonstration that instead of stopping at 1600 playouts per move, it may be more computationally efficient to stop at 2200 "visits" per move. So we should do that.

Thanks to all who have contributed computation power and code contributions to the project so far. We've validated that the AlphaGo approach is reproducible in a distributed setting - even if only on smaller scale - and made a dan player appear out of thin air.

Some personal words:

I have been very, very happy with the quality and extent of code contributions so far. It seems that many of you have found the codebase approachable enough to make major enhancements, or use it as a base for further learning or other experiments about Go or machine learning. I could not have hoped for a more positive outcome in that regard. My initial estimate was that 10-50 people would run the client, maybe one person would submit build fixes, and that would be it. Clearly, I was off by an order of magnitude, and I'm spending much more time than foreseen on doing things like reviewing pull requests etc. So please have some patience in that regard - I will keep trying to do those thoroughly.

For the people who have a lot of ideas and like to argue: convincing, actionable data (or even better, code that can be tested for effectiveness) will make my opinion flip-flop like the best/worst politician, whereas arguing with words only is likely to be as fun and effective as slamming your head against a wall repeatedly.

Miscellaneous:

I am very interested in any ideas or contributions that make me more redundant for this project. I have some ideas of my own that I want to test. My wife would also like to see me again!

The training and server portions run fully automatically now for the most part (cough @roy7), although some other things like uploading training data have been proven problematic to automate, so that won't be live for the foreseeable future either.

There's been a lot of concern about bad actors, vandalism, broken clients, etc, but so far the learning seems to be simply robust against this. There is now some ability to start filtering bad training data, but it remains tricky to make this solid and not give too much false positives. I'd advise only worrying when there are actual problems.

All 732 comments

How does the 128x6 network training work? If we keep the same training pipeline as 64x5, it needs testing self-plays. Would the self-plays be done by some contributing clients, or would you do all of them yourself?

"Once 64x5 has completely stalled, I will see if I can get the 128x6 to beat it."

Does it beat it now? :)

@gcp Thanks!

Would the self-plays be done by some contributing clients, or would you do all of them yourself?

I can upload the networks and schedule tests for them, same as it happens for the regular networks. The clients won't really notice, they'll just run a bit slower :-)

@gcp thanks.

Thank you for running this project, it's been a delight to follow and contribute wherever possible!

About the increase in network size: Is there any good way to test in which cases increasing number of filters helps more, and where the Deepmind approach "Stack more layers" is better? In other words, is there a significant possibility that something like 64x10 might reach similar strength as 128x6? Is there any way other than training supervised nets to find out?

GCP and everyone involved, thank you very much for all your efforts! This project has been truly fascinating to follow it, both as a Go player as well as a developer. Looking forward to further experiments.

Thks for the computer only version im generating games in 17 minutes instead of 5 hours XD, what a massive improvement!

Thank you for running and managing this wonderful project :)

Plans sound good. Just to be clear, with the 128x6. network are you moving up by the intevals of the 65x5 “best” networks using the 250k game window that those networs were trained upon? Also are you using a set number of steps? I guess this should work but other approaches are likely to work better.

Also, this will not really tell us if the difference in strength is due to the size of the networks or the differences in how they are trained.

Is there any good way to test in which cases increasing number of filters helps more, and where the Deepmind approach "Stack more layers" is better?

Isn't Deepmind's approach stacking both more filters and more layers? AGZ has 256 filters.

About the increase in network size: Is there any good way to test in which cases increasing number of filters helps more, and where the Deepmind approach "Stack more layers" is better? In other words, is there a significant possibility that something like 64x10 might reach similar strength as 128x6? Is there any way other than training supervised nets to find out?

I believe that in general stacking deeper is more attractive for the same (theoretical!) computational effort. You leave more opportunity to develop "higher level" features (or not, when not needed, especially in a resnet where inputs are forwarded!), or possibility for features to spread out their influence spatially. Deeper stacks are harder to train, but ResNets and BN appear to be pretty good at dealing with that.

But in terms of computational efficiency, a larger amount of filters tends to behave better, especially on big GPUs, because that part of the computation goes in parallel. The layers need to be processed serially.

"In theory" 128 filters are 4 times slower than 64 filters, but in practice, the difference is going to be much smaller.

Isn't Deepmind's approach stacking both more filters and more layers? AGZ has 256 filters.

They did 256x20 and 256x40. They did not do 384x20, for example.

Just to be clear, with the 128x6. network are you moving up by the intevals of the 65x5 “best” networks using the 250k game window that those networs were trained upon? I guess this should work but other approaches are likely to work better.

No, I started with a huge window and have been narrowing it to 250k.

Thanks for the fantastic work! I'm interested in knowing how results for 2200 visits bit were obtained. Also, has anyone trained a supervised network with different depths and filters?

I'm interested in knowing how results for 2200 visits bit were obtained

See the discussion in #546. There's still some work ongoing in this area, and further testing, but it looks promising. The idea is not to spend too much effort in lines that are very forced anyway.

First I would like to thank @gcp and all contributors for this awesome project and efforts. Now we can prepare for the next run, and here are things I would like to clarify or discuss:

- AGZ uses rectifier nonlinearity, while we are currently using ReLU if I am not terribly wrong. For the new network, it could be desirable to change the activation from ReLU to a nonlinear one, but unfortunately the AGZ paper lacks details about this. What would be our choice? There are many options like LeakyReLU, CReLU, or ELU.

- For the training window, I still do not see any advantage we gain from including data from too weak networks. In addition to the training window by number of games, how about filtering out games based on rating also (like 300 or any reasonable value)?

- I am still dubious if the AZ approach is reproducible for us, when the computational resource is fluctuating. A milder approach would be to accept-if-not-too-bad one, and to prioritize networks with more training steps. Is it reasonable enough?

- For networks with more filters, #523 must be merged somehow. What should be done to accomplish this as fast as possible?

- This question probably cannot be answered without any experiment, but I always have been thinking that 8-moves history for the ko detection is too much, though more feature planes will lead to a stronger AI somehow practically. Can we consider reducing the input dimension from current 8x2+2 to a smaller one, like 4x2+2?

A milder approach would be to accept-if-not-too-bad one, and to prioritize networks with more training steps. Is it reasonable enough?

But the goal of AZ is to eliminate evaluation matches. If you need to know "not-too-bad", you need evaluation matches and you could as well go full AGZ. (This is pretty much the opposite argument of what we used to reject switching to the AZ method this run)

Also, shouldn't we try to reproduce AZ exactly because "we're not sure if it is reproducible"? If we change all kind of things and then fail (or succeed), we still do not know if it is because we changed a bunch of stuff or because it's an inherently bad method.

Anyway, before we start with a new larger network. How viable would it be to do one or a few runs with a smaller network, but with some variables adapted. For example we could use the current games and train a 3x32 network, and then run 500k games. After that run a 3x32 network from scratch and run 1m games to see the result. (And would these results carry over to larger networks?)

Another experiment i like to see would be to try different window sizes. We could use the current 5x64 network for that. Just go back 1m games, and train the then best network with a 100k or 500k window (or possibly 2 runs with each window size), and then run 500k games or so.

We're at 43k games/day now, so experiments like that would take ~2 weeks, but might give valuable data on our next run that might take several months. using a 3x32 network could probably 4 fold our game output and only take a couple days to get meaningful result.

AGZ uses rectifier nonlinearity, while we are currently using ReLU if I am not terribly wrong. For the new network, it could be desirable to change the activation from ReLU to a nonlinear one, but unfortunately the AGZ paper lacks details about this. What would be our choice? There are many options like LeakyReLU, CReLU, or ELU.

BN dramatically reduces, if not totally eliminates the assumed advantages of other fancy activations over ReLU. This is why all those huge CNNs(Res-101, 1201, etc) prefer trying all kinds of different structures and filter/layer combinations rather than exploiting the seemingly low-hanging fruits of better activation functions. They are not low-hanging fruits because they only offer advantages in some non-general cases and controlled environments.

By the way ReLU is a nonlinear function, and two layers of ReLU could theoretically approximate all continuous functions, just like tanh and sigmoid.

This question probably cannot be answered without any experiment, but I always have been thinking that 8-moves history for the ko detection is too much, though more feature planes will lead to a stronger AI somehow practically. Can we consider reducing the input dimension from current 8x2+2 to a smaller one, like 4x2+2?

4x2+2 can't detect triple-ko.

>

I started [the 128x6 network] with a huge window and have been narrowing it.

You could repeat the same process but with a 64x5 network like the current

one, to see how much of the gain (if there is a gain) comes from the

increase in network size and what effect just changing the training had.

@Dorus It is true that there is no evaluation in AZ, but I am not sure if it is the purpose. In fact, the motivation for changes from AGZ to AZ is unclear in the paper.

@RavnaBergsndot That is theoretically true to an extent, but in practice it affects the performance more or less, usually depending on the nature of the dataset. A simple example would be this. After all, this project aims to be a faithful replication of AGZ, so why not? Also AFAIK a triple ko consist of 6 moves so 3x2+2 will do, and we are not adopting superko rules, so is it any meaningful to detect a triple ko after all?

A consistent training procedure with no added variables would be nice to compare different configurations. I think the 5x64 has quite a bit more potential, but was ham-stringed by a rough start. I like the idea of the AZ method of using the latest network. I vote to do a small scale AZ approach first.

That is theoretically true to an extent, but in practice it affects the performance more or less, usually depending on the nature of the dataset. A simple example would be this. After all, this project aims to be a faithful replication of AGZ, so why not? Also AFAIK a triple ko consist of 6 moves so 3x2+2 will do, and we are not adopting superko rules, so is it any meaningful to detect a triple ko after all?

Most of the experiments in that paper were done without BN. BN enforces most input points falling into the most interesting part of the ReLU domain, therefore reduces the need of non-zero value outputs when the input is negative. We need more recent experiments.

I'm also not convinced that AGZ's "rectifier nonlinearity" means "rectifier plus nonlinearity on its negative domain" instead of just ReLU itself.

3x2+2 won't do, because that "x2" part is for the same turn. "These planes are concatenated together to give input features st = [Xt, Yt, Xt−1, Yt−1, ..., Xt−7, Yt−7, C]." Therefore for 6 moves, we need at least 6x2+2.

I feel the need for a more general discussion, more beginner friendly and less specifically about

leela. Please have a look at https://www.game-ai-forum.org/viewforum.php?f=21

@RavnaBergsndot Well but the batch normalization was there in CIFAR-100 benchmark. And if the shift variable is somehow set inappropriately during training, the batchnorm layer can shift the input to the negative region of ReLU ("dying ReLU"), so that is the idea behind all the modified rectifier units. I can hardly imagine any case referring to ReLU by the "rectifier nonlinearity", because you know, ReLU is a rectifier linear unit.

And you are right about the input features, though I still do not see why we need to detect triple ko at the first place.

though I still do not see why we need to detect triple ko at the first place.

Triple kos matter in rule systems without superkos.

Actually, they change the result of the game.

With superko, they would just make a move illegal.

Without it, they form a (really interesting in a game, I might add) situation where, if neither player is willing to give way, the game cannot end and is declared a draw (actually a "no result", but in situations where no return-matches are played, it's effectively the same).

Not including enough information for triple-ko detection in the NN would make the network unable to tell the difference between a situation where a move would end the game without a win or a loss, and one that will.

So even if we aren't interested in superko, it's still a bare minimum to be able to detect triple ko.

That being said, It might help a lot in superko detection as well, since gapped repetitions are exceedingly rare in actual play, perhaps sufficiently so that the "damage" of not recognizing these cases without search might not be felt.

However, the important thing was to demonstrate why triple ko detection is needed even if we do not use superko.

Why 128 filters? 24 blocks * 64 filters should be consuming same time as 6 * 128, I wonder how blocks/filters affect strength...

Maybe we can train a 24 * 64 network and a 6 * 128 network, to compare with them?

64 filters, 24 blocks will almost certainly use more time than 128 filters, 6 blocks. @gcp explained earlier that increasing the number of filters allows more parallelization and is thus usually much less than quadratic in computation time on a GPU. Layers have to be evaluated serially on the other hand.

I'm also not convinced that AGZ's "rectifier nonlinearity" means "rectifier plus nonlinearity on its negative domain" instead of just ReLU itself...I can hardly imagine any case referring to ReLU by the "rectifier nonlinearity", because you know, ReLU is a rectifier linear unit.

I'm 99.9% sure that "rectifier nonlinearity" exactly means ReLU. ReLU is a non-linear unit constructed from a rectifier and a linear unit. A rectified linear unit is a rectifier non-linearity.

As was already pointed out, the advantages of "more advanced" activation units disappear when there are BN layers involved, which is why everyone including DeepMind just uses BN+ReLU.

2) In addition to the training window by number of games, how about filtering out games based on rating also (like 300 or any reasonable value)?

It's important to make sure the window has enough data or you will get catastrophic over-fitting, especially for the value heads. You can test this yourself. This can't be guaranteed if you introduce a rating cutoff so it's a bad idea.

You could repeat the same process but with a 64x5 network like the current one, to see how much of the gain (if there is a gain) comes from the increase in network size and what effect just changing the training had.

Be my guest and be sure to let us know the result.

While it is true that BN mitigates the dying ReLU problem a lot (and especially considering we are using ResNet) and therefore BN+ReLU practically works very well, it is not completely true that the architecture is completely free from the problem. Of course, if there is no problem with the current LZ nets, the change is unlikely to be made anyways.

For the training window, overfitting can be of course potentially problematic, but the counter-argument that it can be bad to learn from bad policies and results of weaker games also makes sense, and we don't really know if overfitting is severe after all, so both are not well supported by data, I would say. So what kind of experiment is good enough here? Training with a smaller window is fine, but doing that for several generations with self-plays from that trained network is nearly impossible for an individual. So if we restrict the experiment to be done for a single generation, how can we measure the strength? Self-play ratings are not necessarily applicable to non-LZ players. Is the match between networks trained with narrower and wider windows meaningful enough?

@gcp "Once 64x5 has completely stalled, I will see if I can get the 128x6 to beat it."

IMHO 128x6 doesn't even need to beat 64x5. Suppose you find out that 128x6 is about 300, 500, 700, or even 1000 elos lower than 64x5. It still means the former can play reasonably. Then, we can just adopt it and improve it by training on its own self-play games. It would still be much better than starting from the scratch.

@gcp When you decide that we should move to 128x6, you can pitch it against at least 3 best networks (latest but about 1000 elos apart). Then we can decide the exact elo of the initial 128x6, which should be our starting point.

Why put that burden on gcp? Dont be lazy and just run it yourself @ashinpan :)

Or just wait for one of our other enthusiasts to do so, i'm 100% sure somebody will.

IMHO 128x6 doesn't even need to beat 64x5.

If it can't from a similar training set, then what's the point of moving to 128x6 - with the same training set?

@Dorus We haven't reached the complete stall yet, and it is @gcp who must decide that we actually have. Besides, he just can send out matches to do such a test; he doesn't need to do anything.

@gcp Have you read my comment to the end?

If 128x6 trained by supervised learning can't beat 64x5 trained to saturation by reinforcement learning, that mainly implies that the supervised learning can't absorb all the knowledge from games it didn't play itself. It certainly doesn't mean that such a net wouldn't beat 64x5 in short order once trained by reinforcement learning itself.

@jkiliani I agree with you.

I found leelaz has a tendency to forget learnd knowledge. Though current weight 65e94e52 much stronger than before on midgame, earlier 40b94cfe seems playing better on endgame. Since 58da6176 beat 40b94cfe by midgame, the endgame playing of leelaz improved so slowly. If we train a network based on previous network like AlphaZero, could it work? Or any better way to solve it?

@fffasttime I think if your observation is correct, it simply means there is still considerable improvement potential in 64x5. What will likely happen is that eventually the learning process won't produce stronger networks anymore at 0.001 learning rate, but that with reduced rate, the networks will reach slightly higher mid game strength than now combined with higher endgame strength than 40b94cfe. We'll see what happens, but this run definitely doesn't seem to be quite over yet.

The reason behind self-forgetting is highly likely due to network capability or learning rate. As suggested in the OP, we can try lowering the learning rate, and if it still stalls we might safely conclude that this is close to the limit of the current architecture.

@fffasttime It is well-known that Alphago also makes quirky endgame moves, probably owing to the same cause here. Perhaps this is the motivation for Alphazero adopting the method of training on the last network.

Maybe we should reconsider the resigning... it's possible we'd get better results letting all games play to the end, maybe with just 400 playouts after one of the players falls below the resignation threshold.

In either case, reinforcement learning so far appears to be remarkably robust in that it fixes its own weaknesses even with the presence of bad data. I doubt the problem will persist.

A new network could understand that one move is bad, but does not yet know that an alternative is even worse, because it has not been played much before in training games. This reminds me of the phrase "A little knowledge is a dangerous thing". In this case training with the new network despite its new weakness may be beneficial.

That said training with a network which scores only <30% will mean that then following network will need to score 70+% just to get back to where we were, assuming Elo ratings work cumulatively (which they do not). I can not see this leading to faster overall progress given how badly the average network does.

I am optimistic about the 128x6 network myself. I will try to get training running on my laptop tonight to do some tests but without a good GPU I am not sure if I can get reasonable results fast enough.

@gcp (Sorry, I just noticed your edited comment) "If it can't from a similar training set, then what's the point of moving to 128x6 - with the same training set?" I never said that it should be the same training set. We can start training on the new self-playing games of 128x6. (If I am not wrong, this was how AG Master was born)

I never said that it should be the same training set.

But I am saying that it should. If training 128x6 on the training data that is beyond saturating 64x5 does not produce an improvement, then this implies that data is sub-optimal for that network. And we should reset rather than use data we known is not optimal (and get risk getting stuck in a lower optimum).

If 128x6 trained by supervised learning can't beat 64x5 trained to saturation by reinforcement learning, that mainly implies that the supervised learning can't absorb all the knowledge from games it didn't play itself. It certainly doesn't mean that such a net wouldn't beat 64x5 in short order once trained by reinforcement learning itself.

Exactly. My point is that if 128x6 cannot (somehow) use the training data from the 64x5 well enough, we should get new data, and not try recover a half-crippled net.

A 4th point for a new run would be to extend the training data format to include the resign analysis. Shouldn't forget about that either.

If I am not wrong, this was how AG Master was born

...and we get back to the open question, that if AG Master with 256x20 was better than AGZ with 256x20 (this can be inferred from the graphs in the papers), why did DeepMind do AGZ 256x40, and not AG Master 256x40.

...and we get back to the open question, that if AG Master with 256x20 was better than AGZ with 256x20 (this can be inferred from the graphs in the appers), why did DeepMind do AGZ 256x40, and not AG Master 256x40.

My best guess is that "Go program trained without human knowledge" sells better in a paper than "Even more awesome Go program than our last awesome Go program". They had to make their point without human input data for that, even though the reason for AGZ 40 blocks being stronger than AG Master may very well be simply in the number of blocks, not the Zero approach. They also shifted goal posts in the latest Alphazero paper, by making their reference Go program there AGZ 20 blocks instead of 40 blocks.

@gcp Let us think in this way:

1) AG Lee trained on human and self-play games

2) AG Master trained only on self-play games but initiated with a network (AG Lee) carrying human bias

3) AG Zero trained on self-play games from scratch.

It is obviously not the objective of Deepmind to make the best possible Go playing bot, but to let machines go where no human has gone. Then, even if AG Zero is inferior to AG Master at 256x20 level, they must still push AG Zero.

In our case, our initial 128x6 network would be admittedly of supervised learning; but its supervisor is another network (64x5), not human.

@gcp "But I am saying that it should. If training 128x6 on the training data that is beyond saturating 64x5 does not produce an improvement, then this implies that data is sub-optimal for that network. And we should reset rather than use data we known is not optimal (and get risk getting stuck in a lower optimum)." I agree if by resetting you mean "reset the data", i.e., to train on self-playing games only. But I cannot see why we should start again with a network of zero elo, which makes completely random moves.

Maybe we should have more patience, I think that 64x5 present can reach 7500 self-estimate elo rating before 4000,000 games played. But if we want to get much obvious improvement, IMHO, I don' think a little change(in this case is from 64×5 to 128×6) can reach a obvious upper limitation.

A change in neural network structure is unfortunately never "little", since it means we cannot continue training from the previous best network, but have to transfer the knowledge encoded in those self-play games into a larger neural network.

At present, the big question about this procedure is whether or not doing so limits the achievable strength of the larger network by self-play, i.e. "cripples" it, and this is not easy to test. Failing to achieve a 128x6 network by supervised learning from 64x5 self-play data that is stronger than the latest 64x5 network does not prove that the network is crippled, and successfully training such a 128x6 network also does not prove it is not crippled.

IMHO the only way to test this would be to continue until 64x5 has plateaued, then take an arbitrary 64x5 (supervised) network, and see whether or not self-play reinforcement will take this network to the same plateau as achieved by starting from Zero.

Of course it may well be a good idea to restart anyway, to cleanly implement a better training format, possibly training procedure (AlphaZero), and code changes such as visit count. However, it would be very valuable to know whether the bootstrapping procedure works in theory without long-term damage to the network, since eventually even the second run (128x10?) would stall out.

@jkiliani TX for your interpretation, I wonder whether it makes sense to train a 128x6 network with latest 500k games ptoduced by 64×5 network? Come up with anothet deal, is it worth trying a 64×10 network?

I have to admit that without the hardware to run training experiments myself, anything I tell you about how specifically to do it would be pure speculation. My feeling is @gcp will succeed in training a 128x6 net stronger than the best 64x5 if he uses an annealing schedule and samples the network often enough, but other people who tried it themselves are better qualified to answer this.

I do not understand why we should reset the training. I understand that if a bigger network is trained with the same sample of the smaller, will take more time and will reach a point that is not optimum for its search space. But at worst it just need more sample and more training to reach the level of the smaller not need to trow away all the sample we add till now.

@gcp are you planning on moving to AlphaZero-like (no eval) NN training steps AND change other issues such as lack of symmetry-reflrection usage etc. ?

Because I was rereading the paper, listing all the different random or noisy behaviors there would be in match evaluations in AlphaZero (had they been used) - and... I found none...

Since eval matches in AGZ had no temperature, no noise, no randomness, no regularization for the net, and the network had no usage of symmetry reflections - there seem to be no random or noisy factors at all...

How would an evaluation match even work? with no rollouts, and no other random factors, then each UCT with the same NN would deterministically always choose the same move 1, and respond with the same move 2 to the other network's move 1.

Aren't there only 2 games possible?

What are the other random factors I am missing? or is that the reason to remove the eval? (it doesn't do anything if there are only 2 games possible...)

lack of symmetry-reflrection usage

God no, this is very useful for go.

But you have an excellent point about the inability to do match games in their setup.

@jkiliani "At present, the big question about this procedure is whether or not doing so limits the achievable strength of the larger network by self-play, i.e. "cripples" it, and this is not easy to test."

If we can infer anything from the history of AG Lee and AG Master, it seems to be that:

(1) The quirks of older networks may remain very long, even forever. AG Lee has quirky endgame moves, and AG Master also has this characteristic.

(2) But the blind spot of AG Lee, the one that allowed Lee Sedol to win one game against it, was remedied by training on pure self-play games (so said David Silver in an interview).

So, I think we don't need to worry a lot for having to bootstrap from a supervised bigger network.

(1) that quirk is just MCTS not caring about big or small losses, only about certain or uncertain losses. This has nothing to do with the NN.

But at worst it just need more sample and more training to reach the level of the smaller not need to trow away all the sample we add till now.

Maybe, maybe not. I think there's an argument that due to the forced exploration and randomness, it will indeed eventually "fill in" any holes it might have.

A nice example is a network trained from 9 dan pro games: it will be even worse at ladders than our current 64x5. But the policy priors from the pro games will be very much against situations where a ladder can start. So you need the randomness to "end up" in a ladder so it can discover about them.

But it's not so clear at this point if this is overall faster or not than being tabula rasa.

God no, this is very useful for go.

That was probably because they insisted in "no domain knowledge" other than basic game rules. Btw, i wonder how much stronger a net can become if you included some domain knowledge like working ladder moves. The original AG also had planes for liberties etc, i do not think those planes are all that useful, but a plane for ladders* and captures could be very useful considering the weaknesses the current network has.

The current network makes many mistakes with: Self atari. Long diagonal ladders. Second line atari's that result in a capture on the first line next (this is a type of ladder.) Captures of large groups with 1 eye and no other liberties.

On the subject of capturing large groups: Possibly the network is trained to learn certain moves are invalid. But wouldn't it be better to not train it on invalid moves? Any output for invalid moves is zero'd out anyway, so the network could just produce garbage here, it wont make any difference to the end result. This might make it easier for the network to learn about valid captures.

*) Ladders are all captures that happen with a seri of atari's on a group with 2 liberties, decreasing them to 1 each time until eventually the group is captured.

@Dorus "(1) that quirk is just MCTS not caring about big or small losses, only about certain or uncertain losses. This has nothing to do with the NN."

Then, it is even better. We won't have anything to worry about.

Pardon the silly question, but rather than starting over with a new network architecture, why wouldn't you use the output of the current network as additional inputs to a new one?

@gcp are you tempted to adopt AlphaZero's approach of having randomness on all game and not just for the opening?

Is the 0.10.1 any better than 0.10?

@gcp Thanks for all the hard work, and a big thank you to all the code contributors in general! Having this project be open-source is a tremendous help for people like me who like to tinker with things and see what they can do with them. To be honest, all the speed improvements that have been done on this implementation make it more and more easy to just go test something locally, and that's awesome :)

Does it make sense to train specifically for end game situation? For example, the program can select some already played games and start from 150 steps on, the objective is to find a variant of the network that can maximize the area at the end. I think this is a much smaller problem and it should be solved very well with the current network. And once our network learned good end-game play, then the improvement will be focused on the mid-game and openings. Would this be more efficient?

The following might be useful for @gcp. But I suspect he is already aware of it :)

Net2Net: Accelerating Learning via Knowledge Transfer

(Abstract) We introduce techniques for rapidly transferring the information stored in one neural net into another neural net. The main purpose is to accelerate the training of a significantly larger neural net . . .

Is the 0.10.1 any better than 0.10?

It fixed a bug related to maximum number of threads.

I have some doubt about how training is done as I read the AGZ paper closer: in the paper, it seems to me that they always use the search propability distribution π for training, which is proportional to the visit counts for the first 30 moves (temperature=1) and assigns 1 to the move with most visits, which is also the move actually played, and 0 to other moves (temperature=0). However, we have been using the visit counts for training all game long. @gcp commented https://github.com/gcp/leela-zero/issues/78#issuecomment-346347588 that training on the distribution should give a slightly stronger program than training on just the moves, and indeed in the AZ paper, they set temperature=1 all game long. However they use the same temperature setting in self-play and training, while we are taking a hybrid approach. I imagine this could be a potential source of problem, and I think it is at least worth trying to train networks following AGZ starting from the current best network and see whether this leads to faster improvement. For this one may need to re-generate the training data (https://github.com/gcp/leela-zero/issues/167).

I am not saying that we need to follow AGZ completely faithfully. I have several ideas deviating from the AG approach, for example (1) using the Q-value of the root node after a certain number of MCTS playouts (say 1600) in place of the final winner to train the network; (2) add in "the number of moves remaining" as an input feature and generate training data by doing MCTS from random positions with different densities and with the number of moves remaining gradually increasing from 1 to 722. I don't currently have the time and skill to test these ideas, but I would be glad to hear your comments. (By the way, Prof. Paul Purdom is having another offering of his graduate course on AG this semester here at IU, and he doesn't like using the final winner as feedback either. Edit: it turned out that he didn't study the loss function before.)

@alreadydone Alphago Zero was a demonstration of one particular implementation that works. They never proved what they did works best, and they never shared any information they didn't publish with @gcp when he asked, even though that might have helped a lot in the beginning. We have to assume they also had multiple experiments that failed, but they never shared any information on that either. What we're doing here is a _mostly_ faithful reimplementation, which I though was a very good decision since starting a 256x20 run with untested code would have been a disaster, and even distributed we don't have even close to the computing resources of Google.

The general consensus of the project seems to be to gradually try out further deviations from AGZ simply to build up some knowledge on why their approach works, rather than just confirm it did. I can't think of any other way to be successful in the end.

I have some doubt about how training is done as I read the AGZ paper closer: in the paper, it seems to me that they always use the search propability distribution π for training, which is proportional to the visit counts for the first 30 moves (temperature=1) and assigns 1 to the move with most visits, which is also the move actually played, and 0 to other moves (temperature=0)

You are confusing the actual move selection with the data used for the training. They use the temperature for the move selection.

For the training:

" The neural network (p, v) = fθi (s) is adjusted to minimise the error between the predicted value v and the self-play winner z, and to maximise the similarity of the neural network move probabilities p to the search probabilities π "

This obviously makes no sense if you set temperature = 0. And it would be silly, because if the search sees multiple good moves, you're forcing the network to forget about all but the first, which is catastrophic.

using the Q-value of the root node after a certain number of MCTS playouts (say 1600) in place of the final winner to train the network;

It is very likely that this was tried and discarded (it is a common optimization for REINFORCE). People who tried it with supervised-style learning got clearly worse results.

add in "the number of moves remaining" as an input feature

I'm pretty sure the network can calculate 722 - stones on the board.

And if you mean until the original game was stopped, then you would need to predict the future to fill in this input.

@gcp are you tempted to adopt AlphaZero's approach of having randomness on all game and not just for the opening?

I think that rule was added to deal with games with a much lower branching factor, who might not deviate enough otherwise. It probably does not improve go.

On the other hand, yay for less magic constants.

using the Q-value of the root node after a certain number of MCTS playouts

(say 1600) in place of the final winner to train the network;This is far worse. Many people have already tried it.

The policy network already gets the information from the immediate

tree-search so I can see why this would not get good results. You are

effectively doubling up this information.

I can see the idea of not waiting until the final game result though, to

get more immediate feedback. Taking the Q-value of the root node of a move

played a few moves after the one you are training on is the idea I had

thought of and am yet to test.

Do you want to change?

You are confusing the actual move selection with the data used for the training. They use the temperature for the move selection.

"The MCTS search outputs probabilities π of playing each move. ... Once the search is complete, search probabilities π are returned, proportional to N^(1/τ), where N is the visit count of each move from the root state and τ is a parameter controlling temperature."

"the neural network's parameters are updated to make the move probabilities and value (p, v)=f_θ(s) more closely match the improved search probabilities and self-play winner (π, z)"

"The neural network parameters θ are updated to maximize the similarity of the policy vector p_t to the search probabilities π_t, and to minimize the error between the predicted winner v_t and the game winner z"

"a move is played by sampling the search probabilities π_t ... The data for each time-step t is stored as (s_t, π_t, z_t) ... new network parameters θi are trained from data (s, π, z) sampled uniformly among all time-steps of the last iteration(s) of self-play."

The above are all from the AGZ paper, and I cannot see any indication that they are using different temperatures for self-play and training. The search probabilities depend on the temperature, the moves are played according to the search probabilities, and the network is trained to approximate the search probabilities. In our case, what worries me is that although the network is trained to explore all moves according to the visit count, it only sees continuations from one of the moves. I can imagine this have some effect on learning forced moves, e.g. ladders, (self-)atari, and capturing races.

I cannot see any indication that they are using different temperatures for self-play and training.

They're not, nobody is saying this. Again, the temperature to select the move is not necessarily the same as the output of the search probabilities.

"In each iteration, αθ∗ plays 25,000 games of self-play, using 1,600 simulations of MCTS to select

each move (this requires approximately 0.4s per search). For the first 30 moves of each game, the

temperature is set to τ = 1; this selects moves proportionally to their visit count in MCTS, and

ensures a diverse set of positions are encountered. For the remainder of the game, an infinitesimal

temperature is used, τ → 0."

"At the end of the search AlphaGo Zero selects a move a to play in the root position s0 , proportional to its exponentiated visit count, π(a|s0 ) = N (s0, a)1/τ / Pb N (s0 , b)1/τ , where τ is a temperature parameter that controls the level of exploration."

However, I'll give you that it's actually rather confusing:

"MCTS may be viewed as a self-play algorithm that, given neural network parameters θ and a root position s, computes a vector of search probabilities recommending moves to play, π = αθ (s), proportional to the exponentiated visit count for each move, πa ∝ N (s, a)1/τ , where τ is a temperature parameter."

The paragraph you quote is even less clear, but it's the explanation of a figure.

I guess you could also interpret that as saying that after move 30, they train the network to predict the best move only (which would be incredibly arbitrary, much more than only doing so for move selection). They rather consistently note π as having the temperature parameter applied to it.

The next AZ paper:

"The search returns a vector π representing a probability distribution over moves, either proportionally or greedily with respect to the visit counts at the root state."

And they do say:

"Moves are selected in proportion to the root visit count" (i.e. without any qualification that it's the first 30 moves only)

At worst we accidentally used the improved method from the AZ paper. (I wondered about the "either" in the first paragraph, but they use greedy selection for evaluation games)

@gcp what is the process for training on the next window when 8k-256k steps all fail? For the next 8k-256k steps network do you start over from previous best weights or do you continue on from the previous failed 256k step? Latter seems better to me but maybe it would overfit?

Also what about increasing the game window back to 500k games? Or maybe it would overfit because we have a smaller network than google?

An idea for new run: since we are doing some sort of node merge as stated in #576 already, inputting the last 8 board positions aka the last 7 moves into the neural net is less useful. Inputting so many move history only serves the purpose of reading triple ko within 1 playout and it will miss special cases like triple ko stones cycle anyway. Meanwhile the computation is a lot more inflated for some ko corner cases and then we throw the benefit away.

On the other hand, if we input only the last 4 or even just 3 board positions, it will still pass the bare minimum requirement to read Chinese Superko in 1 playout, while the computation resource can be better spent for more blocks or more featuresfilters or more game throughput. Since we are not aiming for perfect play or super-human Go strength in the second run (yet) I guess, such a tradeoff for better reading for large group life and death / long ladders looks attractive to me.

EDIT: There is no clear proof that the so called "Chinese Superko" is used in Chinese go tournaments today. But since 8 is just a magic number set by AGZ, it can also subject to decrease like the featurefilter count or block count, and 3 is at least less magic as there is some historical context.

while the computation resource can be better spent for more blocks or more "features" or more game throughput.

Increasing the number of input features barely increases the number of weights and computations of the network, because the number of intermediate filters in each layer is still 64.

Furthermore, if some information in the 8-step history is not interesting enough, the network will learn to spend its computations elsewhere anyway.

Ouch, so they are called filters not features. I was talking of those 64vs128vs256 thing, and forgot the exact name.

@gcp If you want to get a snapshot of your 128x6 strength, load it in as a match but not against the best-network. That way it won't promote and replace anything if it does win. (Promotion depends on beating current best network.) I'm curious what result 128x6 vs ffc1e51b would have, for instance.

I will let this run until about 2/3 of the training window is from the same network without improvement

Is the training window still at 250K or back to 500K currently?

Just two random thoughts:

- I think the current setup should be let run until it nearly completely stalls, to get as much info as possible (maybe then hack-switch to 6 blocks for a few weeks to see the difference it makes).

- In the longer run, why not 40 blocks? Even if it takes a few years, that's what people are interested in, a real AGZ in the public domain. For the same reason, I'd consider increasing the playouts during selfplay. Aim for maximum strength, not minimum time spent.

For the next 8k-256k steps network do you start over from previous best weights or do you continue on from the previous failed 256k step? Latter seems better to me but maybe it would overfit?

I restart from the best to prevent overfitting. (I do not consider it likely we lack enough steps to see improvement - not with already having had to reduce the learning rate, and no clear progress in the training loss)

Also what about increasing the game window back to 500k games? Or maybe it would overfit because we have a smaller network than google?

It would be less likely to overfit, not more. But I don't see why it would help to include much weaker networks. Our training window is 1/2 that of Google but the network is 64 times smaller. It's likely that a smaller window would help more, but that just ends up equivalent to letting training games accumulate, so I don't really want to change it for now.

Is the training window still at 250K or back to 500K currently?

It's 250K. I used 500K briefly to get the 128x6 started with all games post the major bugfixes.

I think the current setup should be let run until it nearly completely stalls, to get as much info as possible (maybe then hack-switch to 6 blocks for a few weeks to see the difference it makes).

I agree we should try got get as much out of 5x64 as possible. 6x128 is already 5 times slower to compute (ok, not really on most GPU, but in theory), so it will have to overcome that handicap in "real" games.

A strong 5x64 is also very useful for people without a GPU, if someone wants to make a phone app, etc.

In the longer run, why not 40 blocks? Even if it takes a few years, that's what people are interested in, a real AGZ in the public domain. For the same reason, I'd consider increasing the playouts during selfplay. Aim for maximum strength, not minimum time spent.

I think the intermediates are likely to be more useful in the near future, until we all get a few TPU in our desktop machines. Someone should test how many nodes per second a 1080 Ti can search with 256x40. It's not going to be much.

If our bootstrapping 6x128 on 5x64 data works, that's another reason to not leap too far.

I think the timescale of 40x256 is also such that it will take much longer to run than I personally am willing to commit to, and one would probably need to think a bit more about what to do with bad clients and so on. @roy7 intends to open source the server side this weekend, so I am hoping to become redundant soon.

@gcp If you want to get a snapshot of your 128x6 strength, load it in as a match but not against the best-network. That way it won't promote and replace anything if it does win. (Promotion depends on beating current best network.)

Very clever, I will do exactly this for the supervised one and my current candidate.

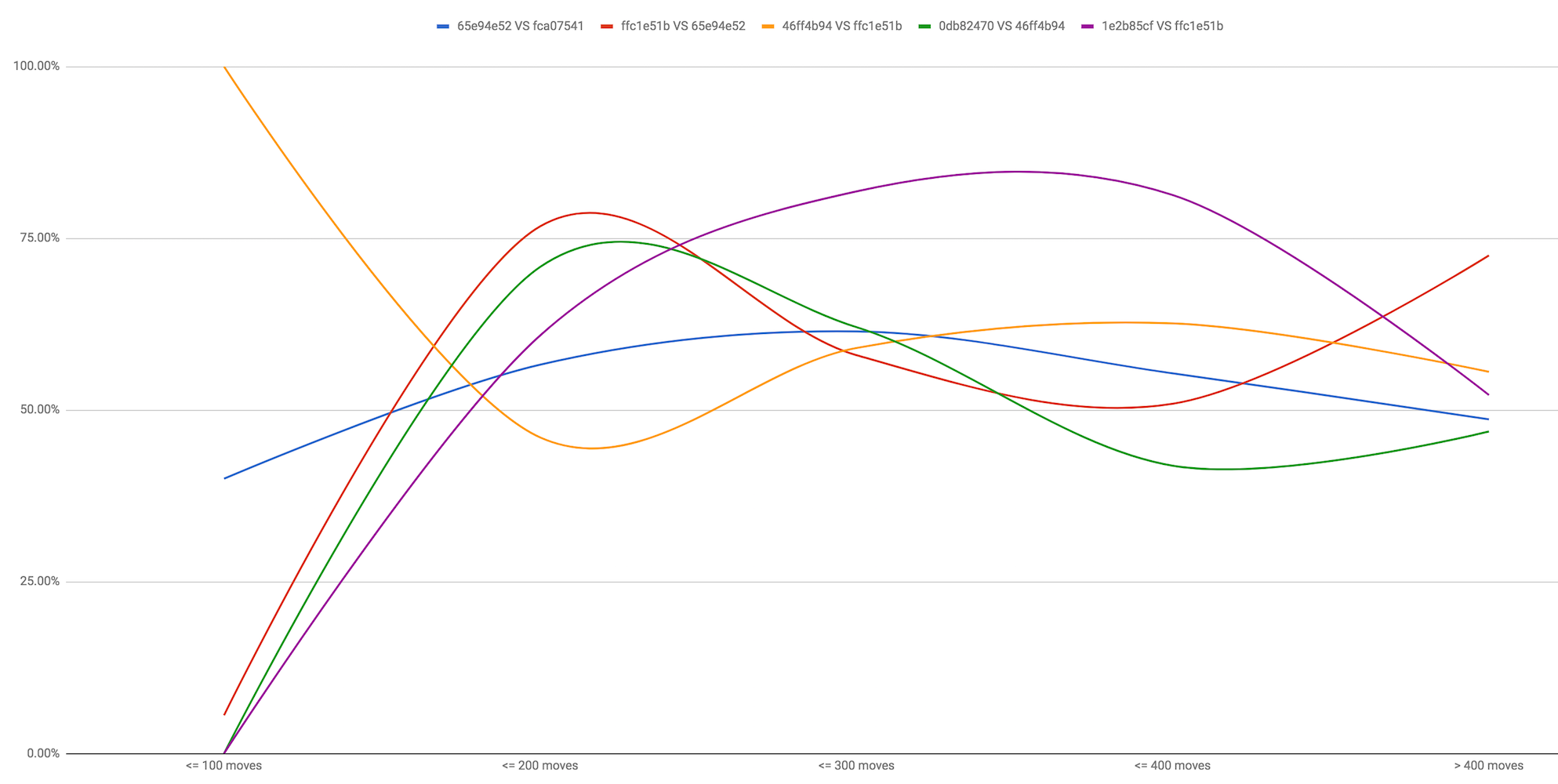

Those matches are added, they are a bit more down on the match table, but you can see them on the graph (will probably confuse a few onlookers).

Those matches are added, they are a bit more down on the match table, but you can see them on the graph (will probably confuse a few onlookers).

Oh, i just found them based on the date. I never realized that list wasn't sorted on date, but on network training games + steps.

So basically a 6x128 already wins against a 5x64.

1e2b85cf611d5ede3f8d77ddc56a7bd79a7f1e51a647ddea428b92c00fdf2612 is the supervised one

5e1014c1d19b03ea7188310711b37bbf50421d777d0f6f5cd6d20986acb7c34c is 6x128 on 5x64 data

1e wins convincingly

5e is getting crushed

Looks like beating a 5 times bigger supervised network will be possible with 5x64 (and that's ignoring the speed difference).

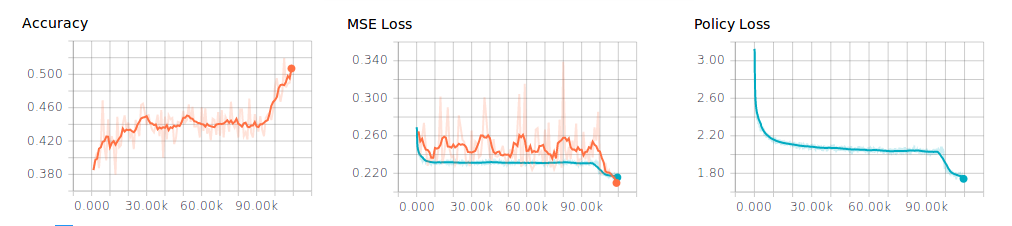

But the odds for bootstrapping are a bit worse. From the training I see it has a comparable policy loss, but a much lower MSE loss, than the smaller network. Interesting that this by itself does not translate in strength.

Remember the current 5x64 network is handpicked out of 2 dozen networks. If you try to bootstrap the 6x128 network like that, with different learning steps etc, one might get lucky and improve on 5x64. 20% win vs ffc1e51b is terrible of course, but the 5x64 2.13M+64k network from before had a similar score.

What does exactly "the supervised one" mean?

@gcp - perhaps rerun the test but with a lower resign threshold. It might be predicting that against itself it is in a lost position and resigning games it can win. Or better, download the games and complete them with a lower resign threshold.

Slightly surprised at the poor performance of the 6x128 bootstrapped network, but as Dorus says the 5x64 networks has gone through the process of being successively handpicked. The 6x128 may need a round of self-play to discover its weaknesses.

The low MSE score is very promising.

@evanroberts85 Could we simulate the successive handpick for the 6x128 bootstrap?

I wasn't even talking about self play yet, just try a number of extra training steps on the currently available games might be enough. If you use the games from 46ff i would actually be surprised if this did not result in a stronger net.

However i also have the theory that a larger net, that will be able to run less playouts, will have it very difficult to improve on the current dataset. The problem is that a larger net can mostly improve because ti can learn to see things the current net can not (things future away or things that require more filters), but because the current games are from the current net that is blind to those weaknesses, the self play games do not contain much information about these weaknesses. A couple rounds of self play might improve this, but it might also be required to start all the way from scratch.

I'm still wonderinf if the messed up first 900k games are still leaking trough weaknesses in the current net, with failure to capture large groups, incorrect passes and self atari.

The better value output alone should be enough to see an improvement, but as gcp said, that does not seem to always translate. We are going to need a few more tests after extra training to see how much randomness there is in this translation.

I just set 6x128 supervised net 1e2b85cf against 6 dan HiraBot43 on KGS as LeelaZeroT

t might be predicting that against itself it is in a lost position

If it is better, why is it getting into lost positions in the first place? Seems pointless to try that.

Could we simulate the successive handpick for the 6x128 bootstrap?

Yes, that's no problem.

@gcp I'm not quite clear - is 5e101 trained only on the current window of 5x64 games (which are mostly generated by 46ff), or has it seen windows from the very beginning of the 5x64 run? Or some mix?

Just seen the 6x128 network was trained using 1m steps! Is this then using the full game window (since the major bug-fix). If this is the full window, is the plan to take this network and then train it on just the last 250k games?

I have already answered this in the beginning of this thread.

@alreadydone FWIW I fired an email to DeepMind asking if they were willing to clarify this issue.

@gcp I believe you said that the you would be gradually moving it up to the present day, reducing the window as you go along. Is this the final part of that process, in which case why 1m steps?

The number of steps is simply how long the training ran in total. There is no "best" network to restart from on a new set of training data, so it will just keep incrementing.

Well that is only more confusing. While there is no "best network" you can save the network and restart training using that network to initialise but using the smaller window. This is what I had assumed you would do from what you said before, or simply change the window mid-training if that is possible. Do you mean you just used the one window for the whole for the total of training?

"No, I started with a huge window and have been narrowing it to 250k."

I guess you just narrowed it mid-training, ok I understand now. Does that also mean the training rate was also adjusted mid training?

Just looked over a sampling of the games it lost, and it is clearly getting out played. Also most of the games won by 5e1014c1 are when ffc1e51b would get laddered early in the game. Also looking at the games versus supervised network, about 1/3 of lost games were to an early ladder.

you can save the network and restart training using that network to initialise but using the smaller window

This doesn't reset the training steps.

This [saving then initialising from that saved network with new

parameters] doesn't reset the training steps.

Ok, that is different behaviour to the number of training steps reported

when you have initialised from a "best network" on the current 5x64 run,

hence my confusion.

>

The current training iteration will run with about 146k games from the best network. If this does not produce a new best, the learning rate will be lowered.

@gcp Do you think that, after switching to the 6x128 network, if you keep training the 5x64 network with the games of the new one will keep improving?

Just FYI the current version 46ff4b is about 100 Elo stronger on the CGOS leaderboard than the previous version (the score isn't finalized but is going up and only has a few more games before it passes the threshold for a verified rating)

http://www.yss-aya.com/cgos/19x19/standings.html

http://www.yss-aya.com/cgos/19x19/cross/LZ-46ff4b-t1-p1600.html

Tbh the huge jump by 46ff seems to be a reason for me to stay on this learning rate for slightly longer. Idk what is lost by decreasing it, but the last few networks show no sign of stagnation yet, so far it is a stable upwards line (mostly because 46ff scored almost 60% instead of 55%)

Is 1e2b85cf the same as best_v1? If not what's the difference?

Is 1e2b85cf the same as best_v1? If not what's the difference?

Do a sha256sum on best_v1 and find out!

(It is)

Do you think that, after switching to the 6x128 network, if you keep training the 5x64 network with the games of the new one will keep improving?

I don't know. I guess that might be possible.

Tbh the huge jump by 46ff seems to be a reason for me to stay on this learning rate for slightly longer.

If the training window consists (near-)entirely of games from the last best network, and this does not produce an improvement, there is no point in continuing as is. Playing more games will change nothing.

Idk what is lost by decreasing it

The (large scale) rate of improvement slows down after an initial small jump.

The Elo difference between the supervised and previous best network based on the match (+195) is quite a bit smaller than the Elo difference from CGOS leaderboard Bayes Elo (2663, 2316 = +343).

its the same with 46ff, it has less than 70 elo over previous network and 100 elo in CGOS, previous elo differences have been inflated so this is even more noticeable, i guess match elo is deflated now, but we still dont have the bayers elo for 46ff so maybe its too early to say.

Remember that both 46ff and the supervised network on CGOS only played the order of 100 games each, so that's quite a small sample size especially since it's against many different opponents.

What are the current benchmarks for people on the winograd branch about 128x6 vs 64x5 seconds per move?

from now, is it just generating the 128x6 networks?

from now, is it just generating the 128x6 networks?

No, nothing has changed.

We have just surpassed 2/3 of the window.

If the 256k step network of the current (2.32M) training cycle somehow passes, we'll probably stay at 0.001. Otherwise, as @gcp announced earlier, the learning rate will be lowered starting with the next training cycle.

@jkiliani Do you mean that the learning rate has not already lowered yet?

No, that is what @gcp said in his last post. Also I think it's pretty clear from the strength fluctuation that learning rate is not changed yet.

@Dorus

I never realized that list wasn't sorted on date, but on network training games + steps.

It's actually sorted on opponent network creation date first, then sorted by age of the network itself. This was requested of me when I started queuing the old networks for matches when the match system was new, so they'd all sort more properly.

We could also try

1) more rollouts during training - even 200 more rollouts might give it enough additional information to train a stronger network

2) fewer rollouts during match tests - MCTS makes up for weaknesses in the NN evaluation function, perhaps it will be easier to see improvements with fewer rollouts.

3) Sample more from completed games or different parts of the game - this might help it address specific weaknesses that it might be having trouble learning.

more rollouts during training - even 200 more rollouts might give it enough additional information to train a stronger network

Did you mean more playouts?

Just to be clear - "rollouts" refers to the random simulations to the end of the game from leaf nodes in UCT style MCTS. Those were removed in AGZ and so in LZ as well. There are no more random rollouts at all.

If you meant playouts, those are essentially deterministic node expansions in the UCT during matches and training.

Well, more COULD help. Or they might not. Deepmind actually reached a far better and faster result after using less playouts in AlphaZero for example.

What it would do for sure is slow it down considerably which, if it's not guaranteed to help, will just hurt the project.

These things need to be tested before implementation to at least have an idea of what you expect to work better.

I did mean playouts - 200 more is 10% longer.

@killerducky commented a while ago on the training procedure here, specifically about whether restarting from the previous best network at the start of every training cycle is actually better than continuing directly from the last window. Now that learning rate is lowered, maybe this could be reconsidered? The switch from 0.01 to 0.001 was at the same time https://github.com/gcp/leela-zero/commit/6b018413df0e0d11232eba44e5b6f0e423f62f7d improved the training speed a lot and allowed much more steps per training run, so we may not have seen any problems from backing up back then.

Maybe backing up to best-network only every third or forth training run would still serve the purpose of preventing overfitting, while allowing more training steps to accumulate if needed to break out of a local optimum.

If you meant playouts, those are essentially deterministic node expansions in the UCT during matches and training.

Well, more COULD help. Or they might not. Deepmind actually reached a far better and faster result after using less playouts in AlphaZero for example.

Earlier I also voted for more playouts as this seems the easiest way for increasing search strength. I believe LZ currently squeezes less knowledge/gain out from each selfplay game, which are of lower quality than AGZ. But the actual problem may be in the search code.

Before investing a year of computation into the new run, it may worth to do some structured testing, such as running 100k-100k selfplay games from an earlier net (way before stall) with different number of sims, also probably with different search (exploration) tweaks, and see the gains in training. Some objective comparison to AGZ progress per game may also be in order.

@tapsica "Before investing a year of computation into the new run"

But is it possible to piggyback on some 5x64 stuff? Or isn't it?

The 6x128 unsupervised net runs as LeelaZeroT right now on KGS, and is around 1 kyu versus other bots. Can one start from there?

@gcp I believe the learning rate got dropped for the last 6 networks? No progress so far (although we probably need another 12-24h to be sure), but could you consider to increase the learning window back to say 500k before switching to a new strategy all together. The last 250k games are all created by the same net, and that might hurt the learning too.

@Dorus "The last 250k games are all created by the same net, and that might hurt the learning too."

Can other nets be used instead? The ones that were close?

But those other nets did not generate games. Also there is no reason to believe new games generated by other weaker nets will have better results than just using games from a previous net we already generated games with.

Beside, using games we already have anyway is something we can test very easily, generating new games first takes a lot of project resources.

@Dorus "there is no reason to believe new games generated by other weaker nets will have better results"

Those which were close. do not have to be weaker, Or not much. Can they provide some diversity?

Right now we are wasting resources since new games are the same strength as games in the maximum window. So we should probably make some change - such as more playouts, etc.

I agree that the current stall is another opportunity to get an idea of the benefits of more playouts - like doubling or tripling for a few days. But even if this won't help now, it can still be more optimal for an unpeaked state, so further testing may still be necessary.

As you can see lowering the learning rate did not produce an improvement. There is a possibility that using 3200 playouts could still produce a tiny jump, but IMHO the odds are strongly against it as the problem seems simply that the network is at capacity, not that more playouts are needed to make deeper discoveries about Go (and note the AZ result with 800!).

At this point I think it's best to just terminate the 5x64 run (EDIT: OK I GUESS NOT!). More games are unlikely to help much, as we're close to 500k games on 2 networks very close to the optimal strength. Further experiments can be run on the existing dataset, we don't need to continue generating games from the same network for that.

I'll ask @roy7 to make the get-task endpoint 404, at which point the clients should stop and gradually back off, checking every now and then if there's something to do (we may have some test matches for you). Or you can just close them. There'll probably be some news in a week, or two.

Bootstrapping 6x128 was (very!) unsuccessful so far. From eyeballing the results (and experience with supervised learning), the value network on those is overfit. It's possible to control this, but training new networks is going to take a week or so in any case. Just forcing the clients to play games with any of those (which are pretty much known to be in a bad state) seems a waste of resources. I'd rather we do testing and see if we can produce a better network from the data we have. We can still start a new run from that instead of 0.

I'll use the break to make the (incompatible) changes that were intended (new weights format, add resigning statistics to training data), we can probably merge Winograd (~x2 speedup for 6x128) during that time, and I get some time to upload all the data so people can run experiments rather than make random theories.

Meanwhile, the server source was published:

https://github.com/gcp/leela-zero-server

According to AGZ paper's learning rate schedule, the 0.0001 learning rate

should run with 600k+ steps, but I'm still seeing 8/16/32/64/128/256k steps

as before. What am I misreading?

On Sun, Jan 14, 2018 at 4:39 PM, Gian-Carlo Pascutto <

[email protected]> wrote:

As you can see lowering the learning rate did not produce an improvement.

There is a possibility that using 3200 playouts could still produce a tiny

jump, but IMHO the odds are strongly against it as the problem seems simply

that the network is at capacity, not that more playouts are needed to make

deeper discoveries about Go (and note the AZ result with 800!).At this point I think it's best to just terminate the 5x64 run. More games

are unlikely to help much, as we're close to 500k games on 2 networks very

close to the optimal strength. Further experiments can be run on the

existing dataset, we don't need to continue generating games from the same

network for that.I'll ask @roy7 https://github.com/roy7 to make the get-task endpoint

404, at which point the clients should stop and gradually back off,

checking every now and then if there's something to do (we may have some

test matches for you). Or you can just close them. There'll probably be

some news in a week, or two.Bootstrapping 6x128 was (very!) unsuccessful so far. From eyeballing the

results (and experience with supervised learning), the value network on

those is overfit. It's possible to control this, but training new networks

is going to take a week or so in any case. Just forcing the clients to play

games with any of those (which are pretty much known to be in a bad state)

seems a waste of resources. I'd rather we do testing and see if we can

produce a better network from the data we have. We can still start a new

run from that instead of 0.I'll use the break to make the (incompatible) changes that were intended

(new weights format, add resigning statistics to training data), we can

probably merge Winograd (~x2 speedup for 6x128) during that time, and I get

some time to upload all the data so people can run experiments rather than

make random theories.Meanwhile, the server source was published:

https://github.com/gcp/leela-zero-server—

You are receiving this because you are subscribed to this thread.

Reply to this email directly, view it on GitHub

https://github.com/gcp/leela-zero/issues/591#issuecomment-357496732, or mute

the thread

https://github.com/notifications/unsubscribe-auth/ABHWLr3172yU3-tFIaK4vH7oij6rpiriks5tKb0qgaJpZM4RVxWY

.

Our steps reset on each best new network. Their numbers are total training steps, and that on another batch size and orders of magnitude bigger network. The numbers aren't comparable at all, and not useful.

Maybe backing up to best-network only every third or forth training run would still serve the purpose of preventing overfitting, while allowing more training steps to accumulate if needed to break out of a local optimum.

I don't see any movement in the training one way or another (but weaker networks do come out), so I don't consider it worthwhile. (This would be different if loss gradually decreased, obviously)

You can try this if the entire dataset is uploaded.

I suppose now that it's decided that 46ff4b94 is the best 5x64 for this run, would it be okay to keep clients involved / connected by running self play with various other networks? Potentially some of the recently failed 5x64 or 6x128 -- this is somewhat like the AZ approach without evaluation. (?)

People can choose to self terminate or participate in an experiment that doesn't require client changes.

Tradeoffs being people see the break as a time to leave and potentially not come back. Or running selfplay on failed networks is a waste of resources and not wanting to contribute any more. Or… ?

I knew that as soon as I made that post this would happen. (0db82470 had an SPRT pass)

Y'all keep your clients running for now will ya?

Looking at 0db82470 (currently 47 : 28), it seems that one strategy for dealing with stalls is to publicly call a halt to the run - this should immediately cause a new best network to be created.

Edit:

Too late XD

Edit2:

The ELO of this one can't be too far off from 1e2b, which is very interesting.

Potentially some of the recently failed 5x64 or 6x128 -- this is somewhat like the AZ approach without evaluation. (?)

This would be reasonable but it requires server side fiddling I guess.

I consider the current 6x128 to be a be a dead loss. They're so much weaker it has to be possible to make better ones by adjusting the training (lowering the MSE weighting seems like a good bet).

”I knew it that as soon as I made that post this would happen.

Y'all keep your clients running for now will ya?”

Hahaha, you need to make another post again when we are not making any progress for a few days next time. ;-)

I agree it is best to stop self-play while we figure out how to improve

things for the next run.

On Sun, 14 Jan 2018 at 09:21, pcengine notifications@github.com wrote:

”I knew it that as soon as I made that post this would happen.

Y'all keep your clients running for now will ya?”Hahaha, you need to make another post again when we are not making any

progress for a few days next time. ;-)—

You are receiving this because you were mentioned.

Reply to this email directly, view it on GitHub

https://github.com/gcp/leela-zero/issues/591#issuecomment-357498606, or mute

the thread

https://github.com/notifications/unsubscribe-auth/AgPB1n0V-S6Jx36vQbzGq39rwrmV6Quaks5tKccrgaJpZM4RVxWY

.

The current network only made 475 games so far. It's too early to call this run completed.

@gcp I could add an now option into autogtp (for the /next branch) so that if the server deliver a command wait with the json, each thread in autogtp would wait for x minutes and then check again. Would that help?

The only think is that I check the code and the autogtp in the master will exit with a wait command.

@gcp since we have bit of time now (we have anew best network) I added the wait command for autogtp and I will test it.

@roy7

the command look like this:

{

"cmd" : "wait",

"minutes" : "5",

}

If you could set up an URL where you loop 5 wait commands and 1 selfplay commands you would help me a lot in the test.

Why "each thread"? Would it be possible to set some global wait state and only have 1 thread responsible for checking again?

There is a possibility that using 3200 playouts could still produce a tiny jump, but IMHO the odds are strongly against it as the problem seems simply that the network is at capacity, not that more playouts are needed to make deeper discoveries about Go (and note the AZ result with 800!).

I still think experiments (4800) are better than assumptions, especially when they are free since the swarm does no other useful things. And I think there can be SIGNIFICANT differences between LZ and A(G)Z in the search code, so if the playout optimum happens to differ that is one more thing to look for.

EDIT: Another idea to experiment with would be to try 5x128 (I guess hacking this is possible to start with the old weights directly, with new weights at near-zero random) - another drop of information for the future.

What is the risk in freezing 5x64 and letting 6x128 to grow for couple weeks, using contributors GPUs?

If the problem with 5x64 is too small size, why not let bigger net take over, even if slower? As it is 5x64 growth is quite slow anyway.

I don't believe that 6x128 will be much different that 5x64. I'd rather believe that 10x128 will surpass the ama level.

It is almost certain that 10 blocks would be significantly stronger. The point is, we should use the current opportunity to gain as much information as possible, to make the most effective use of computation resources during a longer run.

Am I the only one who would like to continue on 5x64 for quite some time asuming that we get a new best network every 250k-500k games ?

I'd also continue with 5x64. We need to see the limit. It's not at it's max right now.

Glad to see a new best network when I got up. BRII is down for maintenance at this moment though.

If bootstrapping ends up working after all there's nothing wrong with 6x128. If we have to restart, I would definitely hope we go for 10x128, so the improvement in plateau isn't just marginal.

@Dorus Each thread works independently from the other, so if one finishes and receive the wait command, the others may still have to finished their work, so they cannot be stopped. To synch them all with a sigle flag, the code have to be change a lot and more test have to be done. This is the easier and faster way to do the waiting. But maybe you have a good reason to make it global?

@marcocalignano Send get-task/1 and you'll get a wait 80% of the time.

While you are at it, get-task/client-major-version-# is the intended use of that if you want to update autogtp to send proper client version # to the server? Just the major version, it won't read periods/letters/etc.

The idea was we could add support for a feature in a newer client while not sending those commands to older clients, like if old clients were asking for get-task/9 and newer clients on /ext asking for get-task/10.