Kubespray: Dashboard error issue

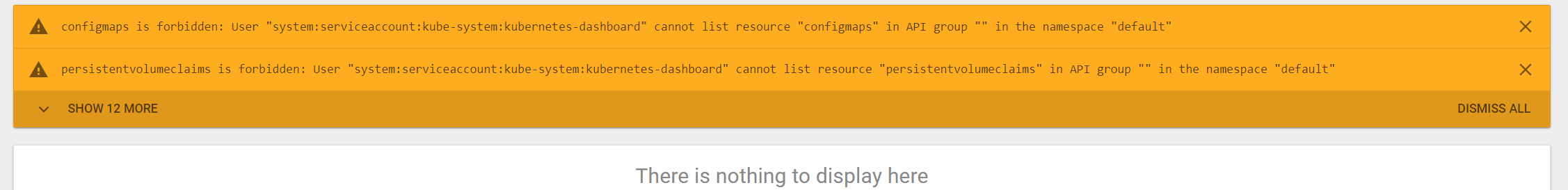

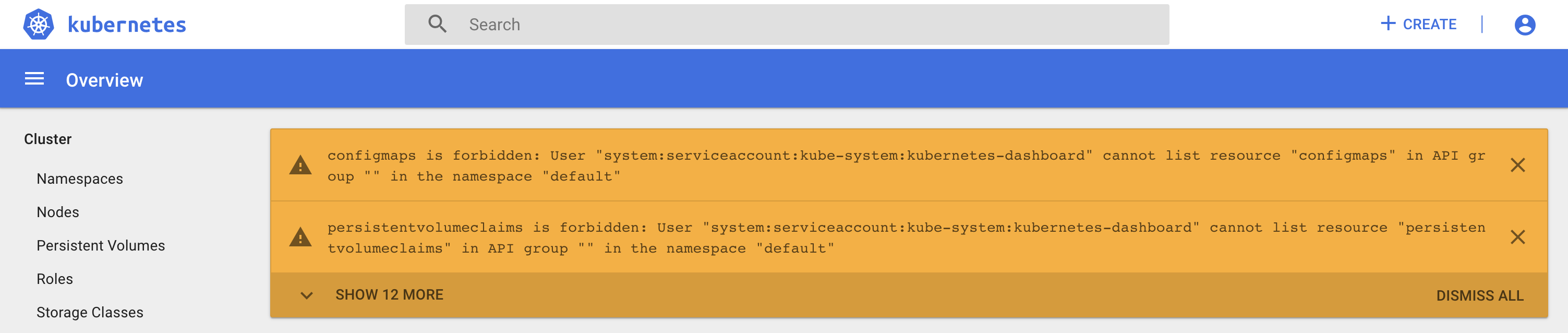

When I log in with a token, it returns errors:

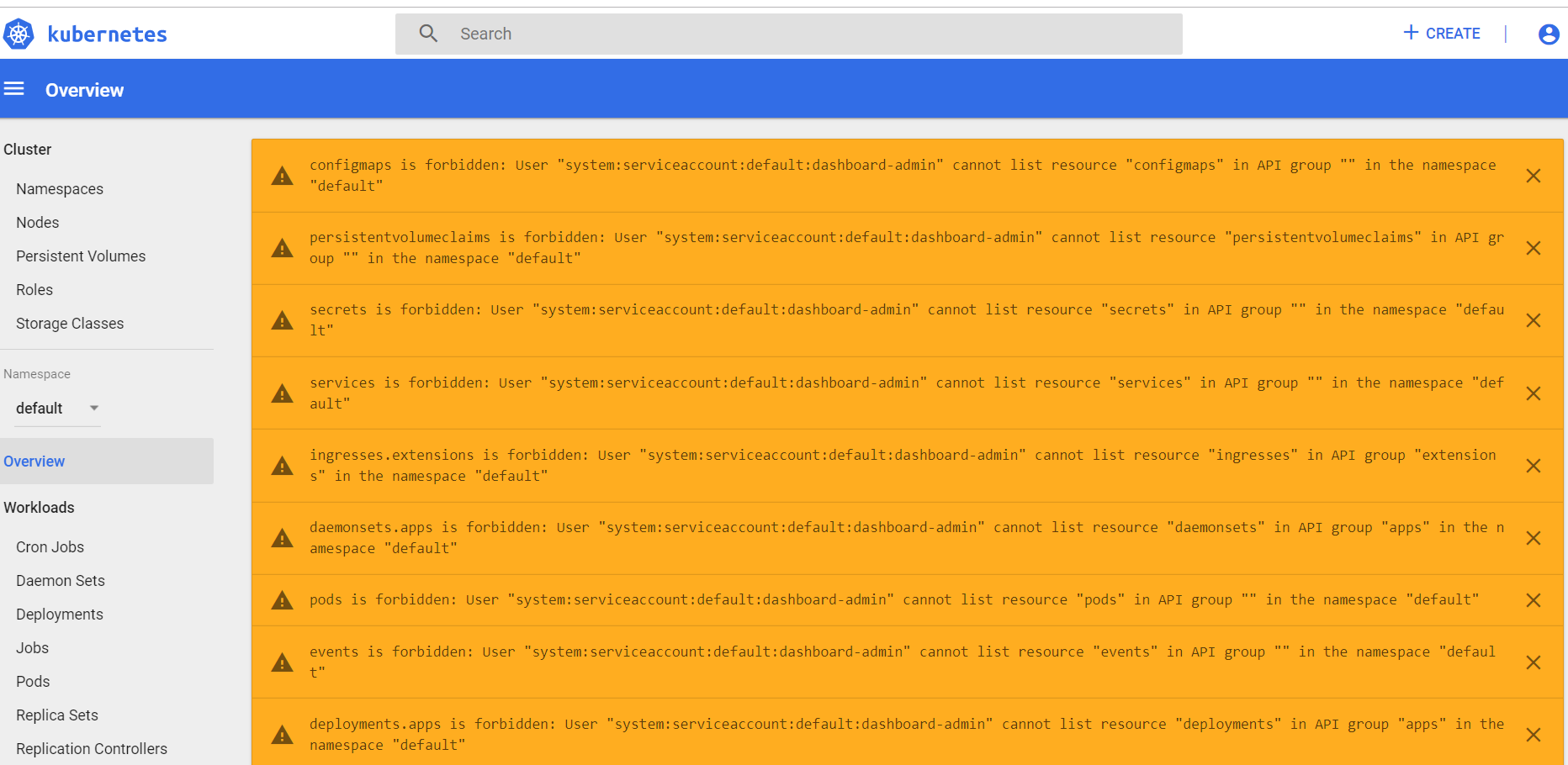

configmaps is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "configmaps" in API group "" in the namespace "default"

persistentvolumeclaims is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "persistentvolumeclaims" in API group "" in the namespace "default"

secrets is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "secrets" in API group "" in the namespace "default"

services is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "services" in API group "" in the namespace "default"

ingresses.extensions is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "ingresses" in API group "extensions" in the namespace "default"

daemonsets.apps is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "daemonsets" in API group "apps" in the namespace "default"

pods is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "pods" in API group "" in the namespace "default"

events is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "events" in API group "" in the namespace "default"

deployments.apps is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "deployments" in API group "apps" in the namespace "default"

replicasets.apps is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "replicasets" in API group "apps" in the namespace "default"

jobs.batch is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "jobs" in API group "batch" in the namespace "default"

cronjobs.batch is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "cronjobs" in API group "batch" in the namespace "default"

replicationcontrollers is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "replicationcontrollers" in API group "" in the namespace "default"

statefulsets.apps is forbidden: User "system:serviceaccount:kube-system:kubernetes-dashboard" cannot list resource "statefulsets" in API group "apps" in the namespace "default"
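Each message above is an RBAC denial for the dashboard's own service account, system:serviceaccount:kube-system:kubernetes-dashboard, in the default namespace. The same checks can be reproduced from the command line with `kubectl auth can-i`. The following is a minimal sketch, not an official procedure: the function name `check_sa_list_access` is hypothetical, and the `--as` impersonation flag only works for a user who is allowed to impersonate service accounts.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report whether a service account may "list" each
# given resource in a target namespace.
# Usage: check_sa_list_access <sa-namespace> <sa-name> <target-namespace> <resource>...
check_sa_list_access() {
  local sa_ns="$1" sa_name="$2" target_ns="$3"
  shift 3
  local user="system:serviceaccount:${sa_ns}:${sa_name}"
  local resource answer
  for resource in "$@"; do
    # `kubectl auth can-i` prints "yes" or "no" and exits non-zero on "no",
    # so `|| true` keeps the loop going.
    answer=$(kubectl auth can-i list "$resource" --as="$user" -n "$target_ns" 2>/dev/null || true)
    printf '%-28s %s\n' "$resource" "${answer:-unknown}"
  done
}

# Example (needs a reachable cluster):
# check_sa_list_access kube-system kubernetes-dashboard default pods secrets deployments.apps
```

If every resource comes back "no", the service account has no usable role binding; if they come back "yes" and the dashboard still fails, the problem is likely elsewhere.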

How can this be solved by modifying roles/kubernetes-apps/ansible/templates/dashboard.yml.j2?

All 15 comments

Also have the same issue.

Using RBAC and already created bindings

kubectl create clusterrolebinding kubernetes-dashboard --clusterrole=cluster-admin --serviceaccount=kube-system:kubernetes-dashboard

which token do you use (token of which serviceaccount)? Please provide your kubectl describe secret... command

hi,

I'm having the same issue after trying to deploy a k8s cluster with Kubespray

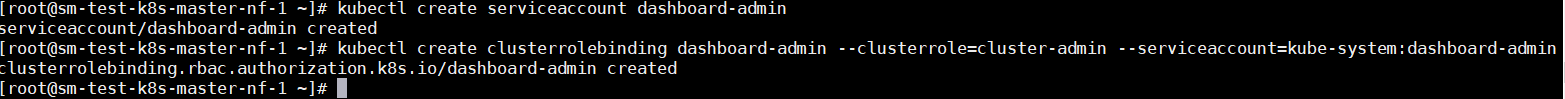

created service account:

kubectl create serviceaccount dashboard-admin

bind the new serviceaccount to the cluster-admin role:

kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

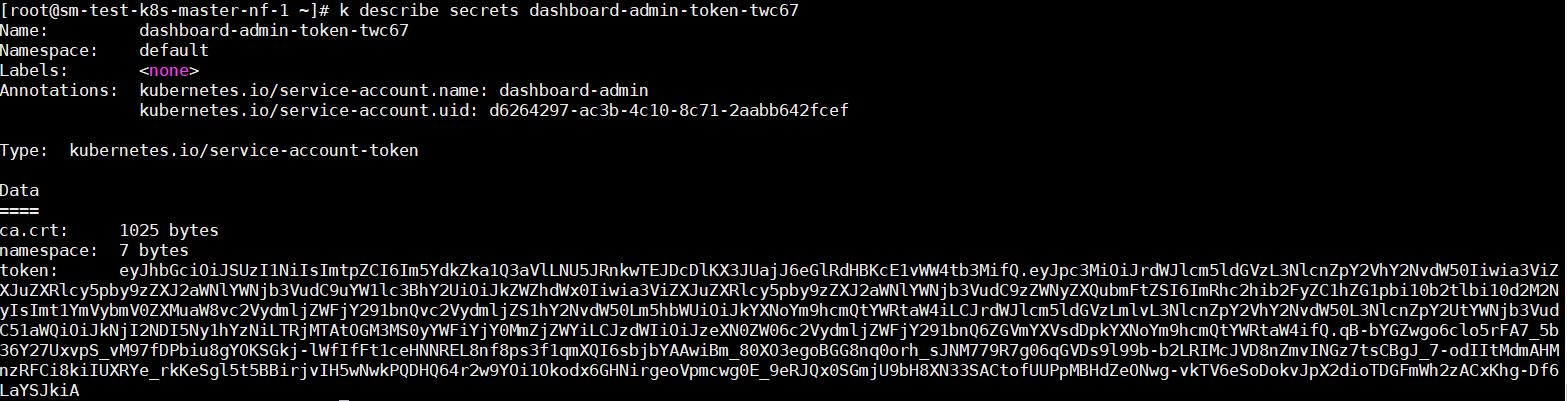

got the secret of dashboard-admin token:

trying to log in fails with:

Creating more service accounts in different namespaces, including kube-system and default, and binding them to the cluster-admin role did not help either.

any help will be most appreciated
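One detail in the commands above may be worth double-checking, although it may not be the cause here: `kubectl create serviceaccount dashboard-admin` has no `-n` flag, so the account is created in the current context's namespace (usually default), while the binding refers to kube-system:dashboard-admin. A minimal sketch that keeps both in the same namespace is shown below; the function name `create_bound_sa` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create a service account and bind it to a ClusterRole,
# using the same namespace in both commands.
# Usage: create_bound_sa <namespace> <name> <clusterrole>
create_bound_sa() {
  local ns="$1" name="$2" role="$3"
  # Create the account explicitly in the chosen namespace.
  kubectl -n "$ns" create serviceaccount "$name"
  # The binding subject is written as <namespace>:<name> and must match.
  kubectl create clusterrolebinding "$name" \
    --clusterrole="$role" \
    --serviceaccount="${ns}:${name}"
}

# Example (needs a reachable cluster):
# create_bound_sa kube-system dashboard-admin cluster-admin
```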

Also getting this issue, and the common fix of creating the clusterrolebinding and namespaces does not work for me either.

Also have the same issue.

Using RBAC and already created bindings

kubectl create clusterrolebinding kubernetes-dashboard --clusterrole=cluster-admin --serviceaccount=kube-system:kubernetes-dashboard

The 404 error is reported after running this command successfully.

I am seeing this issue as well. I used these commands to retrieve the tokens:

kubectl -n kube-system describe secrets/kubernetes-dashboard-token-vvdll

and

kubectl describe secrets/default-token-7nzb5
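`kubectl describe secret` prints the token already decoded, mixed in with other fields. As an alternative sketch, the token alone can be read from the secret's data with `-o jsonpath`, where it is stored base64-encoded. The function name `sa_token_from_secret` is hypothetical, and the secret name in the example is the one from the command above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print only the bearer token stored in a
# service-account token secret.
# Usage: sa_token_from_secret <namespace> <secret-name>
sa_token_from_secret() {
  local ns="$1" secret="$2"
  # .data.token is base64-encoded when read with `get`, so decode it.
  kubectl -n "$ns" get secret "$secret" -o jsonpath='{.data.token}' | base64 --decode
}

# Example (needs a reachable cluster):
# sa_token_from_secret kube-system kubernetes-dashboard-token-vvdll
```

This avoids copying stray spaces or line breaks when pasting the token into the login form.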

Running:

Kubespray tag v2.12.1

Ubuntu 18.04.4

Kubernetes: 1.16.7 and also seen when kubespray downgrades the cluster to 1.15.7 or 1.15.6

I didn't see this issue when I installed on:

Ubuntu: 16.04

Kubernetes: 1.15.6

Having this issue too. I also get a 404 after running kubectl create clusterrolebinding kubernetes-dashboard --clusterrole=cluster-admin --serviceaccount=kube-system:kubernetes-dashboard and trying to log in. Workarounds like clicking "+Create" or any of the tabs before getting redirected don't work, either.

same issue for me too

I tried both these with no luck

Having this issue too. I also get a 404 after running

kubectl create clusterrolebinding kubernetes-dashboard --clusterrole=cluster-admin --serviceaccount=kube-system:kubernetes-dashboard

and trying to log in. Workarounds like clicking "+Create" or any of the tabs before getting redirected don't work, either.

kubectl create clusterrolebinding kubernetes-dashboard --clusterrole=cluster-admin --serviceaccount=kube-system:kubernetes-dashboard

This command worked great, then in VSCode when I right clicked and said Open Dashboard the next time no more errors! Using an Azure AKS cluster.

For those of us with the endless 404 loop after running:

kubectl create clusterrolebinding kubernetes-dashboard --clusterrole=cluster-admin --serviceaccount=kube-system:kubernetes-dashboard

I think the 404 loop is a known issue with the Kubernetes dashboard on Kubernetes v1.16.

Here is a workaround:

Install a cluster with kubespray branch release-2.12 and kubernetes v1.16.7.

In addons.yml, set dashboard_enabled: false. (In my case it was already set to true, so I manually deleted the service and deployments for kubernetes-dashboard in the kube-system namespace. Setting dashboard_enabled: false and rerunning the Ansible playbook does not delete the dashboard.)

Install a newer version of the dashboard that is known to work with 1.16. Note that the newer 2.0 dashboard versions install into a kubernetes-dashboard namespace.

kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-rc3/aio/deploy/recommended.yaml

Fix the dashboard role (this is not ideal because it gives your dashboard account cluster admin, and that is dangerous)

kubectl create clusterrolebinding kubernetes-dashboard --clusterrole=cluster-admin --serviceaccount=kubernetes-dashboard:kubernetes-dashboard

Get the dashboard token

namespace=kubernetes-dashboard

token_name=kubernetes-dashboard

token=$(kubectl -n "${namespace}" get secret | grep "${token_name}" | cut -d " " -f1)

kubectl -n "${namespace}" describe secret $token

Create a dashboard proxy

kubectl port-forward -n kubernetes-dashboard service/kubernetes-dashboard 443:443 --address 0.0.0.0

Visit https://<master node ip>:443 and enter the token. Make sure to copy and paste the whole token, without any spaces.
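Since the workaround above notes that cluster-admin is dangerous for the dashboard account, a narrower option may be to bind it to the built-in read-only `view` ClusterRole instead. This is only a sketch under assumptions: the function name `bind_dashboard_read_only` and the binding name suffix `-view` are hypothetical, and because `view` does not grant access to secrets, the dashboard will still report forbidden errors for them.

```shell
#!/usr/bin/env bash
# Hypothetical helper: give the dashboard service account read-only access
# through the built-in "view" ClusterRole instead of cluster-admin.
# Usage: bind_dashboard_read_only [namespace] [service-account]
bind_dashboard_read_only() {
  local ns="${1:-kubernetes-dashboard}" sa="${2:-kubernetes-dashboard}"
  # "<sa>-view" is an arbitrary binding name chosen for this example.
  kubectl create clusterrolebinding "${sa}-view" \
    --clusterrole=view \
    --serviceaccount="${ns}:${sa}"
}

# Example (needs a reachable cluster):
# bind_dashboard_read_only kubernetes-dashboard kubernetes-dashboard
```

With this binding the dashboard can browse most namespaced workloads but cannot modify them.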

Same issue. The old version of the dashboard that is included with Kubespray as of 31 March 2020 is not compatible with the Kubernetes version, according to the dashboard version matrix.

Yes, I agree with @jakobjs; the problem is still there. How do we fix it? Should we use Helm to install the latest kubernetes-dashboard?

5821 should fix this issue. You can already try it if needed, but it will ship with 2.13.

/close

@floryut: Closing this issue.

