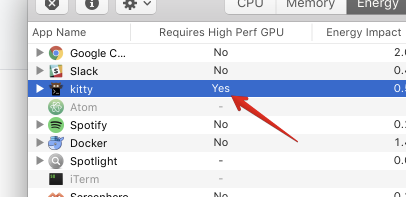

Kitty: Kitty requires "High Perf GPU" when it could probably get away with the low power GPU on a Mac

I suspect (but don't know for sure) that Kitty's rendering demands are modest enough not to require the high performance GPU on a Mac. Using the lower power GPU would be better for battery life.


It has nothing to do with performance. kitty uses OpenGL 3.3, and the "low perf GPU" on OS X probably does not support OpenGL 3.3. I'm afraid I am not interested in supporting older versions of OpenGL, as that would degrade actual performance and complicate the code.

Actually, it might be that there simply needs to be an entry in the Info.plist for using the low perf GPU, as per https://developer.apple.com/library/content/qa/qa1734/_index.html

Try adding it yourself and see if it works.

@kovidgoyal yes, I was about to say it's probably something to do with the plist but you beat me to it. I'll give it a try.

@kovidgoyal I tried changing the plist but that is insufficient. There needs to be a code change to make the app support multiple GPUs.

https://developer.apple.com/library/content/technotes/tn2229/_index.html

Note that this does not imply supporting older OpenGL libs. There just needs to be some Mac-specific code to opt in to multiple GPU support _and_ the change to the plist.
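For reference, the plist entry that Apple's QA1734 describes is the `NSSupportsAutomaticGraphicsSwitching` key. A minimal fragment, assuming it goes in the top-level dictionary of the app bundle's Info.plist (where kitty's build produces that file is not covered in this thread):

```xml
<key>NSSupportsAutomaticGraphicsSwitching</key>
<true/>
```

As noted above, this key on its own was not sufficient; the application code also has to opt in.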

It'd be really handy to have support for Macs with multiple graphics cards, as using the high performance card drastically reduces battery life (from around 5 hours to around 2 on my 2013 MBP).

Unfortunately, I don't actually have a Mac capable of running kitty, only building it (an ancient single GPU Mac mini). So it is hard for me to implement Mac-specific features. This kind of thing is going to require somebody with the appropriate hardware to write the code. I'll be happy to review/merge a PR.

Note that there are significant changes to the OpenGL code in the gr branch (which will eventually be merged into master). So if you (or anyone) are going to work on it, best to do so off the gr branch.

@kovidgoyal I'm not a Mac dev but I can probably give it a shot once the gr branch has been merged. That's unless someone with the skills picks it up first :)

@freshtonic I'm not a mac dev either :)

Just to note the gr branch is likely to be merged in the next few days, unless I come across a show stopper bug.

FYI gr branch is merged.

@kovidgoyal excellent. I'll roll up my sleeves and see if I can make this happen.

Cool, feel free to ask if you have questions about kitty's codebase.

If anyone needs assistance testing on this, I'm happy to help.

I took a quick look at this, and as best as I can tell, this patch should fix it. However, since I don't have the hardware, I have no way to test:

```diff
diff --git a/kitty/glfw.c b/kitty/glfw.c
index c573d74..1cf7f37 100644
--- a/kitty/glfw.c
+++ b/kitty/glfw.c
@@ -227,6 +227,7 @@ create_os_window(PyObject UNUSED *self, PyObject *args) {
     glfwWindowHint(GLFW_STENCIL_BITS, 0);
 #ifdef __APPLE__
     if (OPT(macos_hide_titlebar)) glfwWindowHint(GLFW_DECORATED, false);
+    glfwWindowHint(GLFW_COCOA_GRAPHICS_SWITCHING, true);
 #endif
     standard_cursor = glfwCreateStandardCursor(GLFW_IBEAM_CURSOR);
diff --git a/kitty/shaders.c b/kitty/shaders.c
index 0636ce6..535820f 100644
--- a/kitty/shaders.c
+++ b/kitty/shaders.c
@@ -114,6 +114,12 @@ layout_sprite_map() {
     if (!limits_updated) {
         glGetIntegerv(GL_MAX_TEXTURE_SIZE, &(sprite_map.max_texture_size));
         glGetIntegerv(GL_MAX_ARRAY_TEXTURE_LAYERS, &(sprite_map.max_array_texture_layers));
+#ifdef __APPLE__
+        // Since on Apple we could have multiple GPUs, with different capabilities,
+        // upper bound the values according to the data from http://developer.apple.com/graphicsimaging/opengl/capabilities/
+        sprite_map.max_texture_size = MIN(8192, sprite_map.max_texture_size);
+        sprite_map.max_array_texture_layers = MIN(512, sprite_map.max_array_texture_layers);
+#endif
         sprite_tracker_set_limits(sprite_map.max_texture_size, sprite_map.max_array_texture_layers);
         limits_updated = true;
     }
```
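The second hunk of the patch caps the limits reported by the driver so that they stay valid whichever GPU ends up active. That clamping step can be sketched in isolation as below. This is only an illustration: `TextureLimits`, `clamp_limits`, and the two cap constants are hypothetical names, not kitty's actual code, and the cap values 8192 and 512 are copied from the patch.

```c
/* Hypothetical stand-in for the two sprite_map fields touched by the patch. */
typedef struct {
    int max_texture_size;
    int max_array_texture_layers;
} TextureLimits;

#define MIN(a, b) ((a) < (b) ? (a) : (b))

/* Upper bounds taken from the patch; the names are illustrative only. */
enum { TEXTURE_SIZE_CAP = 8192, ARRAY_LAYERS_CAP = 512 };

/* Return the reported limits, each capped at the conservative bound, so the
 * result does not exceed what the less capable GPU is assumed to support. */
static TextureLimits clamp_limits(TextureLimits reported) {
    TextureLimits out;
    out.max_texture_size = MIN(TEXTURE_SIZE_CAP, reported.max_texture_size);
    out.max_array_texture_layers =
        MIN(ARRAY_LAYERS_CAP, reported.max_array_texture_layers);
    return out;
}
```

With this sketch, reported limits of 16384 and 2048 come back as 8192 and 512, while limits already under the caps pass through unchanged.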

@kovidgoyal I tested your patch and it did solve this issue! :tada:

Cool :) Can you run with it a little while to see if there are any problems, especially when switching between GPUs?

You have no idea how excited I am to see this. I'm going to test as well.

This seems to work in any scenario that I try it in:

- Power unplugged/plugged

- External display plugged/unplugged

- Starting on internal display, plugging external display, moving Kitty to external display (and vice versa)

Everything works as expected, and Kitty is using _way_ less power now.

Thanks so much for this!!

I've been using it for 5 hours now and everything looks great!

OK, thanks for the testing, I'll commit it.

@kovidgoyal @cweagans This is awesome! Thank you both :)
