Hi there,

First, many thanks for such a great project, I really like it :)

I'm trying to deploy the openstack-cloud-controller-manager on k3s, but the DaemonSet does not schedule any pods.

Version:

k3s version v1.18.3+k3s1 (96653e8d)

K3s arguments:

curl -sfL https://get.k3s.io | sh -s - server --disable-cloud-controller --no-deploy servicelb --kubelet-arg=\"cloud-provider=external\" --node-external-ip ${master1_public_ip}

Describe the bug

The openstack-cloud-controller daemon set does not deploy any pods in kube-system namespace

To Reproduce

- Create cloud.conf (according to the sample: https://raw.githubusercontent.com/kubernetes/cloud-provider-openstack/master/manifests/controller-manager/cloud-config)

- Create the secret

kubectl create secret -n kube-system generic cloud-config --from-file=cloud.conf

- Deploy the openstack-cloud-controller-manager (according to https://github.com/kubernetes/cloud-provider-openstack/blob/master/docs/using-openstack-cloud-controller-manager.md):

kubectl apply -f https://raw.githubusercontent.com/kubernetes/cloud-provider-openstack/master/cluster/addons/rbac/cloud-controller-manager-roles.yaml

kubectl apply -f https://raw.githubusercontent.com/kubernetes/cloud-provider-openstack/master/cluster/addons/rbac/cloud-controller-manager-role-bindings.yaml

kubectl apply -f https://raw.githubusercontent.com/kubernetes/cloud-provider-openstack/master/manifests/controller-manager/openstack-cloud-controller-manager-ds.yaml

Expected behavior

The openstack-cloud-controller DaemonSet should deploy the required pods in the kube-system namespace.

Actual behavior

The DaemonSet does not schedule any pods.

Additional context / logs

kubectl describe daemonset openstack-cloud-controller-manager -n kube-system

Name: openstack-cloud-controller-manager

Selector: k8s-app=openstack-cloud-controller-manager

Node-Selector: node-role.kubernetes.io/master=

Labels: k8s-app=openstack-cloud-controller-manager

Annotations: deprecated.daemonset.template.generation: 1

kubectl.kubernetes.io/last-applied-configuration:

{"apiVersion":"apps/v1","kind":"DaemonSet","metadata":{"annotations":{},"labels":{"k8s-app":"openstack-cloud-controller-manager"},"name":"...

Desired Number of Nodes Scheduled: 0

Current Number of Nodes Scheduled: 0

Number of Nodes Scheduled with Up-to-date Pods: 0

Number of Nodes Scheduled with Available Pods: 0

Number of Nodes Misscheduled: 0

Pods Status: 0 Running / 0 Waiting / 0 Succeeded / 0 Failed

Pod Template:

Labels: k8s-app=openstack-cloud-controller-manager

Service Account: cloud-controller-manager

Containers:

openstack-cloud-controller-manager:

Image: docker.io/k8scloudprovider/openstack-cloud-controller-manager:latest

Port:

Host Port:

Args:

/bin/openstack-cloud-controller-manager

--v=1

--cloud-config=$(CLOUD_CONFIG)

--cloud-provider=openstack

--use-service-account-credentials=true

--address=127.0.0.1

Requests:

cpu: 200m

Environment:

CLOUD_CONFIG: /etc/config/cloud.conf

Mounts:

/etc/config from cloud-config-volume (ro)

/etc/kubernetes/pki from k8s-certs (ro)

/etc/ssl/certs from ca-certs (ro)

Volumes:

k8s-certs:

Type: HostPath (bare host directory volume)

Path: /etc/kubernetes/pki

HostPathType: DirectoryOrCreate

ca-certs:

Type: HostPath (bare host directory volume)

Path: /etc/ssl/certs

HostPathType: DirectoryOrCreate

cloud-config-volume:

Type: Secret (a volume populated by a Secret)

SecretName: cloud-config

Optional: false

Events:

kubectl -n kube-system get pods

NAME READY STATUS RESTARTS AGE

metrics-server-7566d596c8-g4dpz 1/1 Running 0 42m

local-path-provisioner-6d59f47c7-k45n4 1/1 Running 1 42m

coredns-8655855d6-5rrrv 1/1 Running 0 42m

helm-install-traefik-mv2w4 0/1 CrashLoopBackOff 16 42m

Many thanks for your help :)

Best regards

Reto

All 3 comments

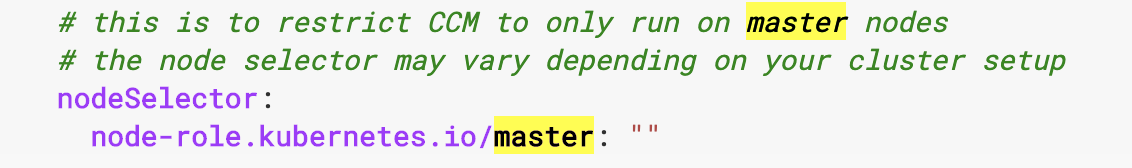

How many nodes do you have in your cluster? Are they servers or agents? Your pod spec has a node selector that would prevent it from scheduling on k3s master nodes, which is the only type you have if this is a single-node installation:

Node-Selector: node-role.kubernetes.io/master=

k3s server nodes are labeled node-role.kubernetes.io/master=true, which does not match the selector: it requires the label to be present with an empty value.
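To illustrate the mismatch, here is a minimal Python sketch of the exact-match rule that a nodeSelector applies: every key in the selector must exist on the node with an identical value. The function name `matches_node_selector` and the sample label dictionaries are hypothetical and only for illustration; this is not the scheduler's actual code. The kubeadm-style node with an empty label value is an assumption based on the kubeadm convention.

```python
from typing import Mapping


def matches_node_selector(node_labels: Mapping[str, str],
                          node_selector: Mapping[str, str]) -> bool:
    """Return True if every selector key is on the node with exactly the same value."""
    return all(node_labels.get(key) == value
               for key, value in node_selector.items())


# Hypothetical label sets: kubeadm-style masters carry an empty value,
# while k3s server nodes (per this thread) carry the value "true".
kubeadm_node = {"node-role.kubernetes.io/master": ""}
k3s_node = {"node-role.kubernetes.io/master": "true"}

# Selector as shown in the DaemonSet description (empty value).
manifest_selector = {"node-role.kubernetes.io/master": ""}
# Selector adjusted to the k3s label value.
fixed_selector = {"node-role.kubernetes.io/master": "true"}

print(matches_node_selector(kubeadm_node, manifest_selector))  # True
print(matches_node_selector(k3s_node, manifest_selector))      # False
print(matches_node_selector(k3s_node, fixed_selector))         # True
```

Because the comparison is a plain string equality, an empty value and "true" are simply different values, so the DaemonSet finds zero eligible nodes.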

Hi @brandond

Many thanks for your help and the hint :-)

I've changed the node-selector to:

nodeSelector:
  node-role.kubernetes.io/master: "true"

And now it works perfectly:

kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

openstack-cloud-controller-manager-4m9d6 1/1 Running 0 4m22s

local-path-provisioner-6d59f47c7-h9kbc 1/1 Running 0 4m23s

metrics-server-7566d596c8-zdws9 1/1 Running 0 4m23s

helm-install-traefik-824hj 0/1 Completed 0 4m24s

coredns-8655855d6-jz522 1/1 Running 0 4m23s

traefik-758cd5fc85-gmvms 1/1 Running 0 3m18s

Just one question: according to the documentation (https://kubernetes.io/docs/tasks/administer-cluster/running-cloud-controller/), the cloud-controller-manager pods should be scheduled on the master, and the node selector in the upstream manifest looks correct to me:

https://github.com/kubernetes/kubeadm/blob/master/docs/design/design_v1.9.md#mark-master

It just makes me wonder why k3s uses the value "true" for the node-role.kubernetes.io/master label?

Have a good weekend :)

Best regards

Reto
