Describe the bug

I'm not sure if it's a bug, but I don't think it's expected behaviour. Running k3s on any computer causes a very high load average. As a concrete example, here is the situation on my Raspberry Pi 3 node.

When running k3s, the load average is:

load average: 2.69, 1.52, 1.79

With k3s stopped, but with the containers still up, the load average is:

load average: 0.24, 1.01, 1.72

To Reproduce

I just run it without any special arguments, exactly as it is installed by the sh installer.

Expected behavior

The load average should be under 1.

All 42 comments

@drym3r is this a fresh install? Do you have anything deployed in Kubernetes? Can you see which processes are taking CPU?

I'm seeing the same behavior on my laptop (vanilla Ubuntu 18.04 - i5-3427u CPU).

Fresh install of k3s with nothing running on it.

htop shows "k3s server" hovering between 20 and 30% CPU usage.

containerd, traefik and friends all seem to stay below 1%.

stdout from k3s only shows the startup sequence and it doesn't log any errors or anything after that.

@ibuildthecloud Yes, fresh install. I have things installed right now, but I saw this behaviour when I didn't. Nothing is taking much CPU; like @seabrookmx, I only see a high load average. The load average is also affected by disk I/O, but I don't detect any activity there either.
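For context on why load average and CPU usage can diverge: on Linux the load average counts tasks that are runnable as well as tasks in uninterruptible sleep (usually waiting on I/O). Below is a minimal Python sketch, assuming a Linux /proc filesystem, that counts processes in each state. The function names are illustrative, and it only looks at the main thread of each process, so busy threads under /proc/<pid>/task are not counted.

```python
import os


def parse_state(stat_text):
    """Return the one-letter state from the contents of /proc/<pid>/stat."""
    # The command name is wrapped in parentheses and may itself contain
    # spaces or ')', so split on the last ')'.
    return stat_text.rsplit(")", 1)[1].split()[0]


def count_states(proc_root="/proc"):
    """Count processes per state letter (R = running, D = uninterruptible)."""
    counts = {}
    if not os.path.isdir(proc_root):
        return counts  # not a Linux-style /proc; nothing to report
    for entry in os.listdir(proc_root):
        if not entry.isdigit():
            continue
        try:
            with open(os.path.join(proc_root, entry, "stat")) as f:
                state = parse_state(f.read())
        except (OSError, IndexError):
            continue  # the process exited while we were reading it
        counts[state] = counts.get(state, 0) + 1
    return counts


if __name__ == "__main__":
    counts = count_states()
    print("running (R):", counts.get("R", 0))
    print("uninterruptible (D):", counts.get("D", 0))
```

Running it a few times while the load average is high gives a rough idea of whether the load comes from runnable tasks or from tasks blocked on I/O.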

Seeing the same kind of k3s-server CPU usage (continuous 25-30%) on centos 7.6, vanilla k3s install, otherwise idle server.

The only resource deployed is kubevirt 0.17, but there is nothing deployed for kubevirt to manage. Not sure if it's related, but I am also seeing #463 at the same time.

The CPU load on my small single-node cluster is also quite high:

    top - 15:19:19 up 20 days, 8:12, 1 user, load average: 1.92, 2.02, 2.31
    Tasks: 351 total, 1 running, 265 sleeping, 0 stopped, 0 zombie
    %Cpu(s): 25.7 us, 15.1 sy, 0.1 ni, 58.0 id, 0.2 wa, 0.0 hi, 0.9 si, 0.0 st
    KiB Mem : 7970176 total, 344068 free, 4440344 used, 3185764 buff/cache
    KiB Swap: 0 total, 0 free, 0 used. 4007944 avail Mem

      PID USER      PR  NI    VIRT    RES    SHR S  %CPU %MEM     TIME+ COMMAND
     1136 root      20   0  944976 718932  72760 S 130.4  9.0  21602:46 k3s-server
     1670 root      20   0  286068 136560  64308 S   6.9  1.7   1533:51 containerd
    25288 phil      20   0 1554952 341296  16248 S   5.3  4.3 283:18.57 prometheus
     3849 root      20   0   41136  23376  13208 S   3.0  0.3   1455:01 speaker
     5651 root      20   0  212384 121944  22672 S   3.0  1.5  48:28.77 fluxd
     2887 root      20   0  142912  30660  18784 S   1.7  0.4 387:46.37 coredns
     3558 nobody    20   0  175916 146508  18468 S   1.7  1.8   1586:37 tiller
    18419 root      20   0   73516  33312  14776 S   1.7  0.4  97:04.46 helm-operator
    11822 999       20   0 1007744  43224   3952 S   1.0  0.5 222:55.45 mongod
    21244 phil      20   0 7533224 1.320g  18364 S   1.0 17.4 555:32.21 java
    31635 root      20   0   78648  13656   2948 S   1.0  0.2   6:44.81 python3
     2655 phil      20   0   43052   4268   3408 R   0.7  0.1   0:01.13 top
     9122 root      20   0  344164 119080  11864 S   0.7  1.5  68:48.69 hass
        8 root      20   0       0      0      0 I   0.3  0.0  92:27.44 rcu_sched
       28 root      20   0       0      0      0 S   0.3  0.0   8:50.50 ksoftirqd/3
      585 phil      20   0  123160  22336   5232 S   0.3  0.3  23:32.13 alertmanager
     1290 root      20   0       0      0      0 I   0.3  0.0   0:00.39 kworker/u8:3

k3s manages to produce more load than the elasticsearch process and other resource intensive workloads.

v0.6.0-rc5 also causes a high cpu load...

I'm seeing about 20-25% cpu usage of a single Skylake Xeon core constantly. Is this expected behaviour? With https://microk8s.io it's only a couple percent.

v0.7.0-rc5 also causes a high cpu load...

@ibuildthecloud are you guys going to tackle this issue anytime soon?

I'm using the latest stable version and still see a load average that is too high, but it's a little better now. It ranges between 0.82, 0.93, 0.86 and 1.21, 0.93, 1.30. Even when it is under 1, there is too little traffic to justify it, but at least it's not blocking any more.

What kind of load avg, or idle CPU usage, would be considered normal?

I'm getting a similar higher load average on my Raspberry Pi cluster.

Without a way to reproduce this, it is hard to fix. Any info that can be given about OS version, hardware, and k3s version is greatly appreciated.

I am not too worried about 10-30% usage, but 100%+ is not good. If there is anything helpful in the logs, sharing that would be greatly appreciated. It would be good to obtain some profiling data from k3s; we may need to make a special build to help with that: https://github.com/golang/go/wiki/Performance

Some details from me:

- k3s version v0.5.0 (8c0116dd)

- development cluster with currently 2 nodes

- contains one application that's completely idle in the examined timespan

- contains a rook installation (mostly idle as well)

- Host OS: ubuntu 18.04 LTS

- Host Hardware: Xeon E5 with 4 physical / 8 effective cores, 96G RAM (master) / 64G (agent)

- load on master: 1-2, according to htop completely dominated by "k3s server"

- load on agent: about 0.8, dominated by a kafka installation that's also on the machine. "k3s agent" contributes very little to the load.

- strace attached to the "k3s server" process produced very little (only occasional futex() calls and SIGCHLD + rt_sigreturn() combos)

- strace cmdline: strace -r -C -o k3s.strace.txt -p <PID>

Excerpt from syslog on master:

Aug 2 10:36:20 k8smaster k3s[41722]: E0802 10:36:20.533869 41722 kubelet_volumes.go:154] Orphaned pod "afa0f060-b498-11e9-8a40-000af74e3b38" found, but volume paths are still present on disk : There were a total of 1 errors similar to this. Turn up verbosity to see them.

Aug 2 10:36:21 k8smaster k3s[41722]: E0802 10:36:21.502906 41722 watcher.go:208] watch chan error: EOF

Aug 2 10:36:22 k8smaster k3s[41722]: E0802 10:36:22.532124 41722 kubelet_volumes.go:154] Orphaned pod "afa0f060-b498-11e9-8a40-000af74e3b38" found, but volume paths are still present on disk : There were a total of 1 errors similar to this. Turn up verbosity to see them.

Aug 2 10:36:24 k8smaster k3s[41722]: E0802 10:36:24.532394 41722 kubelet_volumes.go:154] Orphaned pod "afa0f060-b498-11e9-8a40-000af74e3b38" found, but volume paths are still present on disk : There were a total of 1 errors similar to this. Turn up verbosity to see them.

Aug 2 10:36:25 k8smaster k3s[41722]: E0802 10:36:25.511113 41722 watcher.go:208] watch chan error: EOF

Aug 2 10:36:25 k8smaster k3s[41722]: E0802 10:36:25.594629 41722 watcher.go:208] watch chan error: EOF

Aug 2 10:36:26 k8smaster k3s[41722]: E0802 10:36:26.182178 41722 watcher.go:208] watch chan error: EOF

Aug 2 10:36:26 k8smaster k3s[41722]: E0802 10:36:26.538714 41722 kubelet_volumes.go:154] Orphaned pod "afa0f060-b498-11e9-8a40-000af74e3b38" found, but volume paths are still present on disk : There were a total of 1 errors similar to this. Turn up verbosity to see them.

Aug 2 10:36:27 k8smaster k3s[41722]: E0802 10:36:27.138981 41722 watcher.go:208] watch chan error: EOF

Aug 2 10:36:28 k8smaster k3s[41722]: E0802 10:36:28.532382 41722 kubelet_volumes.go:154] Orphaned pod "afa0f060-b498-11e9-8a40-000af74e3b38" found, but volume paths are still present on disk : There were a total of 1 errors similar to this. Turn up verbosity to see them.

Aug 2 10:36:31 k8smaster k3s[41722]: E0802 10:36:30.533515 41722 kubelet_volumes.go:154] Orphaned pod "afa0f060-b498-11e9-8a40-000af74e3b38" found, but volume paths are still present on disk : There were a total of 1 errors similar to this. Turn up verbosity to see them.

Aug 2 10:36:31 k8smaster k3s[41722]: E0802 10:36:30.555398 41722 watcher.go:208] watch chan error: EOF

The "orphaned pod" messages refer to https://github.com/kubernetes/kubernetes/issues/60987 . Removing the orphans doesn't seem to have any effect on the load.

The "EOF" messages continue to be logged.

Aside from these two I can see no other log messages from k3s during normal (idle) operation.
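To check that only two kinds of messages are being logged, the excerpt can be tallied by severity and source location. Below is a rough Python sketch. The regular expression is an assumption based on the log lines quoted above (severity letter, MMDD, time, thread id, file:line), not an official parser.

```python
import re
from collections import Counter

# Matches the klog-style header seen in the excerpt, e.g.
# "E0802 10:36:21.502906 41722 watcher.go:208]"
KLOG_HEADER = re.compile(
    r"\b([IWEF])\d{4} \d{2}:\d{2}:\d{2}\.\d+\s+\d+ ([\w.-]+\.go:\d+)\]"
)


def tally(lines):
    """Count log lines per (severity, source file:line)."""
    counts = Counter()
    for line in lines:
        match = KLOG_HEADER.search(line)
        if match:
            counts[(match.group(1), match.group(2))] += 1
    return counts


if __name__ == "__main__":
    sample = [
        "Aug 2 10:36:21 k8smaster k3s[41722]: E0802 10:36:21.502906 41722 watcher.go:208] watch chan error: EOF",
        "Aug 2 10:36:25 k8smaster k3s[41722]: E0802 10:36:25.511113 41722 watcher.go:208] watch chan error: EOF",
        'Aug 2 10:36:22 k8smaster k3s[41722]: E0802 10:36:22.532124 41722 kubelet_volumes.go:154] Orphaned pod "afa0f060-b498-11e9-8a40-000af74e3b38" found',
    ]
    for (severity, source), count in tally(sample).most_common():
        print(severity, source, count)
```

Feeding it the full syslog instead of the three sample lines would show quickly whether any other source file is logging during idle operation.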

The EOF messages should not be causing an issue. They are fixed in later versions of k3s; the fact that versions like v0.7.0-rc5 still have this issue is concerning.

Does the CPU spike right away or does it take time to build up?

What are the ulimits of the k3s server process?

What is the iostat of the k3s server process?

What services/things are installed that might be using the k8s API? And does disabling that thing reduce CPU consumption?

Regarding CPU & I/O, here's a grafana screenshot of the two servers: https://drive.google.com/open?id=17CJi95dO98z9bW6oMJtiqiym1yEuIQ5i

"beryllium" is the k3s server host, "protactinium" is the agent with an additional kafka broker installed. The curves are pretty representative of the behavior all day, including shortly after a restart.

There is nothing installed using the k8s API that I know of, aside from the rook (ceph) deployment inside the cluster, which is idle as well.

ulimit of "k3s server":

    RESOURCE   DESCRIPTION                        SOFT      HARD      UNITS
    AS         address space limit                unlimited unlimited bytes
    CORE       max core file size                 unlimited unlimited bytes
    CPU        CPU time                           unlimited unlimited seconds
    DATA       max data size                      unlimited unlimited bytes
    FSIZE      max file size                      unlimited unlimited bytes
    LOCKS      max number of file locks held      unlimited unlimited locks
    MEMLOCK    max locked-in-memory address space 16777216  16777216  bytes
    MSGQUEUE   max bytes in POSIX mqueues         819200    819200    bytes
    NICE       max nice prio allowed to raise     0         0
    NOFILE     max number of open files           1000000   1000000   files
    NPROC      max number of processes            unlimited unlimited processes
    RSS        max resident set size              unlimited unlimited bytes
    RTPRIO     max real-time priority             0         0
    RTTIME     timeout for real-time tasks        unlimited unlimited microsecs
    SIGPENDING max number of pending signals      386264    386264    signals
    STACK      max stack size                     8388608   unlimited bytes

Does this have anything to do with kubernetes/kubernetes#64137? That has to do with orphaned systemd cgroup watches causing kubelet CPU usage to gradually ramp up until a node dies. Several threads attribute this to a bad interaction between certain kernel versions and certain systemd versions. It's particularly exacerbated by cron jobs since they can have such a short lifecycle. Anyhow, if this is the same thing, then I posted a DaemonSet that you can use as a workaround on that ticket until someone gets around to fixing the kernel or systemd or whatever.

I was also able to replicate this pretty trivially on Ubuntu 18.04. This is with a cluster with nothing deployed to it, and yet the k3s server consumed 20-30% CPU. I also evaluated microk8s and standard Kubernetes deployed with kubeadm. Both consumed only about 3% CPU at idle with nothing deployed on the same machine. These results were consistent across attempts and after I applied deployments to the cluster. The load was immediate right after bring-up. No logs emitted from the server suggest something going wrong.

This is a showstopper for me moving forward with k3s, unfortunately.

The latest version 0.8.1 also suffers from this problem. I wonder how someone would run k3s on a low-power edge device when k3s itself consumes so many CPU cycles.

If this is trivial to replicate it would be good to have steps to reproduce.

Ubuntu 18.04 seems to be the common theme, so I tried creating a VM:

    Vagrant.configure(2) do |config|
      config.vm.box = "bento/ubuntu-18.04"
      config.vm.provider "virtualbox" do |v|
        v.cpus = 1
        v.memory = 2048
      end
    end

However, after launching k3s with curl -sfL https://get.k3s.io | sh - and waiting a minute for the cluster to launch, the CPU consumption was ~3% at idle.

I'm seeing this on k3s 1.0.1. I've been using rke before and haven't had this problem on the same host. In my case I'm using NixOS - https://nixos.org.

I've looked into the load average and how it works. It does in fact make sense that on a Raspberry Pi 3, which has 4 cores, the load average is anywhere from 1 to 4.

I still think that a load average of 2.69 for a master with little usage is too much, but since it's within what is supported, I'll close this issue.

If somebody doesn't agree, feel free to open another one or reopen this one.

PS: On the same machine, an agent without containers has a load average of about 0.3, FYI.
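The per-core reasoning can be sketched in Python: dividing each load average by the number of cores gives a figure where 1.0 means every core is, on average, fully occupied. The helper name per_core is illustrative, and the sample values are the ones reported at the top of this issue for a 4-core Raspberry Pi 3.

```python
import os


def per_core(load_averages, cores):
    """Divide each load average by the core count, rounded to 2 decimals."""
    return tuple(round(value / cores, 2) for value in load_averages)


if __name__ == "__main__":
    # Values from the original report: k3s running on a 4-core Raspberry Pi 3.
    print(per_core((2.69, 1.52, 1.79), 4))  # (0.67, 0.38, 0.45)

    # Live values for the current machine, where the platform provides them.
    if hasattr(os, "getloadavg"):
        cores = os.cpu_count() or 1
        print(per_core(os.getloadavg(), cores))
```

By this measure the reported 2.69 corresponds to about 0.67 per core, which is below saturation but still high for a mostly idle node.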

Same behavior with my tests.

- Raspberry 3 with Raspbian Light

- same with Raspi 4 / 4GB

- Ubuntu Server 18.04 in a VM / Braswell Host

- Ubuntu Server 18.04 on Braswell Host

k3s always consumes about 20% CPU in top after initialization. That is 18% away from being usable on an edge device. 20% for a management tool at idle is a joke. A full installation of Apache, PHP, MariaDB, Gitea, Postfix, and Dovecot, all in Docker containers, hovers around 3-5% at idle, on a slow Raspberry Pi 3.

Easy to reproduce.

I have a similar issue here: an idle cluster with a load average of 5-15 on the master.

Cluster:

- master: 4 cores, 8 GB RAM VDS (KVM)

- worker: 2 cores, 4 GB RAM VDS (KVM)

Apps: istio + single nginx serving static that has no requests at all.

Versions:

- k3s v1.17.2+k3s1 (cdab19b0)

- istio:

  - client version: 1.4.5

  - control plane version: 1.4.5

  - data plane version: 1.4.5 (2 proxies)

- OS: Linux master 4.15.0-88-generic #88-Ubuntu SMP Tue Feb 11 20:11:34 UTC 2020 x86_64 x86_64 x86_64 GNU/Linux

- systemd: 237 +PAM +AUDIT +SELINUX +IMA +APPARMOR +SMACK +SYSVINIT +UTMP +LIBCRYPTSETUP +GCRYPT +GNUTLS +ACL +XZ +LZ4 +SECCOMP +BLKID +ELFUTILS +KMOD -IDN2 +IDN -PCRE2 default-hierarchy=hybrid

No pods are restarting or scaling so this is out of the picture. I suspect it may be related to health checks but it seems really strange that 19 pods can produce so much load (especially considering that all health checks should be in async IO and once per 2 seconds tops).

The strangest thing is that the longer I observe (sitting in SSH), the lower the load average becomes. Just after installing the whole cluster the load avg was about 4-5; after a good sleep (about 7 hours) I wanted to check that all was OK and the load avg was 13-15; after some investigation (collecting logs, inspecting all versions, etc.) the load avg dropped to 5, and now it is rising again. I don't understand why or how this is possible. All this time, regardless of the load avg, the k3s daemon process jumps from 0-5% to X% (where X is ~ load avg * 100) for about a second and then goes back to 0-5%.
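One-second bursts like these are easy to miss in top at its default refresh rate. Below is a rough Python sketch, assuming Linux and /proc/<pid>/stat, that samples a process's CPU time at a short interval. The helper names and the 0.2 s interval are illustrative choices, not something k3s provides.

```python
import os
import sys
import time


def cpu_ticks(stat_text):
    """Return utime + stime (in clock ticks) from /proc/<pid>/stat contents."""
    # Split after the last ')' because the command name may contain spaces.
    fields = stat_text.rsplit(")", 1)[1].split()
    # fields[0] is the state (field 3), so utime/stime (fields 14/15)
    # sit at indexes 11 and 12.
    return int(fields[11]) + int(fields[12])


def cpu_percent(ticks_before, ticks_after, interval, ticks_per_second):
    """CPU usage over the interval, where 100.0 means one fully busy core."""
    return 100.0 * (ticks_after - ticks_before) / (ticks_per_second * interval)


def watch(pid, samples=50, interval=0.2):
    """Print one CPU percentage per sample for the given pid."""
    ticks_per_second = os.sysconf("SC_CLK_TCK")
    path = f"/proc/{pid}/stat"
    with open(path) as f:
        previous = cpu_ticks(f.read())
    for _ in range(samples):
        time.sleep(interval)
        with open(path) as f:
            current = cpu_ticks(f.read())
        usage = cpu_percent(previous, current, interval, ticks_per_second)
        print(f"{usage:6.1f}%")
        previous = current


# Usage: python3 watch_cpu.py <pid of the k3s server process>
if __name__ == "__main__" and len(sys.argv) == 2 and sys.argv[1].isdigit():
    watch(int(sys.argv[1]))
```

Comparing the timestamps of the spikes with the journal output may show whether they line up with a periodic task.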

kubectl top node:

    NAME         CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
    master       1723m        43%    2275Mi          28%
    scala.node   116m         5%     1317Mi          33%

kubectl top pod --all-namespaces:

    NAMESPACE      NAME                                          CPU(cores)   MEMORY(bytes)
    blog           blog-frontend-85b4b8d4d6-7vdv9                5m           28Mi
    istio-system   grafana-5dfcbf9677-t4wcb                      20m          18Mi
    istio-system   istio-citadel-7d4689c4cf-wzpwc                2m           7Mi
    istio-system   istio-galley-c9647bf5-tsrm2                   152m         19Mi
    istio-system   istio-ingressgateway-67bffcc97f-vbnz9         9m           22Mi
    istio-system   istio-pilot-8897967c5-6x2rr                   18m          14Mi
    istio-system   istio-sidecar-injector-6fdc95467f-sqgsc       127m         7Mi
    istio-system   istio-telemetry-78c75b8b9-nblld               6m           19Mi
    istio-system   istio-tracing-649df9f4bc-5s8xt                30m          270Mi
    istio-system   kiali-867c85b4bd-47896                        2m           8Mi
    istio-system   prometheus-bc68dd6dc-nflvh                    109m         185Mi
    istio-system   secured-prom-f845c694-hzdqb                   0m           2Mi
    istio-system   secured-tracing-5bdb4bf48b-w2kxw              0m           2Mi
    istio-system   ssl-termination-edge-proxy-6bb9bdf867-2xkwd   0m           3Mi
    istio-system   svclb-ssl-termination-edge-proxy-7wzwb        0m           2Mi
    istio-system   svclb-ssl-termination-edge-proxy-qht9t        0m           1Mi
    kube-system    coredns-d798c9dd-cvrw4                        29m          9Mi
    kube-system    local-path-provisioner-58fb86bdfd-n882w       40m          7Mi
    kube-system    metrics-server-6d684c7b5-pn8zd                11m          13Mi
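To compare pod usage with node usage, the CPU column of the pod listing can be summed. Below is a small Python sketch that assumes the whitespace-separated layout of kubectl top pod --all-namespaces shown above, with CPU reported in millicores. The function name is illustrative, and only a few rows are included as sample input.

```python
def total_millicores(rows):
    """Sum the CPU(cores) column (third field) of 'kubectl top pod' rows."""
    total = 0
    for row in rows:
        fields = row.split()
        # Skip the header and anything that is not "<number>m".
        if len(fields) < 4 or not fields[2].endswith("m"):
            continue
        value = fields[2][:-1]
        if value.isdigit():
            total += int(value)
    return total


if __name__ == "__main__":
    sample = [
        "NAMESPACE NAME CPU(cores) MEMORY(bytes)",
        "blog blog-frontend-85b4b8d4d6-7vdv9 5m 28Mi",
        "istio-system istio-galley-c9647bf5-tsrm2 152m 19Mi",
        "istio-system istio-sidecar-injector-6fdc95467f-sqgsc 127m 7Mi",
        "istio-system prometheus-bc68dd6dc-nflvh 109m 185Mi",
    ]
    print(total_millicores(sample), "millicores")
```

Summing all 19 pods in the listing above gives 560m across both nodes, while kubectl top node reports 1723m for the master alone. The gap covers everything that does not run in a pod (k3s itself, containerd, the kernel), so it is only a hint about where the CPU goes, not a precise attribution.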

k3s journalctl logs (last 100 lines):

Feb 21 12:50:45 master k3s[18342]: W0221 12:50:45.755119 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-workload-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:50:45 master k3s[18342]: W0221 12:50:45.756428 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-galley-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:50:45 master k3s[18342]: W0221 12:50:45.761659 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-pilot-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:50:45 master k3s[18342]: W0221 12:50:45.802047 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-mixer-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:50:45 master k3s[18342]: W0221 12:50:45.814517 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/config and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:51:29 master k3s[18342]: I0221 12:51:29.646590 18342 controller.go:107] OpenAPI AggregationController: Processing item v1beta1.metrics.k8s.io

Feb 21 12:52:00 master k3s[18342]: W0221 12:52:00.616923 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-mesh-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:52:00 master k3s[18342]: W0221 12:52:00.620100 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-service-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:52:00 master k3s[18342]: W0221 12:52:00.621750 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/config and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:52:00 master k3s[18342]: W0221 12:52:00.623491 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~secret/default-token-bfcgg and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:52:00 master k3s[18342]: W0221 12:52:00.623685 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-galley-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:52:00 master k3s[18342]: W0221 12:52:00.625416 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-mixer-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:52:00 master k3s[18342]: W0221 12:52:00.628072 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-citadel-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:52:00 master k3s[18342]: W0221 12:52:00.629662 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-pilot-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:52:00 master k3s[18342]: W0221 12:52:00.630752 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-performance-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:52:00 master k3s[18342]: W0221 12:52:00.634944 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-workload-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:52:29 master k3s[18342]: I0221 12:52:29.698895 18342 controller.go:107] OpenAPI AggregationController: Processing item v1beta1.metrics.k8s.io

Feb 21 12:53:18 master k3s[18342]: W0221 12:53:18.641179 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~secret/default-token-bfcgg and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:53:18 master k3s[18342]: W0221 12:53:18.643060 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-citadel-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:53:18 master k3s[18342]: W0221 12:53:18.644241 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-mesh-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:53:18 master k3s[18342]: W0221 12:53:18.645464 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-workload-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:53:18 master k3s[18342]: W0221 12:53:18.645704 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/config and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:53:18 master k3s[18342]: W0221 12:53:18.646113 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-mixer-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:53:18 master k3s[18342]: W0221 12:53:18.647266 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-performance-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:53:18 master k3s[18342]: W0221 12:53:18.647501 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-galley-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:53:18 master k3s[18342]: W0221 12:53:18.648394 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-service-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:53:18 master k3s[18342]: W0221 12:53:18.649018 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-pilot-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:53:29 master k3s[18342]: I0221 12:53:29.754930 18342 controller.go:107] OpenAPI AggregationController: Processing item v1beta1.metrics.k8s.io

Feb 21 12:54:22 master k3s[18342]: W0221 12:54:22.544684 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-pilot-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:54:22 master k3s[18342]: W0221 12:54:22.552140 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-mixer-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:54:22 master k3s[18342]: W0221 12:54:22.553658 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~secret/default-token-bfcgg and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:54:22 master k3s[18342]: W0221 12:54:22.555444 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/config and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:54:22 master k3s[18342]: W0221 12:54:22.557187 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-citadel-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:54:22 master k3s[18342]: W0221 12:54:22.560310 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-workload-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:54:22 master k3s[18342]: W0221 12:54:22.558646 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-galley-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:54:22 master k3s[18342]: W0221 12:54:22.559266 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-mesh-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:54:22 master k3s[18342]: W0221 12:54:22.559288 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-service-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:54:22 master k3s[18342]: W0221 12:54:22.559952 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-performance-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:54:29 master k3s[18342]: I0221 12:54:29.840474 18342 controller.go:107] OpenAPI AggregationController: Processing item v1beta1.metrics.k8s.io

Feb 21 12:55:29 master k3s[18342]: I0221 12:55:29.892486 18342 controller.go:107] OpenAPI AggregationController: Processing item v1beta1.metrics.k8s.io

Feb 21 12:55:46 master k3s[18342]: W0221 12:55:46.654650 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-service-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:55:46 master k3s[18342]: W0221 12:55:46.656157 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-citadel-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:55:46 master k3s[18342]: W0221 12:55:46.656361 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-mixer-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:55:46 master k3s[18342]: W0221 12:55:46.656446 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~secret/default-token-bfcgg and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:55:46 master k3s[18342]: W0221 12:55:46.657296 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-mesh-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:55:46 master k3s[18342]: W0221 12:55:46.657889 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-performance-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:55:46 master k3s[18342]: W0221 12:55:46.658169 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-galley-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:55:46 master k3s[18342]: W0221 12:55:46.658331 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/config and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:55:46 master k3s[18342]: W0221 12:55:46.659340 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-pilot-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:55:46 master k3s[18342]: W0221 12:55:46.663163 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-workload-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:56:30 master k3s[18342]: I0221 12:56:30.034176 18342 controller.go:107] OpenAPI AggregationController: Processing item v1beta1.metrics.k8s.io

Feb 21 12:56:40 master k3s[18342]: E0221 12:56:40.061403 18342 upgradeaware.go:357] Error proxying data from client to backend: tls: use of closed connection

Feb 21 12:56:45 master k3s[18342]: E0221 12:56:45.226739 18342 upgradeaware.go:357] Error proxying data from client to backend: EOF

Feb 21 12:56:45 master k3s[18342]: E0221 12:56:45.227130 18342 upgradeaware.go:371] Error proxying data from backend to client: tls: use of closed connection

Feb 21 12:57:08 master k3s[18342]: W0221 12:57:08.630236 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-mesh-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:57:08 master k3s[18342]: W0221 12:57:08.631870 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-service-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:57:08 master k3s[18342]: W0221 12:57:08.632818 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-galley-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:57:08 master k3s[18342]: W0221 12:57:08.633945 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-workload-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:57:08 master k3s[18342]: W0221 12:57:08.635160 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-pilot-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:57:08 master k3s[18342]: W0221 12:57:08.636099 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/config and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:57:08 master k3s[18342]: W0221 12:57:08.637074 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-mixer-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:57:08 master k3s[18342]: W0221 12:57:08.637854 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~secret/default-token-bfcgg and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:57:08 master k3s[18342]: W0221 12:57:08.639554 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-performance-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:57:08 master k3s[18342]: W0221 12:57:08.640618 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-citadel-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:57:30 master k3s[18342]: I0221 12:57:30.100736 18342 controller.go:107] OpenAPI AggregationController: Processing item v1beta1.metrics.k8s.io

Feb 21 12:58:06 master k3s[18342]: E0221 12:58:06.601367 18342 remote_runtime.go:351] ExecSync dc95ef787409873f3086e12974e80fad43752a4a489fbe9ec83871541978c192 '/usr/local/bin/galley probe --probe-path=/tmp/healthliveness --interval=10s' from runtime service failed: rpc error: code = DeadlineExceeded desc = failed to exec in container: timeout 1s exceeded: context deadline exceeded

Feb 21 12:58:28 master k3s[18342]: W0221 12:58:28.663301 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-performance-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:58:28 master k3s[18342]: W0221 12:58:28.663276 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-mesh-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:58:28 master k3s[18342]: W0221 12:58:28.664261 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-mixer-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:58:28 master k3s[18342]: W0221 12:58:28.664385 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-citadel-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:58:28 master k3s[18342]: W0221 12:58:28.665411 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/config and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:58:28 master k3s[18342]: W0221 12:58:28.665683 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~secret/default-token-bfcgg and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:58:28 master k3s[18342]: W0221 12:58:28.666269 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-workload-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:58:28 master k3s[18342]: W0221 12:58:28.667465 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-pilot-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:58:28 master k3s[18342]: W0221 12:58:28.667780 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-galley-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:58:28 master k3s[18342]: W0221 12:58:28.673220 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-service-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:58:30 master k3s[18342]: I0221 12:58:30.198787 18342 controller.go:107] OpenAPI AggregationController: Processing item v1beta1.metrics.k8s.io

Feb 21 12:59:07 master k3s[18342]: E0221 12:59:07.129099 18342 remote_runtime.go:351] ExecSync dc95ef787409873f3086e12974e80fad43752a4a489fbe9ec83871541978c192 '/usr/local/bin/galley probe --probe-path=/tmp/healthliveness --interval=10s' from runtime service failed: rpc error: code = DeadlineExceeded desc = failed to exec in container: timeout 1s exceeded: context deadline exceeded

Feb 21 12:59:30 master k3s[18342]: I0221 12:59:30.263218 18342 controller.go:107] OpenAPI AggregationController: Processing item v1beta1.metrics.k8s.io

Feb 21 12:59:49 master k3s[18342]: W0221 12:59:49.646923 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-mesh-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:59:49 master k3s[18342]: W0221 12:59:49.646914 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~secret/default-token-bfcgg and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:59:49 master k3s[18342]: W0221 12:59:49.647972 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-performance-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:59:49 master k3s[18342]: W0221 12:59:49.649327 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-galley-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:59:49 master k3s[18342]: W0221 12:59:49.651594 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-service-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:59:49 master k3s[18342]: W0221 12:59:49.657478 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-workload-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:59:49 master k3s[18342]: W0221 12:59:49.661854 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-citadel-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:59:49 master k3s[18342]: W0221 12:59:49.663625 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-pilot-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:59:49 master k3s[18342]: W0221 12:59:49.667435 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/config and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 12:59:49 master k3s[18342]: W0221 12:59:49.668398 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-mixer-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 13:00:30 master k3s[18342]: I0221 13:00:30.355881 18342 controller.go:107] OpenAPI AggregationController: Processing item v1beta1.metrics.k8s.io

Feb 21 13:01:17 master k3s[18342]: W0221 13:01:17.745478 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-workload-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 13:01:17 master k3s[18342]: W0221 13:01:17.747164 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-galley-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 13:01:17 master k3s[18342]: W0221 13:01:17.747246 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-performance-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 13:01:17 master k3s[18342]: W0221 13:01:17.748267 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-mesh-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 13:01:17 master k3s[18342]: W0221 13:01:17.748450 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-istio-service-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 13:01:17 master k3s[18342]: W0221 13:01:17.749308 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/config and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 13:01:17 master k3s[18342]: W0221 13:01:17.749532 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-pilot-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 13:01:17 master k3s[18342]: W0221 13:01:17.750209 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-mixer-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 13:01:17 master k3s[18342]: W0221 13:01:17.751260 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~secret/default-token-bfcgg and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699

Feb 21 13:01:17 master k3s[18342]: W0221 13:01:17.752816 18342 volume_linux.go:45] Setting volume ownership for /var/lib/kubelet/pods/a87681bf-2765-4d3a-a2b5-61b0664d2cf5/volumes/kubernetes.io~configmap/dashboards-istio-citadel-dashboard and fsGroup set. If the volume has a lot of files then setting volume ownership could be slow, see https://github.com/kubernetes/kubernetes/issues/69699
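
A log dump like the one above is easier to read once it is tallied by source location. The following is only a minimal sketch in Python, assuming the usual klog header layout seen in these lines (severity letter, date, time, thread id, `file:line]`). The `tally` helper and the shortened sample messages are illustrative and not part of k3s.

```python
import re
from collections import Counter

# klog header as it appears in the lines above:
# <severity><mmdd> <hh:mm:ss.uuuuuu> <thread id> <file>:<line>] <message>
KLOG = re.compile(
    r"(?P<sev>[IWEF])\d{4} \d{2}:\d{2}:\d{2}\.\d{6}\s+\d+ "
    r"(?P<file>[\w.]+):(?P<line>\d+)\] "
)

def tally(lines):
    """Count log lines per (severity, source file, line number)."""
    counts = Counter()
    for line in lines:
        m = KLOG.search(line)
        if m:
            counts[(m.group("sev"), m.group("file"), int(m.group("line")))] += 1
    return counts

# Sample lines taken from the log above; the message text is shortened here.
sample = [
    "Feb 21 12:55:46 master k3s[18342]: W0221 12:55:46.654650 18342 volume_linux.go:45] Setting volume ownership",
    "Feb 21 12:55:46 master k3s[18342]: W0221 12:55:46.656157 18342 volume_linux.go:45] Setting volume ownership",
    "Feb 21 12:56:30 master k3s[18342]: I0221 12:56:30.034176 18342 controller.go:107] OpenAPI AggregationController",
    "Feb 21 12:56:40 master k3s[18342]: E0221 12:56:40.061403 18342 upgradeaware.go:357] Error proxying data",
]

for (sev, src, lineno), n in tally(sample).most_common():
    print(f"{n:4d}  {sev}  {src}:{lineno}")
```

Feeding the full journal output through such a tally shows which messages dominate, although a frequent message is not necessarily the one responsible for the load.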

Do you have many workloads that use exec-based liveness/readiness probes? I came across this issue and wonder whether there could be a relationship.

No, there are only HTTP probes.

Although it might be that k3s executes HTTP probes similarly to how the reference implementation executes exec probes (i.e. not with non-blocking IO, but with a thread per probe). That would make sense of this behavior (but no sense at the implementation level...).

Another idea just came to me: my cluster has literally zero load, so I wonder whether there is some non-blocking code based on spin-locks, or some other CPU-consuming algorithm, that is eating all the resources on my VDS when there is no actual load at all.

I'm still seeing this in the latest releases. Both on Intel and ARM CPUs, load is high - and I don't mean load average, I'm seeing 20-30% CPU load on single-core VMs.

Hi,

I am encountering the same issue.

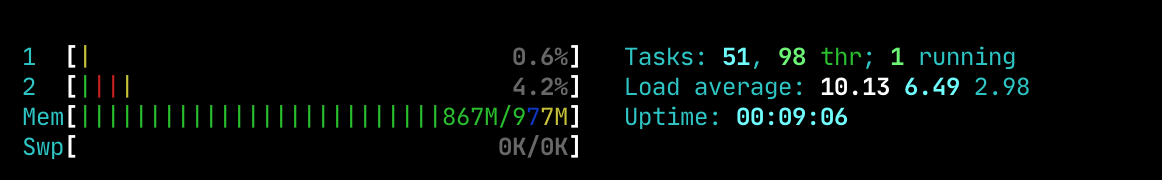

After installing k3s on raspberry pi3b+ cluster (with raspi-os arm64) using k3s-ansible I've noticed that the sysload on the masters is jumping instantly to 15 and constantly increasing. SSH and Prometheus are quickly no longer responsive.

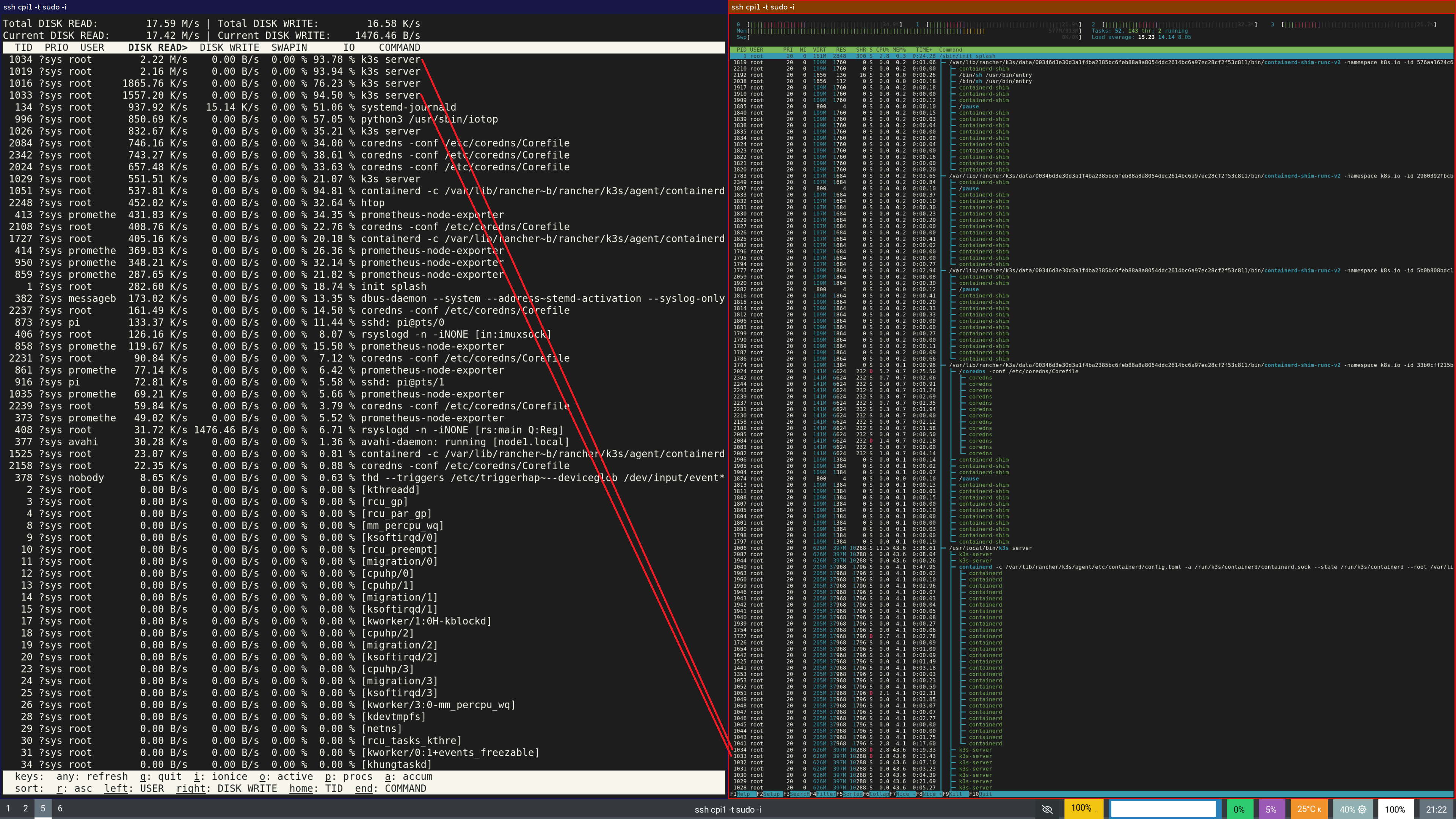

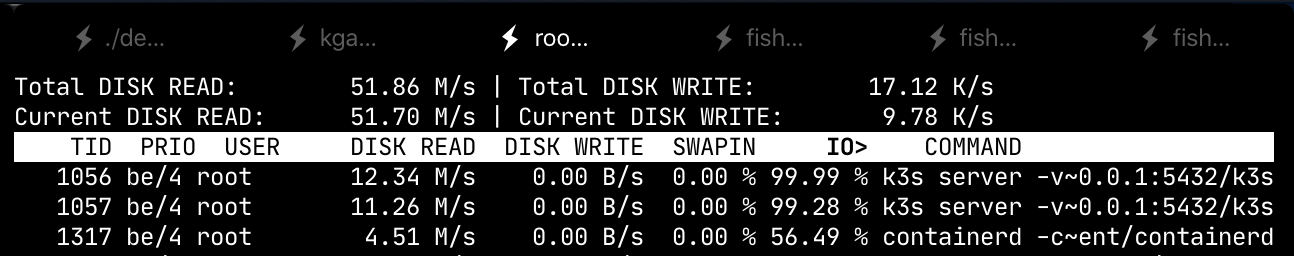

The sysload seems to be IO related as I observe a constant 20Mb/s and 500req/s read on the poor micro sd-card.

Is there any reason for all those filesystem read operations?

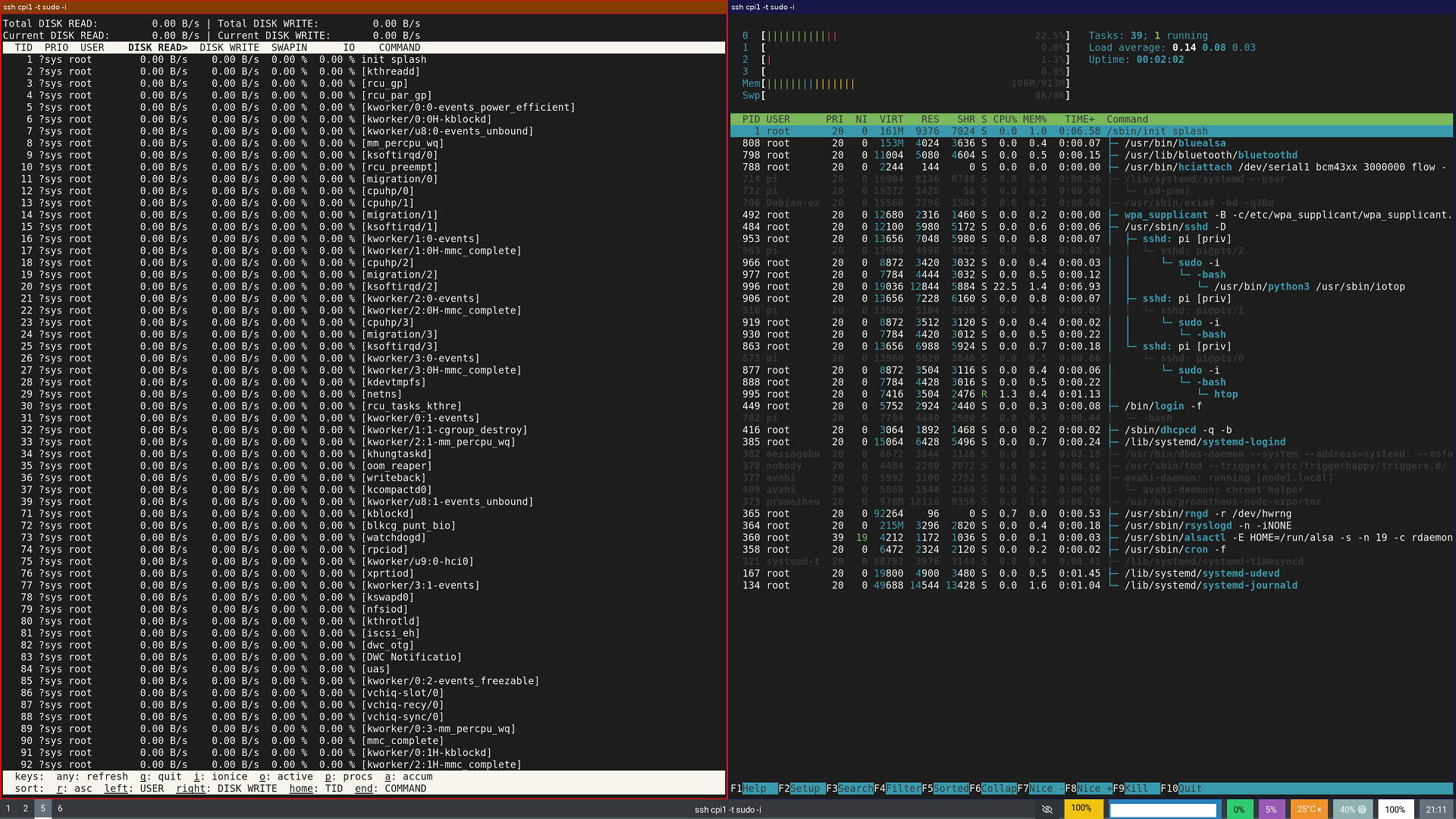

IO load without k3s server

And quickly after starting k3s server

Note, there is only 50MiB of cache/buffer and there is 268MiB of free memory

root@node1:~# free -h

total used free shared buff/cache available

Mem: 913Mi 593Mi 268Mi 0.0Ki 50Mi 269Mi

Swap: 0B 0B 0B

The version is:

k3s version v1.17.5+k3s1 (58ebdb2a)

@arcenik on startup it's going to pull a bunch of images for servicelb, helm-controller, coredns, traefik, node-exporter, etc. If your SD card can't handle that, you might consider attaching an external disk with higher IO capacity and mount it at /var/lib/rancher before installing k3s. A 1GB pi isn't going to get you very far either, at least not as a server - you might check out the 4GB and 8GB Pi4b, and keep the Pi3b for use as an agent. Combined with a high-speed SD card or suitable USB storage they're quite capable.

Is it possible to spread all those services across multiple servers?

The discussion is not about startup. It is about normal operation. Load on my k3s master node (regardless of what hardware I’m using) is always around 25% on an otherwise idle setup.

On 11 Jun 2020, at 23:54, Brandon Davidson notifications@github.com wrote:

@arcenik on startup it's going to pull a bunch of images for servicelb, helm-controller, coredns, traefik, node-exporter, etc. If your SD card can't handle that, you might consider attaching an external disk with higher IO capacity and mount it at /var/lib/rancher before installing k3s. A 1GB pi isn't going to get you very far either, at least not as a server - you might check out the 4GB and 8GB Pi4b, and keep the Pi3b for use as an agent. Combined with a high-speed SD card or suitable USB storage they're quite capable.

I’m seeing this on both rpi and low-powered VMs.

It looks like it is mostly IO in my case.

Does anyone have a theory on which part of k3s generates all the IO?

I have tried to work out whether it could be the SQLite/etcd part or the logging, but I'm not sure.

I have a Pi4b running just the master (started with the hidden --disable-agent flag) with a single agent connected. I'm using a SQL datastore on a different host, and have confirmed that there is no IO other than occasional small writes from rsyslogd.

In this configuration, k3s consumes 20% of one core and 380mb of RAM, which seems reasonable.

From systemd-cgtop:

Control Group Tasks %CPU Memory Input/s Output/s

system.slice/k3s.service 17 19.9 378.9M - -

I am mostly seeing an extremely high read load, which causes k3s to become unresponsive:

While the CPU is barely used:

This is using a PostgreSQL db, on the same host. But the read does not seem to be related to that.

This is what I have with k3s version v1.0.1 (e94a3c60) on a single node cluster.

It's on an Integrated AMD Dual-Core Processor E-350.

I've investigated my problem and found a workaround. However, I'm not certain yet that it works as intended. Still, I'm going to share--feel free to discuss. :-)

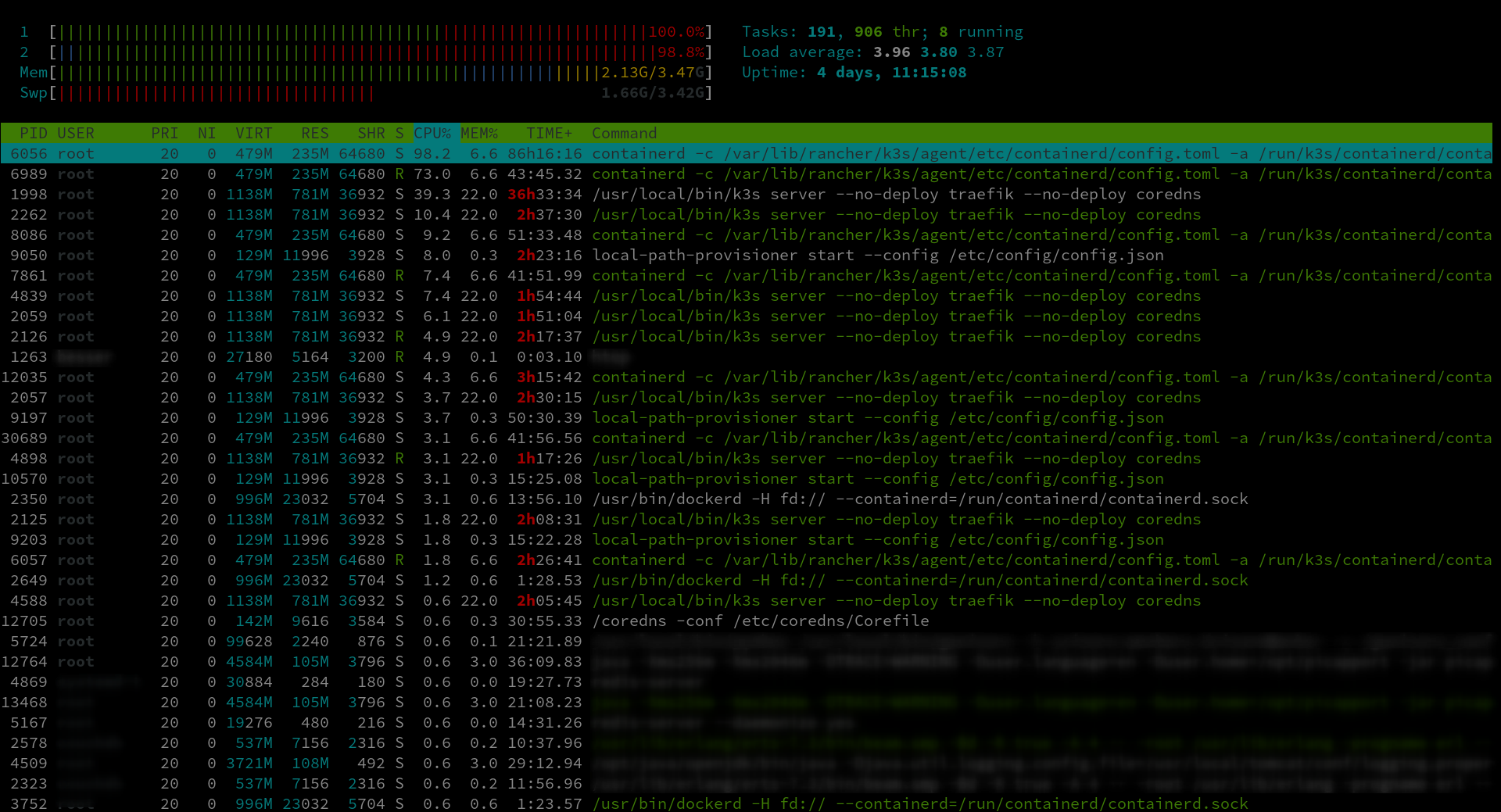

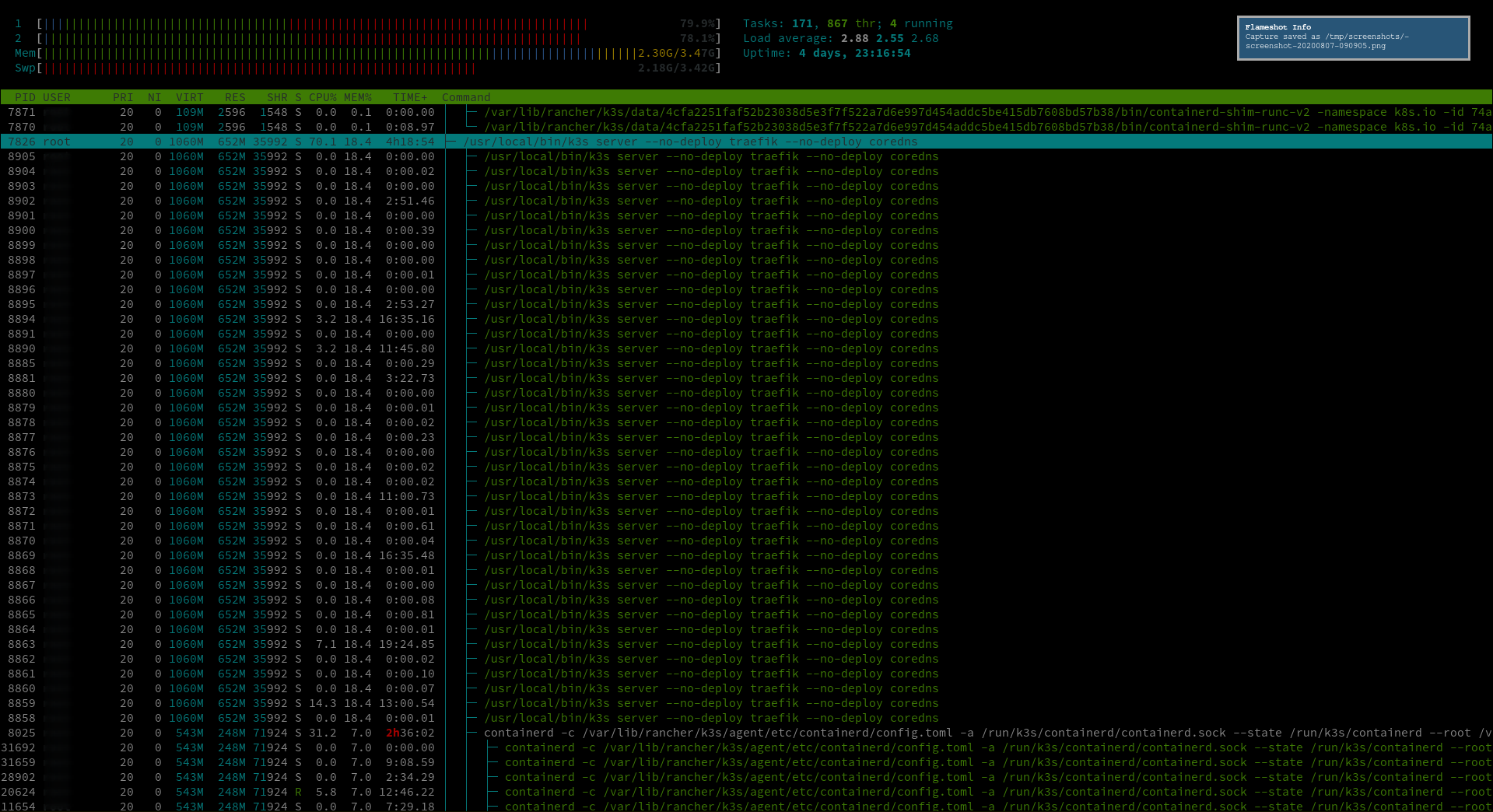

The load is generated in relatively small part by k3s, whereas the major load comes from a containerd sub-process of k3s and containerd's threads. See tree structure in the following screenshot.

Note that in the screenshot I have already applied the workaround. That's why the overall load of k3s and containerd does not hog the entire CPU.

The workaround is as follows. k3s is a systemd service. So I configured its service file to limit k3s' cgroup's CPU consumption. I'm on ubuntu, so I edited _/etc/systemd/system/k3s.service_. I added the following lines to the [Service] section:

CPUQuota=30%

CPUQuotaPeriodSec=50ms

AllowedCPUs=0

I'm not yet convinced that it's an adequate workaround. I'm afraid of restricting k3s' CPU so much that it can no longer function properly. So far, however, my services and jobs run fine. Note that services, jobs, etc. are _not_ affected by the workaround, i.e. they can still use arbitrary CPU. Indeed, this speeds up my services, since they now have more CPU left that's not eaten by k3s.
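
To make the numbers in that workaround concrete: CPUQuota is a percentage of one CPU, and together with CPUQuotaPeriodSec it determines how much CPU time the service's cgroup may consume in each enforcement period. A rough sketch of the arithmetic in Python, assuming the usual quota-per-period model (the `cpu_budget_us` helper is hypothetical and only for illustration):

```python
def cpu_budget_us(quota_percent: float, period_ms: float) -> int:
    """CPU time, in microseconds, the cgroup may use in each period."""
    period_us = period_ms * 1000
    return round(period_us * quota_percent / 100)

# Values from the workaround: CPUQuota=30%, CPUQuotaPeriodSec=50ms
print(cpu_budget_us(30, 50))   # 15000 -> 15 ms of CPU time per 50 ms period

# For comparison, the same quota with systemd's default period of 100 ms
print(cpu_budget_us(30, 100))  # 30000 -> 30 ms of CPU time per 100 ms period
```

The long-run cap is 30% of one core in both cases. The shorter period only changes how finely the throttling is applied, and AllowedCPUs=0 additionally pins the service to the first core.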

@bertbesser I have applied this configuration to my k3s nodes and all of them seem usable and _way_ more predictable.

Here is an overview of my CPU usage before and after the change at around 11:00.

This is a reasonably high-powered cluster, but the change seems to improve even that. I also deployed it to some low-power machines that previously just became unresponsive (over SSH) after a while, and they seem to work now.

Good job, I wonder if this would be a good enough pointer to the fact that something _must_ be wrong with k3s?

@bertbesser that is now a quite dated release, have you tried on anything newer?

Keep an eye on the k3s logs if you're going to throttle it and pin it to a single core. If you start seeing messages about datastore latency and request rate-limiting, you've limited it too much.

I am seeing what looks to be normal on my k3s (1.18.6) master (if you count this as normal)

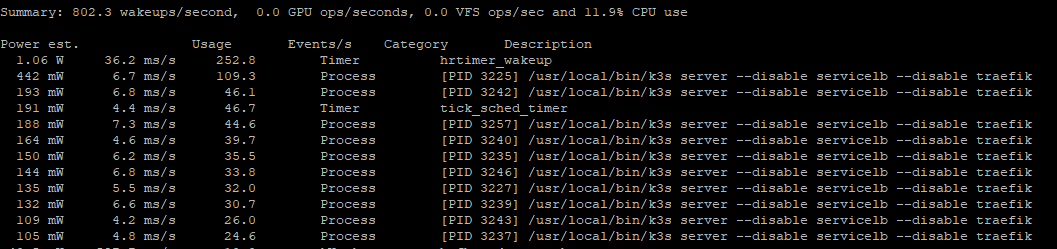

On a clean VM with Ubuntu 20.04.1 LTS installed and the latest k3s version v1.18.8+k3s1 (6b595318) I still see high cpu usage for the k3s-server ranging from 40-130%.

k3s is installed without servicelb and Traefik.

Before installing k3s, I removed lxd/snap and set iptables to use legacy mode.

Running Powertop shows k3s server at the top:

After throttling the k3s service, cpu load is down to approx 25%. Still high for a clean install.

I do not see any change in powertop.

Edit:

I did also test DietPi (Kernel 4.19), but I see no difference.

What I do see, but I'm not sure if I interpret the TOP CPU percentage figures right, is:

- Assigning 4vCPU gives an average k3s-server load of 40%

- Assigning 2vCPU gives an average k3s-server load of 20%

- And yes, a 1vCPU gives 10% CPU load...

But that observation might be a wrong interpretation on my part of the % CPU load displayed in top/htop.

At least I see no change when using a completely different OS and kernel.
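
One possible reading of those figures, assuming top is in its default mode where 100% means one fully busy core: dividing by the number of vCPUs gives the share of the whole machine. A small Python sketch of that normalisation (the `share_of_machine` helper is hypothetical, and the inputs are the three averages reported above):

```python
def share_of_machine(per_core_percent: float, vcpus: int) -> float:
    """Convert a per-core CPU percentage into a percentage of total capacity."""
    return per_core_percent / vcpus

# vCPU count -> average k3s-server load as reported in the list above
observations = {4: 40.0, 2: 20.0, 1: 10.0}

for vcpus, pct in observations.items():
    print(f"{vcpus} vCPU: {pct}% per-core = {share_of_machine(pct, vcpus)}% of the machine")
```

Under that assumption all three measurements come out at the same share of total capacity, which would mean the absolute CPU time used by k3s-server grows with the number of vCPUs assigned.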

What did you do?

How was the cluster created?

k3d cluster create k3d-rupert-test

What did you do afterwards?

- nothing

What did you expect to happen?

I expect CPU usage to be around 5%, but it is around 90%

Screenshots or terminal output

docker stats

CONTAINER ID NAME CPU % MEM USAGE / LIMIT MEM % NET I/O BLOCK I/O PIDS

4756c2db6909 k3d-k3d-rupert-test-serverlb 0.01% 4.172MiB / 5.811GiB 0.07% 978B / 0B 0B / 0B 7

23b86aece4e3 k3d-k3d-rupert-test-server-0 43.21% 552.9MiB / 5.811GiB 9.29% 115MB / 1.19MB 0B / 0B 171

top -o cpu

PID COMMAND %CPU TIME #TH #WQ #PORT MEM PURG CMPRS PGRP PPID STATE BOOSTS %CPU_ME %CPU_OTHRS UID FAULTS COW MSGSENT

1019 com.docker.h 85.4 10:05:16 17/5 1 42 10G 0B 1416M- 960 1005 running *0[1] 0.00000 0.00000 501 4748835+ 478 2907

Which OS & Architecture?

- MacOS 10.14.6 (18G4032)

- x86

Which version of k3d?

k3d version v3.0.1

k3s version latest (default)

Which version of docker?

Client: Docker Engine - Community

Azure integration 0.1.10

Version: 19.03.12

API version: 1.40

Go version: go1.13.10

Git commit: 48a66213fe

Built: Mon Jun 22 15:41:33 2020

OS/Arch: darwin/amd64

Experimental: true

Server: Docker Engine - Community

Engine:

Version: 19.03.12

API version: 1.40 (minimum version 1.12)

Go version: go1.13.10

Git commit: 48a66213fe

Built: Mon Jun 22 15:49:27 2020

OS/Arch: linux/amd64

Experimental: true

containerd:

Version: v1.2.13

GitCommit: 7ad184331fa3e55e52b890ea95e65ba581ae3429

runc:

Version: 1.0.0-rc10

GitCommit: dc9208a3303feef5b3839f4323d9beb36df0a9dd

docker-init:

Version: 0.18.0

GitCommit: fec3683

Copied from: https://github.com/rancher/k3d/issues/352
