Harbor: Harbor Core is failing at quota sync after update to 1.9

If you are reporting a problem, please make sure the following information is provided:

Expected behavior and actual behavior:

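Expected: Harbor is upgraded with `helm upgrade` from 1.8 to the newest version (1.9) and runs smoothly.

Actual: after the upgrade, the harbor-core and harbor-jobservice pods end up in CrashLoopBackOff. Harbor Core exits with a fatal error during quota sync.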

Steps to reproduce the problem:

Run `helm upgrade` with the values updated to 1.9.

Versions:

Please specify the versions of the following systems.

- harbor version: 1.9.0

- Kubernetes version: 1.15.3

Additional context:

Maybe some data in the registry is corrupted? We are using an external Postgres database; everything else is provisioned by Harbor. The database looks fine. The registry is connected to S3 (endpoint `https://obs.eu-de.otc.t-systems.com`, see the values below).

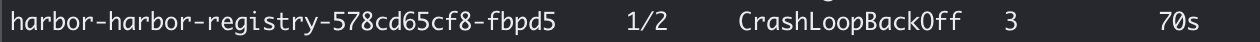

| NAME | READY | STATUS | RESTARTS | AGE |
| --- | --- | --- | --- | --- |
| acid-harbor-cluster-0 | 2/2 | Running | 0 | 111m |
| acid-harbor-cluster-1 | 2/2 | Running | 0 | 117m |
| harbor-harbor-chartmuseum-6644d76bdd-8nq5h | 1/1 | Running | 0 | 32m |
| harbor-harbor-chartmuseum-6644d76bdd-j7jxf | 1/1 | Running | 0 | 31m |
| harbor-harbor-chartmuseum-6644d76bdd-qpbs8 | 1/1 | Running | 0 | 31m |
| harbor-harbor-clair-7bf7d7cb76-gr9st | 1/1 | Running | 0 | 31m |
| harbor-harbor-clair-7bf7d7cb76-zglhm | 1/1 | Running | 0 | 32m |
| harbor-harbor-core-7c75b55f68-97p6c | 0/1 | CrashLoopBackOff | 4 | 3m7s |
| harbor-harbor-jobservice-689b758974-dn277 | 0/1 | CrashLoopBackOff | 13 | 32m |
| harbor-harbor-jobservice-749779f679-mlck2 | 0/1 | CrashLoopBackOff | 13 | 38m |
| harbor-harbor-portal-b97448bc6-6s2n6 | 1/1 | Running | 0 | 32m |
| harbor-harbor-portal-b97448bc6-g8xfc | 1/1 | Running | 0 | 31m |
| harbor-harbor-redis-0 | 1/1 | Running | 0 | 31m |
| harbor-harbor-registry-6c7989df76-5w8rn | 2/2 | Running | 0 | 3m54s |
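To pick the failing pods out of a listing like the one above, a small filter can help. This is only a sketch: it assumes the plain whitespace-separated column layout of `kubectl get pods` (NAME, READY, STATUS, RESTARTS, AGE), and the function name `unhealthy_pods` and the abridged sample are made up for illustration.

```python
# Abridged copy of the pod listing from this report (plain-text column layout assumed).
SAMPLE_PODS = """\
NAME READY STATUS RESTARTS AGE
harbor-harbor-clair-7bf7d7cb76-zglhm 1/1 Running 0 32m
harbor-harbor-core-7c75b55f68-97p6c 0/1 CrashLoopBackOff 4 3m7s
harbor-harbor-jobservice-689b758974-dn277 0/1 CrashLoopBackOff 13 32m
harbor-harbor-registry-6c7989df76-5w8rn 2/2 Running 0 3m54s
"""


def unhealthy_pods(listing):
    """Return (name, status, restarts) for pods that are not Running or not fully ready."""
    result = []
    for line in listing.splitlines():
        fields = line.split()
        # Skip blank lines and the header row.
        if len(fields) < 5 or fields[0] == "NAME":
            continue
        name, ready, status, restarts = fields[0], fields[1], fields[2], fields[3]
        ready_now, ready_want = ready.split("/")
        if status != "Running" or ready_now != ready_want:
            result.append((name, status, int(restarts)))
    return result


if __name__ == "__main__":
    for name, status, restarts in unhealthy_pods(SAMPLE_PODS):
        print(f"{name}: {status} ({restarts} restarts)")
```

On the listing in this report it would flag only the core and jobservice pods; the registry pod itself stays `Running` even though it panics on individual requests.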

# Harbor Core Logs

k logs -f harbor-harbor-core-7c75b55f68-97p6c

2019-09-20T16:57:51Z [INFO] [/replication/adapter/native/adapter.go:44]: the factory for adapter docker-registry registered

2019-09-20T16:57:51Z [INFO] [/replication/adapter/harbor/adapter.go:42]: the factory for adapter harbor registered

2019-09-20T16:57:51Z [INFO] [/replication/adapter/dockerhub/adapter.go:25]: Factory for adapter docker-hub registered

2019-09-20T16:57:51Z [INFO] [/replication/adapter/huawei/huawei_adapter.go:27]: the factory of Huawei adapter was registered

2019-09-20T16:57:51Z [INFO] [/replication/adapter/googlegcr/adapter.go:31]: the factory for adapter google-gcr registered

2019-09-20T16:57:51Z [INFO] [/replication/adapter/awsecr/adapter.go:49]: the factory for adapter aws-ecr registered

2019-09-20T16:57:51Z [INFO] [/replication/adapter/azurecr/adapter.go:15]: Factory for adapter azure-acr registered

2019-09-20T16:57:51Z [INFO] [/replication/adapter/aliacr/adapter.go:28]: the factory for adapter ali-acr registered

2019-09-20T16:57:51Z [INFO] [/replication/adapter/helmhub/adapter.go:31]: the factory for adapter helm-hub registered

2019-09-20T16:57:51Z [INFO] [/core/controllers/base.go:288]: Config path: /etc/core/app.conf

2019-09-20T16:57:51Z [INFO] [/core/main.go:169]: initializing configurations...

2019-09-20T16:57:51Z [INFO] [/core/config/config.go:98]: key path: /etc/core/key

2019-09-20T16:57:51Z [INFO] [/core/config/config.go:71]: init secret store

2019-09-20T16:57:51Z [INFO] [/core/config/config.go:74]: init project manager based on deploy mode

2019-09-20T16:57:51Z [INFO] [/core/config/config.go:143]: initializing the project manager based on local database...

2019-09-20T16:57:51Z [INFO] [/core/main.go:173]: configurations initialization completed

2019-09-20T16:57:51Z [INFO] [/common/dao/base.go:84]: Registering database: type-PostgreSQL host-acid-harbor-cluster port-5432 databse-registry sslmode-"require"

2019-09-20T16:57:51Z [INFO] [/common/dao/base.go:89]: Register database completed

2019-09-20T16:57:51Z [INFO] [/common/dao/pgsql.go:119]: Upgrading schema for pgsql ...

2019-09-20T16:57:51Z [INFO] [/common/dao/pgsql.go:122]: No change in schema, skip.

2019-09-20T16:57:51Z [INFO] [/common/config/encrypt/encrypt.go:60]: the path of key used by key provider: /etc/core/key

2019-09-20T16:57:51Z [INFO] [/core/main.go:80]: User id: 1 already has its encrypted password.

2019-09-20T16:57:51Z [INFO] [/chartserver/cache.go:184]: Enable redis cache for chart caching

2019-09-20T16:57:51Z [INFO] [/chartserver/reverse_proxy.go:58]: New chart server traffic proxy with middlewares

2019-09-20T16:57:51Z [INFO] [/core/api/chart_repository.go:599]: API controller for chart repository server is successfully initialized

2019-09-20T16:57:51Z [INFO] [/common/dao/base.go:64]: initialized clair database

2019-09-20T16:57:51Z [INFO] [/core/main.go:221]: initializing notification...

2019-09-20T16:57:51Z [INFO] [/pkg/notification/notification.go:50]: notification initialization completed

2019-09-20T16:57:51Z [INFO] [/core/main.go:243]: Because SYNC_REGISTRY set false , no need to sync registry

2019-09-20T16:57:51Z [INFO] [/core/main.go:246]: Init proxy

2019-09-20T16:57:51Z [INFO] [/core/main.go:102]: Start to sync quota data .....

2019-09-20T16:57:51Z [INFO] [/core/api/quota/migrator.go:92]: [Quota-Sync]:: start to ping server ... [chart]

2019-09-20T16:57:51Z [INFO] [/core/api/quota/migrator.go:97]: [Quota-Sync]:: success to ping server ... [chart]

2019-09-20T16:57:51Z [INFO] [/core/api/quota/migrator.go:98]: [Quota-Sync]:: start to dump data from server ... [chart]

2019-09-20T16:57:51Z [INFO] [/chartserver/cache.go:184]: Enable redis cache for chart caching

2019-09-20T16:57:51Z [INFO] [/core/api/quota/chart/chart.go:218]: API controller for chart repository server is successfully initialized

2019-09-20T16:57:51Z [INFO] [/core/api/quota/migrator.go:104]: [Quota-Sync]:: success to dump data from server ... [chart]

2019-09-20T16:57:51Z [INFO] [/core/api/quota/migrator.go:111]: [Quota-Sync]:: start to persist data for server ... [chart]

2019-09-20T16:57:51Z [INFO] [/core/api/quota/migrator.go:116]: [Quota-Sync]:: success to persist data for server ... [chart]

2019-09-20T16:57:51Z [INFO] [/core/api/quota/migrator.go:92]: [Quota-Sync]:: start to ping server ... [registry]

2019-09-20T16:57:51Z [INFO] [/core/api/quota/migrator.go:97]: [Quota-Sync]:: success to ping server ... [registry]

2019-09-20T16:57:51Z [INFO] [/core/api/quota/migrator.go:98]: [Quota-Sync]:: start to dump data from server ... [registry]

2019-09-20T16:57:51Z [INFO] [/core/api/quota/migrator.go:101]: [Quota-Sync]:: fail to dump data from server ... [registry], quit sync ...

2019-09-20T16:57:51Z [ERROR] [/core/main.go:104]: Fail to sync quota data, Get http://harbor-harbor-registry:5000/v2/_catalog?n=1000: EOF

2019-09-20T16:57:51Z [FATAL] [/core/main.go:252]: quota migration error, Get http://harbor-harbor-registry:5000/v2/_catalog?n=1000: EOF
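The core log above is mostly INFO noise, so the relevant entries are easy to miss. The following sketch pulls out the ERROR and FATAL lines. The regular expression is an assumption derived only from the line format visible in this log (`timestamp [LEVEL] [source]: message`), and the name `failures` is hypothetical.

```python
import re

# Line format as seen in the log above: "<timestamp> [LEVEL] [<file>:<line>]: <message>"
LOG_LINE = re.compile(
    r"^(?P<ts>\S+) \[(?P<level>[A-Z]+)\] \[(?P<source>[^\]]+)\]: (?P<msg>.*)$"
)

# Four lines copied from the Harbor Core log in this report.
SAMPLE_LOG = """\
2019-09-20T16:57:51Z [INFO] [/core/main.go:102]: Start to sync quota data .....
2019-09-20T16:57:51Z [INFO] [/core/api/quota/migrator.go:101]: [Quota-Sync]:: fail to dump data from server ... [registry], quit sync ...
2019-09-20T16:57:51Z [ERROR] [/core/main.go:104]: Fail to sync quota data, Get http://harbor-harbor-registry:5000/v2/_catalog?n=1000: EOF
2019-09-20T16:57:51Z [FATAL] [/core/main.go:252]: quota migration error, Get http://harbor-harbor-registry:5000/v2/_catalog?n=1000: EOF
"""


def failures(log_text, levels=("ERROR", "FATAL")):
    """Return (level, source, message) for every log line at one of the given levels."""
    hits = []
    for line in log_text.splitlines():
        match = LOG_LINE.match(line.strip())
        if match and match.group("level") in levels:
            hits.append((match.group("level"), match.group("source"), match.group("msg")))
    return hits


if __name__ == "__main__":
    for level, source, msg in failures(SAMPLE_LOG):
        print(level, source, msg)
```

Note that the first sign of trouble (`fail to dump data from server ... [registry]`) is logged at INFO level, so a level filter alone would not show it. Both higher-level entries point at the same request: `GET /v2/_catalog?n=1000` against the registry ends with `EOF`.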

# Harbor registry error logs

k logs -f harbor-harbor-registry-6c7989df76-5w8rn registry

time="2019-09-20T16:53:56.56022601Z" level=info msg="debug server listening localhost:5001"

time="2019-09-20T16:53:56.568447676Z" level=info msg="configuring endpoint harbor (http://harbor-harbor-core/service/notifications), timeout=3s, headers=map[]" go.version=go1.11.8 instance.id=bd9887da-8622-44cf-8304-ed1542bfd8ed service=registry version=v2.7.1

time="2019-09-20T16:53:56.582353389Z" level=info msg="using redis blob descriptor cache" go.version=go1.11.8 instance.id=bd9887da-8622-44cf-8304-ed1542bfd8ed service=registry version=v2.7.1

time="2019-09-20T16:53:56.583192708Z" level=info msg="listening on [::]:5000" go.version=go1.11.8 instance.id=bd9887da-8622-44cf-8304-ed1542bfd8ed service=registry version=v2.7.1

10.15.2.222 - - [20/Sep/2019:16:54:04 +0000] "GET / HTTP/1.1" 200 0 "" "kube-probe/1.15"

10.15.2.222 - - [20/Sep/2019:16:54:06 +0000] "GET / HTTP/1.1" 200 0 "" "kube-probe/1.15"

10.15.2.222 - - [20/Sep/2019:16:54:14 +0000] "GET / HTTP/1.1" 200 0 "" "kube-probe/1.15"

10.15.2.222 - - [20/Sep/2019:16:54:16 +0000] "GET / HTTP/1.1" 200 0 "" "kube-probe/1.15"

10.15.2.222 - - [20/Sep/2019:16:54:24 +0000] "GET / HTTP/1.1" 200 0 "" "kube-probe/1.15"

10.15.2.222 - - [20/Sep/2019:16:54:26 +0000] "GET / HTTP/1.1" 200 0 "" "kube-probe/1.15"

10.15.2.222 - - [20/Sep/2019:16:54:34 +0000] "GET / HTTP/1.1" 200 0 "" "kube-probe/1.15"

10.15.2.222 - - [20/Sep/2019:16:54:36 +0000] "GET / HTTP/1.1" 200 0 "" "kube-probe/1.15"

10.233.76.58 - - [20/Sep/2019:16:54:43 +0000] "GET / HTTP/1.1" 200 0 "" "Go-http-client/1.1"

time="2019-09-20T16:54:44.151486697Z" level=info msg="authorized request" go.version=go1.11.8 http.request.host="harbor-harbor-registry:5000" http.request.id=e9c7b10f-212c-4895-b53b-bb6219cd83bb http.request.method=GET http.request.remoteaddr="10.233.76.58:35516" http.request.uri="/v2/_catalog?n=1000" http.request.useragent="Go-http-client/1.1"

time="2019-09-20T16:54:44.279732063Z" level=panic msg="runtime error: invalid memory address or nil pointer dereference"

2019/09/20 16:54:44 http: panic serving 10.233.76.58:35516: &{0xc00014e050 map[] 2019-09-20 16:54:44.279732063 +0000 UTC m=+47.731409503 panic runtime error: invalid memory address or nil pointer dereference <nil>}

goroutine 39 [running]:

net/http.(*conn).serve.func1(0xc00013cb40)

/usr/local/go/src/net/http/server.go:1746 +0xd0

panic(0xe91b80, 0xc0002940f0)

/usr/local/go/src/runtime/panic.go:513 +0x1b9

github.com/docker/distribution/vendor/github.com/sirupsen/logrus.Entry.log(0xc00014e050, 0xc0000be6f0, 0x0, 0x0, 0x0, 0x0, 0x0, 0x0, 0x0, 0x0, ...)

/go/src/github.com/docker/distribution/vendor/github.com/sirupsen/logrus/entry.go:124 +0x574

github.com/docker/distribution/vendor/github.com/sirupsen/logrus.(*Entry).Panic(0xc0002940a0, 0xc000642948, 0x1, 0x1)

/go/src/github.com/docker/distribution/vendor/github.com/sirupsen/logrus/entry.go:169 +0xb2

github.com/docker/distribution/vendor/github.com/sirupsen/logrus.(*Logger).Panic(0xc00014e050, 0xc000642948, 0x1, 0x1)

/go/src/github.com/docker/distribution/vendor/github.com/sirupsen/logrus/logger.go:236 +0x6d

github.com/docker/distribution/vendor/github.com/sirupsen/logrus.Panic(0xc000642948, 0x1, 0x1)

/go/src/github.com/docker/distribution/vendor/github.com/sirupsen/logrus/exported.go:107 +0x4b

github.com/docker/distribution/registry.panicHandler.func1.1()

/go/src/github.com/docker/distribution/registry/registry.go:345 +0xf9

panic(0xd84d40, 0x170e620)

/usr/local/go/src/runtime/panic.go:513 +0x1b9

github.com/docker/distribution/registry/storage/driver/s3-aws.(*driver).doWalk.func1(0xc000120f80, 0xc000331a01, 0xc00057ee38)

/go/src/github.com/docker/distribution/registry/storage/driver/s3-aws/s3.go:973 +0x9d

github.com/docker/distribution/vendor/github.com/aws/aws-sdk-go/service/s3.(*S3).ListObjectsV2PagesWithContext(0xc00000c6a8, 0x7f080ad73080, 0xc000349b90, 0xc00014f0e0, 0xc000642f38, 0x0, 0x0, 0x0, 0x1, 0x2)

/go/src/github.com/docker/distribution/vendor/github.com/aws/aws-sdk-go/service/s3/api.go:4198 +0x111

github.com/docker/distribution/registry/storage/driver/s3-aws.(*driver).doWalk(0xc000532780, 0xff4d80, 0xc000349b20, 0xc00057eff8, 0xc000490901, 0x20, 0xea98b5, 0x1, 0xc00014f090, 0x0, ...)

/go/src/github.com/docker/distribution/registry/storage/driver/s3-aws/s3.go:971 +0x3a1

github.com/docker/distribution/registry/storage/driver/s3-aws.(*driver).Walk(0xc000532780, 0xff4d80, 0xc000349b20, 0xc00034baa0, 0x20, 0xc00014f090, 0x2, 0x0)

/go/src/github.com/docker/distribution/registry/storage/driver/s3-aws/s3.go:919 +0x160

github.com/docker/distribution/registry/storage/driver/base.(*Base).Walk(0xc0004a5e10, 0xff4d80, 0xc000349b20, 0xc00034baa0, 0x20, 0xc00014f090, 0x0, 0x0)

/go/src/github.com/docker/distribution/registry/storage/driver/base/base.go:239 +0x234

github.com/docker/distribution/registry/storage.(*registry).Repositories(0xc0000fe000, 0xff4f00, 0xc000110540, 0xc00043e000, 0x3e8, 0x3e8, 0x0, 0x0, 0xfe2e28, 0x1, ...)

/go/src/github.com/docker/distribution/registry/storage/catalog.go:30 +0x1fb

github.com/docker/distribution/registry/handlers.(*catalogHandler).GetCatalog(0xc00011c178, 0xff2a40, 0xc000330fc0, 0xc000161a00)

/go/src/github.com/docker/distribution/registry/handlers/catalog.go:48 +0x12a

github.com/docker/distribution/registry/handlers.(*catalogHandler).GetCatalog-fm(0xff2a40, 0xc000330fc0, 0xc000161a00)

/go/src/github.com/docker/distribution/registry/handlers/catalog.go:24 +0x48

net/http.HandlerFunc.ServeHTTP(0xc0001a5f20, 0xff2a40, 0xc000330fc0, 0xc000161a00)

/usr/local/go/src/net/http/server.go:1964 +0x44

github.com/docker/distribution/vendor/github.com/gorilla/handlers.MethodHandler.ServeHTTP(0xc0004209f0, 0xff2a40, 0xc000330fc0, 0xc000161a00)

/go/src/github.com/docker/distribution/vendor/github.com/gorilla/handlers/handlers.go:35 +0x342

github.com/docker/distribution/registry/handlers.(*App).dispatcher.func1(0xff2a40, 0xc000330fc0, 0xc000161400)

/go/src/github.com/docker/distribution/registry/handlers/app.go:726 +0x51f

net/http.HandlerFunc.ServeHTTP(0xc000460fc0, 0xff2a40, 0xc000330fc0, 0xc000161400)

/usr/local/go/src/net/http/server.go:1964 +0x44

github.com/docker/distribution/vendor/github.com/gorilla/mux.(*Router).ServeHTTP(0xc0000928c0, 0xff2a40, 0xc000330fc0, 0xc000161400)

/go/src/github.com/docker/distribution/vendor/github.com/gorilla/mux/mux.go:114 +0xe0

github.com/docker/distribution/registry/handlers.(*App).ServeHTTP(0xc0005168c0, 0x7f080ad72e30, 0xc000113cb0, 0xc000160e00)

/go/src/github.com/docker/distribution/registry/handlers/app.go:640 +0x297

github.com/docker/distribution/registry.alive.func1(0x7f080ad72e30, 0xc000113cb0, 0xc000160e00)

/go/src/github.com/docker/distribution/registry/registry.go:365 +0x6a

net/http.HandlerFunc.ServeHTTP(0xc000112c00, 0x7f080ad72e30, 0xc000113cb0, 0xc000160e00)

/usr/local/go/src/net/http/server.go:1964 +0x44

github.com/docker/distribution/health.Handler.func1(0x7f080ad72e30, 0xc000113cb0, 0xc000160e00)

/go/src/github.com/docker/distribution/health/health.go:271 +0x11a

net/http.HandlerFunc.ServeHTTP(0xc000356f20, 0x7f080ad72e30, 0xc000113cb0, 0xc000160e00)

/usr/local/go/src/net/http/server.go:1964 +0x44

github.com/docker/distribution/registry.panicHandler.func1(0x7f080ad72e30, 0xc000113cb0, 0xc000160e00)

/go/src/github.com/docker/distribution/registry/registry.go:348 +0x81

net/http.HandlerFunc.ServeHTTP(0xc000356f40, 0x7f080ad72e30, 0xc000113cb0, 0xc000160e00)

/usr/local/go/src/net/http/server.go:1964 +0x44

github.com/docker/distribution/vendor/github.com/gorilla/handlers.combinedLoggingHandler.ServeHTTP(0xfeb860, 0xc00000c018, 0xfecfa0, 0xc000356f40, 0xff3e00, 0xc000464540, 0xc000160e00)

/go/src/github.com/docker/distribution/vendor/github.com/gorilla/handlers/handlers.go:75 +0x22f

net/http.serverHandler.ServeHTTP(0xc000080750, 0xff3e00, 0xc000464540, 0xc000160e00)

/usr/local/go/src/net/http/server.go:2741 +0xab

net/http.(*conn).serve(0xc00013cb40, 0xff4a00, 0xc000330e80)

/usr/local/go/src/net/http/server.go:1847 +0x646

created by net/http.(*Server).Serve

/usr/local/go/src/net/http/server.go:2851 +0x2f5

10.15.2.222 - - [20/Sep/2019:16:54:44 +0000] "GET / HTTP/1.1" 200 0 "" "kube-probe/1.15"

10.233.76.58 - - [20/Sep/2019:16:54:45 +0000] "GET / HTTP/1.1" 200 0 "" "Go-http-client/1.1"

time="2019-09-20T16:54:45.918109645Z" level=info msg="authorized request" go.version=go1.11.8 http.request.host="harbor-harbor-registry:5000" http.request.id=098f2bc5-81f0-4688-81a4-ab8f113264ab http.request.method=GET http.request.remoteaddr="10.233.76.58:35564" http.request.uri="/v2/_catalog?n=1000" http.request.useragent="Go-http-client/1.1"

time="2019-09-20T16:54:45.971466422Z" level=panic msg="runtime error: invalid memory address or nil pointer dereference"

2019/09/20 16:54:45 http: panic serving 10.233.76.58:35564: &{0xc00014e050 map[] 2019-09-20 16:54:45.971466422 +0000 UTC m=+49.423143865 panic runtime error: invalid memory address or nil pointer dereference <nil>}

goroutine 66 [running]:

net/http.(*conn).serve.func1(0xc000699040)

/usr/local/go/src/net/http/server.go:1746 +0xd0

panic(0xe91b80, 0xc0000c0500)

/usr/local/go/src/runtime/panic.go:513 +0x1b9

github.com/docker/distribution/vendor/github.com/sirupsen/logrus.Entry.log(0xc00014e050, 0xc0006ea660, 0x0, 0x0, 0x0, 0x0, 0x0, 0x0, 0x0, 0x0, ...)

/go/src/github.com/docker/distribution/vendor/github.com/sirupsen/logrus/entry.go:124 +0x574

github.com/docker/distribution/vendor/github.com/sirupsen/logrus.(*Entry).Panic(0xc0000c04b0, 0xc00074e948, 0x1, 0x1)

/go/src/github.com/docker/distribution/vendor/github.com/sirupsen/logrus/entry.go:169 +0xb2

github.com/docker/distribution/vendor/github.com/sirupsen/logrus.(*Logger).Panic(0xc00014e050, 0xc00074e948, 0x1, 0x1)

/go/src/github.com/docker/distribution/vendor/github.com/sirupsen/logrus/logger.go:236 +0x6d

github.com/docker/distribution/vendor/github.com/sirupsen/logrus.Panic(0xc00074e948, 0x1, 0x1)

/go/src/github.com/docker/distribution/vendor/github.com/sirupsen/logrus/exported.go:107 +0x4b

github.com/docker/distribution/registry.panicHandler.func1.1()

/go/src/github.com/docker/distribution/registry/registry.go:345 +0xf9

panic(0xd84d40, 0x170e620)

/usr/local/go/src/runtime/panic.go:513 +0x1b9

github.com/docker/distribution/registry/storage/driver/s3-aws.(*driver).doWalk.func1(0xc000121280, 0xc00038f201, 0xc000019100)

/go/src/github.com/docker/distribution/registry/storage/driver/s3-aws/s3.go:973 +0x9d

github.com/docker/distribution/vendor/github.com/aws/aws-sdk-go/service/s3.(*S3).ListObjectsV2PagesWithContext(0xc00000c6a8, 0x7f080ad73080, 0xc000248e70, 0xc000294aa0, 0xc00074ef38, 0x0, 0x0, 0x0, 0x1, 0x2)

/go/src/github.com/docker/distribution/vendor/github.com/aws/aws-sdk-go/service/s3/api.go:4198 +0x111

github.com/docker/distribution/registry/storage/driver/s3-aws.(*driver).doWalk(0xc000532780, 0xff4d80, 0xc000248d90, 0xc00074eff8, 0xc0002aa481, 0x20, 0xea98b5, 0x1, 0xc000294a50, 0x0, ...)

/go/src/github.com/docker/distribution/registry/storage/driver/s3-aws/s3.go:971 +0x3a1

github.com/docker/distribution/registry/storage/driver/s3-aws.(*driver).Walk(0xc000532780, 0xff4d80, 0xc000248d90, 0xc0001caca0, 0x20, 0xc000294a50, 0x2, 0x0)

/go/src/github.com/docker/distribution/registry/storage/driver/s3-aws/s3.go:919 +0x160

github.com/docker/distribution/registry/storage/driver/base.(*Base).Walk(0xc0004a5e10, 0xff4d80, 0xc000248d90, 0xc0001caca0, 0x20, 0xc000294a50, 0x0, 0x0)

/go/src/github.com/docker/distribution/registry/storage/driver/base/base.go:239 +0x234

github.com/docker/distribution/registry/storage.(*registry).Repositories(0xc0000fe000, 0xff4f00, 0xc000522ae0, 0xc000484000, 0x3e8, 0x3e8, 0x0, 0x0, 0xfe2e28, 0x1, ...)

/go/src/github.com/docker/distribution/registry/storage/catalog.go:30 +0x1fb

github.com/docker/distribution/registry/handlers.(*catalogHandler).GetCatalog(0xc00000c550, 0xff2a40, 0xc00038e200, 0xc0006e0000)

/go/src/github.com/docker/distribution/registry/handlers/catalog.go:48 +0x12a

github.com/docker/distribution/registry/handlers.(*catalogHandler).GetCatalog-fm(0xff2a40, 0xc00038e200, 0xc0006e0000)

/go/src/github.com/docker/distribution/registry/handlers/catalog.go:24 +0x48

net/http.HandlerFunc.ServeHTTP(0xc000019070, 0xff2a40, 0xc00038e200, 0xc0006e0000)

/usr/local/go/src/net/http/server.go:1964 +0x44

github.com/docker/distribution/vendor/github.com/gorilla/handlers.MethodHandler.ServeHTTP(0xc0000bf9b0, 0xff2a40, 0xc00038e200, 0xc0006e0000)

/go/src/github.com/docker/distribution/vendor/github.com/gorilla/handlers/handlers.go:35 +0x342

github.com/docker/distribution/registry/handlers.(*App).dispatcher.func1(0xff2a40, 0xc00038e200, 0xc0000b1800)

/go/src/github.com/docker/distribution/registry/handlers/app.go:726 +0x51f

net/http.HandlerFunc.ServeHTTP(0xc000460fc0, 0xff2a40, 0xc00038e200, 0xc0000b1800)

/usr/local/go/src/net/http/server.go:1964 +0x44

github.com/docker/distribution/vendor/github.com/gorilla/mux.(*Router).ServeHTTP(0xc0000928c0, 0xff2a40, 0xc00038e200, 0xc0000b1800)

/go/src/github.com/docker/distribution/vendor/github.com/gorilla/mux/mux.go:114 +0xe0

github.com/docker/distribution/registry/handlers.(*App).ServeHTTP(0xc0005168c0, 0x7f080ad72e30, 0xc0000be9c0, 0xc0000b1500)

/go/src/github.com/docker/distribution/registry/handlers/app.go:640 +0x297

github.com/docker/distribution/registry.alive.func1(0x7f080ad72e30, 0xc0000be9c0, 0xc0000b1500)

/go/src/github.com/docker/distribution/registry/registry.go:365 +0x6a

net/http.HandlerFunc.ServeHTTP(0xc000112c00, 0x7f080ad72e30, 0xc0000be9c0, 0xc0000b1500)

/usr/local/go/src/net/http/server.go:1964 +0x44

github.com/docker/distribution/health.Handler.func1(0x7f080ad72e30, 0xc0000be9c0, 0xc0000b1500)

/go/src/github.com/docker/distribution/health/health.go:271 +0x11a

net/http.HandlerFunc.ServeHTTP(0xc000356f20, 0x7f080ad72e30, 0xc0000be9c0, 0xc0000b1500)

/usr/local/go/src/net/http/server.go:1964 +0x44

github.com/docker/distribution/registry.panicHandler.func1(0x7f080ad72e30, 0xc0000be9c0, 0xc0000b1500)

/go/src/github.com/docker/distribution/registry/registry.go:348 +0x81

net/http.HandlerFunc.ServeHTTP(0xc000356f40, 0x7f080ad72e30, 0xc0000be9c0, 0xc0000b1500)

/usr/local/go/src/net/http/server.go:1964 +0x44

github.com/docker/distribution/vendor/github.com/gorilla/handlers.combinedLoggingHandler.ServeHTTP(0xfeb860, 0xc00000c018, 0xfecfa0, 0xc000356f40, 0xff3e00, 0xc0005660e0, 0xc0000b1500)

/go/src/github.com/docker/distribution/vendor/github.com/gorilla/handlers/handlers.go:75 +0x22f

net/http.serverHandler.ServeHTTP(0xc000080750, 0xff3e00, 0xc0005660e0, 0xc0000b1500)

/usr/local/go/src/net/http/server.go:2741 +0xab

net/http.(*conn).serve(0xc000699040, 0xff4a00, 0xc00038e040)

/usr/local/go/src/net/http/server.go:1847 +0x646

created by net/http.(*Server).Serve

/usr/local/go/src/net/http/server.go:2851 +0x2f5

10.15.2.222 - - [20/Sep/2019:16:54:46 +0000] "GET / HTTP/1.1" 200 0 "" "kube-probe/1.15"
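Both traces above contain two `panic(` frames, because the registry's `panicHandler` recovers the original panic and raises it again through logrus. Go prints the most recent frame first, so the frame directly below the last `panic(` entry is where the nil pointer dereference happened. A rough sketch for extracting that frame follows. It assumes the two-lines-per-frame layout shown above (function line, then file location), and `panic_origin` is a made-up helper name.

```python
# Abridged copy of the first trace in this report (logrus frames omitted).
SAMPLE_TRACE = """\
goroutine 39 [running]:
net/http.(*conn).serve.func1(0xc00013cb40)
/usr/local/go/src/net/http/server.go:1746 +0xd0
panic(0xe91b80, 0xc0002940f0)
/usr/local/go/src/runtime/panic.go:513 +0x1b9
github.com/docker/distribution/registry.panicHandler.func1.1()
/go/src/github.com/docker/distribution/registry/registry.go:345 +0xf9
panic(0xd84d40, 0x170e620)
/usr/local/go/src/runtime/panic.go:513 +0x1b9
github.com/docker/distribution/registry/storage/driver/s3-aws.(*driver).doWalk.func1(0xc000120f80, 0xc000331a01, 0xc00057ee38)
/go/src/github.com/docker/distribution/registry/storage/driver/s3-aws/s3.go:973 +0x9d
github.com/docker/distribution/vendor/github.com/aws/aws-sdk-go/service/s3.(*S3).ListObjectsV2PagesWithContext(0xc00000c6a8, 0x7f080ad73080, 0xc000349b90, 0xc00014f0e0, 0xc000642f38, 0x0, 0x0, 0x0, 0x1, 0x2)
/go/src/github.com/docker/distribution/vendor/github.com/aws/aws-sdk-go/service/s3/api.go:4198 +0x111
"""


def panic_origin(trace):
    """Return (function, file:line) of the frame just below the last `panic(` frame, or None."""
    lines = [ln.strip() for ln in trace.splitlines() if ln.strip()]
    last_panic = None
    for i, ln in enumerate(lines):
        if ln.startswith("panic("):
            last_panic = i
    # Need the panic's own location line plus one full frame (two lines) after it.
    if last_panic is None or last_panic + 3 >= len(lines):
        return None
    function = lines[last_panic + 2].rsplit("(", 1)[0]  # drop the argument list
    location = lines[last_panic + 3].split(" +")[0]     # drop the "+0x.." offset
    return function, location


if __name__ == "__main__":
    print(panic_origin(SAMPLE_TRACE))
```

For the traces in this report, the origin is `s3-aws.(*driver).doWalk.func1` at `s3.go:973`, which is the page callback invoked by `ListObjectsV2PagesWithContext` while the catalog handler walks the bucket. That suggests the problem lies in how the S3 driver handles the listing response from the storage endpoint rather than in the database, although the logs alone do not show which value was nil.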

These are the Helm values we used for the installation:

expose:

# Set the way how to expose the service. Set the type as "ingress",

# "clusterIP", "nodePort" or "loadBalancer" and fill the information

# in the corresponding section

type: ingress

tls:

# Enable the tls or not. Note: if the type is "ingress" and the tls

# is disabled, the port must be included in the command when pull/push

# images. Refer to https://github.com/goharbor/harbor/issues/5291

# for the detail.

enabled: true

# Fill the name of secret if you want to use your own TLS certificate.

# The secret contains keys named:

# "tls.crt" - the certificate (required)

# "tls.key" - the private key (required)

# "ca.crt" - the certificate of CA (optional), this enables the download

# link on portal to download the certificate of CA

# These files will be generated automatically if the "secretName" is not set

secretName: "XXXXXXXXXXXXXXX"

# By default, the Notary service will use the same cert and key as

# described above. Fill the name of secret if you want to use a

# separated one. Only needed when the type is "ingress".

notarySecretName: ""

# The common name used to generate the certificate, it's necessary

# when the type isn't "ingress" and "secretName" is null

commonName: ""

ingress:

hosts:

core: XXXXXXXXXXXXXXX

notary: XXXXXXXXXXXXXXX

# set to the type of ingress controller if it has specific requirements.

# leave as `default` for most ingress controllers.

# set to `gce` if using the GCE ingress controller

# set to `ncp` if using the NCP (NSX-T Container Plugin) ingress controller

controller: default

annotations:

ingress.kubernetes.io/ssl-redirect: "true"

ingress.kubernetes.io/proxy-body-size: "0"

nginx.ingress.kubernetes.io/ssl-redirect: "true"

nginx.ingress.kubernetes.io/proxy-body-size: "0"

clusterIP:

# The name of ClusterIP service

name: harbor

ports:

# The service port Harbor listens on when serving with HTTP

httpPort: 80

# The service port Harbor listens on when serving with HTTPS

httpsPort: 443

# The service port Notary listens on. Only needed when notary.enabled

# is set to true

notaryPort: 4443

nodePort:

# The name of NodePort service

name: harbor

ports:

http:

# The service port Harbor listens on when serving with HTTP

port: 80

# The node port Harbor listens on when serving with HTTP

nodePort: 30002

https:

# The service port Harbor listens on when serving with HTTPS

port: 443

# The node port Harbor listens on when serving with HTTPS

nodePort: 30003

# Only needed when notary.enabled is set to true

notary:

# The service port Notary listens on

port: 4443

# The node port Notary listens on

nodePort: 30004

loadBalancer:

# The name of LoadBalancer service

name: harbor

# Set the IP if the LoadBalancer supports assigning IP

IP: ""

ports:

# The service port Harbor listens on when serving with HTTP

httpPort: 80

# The service port Harbor listens on when serving with HTTPS

httpsPort: 443

# The service port Notary listens on. Only needed when notary.enabled

# is set to true

notaryPort: 4443

annotations: {}

sourceRanges: []

# The external URL for Harbor core service. It is used to

# 1) populate the docker/helm commands showed on portal

# 2) populate the token service URL returned to docker/notary client

#

# Format: protocol://domain[:port]. Usually:

# 1) if "expose.type" is "ingress", the "domain" should be

# the value of "expose.ingress.hosts.core"

# 2) if "expose.type" is "clusterIP", the "domain" should be

# the value of "expose.clusterIP.name"

# 3) if "expose.type" is "nodePort", the "domain" should be

# the IP address of k8s node

#

# If Harbor is deployed behind the proxy, set it as the URL of proxy

externalURL: XXXXXXXXXXXXXXX

# The persistence is enabled by default and a default StorageClass

# is needed in the k8s cluster to provision volumes dynamically.

# Specify another StorageClass in the "storageClass" or set "existingClaim"

# if you have already existing persistent volumes to use

#

# For storing images and charts, you can also use "azure", "gcs", "s3",

# "swift" or "oss". Set it in the "imageChartStorage" section

persistence:

enabled: true

# Setting it to "keep" to avoid removing PVCs during a helm delete

# operation. Leaving it empty will delete PVCs after the chart deleted

resourcePolicy: "keep"

persistentVolumeClaim:

registry:

# Use the existing PVC which must be created manually before bound,

# and specify the "subPath" if the PVC is shared with other components

existingClaim: ""

# Specify the "storageClass" used to provision the volume. Or the default

# StorageClass will be used(the default).

# Set it to "-" to disable dynamic provisioning

storageClass: "standard"

subPath: ""

accessMode: ReadWriteOnce

size: 500Gi

chartmuseum:

existingClaim: ""

storageClass: "sas"

subPath: ""

accessMode: ReadWriteOnce

size: 100Gi

jobservice:

existingClaim: ""

storageClass: ""

subPath: ""

accessMode: ReadWriteOnce

size: 50Gi

# If external database is used, the following settings for database will

# be ignored

database:

existingClaim: ""

storageClass: ""

subPath: ""

accessMode: ReadWriteOnce

size: 1Gi

# If external Redis is used, the following settings for Redis will

# be ignored

redis:

existingClaim: ""

storageClass: "ssd"

subPath: ""

accessMode: ReadWriteOnce

size: 20Gi

# Define which storage backend is used for registry and chartmuseum to store

# images and charts. Refer to

# https://github.com/docker/distribution/blob/master/docs/configuration.md#storage

# for the detail.

imageChartStorage:

# Specify whether to disable `redirect` for images and chart storage. For

# backends that do not support it (such as minio with the `s3` storage type), please disable

# it. To disable redirects, simply set `disableredirect` to `true` instead.

# Refer to

# https://github.com/docker/distribution/blob/master/docs/configuration.md#redirect

# for the detail.

disableredirect: false

# Specify the "caBundleSecretName" if the storage service uses a self-signed certificate.

# The secret must contain keys named "ca.crt" which will be injected into the trust store

# of registry's and chartmuseum's containers.

# caBundleSecretName:

# Specify the type of storage: "filesystem", "azure", "gcs", "s3", "swift",

# "oss" and fill the information needed in the corresponding section. The type

# must be "filesystem" if you want to use persistent volumes for registry

# and chartmuseum

type: s3

filesystem:

rootdirectory: /storage

#maxthreads: 100

azure:

accountname: accountname

accountkey: base64encodedaccountkey

container: containername

#realm: core.windows.net

gcs:

bucket: bucketname

# The base64 encoded json file which contains the key

encodedkey: base64-encoded-json-key-file

#rootdirectory: /gcs/object/name/prefix

#chunksize: "5242880"

s3:

region: eu-de

bucket: XXXXXXXXXXXXXXX

accesskey: XXXXXXXXXXXXXXX

secretkey: XXXXXXXXXXXXXXX

regionendpoint: https://obs.eu-de.otc.t-systems.com

encrypt: false

#keyid: mykeyid

secure: true

v4auth: true

chunksize: "5242880"

#rootdirectory: /s3/object/name/prefix

#storageclass: STANDARD

swift:

authurl: https://storage.myprovider.com/v3/auth

username: username

password: password

container: containername

#region: fr

#tenant: tenantname

#tenantid: tenantid

#domain: domainname

#domainid: domainid

#trustid: trustid

#insecureskipverify: false

#chunksize: 5M

#prefix:

#secretkey: secretkey

#accesskey: accesskey

#authversion: 3

#endpointtype: public

#tempurlcontainerkey: false

#tempurlmethods:

oss:

accesskeyid: accesskeyid

accesskeysecret: accesskeysecret

region: regionname

bucket: bucketname

#endpoint: endpoint

#internal: false

#encrypt: false

#secure: true

#chunksize: 10M

#rootdirectory: rootdirectory

imagePullPolicy: IfNotPresent

# Use this set to assign a list of default pullSecrets

imagePullSecrets:

# - name: docker-registry-secret

# - name: internal-registry-secret

logLevel: info

# The initial password of Harbor admin. Change it from portal after launching Harbor

harborAdminPassword: "XXXXXXXXXXXXXXX"

# The secret key used for encryption. Must be a string of 16 chars.

secretKey: "XXXXXXXXXXXXXXX"

# The proxy settings for updating clair vulnerabilities from the Internet and replicating

# artifacts from/to the registries that cannot be reached directly

proxy:

httpProxy:

httpsProxy:

noProxy: 127.0.0.1,localhost,.local,.internal

components:

- core

- jobservice

- clair

## UAA Authentication Options

# If you're using UAA for authentication behind a self-signed

# certificate you will need to provide the CA Cert.

# Set uaaSecretName below to provide a pre-created secret that

# contains a base64 encoded CA Certificate named `ca.crt`.

# uaaSecretName:

# If expose the service via "ingress", the Nginx will not be used

nginx:

image:

repository: goharbor/nginx-photon

tag: v1.9.0

replicas: 1

# resources:

# requests:

# memory: 256Mi

# cpu: 100m

nodeSelector: {}

tolerations: []

affinity: {}

## Additional deployment annotations

podAnnotations: {}

portal:

image:

repository: goharbor/harbor-portal

tag: v1.9.0

replicas: 2

resources:

requests:

memory: 256Mi

cpu: 100m

nodeSelector: {}

tolerations: []

affinity: {}

## Additional deployment annotations

podAnnotations: {}

core:

image:

repository: goharbor/harbor-core

tag: v1.9.0

replicas: 3

resources:

requests:

memory: 256Mi

cpu: 100m

nodeSelector: {}

tolerations: []

affinity: {}

## Additional deployment annotations

podAnnotations: {}

# Secret is used when core server communicates with other components.

# If a secret key is not specified, Helm will generate one.

# Must be a string of 16 chars.

secret: ""

# Fill the name of a kubernetes secret if you want to use your own

# TLS certificate and private key for token encryption/decryption.

# The secret must contain keys named:

# "tls.crt" - the certificate

# "tls.key" - the private key

# The default key pair will be used if it isn't set

secretName: ""

jobservice:

image:

repository: goharbor/harbor-jobservice

tag: v1.9.0

replicas: 1

maxJobWorkers: 10

# The logger for jobs: "file", "database" or "stdout"

jobLogger: database

resources:

requests:

memory: 256Mi

cpu: 100m

nodeSelector: {}

tolerations: []

affinity: {}

## Additional deployment annotations

podAnnotations: {}

# Secret is used when job service communicates with other components.

# If a secret key is not specified, Helm will generate one.

# Must be a string of 16 chars.

secret: ""

registry:

registry:

image:

repository: goharbor/registry-photon

tag: v2.7.1-patch-2819-v1.9.0

resources:

requests:

memory: 256Mi

cpu: 100m

controller:

image:

repository: goharbor/harbor-registryctl

tag: v1.9.0

resources:

requests:

memory: 256Mi

cpu: 100m

replicas: 3

nodeSelector: {}

tolerations: []

affinity: {}

## Additional deployment annotations

podAnnotations: {}

# Secret is used to secure the upload state from client

# and registry storage backend.

# See: https://github.com/docker/distribution/blob/master/docs/configuration.md#http

# If a secret key is not specified, Helm will generate one.

# Must be a string of 16 chars.

secret: ""

# If true, the registry returns relative URLs in Location headers. The client is responsible for resolving the correct URL.

relativeurls: false

middleware:

enabled: false

type: cloudFront

cloudFront:

baseurl: example.cloudfront.net

keypairid: KEYPAIRID

duration: 3000s

ipfilteredby: none

# The secret key that should be present is CLOUDFRONT_KEY_DATA, which should be the encoded private key

# that allows access to CloudFront

privateKeySecret: "my-secret"

chartmuseum:

enabled: true

# Harbor defaults ChartMuseum to returning relative urls, if you want using absolute url you should enable it by change the following value to 'true'

absoluteUrl: false

image:

repository: goharbor/chartmuseum-photon

tag: v0.9.0-v1.9.0

replicas: 3

resources:

requests:

memory: 256Mi

cpu: 100m

nodeSelector: {}

tolerations: []

affinity: {}

## Additional deployment annotations

podAnnotations: {}

clair:

enabled: true

image:

repository: goharbor/clair-photon

tag: v2.0.9-v1.9.0

replicas: 2

# The http(s) proxy used to update vulnerabilities database from internet

httpProxy:

httpsProxy:

# The interval of clair updaters, the unit is hour, set to 0 to

# disable the updaters

updatersInterval: 12

# resources:

# requests:

# memory: 256Mi

# cpu: 100m

nodeSelector: {}

tolerations: []

affinity: {}

## Additional deployment annotations

podAnnotations: {}

notary:

enabled: false

server:

image:

repository: goharbor/notary-server-photon

tag: v0.6.1-v1.9.0

replicas: 1

# resources:

# requests:

# memory: 256Mi

# cpu: 100m

signer:

image:

repository: goharbor/notary-signer-photon

tag: v0.6.1-v1.9.0

replicas: 1

# resources:

# requests:

# memory: 256Mi

# cpu: 100m

nodeSelector: {}

tolerations: []

affinity: {}

## Additional deployment annotations

podAnnotations: {}

# Fill the name of a kubernetes secret if you want to use your own

# TLS certificate authority, certificate and private key for notary

# communications.

# The secret must contain keys named ca.crt, tls.crt and tls.key that

# contain the CA, certificate and private key.

# They will be generated if not set.

secretName: ""

database:

# if external database is used, set "type" to "external"

# and fill the connection informations in "external" section

type: external

internal:

image:

repository: goharbor/harbor-db

tag: v1.9.0

# The initial superuser password for internal database

password: "changeit"

# resources:

# requests:

# memory: 256Mi

# cpu: 100m

nodeSelector: {}

tolerations: []

affinity: {}

external:

host: "acid-harbor-cluster"

port: "5432"

username: "harbor"

password: "XXXXXXXXXXXXXXX"

coreDatabase: "registry"

clairDatabase: "clair"

notaryServerDatabase: "notary_server"

notarySignerDatabase: "notary_signer"

# "disable" - No SSL

# "require" - Always SSL (skip verification)

# "verify-ca" - Always SSL (verify that the certificate presented by the

# server was signed by a trusted CA)

# "verify-full" - Always SSL (verify that the certification presented by the

# server was signed by a trusted CA and the server host name matches the one

# in the certificate)

sslmode: "require"

# The maximum number of connections in the idle connection pool.

# If it <=0, no idle connections are retained.

maxIdleConns: 50

# The maximum number of open connections to the database.

# If it <= 0, then there is no limit on the number of open connections.

# Note: the default number of connections is 100 for postgre.

maxOpenConns: 100

## Additional deployment annotations

podAnnotations: {}

redis:

# if external Redis is used, set "type" to "external"

# and fill the connection informations in "external" section

type: internal

internal:

image:

repository: goharbor/redis-photon

tag: v1.9.0

resources:

requests:

memory: 256Mi

cpu: 100m

nodeSelector: {}

tolerations: []

affinity: {}

external:

host: "192.168.0.2"

port: "6379"

# The "coreDatabaseIndex" must be "0" as the library Harbor

# used doesn't support configuring it

coreDatabaseIndex: "0"

jobserviceDatabaseIndex: "1"

registryDatabaseIndex: "2"

chartmuseumDatabaseIndex: "3"

password: ""

## Additional deployment annotations

podAnnotations: {}

All 20 comments

Running this statement on the database can solve this problem:

truncate table project_blob;

Whenever harbor-core v1.9.0 is restarted, it always runs the quota migration. This reproduces 100% of the time.

Sep 21 02:05:47 bogon core[32240]: 2019-09-20T18:05:47Z [INFO] [/core/main.go:102]: Start to sync quota data .....

Sep 21 02:05:47 bogon core[32240]: 2019-09-20T18:05:47Z [INFO] [/core/api/quota/migrator.go:92]: [Quota-Sync]:: start to ping server ... [registry]

Sep 21 02:05:47 bogon core[32240]: 2019-09-20T18:05:47Z [INFO] [/core/api/quota/migrator.go:97]: [Quota-Sync]:: success to ping server ... [registry]

Sep 21 02:05:47 bogon core[32240]: 2019-09-20T18:05:47Z [INFO] [/core/api/quota/migrator.go:98]: [Quota-Sync]:: start to dump data from server ... [registry]

Sep 21 02:06:32 bogon core[32240]: 2019-09-20T18:06:32Z [INFO] [/core/api/quota/migrator.go:104]: [Quota-Sync]:: success to dump data from server ... [registry]

Sep 21 02:06:32 bogon core[32240]: 2019-09-20T18:06:32Z [INFO] [/core/api/quota/migrator.go:111]: [Quota-Sync]:: start to persist data for server ... [registry]

..skip..

..skip..

..skip..

Sep 21 02:03:20 bogon core[32240]: 2019-09-20T18:03:20Z [INFO] [/core/api/quota/registry/registry.go:189]: [Quota-Sync]:: start to persist artifact&blob for project: <project_name__x>, progress... [28/29]

Sep 21 02:03:50 bogon core[32240]: 2019-09-20T18:03:50Z [INFO] [/core/api/quota/registry/registry.go:201]: [Quota-Sync]:: success to persist artifact&blob for project: <project_name__y>, progress... [28/29]

Sep 21 02:03:50 bogon core[32240]: 2019-09-20T18:03:50Z [INFO] [/core/api/quota/registry/registry.go:260]: [Quota-Sync]:: start to persist project&blob for project: <project_name__z>, progress... [0/29]

Sep 21 02:03:50 bogon core[32240]: 2019-09-20T18:03:50Z [ERROR] [/core/api/quota/registry/registry.go:287]: sql: duplicate row in DB

Sep 21 02:03:50 bogon core[32240]: 2019-09-20T18:03:50Z [INFO] [/core/api/quota/migrator.go:113]: [Quota-Sync]:: fail to persist data from server ... [registry], quit sync ...

Sep 21 02:03:50 bogon core[32240]: 2019-09-20T18:03:50Z [ERROR] [/core/main.go:104]: Fail to sync quota data, sql: duplicate row in DB

Sep 21 02:03:50 bogon core[32240]: 2019-09-20T18:03:50Z [FATAL] [/core/main.go:252]: quota migration error, sql: duplicate row in DB
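A minimal sketch of one plausible reading of the log above: the persist step is re-run against rows left behind by an earlier run, and emptying the table lets it proceed. It uses an in-memory SQLite table as a stand-in. The real table lives in Harbor's PostgreSQL database, and the column names and unique constraint here are assumptions for illustration, not Harbor's actual schema. SQLite has no TRUNCATE, so DELETE stands in for it.

```python
import sqlite3

# Stand-in for Harbor's project_blob table. The columns and the unique
# constraint are assumptions made for this illustration only.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE project_blob ("
    " project_id INTEGER NOT NULL,"
    " blob_id INTEGER NOT NULL,"
    " UNIQUE (project_id, blob_id))"
)


def persist(rows):
    """Insert project/blob pairs in one transaction, as a migration step might."""
    with conn:  # commits on success, rolls back on error
        conn.executemany(
            "INSERT INTO project_blob (project_id, blob_id) VALUES (?, ?)", rows
        )


rows = [(1, 10), (1, 11), (2, 10)]

persist(rows)  # first run succeeds

try:
    persist(rows)  # second run collides with the rows left by the first
    second_run = "ok"
except sqlite3.IntegrityError:
    second_run = "duplicate"

# SQLite equivalent of "truncate table project_blob;"
with conn:
    conn.execute("DELETE FROM project_blob")

persist(rows)  # succeeds again once the table is empty
row_count = conn.execute("SELECT COUNT(*) FROM project_blob").fetchone()[0]
```

Emptying the table discards whatever the earlier run persisted, so it is a workaround that relies on the migration repopulating the data on the next start.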

@tangx Harbor only migrates quota data when upgrading.

The issue you mentioned is not the same one. It seems that you did not wait for all of the containers to be ready, killed them, and then restarted Harbor?

If you still encounter the issue you described, please file a separate issue. Thanks.

@mkjoerg the root cause of your issue is that the registry crashes when the catalog API is called. You can see the error in registry.log.

Harbor has to call the catalog API to dump data from the registry for the quota migration.

@ywk253100 any hint?
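For readers unfamiliar with the catalog API mentioned above, here is a rough sketch of how a client can page through `GET /v2/_catalog` of the Docker Registry HTTP API V2 by following the `Link: <...>; rel="next"` header. This is not Harbor's code (Harbor is written in Go); `http_fetch`, `list_repositories` and `fake_fetch` are hypothetical names, and authentication is left out entirely.

```python
import json
import re
import urllib.request

_NEXT_LINK = re.compile(r'<([^>]+)>\s*;\s*rel="next"')


def http_fetch(base_url):
    """Return a fetch(path) function backed by urllib (no auth handling)."""
    def fetch(path):
        with urllib.request.urlopen(base_url + path) as resp:
            return json.loads(resp.read()), resp.headers.get("Link")
    return fetch


def list_repositories(fetch, page_size=100):
    """Collect every repository name by following the catalog's "next" links."""
    repos = []
    path = f"/v2/_catalog?n={page_size}"
    while path:
        body, link = fetch(path)
        repos.extend(body.get("repositories") or [])
        match = _NEXT_LINK.search(link or "")
        path = match.group(1) if match else None
    return repos


# Offline demonstration with a fake two-page registry.
def fake_fetch(path):
    pages = {
        "/v2/_catalog?n=2": (
            {"repositories": ["library/a", "library/b"]},
            '</v2/_catalog?last=library%2Fb&n=2>; rel="next"',
        ),
        "/v2/_catalog?last=library%2Fb&n=2": (
            {"repositories": ["library/c"]},
            None,
        ),
    }
    return pages[path]


all_repos = list_repositories(fake_fetch, page_size=2)
```

Against a live registry the call would look like `list_repositories(http_fetch("http://harbor-harbor-registry:5000"))`, assuming the endpoint is reachable without a token. With an S3-backed registry each page requires the registry to walk the storage backend, which is where the crash discussed in this thread occurs.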

@wy65701436 Yes, this is exactly what I see in the logs too. Whenever Harbor tries to make that call, the registry crashes and prints this error log.

We use an OpenStack cloud operated by Telekom and its S3 storage interface. It behaves almost the same as S3, so I have never had any big issues. Could it be that something inside the registry storage is corrupted?

I got the same problem when upgrading to 1.9.

It's a bug in Docker registry, https://github.com/docker/distribution/issues/2553, and it has been fixed by PR https://github.com/docker/distribution/pull/2879, but the fix isn't included in any release yet.

@wy65701436 Maybe we should consider patching the registry ourselves?

@reasonerjt your thoughts? Do we need to patch in the fix?

Hi @mkjoerg, @dungdm93, I do not have an environment to reproduce your issue, but I have built a patched image that contains the fix mentioned by @ywk253100 above.

Could you help verify it in your environment? Just pull the image goharbor/registry-photon:v2.7.1-debug-9186-dev

Thanks
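One way to point the registry container at the patched image is a strategic merge patch on the Deployment. The sketch below only builds the patch document. The container name `registry` is taken from the manifests posted in this thread, and `image_patch` is a hypothetical helper, not part of any Harbor or Kubernetes tooling.

```python
import json


def image_patch(container_name, image):
    """Build a strategic merge patch that changes one container's image.

    Containers in a pod template are merged by name, so only the named
    container is affected and the other container is left untouched.
    """
    return {
        "spec": {
            "template": {
                "spec": {
                    "containers": [{"name": container_name, "image": image}]
                }
            }
        }
    }


patch = image_patch(
    "registry", "goharbor/registry-photon:v2.7.1-debug-9186-dev"
)
patch_json = json.dumps(patch)
print(patch_json)
```

The printed JSON could then be passed to `kubectl patch deployment harbor-harbor-registry --patch '<json>'` in the namespace where Harbor is installed. Copying the full tag, including the `-dev` suffix, matters here, because a different tag refers to a different image.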

@wy65701436 I have patched the deployment, and now the registry just crashes without any log output.

apiVersion: extensions/v1beta1

kind: Deployment

metadata:

annotations:

deployment.kubernetes.io/revision: "15"

creationTimestamp: "2019-07-16T08:00:06Z"

generation: 16

labels:

app: harbor

chart: harbor

component: registry

heritage: Tiller

release: harbor

name: harbor-harbor-registry

namespace: tools-registry

resourceVersion: "32775840"

selfLink: /apis/extensions/v1beta1/namespaces/tools-registry/deployments/harbor-harbor-registry

uid: b93e2416-a79f-11e9-ad3f-fa163ec1f2bf

spec:

progressDeadlineSeconds: 600

replicas: 1

revisionHistoryLimit: 10

selector:

matchLabels:

app: harbor

component: registry

release: harbor

strategy:

rollingUpdate:

maxSurge: 25%

maxUnavailable: 25%

type: RollingUpdate

template:

metadata:

annotations:

checksum/configmap: f4bb879798beea8cb402f46974294b6bb9fc9e964df40cffa298a5d5e221f746

checksum/secret: 1119df6478e4912d63fd6cb41d57db8bf364b667e3816ebeb542865f28a76ccb

checksum/secret-core: 69033afada50c8391d26c6ccf965b09a73b0247d9fa4b4229942601294411ea9

checksum/secret-jobservice: c8a07e9e458a8a27042a5a593c19152dff1bf11d6dc961eaa1357ea6c9116c77

creationTimestamp: null

labels:

app: harbor

chart: harbor

component: registry

heritage: Tiller

release: harbor

spec:

containers:

- args:

- serve

- /etc/registry/config.yml

envFrom:

- secretRef:

name: harbor-harbor-registry

image: goharbor/registry-photon:v2.7.1-debug-9186

imagePullPolicy: IfNotPresent

livenessProbe:

failureThreshold: 3

httpGet:

path: /

port: 5000

scheme: HTTP

initialDelaySeconds: 1

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

name: registry

ports:

- containerPort: 5000

protocol: TCP

- containerPort: 5001

protocol: TCP

readinessProbe:

failureThreshold: 3

httpGet:

path: /

port: 5000

scheme: HTTP

initialDelaySeconds: 1

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

resources:

requests:

cpu: 100m

memory: 256Mi

terminationMessagePath: /dev/termination-log

terminationMessagePolicy: File

volumeMounts:

- mountPath: /storage

name: registry-data

- mountPath: /etc/registry/root.crt

name: registry-root-certificate

subPath: tls.crt

- mountPath: /etc/registry/config.yml

name: registry-config

subPath: config.yml

- args:

- serve

- /etc/registry/config.yml

env:

- name: CORE_SECRET

valueFrom:

secretKeyRef:

key: secret

name: harbor-harbor-core

- name: JOBSERVICE_SECRET

valueFrom:

secretKeyRef:

key: secret

name: harbor-harbor-jobservice

envFrom:

- secretRef:

name: harbor-harbor-registry

image: goharbor/harbor-registryctl:v1.9.0

imagePullPolicy: IfNotPresent

livenessProbe:

failureThreshold: 3

httpGet:

path: /api/health

port: 8080

scheme: HTTP

initialDelaySeconds: 1

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

name: registryctl

ports:

- containerPort: 8080

protocol: TCP

readinessProbe:

failureThreshold: 3

httpGet:

path: /api/health

port: 8080

scheme: HTTP

initialDelaySeconds: 1

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

resources:

requests:

cpu: 100m

memory: 256Mi

terminationMessagePath: /dev/termination-log

terminationMessagePolicy: File

volumeMounts:

- mountPath: /storage

name: registry-data

- mountPath: /etc/registry/config.yml

name: registry-config

subPath: config.yml

- mountPath: /etc/registryctl/config.yml

name: registry-config

subPath: ctl-config.yml

dnsPolicy: ClusterFirst

restartPolicy: Always

schedulerName: default-scheduler

securityContext: {}

terminationGracePeriodSeconds: 30

volumes:

- name: registry-root-certificate

secret:

defaultMode: 420

secretName: harbor-harbor-core

- configMap:

defaultMode: 420

name: harbor-harbor-registry

name: registry-config

- emptyDir: {}

name: registry-data

status:

conditions:

- lastTransitionTime: "2019-09-23T06:36:55Z"

lastUpdateTime: "2019-09-23T06:36:55Z"

message: Deployment does not have minimum availability.

reason: MinimumReplicasUnavailable

status: "False"

type: Available

- lastTransitionTime: "2019-07-16T08:00:06Z"

lastUpdateTime: "2019-09-23T06:37:41Z"

message: ReplicaSet "harbor-harbor-registry-578cd65cf8" is progressing.

reason: ReplicaSetUpdated

status: "True"

type: Progressing

observedGeneration: 16

replicas: 1

unavailableReplicas: 1

updatedReplicas: 1

It is also not logging:

Is there a way to increase the log output?

The events do not show anything suspicious; the pod just crashes immediately.

what's the output of core?

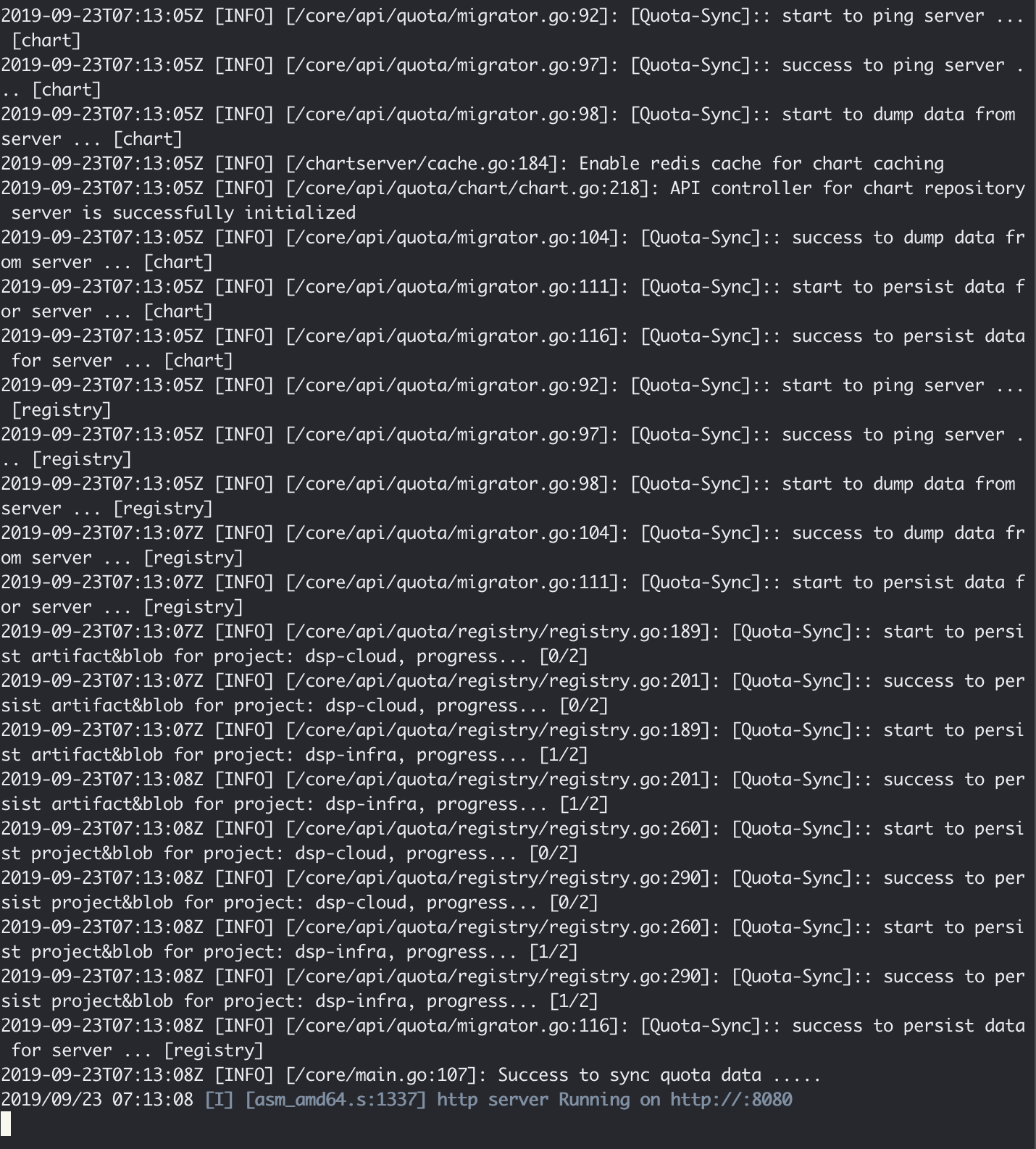

@wy65701436 The output of core is

k logs -f harbor-harbor-core-7996fd6568-88r5q

2019-09-23T06:44:24Z [INFO] [/replication/adapter/native/adapter.go:44]: the factory for adapter docker-registry registered

2019-09-23T06:44:24Z [INFO] [/replication/adapter/harbor/adapter.go:42]: the factory for adapter harbor registered

2019-09-23T06:44:24Z [INFO] [/replication/adapter/dockerhub/adapter.go:25]: Factory for adapter docker-hub registered

2019-09-23T06:44:24Z [INFO] [/replication/adapter/huawei/huawei_adapter.go:27]: the factory of Huawei adapter was registered

2019-09-23T06:44:24Z [INFO] [/replication/adapter/googlegcr/adapter.go:31]: the factory for adapter google-gcr registered

2019-09-23T06:44:24Z [INFO] [/replication/adapter/awsecr/adapter.go:49]: the factory for adapter aws-ecr registered

2019-09-23T06:44:24Z [INFO] [/replication/adapter/azurecr/adapter.go:15]: Factory for adapter azure-acr registered

2019-09-23T06:44:24Z [INFO] [/replication/adapter/aliacr/adapter.go:28]: the factory for adapter ali-acr registered

2019-09-23T06:44:24Z [INFO] [/replication/adapter/helmhub/adapter.go:31]: the factory for adapter helm-hub registered

2019-09-23T06:44:24Z [INFO] [/core/controllers/base.go:288]: Config path: /etc/core/app.conf

2019-09-23T06:44:24Z [INFO] [/core/main.go:169]: initializing configurations...

2019-09-23T06:44:24Z [INFO] [/core/config/config.go:98]: key path: /etc/core/key

2019-09-23T06:44:24Z [INFO] [/core/config/config.go:71]: init secret store

2019-09-23T06:44:24Z [INFO] [/core/config/config.go:74]: init project manager based on deploy mode

2019-09-23T06:44:24Z [INFO] [/core/config/config.go:143]: initializing the project manager based on local database...

2019-09-23T06:44:24Z [INFO] [/core/main.go:173]: configurations initialization completed

2019-09-23T06:44:24Z [INFO] [/common/dao/base.go:84]: Registering database: type-PostgreSQL host-acid-harbor-cluster port-5432 databse-registry sslmode-"require"

2019-09-23T06:44:24Z [INFO] [/common/dao/base.go:89]: Register database completed

2019-09-23T06:44:24Z [INFO] [/common/dao/pgsql.go:119]: Upgrading schema for pgsql ...

2019-09-23T06:44:24Z [INFO] [/common/dao/pgsql.go:122]: No change in schema, skip.

2019-09-23T06:44:24Z [INFO] [/common/config/encrypt/encrypt.go:60]: the path of key used by key provider: /etc/core/key

2019-09-23T06:44:24Z [INFO] [/core/main.go:80]: User id: 1 already has its encrypted password.

2019-09-23T06:44:24Z [INFO] [/chartserver/cache.go:184]: Enable redis cache for chart caching

2019-09-23T06:44:24Z [INFO] [/chartserver/reverse_proxy.go:58]: New chart server traffic proxy with middlewares

2019-09-23T06:44:24Z [INFO] [/core/api/chart_repository.go:599]: API controller for chart repository server is successfully initialized

2019-09-23T06:44:24Z [INFO] [/common/dao/base.go:64]: initialized clair database

2019-09-23T06:44:24Z [INFO] [/core/main.go:221]: initializing notification...

2019-09-23T06:44:24Z [INFO] [/pkg/notification/notification.go:50]: notification initialization completed

2019-09-23T06:44:24Z [INFO] [/core/main.go:243]: Because SYNC_REGISTRY set false , no need to sync registry

2019-09-23T06:44:24Z [INFO] [/core/main.go:246]: Init proxy

2019-09-23T06:44:24Z [INFO] [/core/main.go:102]: Start to sync quota data .....

2019-09-23T06:44:24Z [INFO] [/core/api/quota/migrator.go:92]: [Quota-Sync]:: start to ping server ... [chart]

2019-09-23T06:44:24Z [INFO] [/core/api/quota/migrator.go:97]: [Quota-Sync]:: success to ping server ... [chart]

2019-09-23T06:44:24Z [INFO] [/core/api/quota/migrator.go:98]: [Quota-Sync]:: start to dump data from server ... [chart]

2019-09-23T06:44:24Z [INFO] [/chartserver/cache.go:184]: Enable redis cache for chart caching

2019-09-23T06:44:24Z [INFO] [/core/api/quota/chart/chart.go:218]: API controller for chart repository server is successfully initialized

2019-09-23T06:44:24Z [INFO] [/core/api/quota/migrator.go:104]: [Quota-Sync]:: success to dump data from server ... [chart]

2019-09-23T06:44:24Z [INFO] [/core/api/quota/migrator.go:111]: [Quota-Sync]:: start to persist data for server ... [chart]

2019-09-23T06:44:24Z [INFO] [/core/api/quota/migrator.go:116]: [Quota-Sync]:: success to persist data for server ... [chart]

2019-09-23T06:44:24Z [INFO] [/core/api/quota/migrator.go:92]: [Quota-Sync]:: start to ping server ... [registry]

2019-09-23T06:44:42Z [INFO] [/core/api/quota/migrator.go:94]: [Quota-Sync]:: fail to ping server ... [registry], quit sync ...

2019-09-23T06:44:42Z [ERROR] [/core/main.go:104]: Fail to sync quota data, failed to check health: Get http://harbor-harbor-registry:5000/: dial tcp 10.233.9.51:5000: connect: connection refused

2019-09-23T06:44:42Z [FATAL] [/core/main.go:252]: quota migration error, failed to check health: Get http://harbor-harbor-registry:5000/: dial tcp 10.233.9.51:5000: connect: connection refused
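When triaging long core logs like the one above, it can help to pull out only the ERROR and FATAL entries. The following is a small sketch that assumes the line layout visible in this thread (`<timestamp> [LEVEL] [<source>]: <message>`); `failures` is a hypothetical helper, and the sample lines are copied from the log above.

```python
import re

# Matches the layout seen in this thread; a syslog prefix before the
# timestamp is tolerated because the pattern is searched, not anchored.
LOG_LINE = re.compile(
    r"(?P<time>\d{4}-\d{2}-\d{2}T\S+) "
    r"\[(?P<level>[A-Z]+)\] "
    r"\[(?P<source>[^\]]+)\]: "
    r"(?P<message>.*)"
)


def failures(lines):
    """Yield (time, level, source, message) for ERROR and FATAL lines."""
    for line in lines:
        m = LOG_LINE.search(line.strip())
        if m and m.group("level") in ("ERROR", "FATAL"):
            yield (
                m.group("time"),
                m.group("level"),
                m.group("source"),
                m.group("message"),
            )


sample = [
    "2019-09-23T06:44:24Z [INFO] [/core/api/quota/migrator.go:92]: "
    "[Quota-Sync]:: start to ping server ... [registry]",
    "2019-09-23T06:44:42Z [ERROR] [/core/main.go:104]: Fail to sync quota "
    "data, failed to check health: Get http://harbor-harbor-registry:5000/: "
    "dial tcp 10.233.9.51:5000: connect: connection refused",
    "2019-09-23T06:44:42Z [FATAL] [/core/main.go:252]: quota migration "
    "error, failed to check health: Get http://harbor-harbor-registry:5000/: "
    "dial tcp 10.233.9.51:5000: connect: connection refused",
]

found = list(failures(sample))
for time, level, source, message in found:
    print(level, source, message)
```

In this log both entries point at the same cause: core cannot reach the registry on port 5000, which is consistent with the registry container crashing on startup.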

Let me check the image.

@mkjoerg can you update the image to goharbor/registry-photon:v2.7.1-debug-9186-dev? I have verified this in my environment and it worked fine.

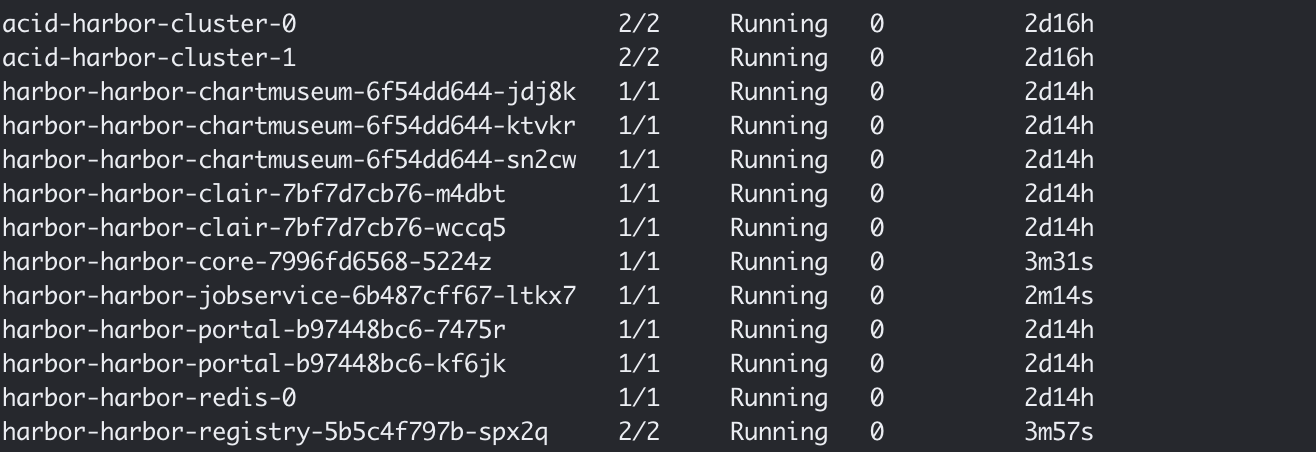

@wy65701436 This looks way better:

Harbor also started and images can be pulled.

Thanks for the verification. Please try out the functionality and file an issue if you still run into a problem.

We can have a fix for this issue in v1.9.1.

I verified everything and it works smoothly as before. Thank you for the quick help and responses. I will close this issue and wait for 1.9.1 to get the updated registry. Till then I will stick with the working container you pushed.

Reopening to track the fix work.

Closing, as the PR has been merged.

I hate to bump a closed thread, but I'm still getting this error using the patched registry. My registry podspec is:

# Please edit the object below. Lines beginning with a '#' will be ignored,

# and an empty file will abort the edit. If an error occurs while saving this file will be

# reopened with the relevant failures.

#

apiVersion: extensions/v1beta1

kind: Deployment

metadata:

annotations:

deployment.kubernetes.io/revision: "9"

creationTimestamp: "2019-10-01T18:34:59Z"

generation: 9

labels:

app: harbor

chart: harbor

component: registry

heritage: Tiller

release: harbor

name: harbor-harbor-registry

namespace: harbor

resourceVersion: "31411546"

selfLink: /apis/extensions/v1beta1/namespaces/harbor/deployments/harbor-harbor-registry

uid: 2c6ca39b-e47a-11e9-a2cb-026fddca3bb4

spec:

progressDeadlineSeconds: 600

replicas: 1

revisionHistoryLimit: 10

selector:

matchLabels:

app: harbor

component: registry

release: harbor

strategy:

rollingUpdate:

maxSurge: 25%

maxUnavailable: 25%

type: RollingUpdate

template:

metadata:

annotations:

checksum/configmap: 390eb2ab44f8378438c734ea9a3d37130694f4ca979ab37147606dd66a606bfa

checksum/secret: 1a5c3031c78fba73adea6bfdad3bddb3a71420b022c4a0e1e25cecd9205bd9aa

checksum/secret-core: 780698a159055fabc29653488a2aa5bbbe92dba27b5489cedc3df1b1ec4a661c

checksum/secret-jobservice: 0c3506d85f2ec9b4debcea1a83423f30cbe152970e22aec30d280369dac52eab

iam.amazonaws.com/role: arn:aws:iam::518589827086:role/kube-harbor

creationTimestamp: null

labels:

app: harbor

chart: harbor

component: registry

heritage: Tiller

release: harbor

spec:

containers:

- args:

- serve

- /etc/registry/config.yml

envFrom:

- secretRef:

name: harbor-harbor-registry

image: goharbor/registry-photon:v2.7.1-debug-9186-dev

imagePullPolicy: IfNotPresent

livenessProbe:

failureThreshold: 3

httpGet:

path: /

port: 5000

scheme: HTTP

initialDelaySeconds: 1

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

name: registry

ports:

- containerPort: 5000

protocol: TCP

- containerPort: 5001

protocol: TCP

readinessProbe:

failureThreshold: 3

httpGet:

path: /

port: 5000

scheme: HTTP

initialDelaySeconds: 1

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

resources: {}

terminationMessagePath: /dev/termination-log

terminationMessagePolicy: File

volumeMounts:

- mountPath: /storage

name: registry-data

- mountPath: /etc/registry/root.crt

name: registry-root-certificate

subPath: tls.crt

- mountPath: /etc/registry/config.yml

name: registry-config

subPath: config.yml

- args:

- serve

- /etc/registry/config.yml

env:

- name: CORE_SECRET

valueFrom:

secretKeyRef:

key: secret

name: harbor-harbor-core

- name: JOBSERVICE_SECRET

valueFrom:

secretKeyRef:

key: secret

name: harbor-harbor-jobservice

envFrom:

- secretRef:

name: harbor-harbor-registry

image: goharbor/harbor-registryctl:v1.9.1-dev

imagePullPolicy: IfNotPresent

livenessProbe:

failureThreshold: 3

httpGet:

path: /api/health

port: 8080

scheme: HTTP

initialDelaySeconds: 1

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

name: registryctl

ports:

- containerPort: 8080

protocol: TCP

readinessProbe:

failureThreshold: 3

httpGet:

path: /api/health

port: 8080

scheme: HTTP

initialDelaySeconds: 1

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

resources: {}

terminationMessagePath: /dev/termination-log

terminationMessagePolicy: File

volumeMounts:

- mountPath: /storage

name: registry-data

- mountPath: /etc/registry/config.yml

name: registry-config

subPath: config.yml

- mountPath: /etc/registryctl/config.yml

name: registry-config

subPath: ctl-config.yml

dnsPolicy: ClusterFirst

restartPolicy: Always

schedulerName: default-scheduler

securityContext: {}

terminationGracePeriodSeconds: 30

volumes:

- name: registry-root-certificate

secret:

defaultMode: 420

secretName: harbor-harbor-core

- configMap:

defaultMode: 420

name: harbor-harbor-registry

name: registry-config

- emptyDir: {}

name: registry-data

status:

availableReplicas: 1

conditions:

- lastTransitionTime: "2019-10-01T18:41:13Z"

lastUpdateTime: "2019-10-01T18:41:13Z"

message: Deployment has minimum availability.

reason: MinimumReplicasAvailable

status: "True"

type: Available

- lastTransitionTime: "2019-10-01T18:34:59Z"

lastUpdateTime: "2019-10-01T19:35:12Z"

message: ReplicaSet "harbor-harbor-registry-6559d49b56" has successfully progressed.

reason: NewReplicaSetAvailable

status: "True"

type: Progressing

observedGeneration: 9

readyReplicas: 1

replicas: 1

updatedReplicas: 1

I got core running after running the truncate command on the database. Unblocked.

Most helpful comment

truncate table project_blob;

can solve this problem.

Whenever harbor-core v1.9.0 is restarted, it always runs the quota migration. This reproduces 100% of the time.

https://github.com/goharbor/harbor/blob/38a9690f9ae4e56d55f027f63351e201c50803b1/src/core/api/quota/registry/registry.go#L285