Harbor: Harbor goes into read-only mode without consent

Harbor automatically and periodically sets itself to read-only mode without any interaction. What might be the cause for this and how can we prevent it from happening again?


Did you set a cron schedule for GC?

No, should I do it?

No. Could you please provide the core and jobservice logs?

@hazarguney Could you let us know the version of Harbor you are using?

The reason for the question in the previous comment is that when Harbor runs GC, it first sets itself to read-only mode and then launches GC; when the process is done, read-only mode is unset.

Version v1.8.0-25bb24ca

Thank you for the explanation.

I met the same situation, too.

I previously set a cron schedule for GC. Because the GC process took too long, I disabled it.

I repeated these steps several times.

After I disabled the cron schedule the last time, there were still several GC schedules in the Redis zset, and the registry goes into read-only mode whenever a GC task runs.

version: 1.7.1

Attention! I have NO cron schedules for GC right now.

Here is my jobservice log.

Sep 9 03:02:37 172.22.0.1 jobservice[4367]: encoding/json.Marshal(0xb00fc0, 0xc420204120, 0x0, 0x0, 0x0, 0x0, 0x0)

Sep 9 03:02:37 172.22.0.1 jobservice[4367]: #011/usr/local/go/src/encoding/json/encode.go:161 +0x5f

Sep 9 03:02:37 172.22.0.1 jobservice[4367]: github.com/goharbor/harbor/src/jobservice/opm.(*queueItem).string(0xc420204120, 0xc420204120, 0x0)

Sep 9 03:02:37 172.22.0.1 jobservice[4367]: #011/go/src/github.com/goharbor/harbor/src/jobservice/opm/redis_job_stats_mgr.go:67 +0x3b

Sep 9 03:02:37 172.22.0.1 jobservice[4367]: github.com/goharbor/harbor/src/jobservice/opm.(*RedisJobStatsManager).loop.func2(0xc42005acc0, 0xc4205c5c80, 0xc420204120)

Sep 9 03:02:37 172.22.0.1 jobservice[4367]: #011/go/src/github.com/goharbor/harbor/src/jobservice/opm/redis_job_stats_mgr.go:225 +0x40b

Sep 9 03:02:37 172.22.0.1 jobservice[4367]: created by github.com/goharbor/harbor/src/jobservice/opm.(*RedisJobStatsManager).loop

Sep 9 03:02:37 172.22.0.1 jobservice[4367]: #011/go/src/github.com/goharbor/harbor/src/jobservice/opm/redis_job_stats_mgr.go:199 +0xc2

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: chown: No /var/log/jobs/scan_job: Permission denied

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Registering database: type-PostgreSQL host-10.247.17.11 port-5432 databse-registry sslmode-"disable"

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: [ORM]2019/09/08 19:02:38 Detect DB timezone: PRC open /usr/local/go/lib/time/zoneinfo.zip: no such file or directory

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Register database completed

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Register job *impl.DemoJob with name DEMO

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Register job *scan.ClairJob with name IMAGE_SCAN

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Register job *scan.All with name IMAGE_SCAN_ALL

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Register job *replication.Transfer with name IMAGE_TRANSFER

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Register job *replication.Deleter with name IMAGE_DELETE

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Register job *replication.Replicator with name IMAGE_REPLICATE

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Register job *gc.GarbageCollector with name IMAGE_GC

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Message server is started

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Subscribe redis channel {harbor_job_service_namespace}:period:policies:notifications

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] OP commands sweeper is started

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Redis job stats manager is started

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Server is started at :8080 with http

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Load periodic job policy 3f15f7dba739e12301f048c5 for job IMAGE_GC: 0 0 20 * * *

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Load periodic job policy 572ce89232ceb53d4049084d for job IMAGE_GC: 0 0 17 * * *

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Load periodic job policy bf372f838a96b4a2b1bacf09 for job IMAGE_GC: 0 0 17 * * 5

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Load periodic job policy 184ce895a4913bd7897df537 for job IMAGE_GC: 0 0 19 * * 5

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Load periodic job policy 82e43d83b6e9ef2665b96aa6 for job IMAGE_SCAN_ALL: 0 0 19 * * *

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Load periodic job policy a4dc4a94ccd3abcfccf2cf32 for job IMAGE_GC: 0 0 15 * * 4

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Load 6 periodic job policies

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Periodic enqueuer is started

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Redis scheduler is started

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [ERROR] [enqueuer.go:81]: periodic_enqueuer.loop.enqueue:key {harbor_job_service_namespace}:period:lock is already set with value 61312556527876cb88e02a67

Sep 9 03:02:38 172.22.0.1 jobservice[4367]: 2019-09-08T19:02:38Z [INFO] Redis worker pool is started

db data:

pgtest@10:registry> select * from admin_job order by id desc limit 20;

+------+----------------+------------+-----------------------------------------------+----------+--------------------------+---------------------+----------------------------+-----------+

| id | job_name | job_kind | cron_str | status | job_uuid | creation_time | update_time | deleted |

|------+----------------+------------+-----------------------------------------------+----------+--------------------------+---------------------+----------------------------+-----------|

| 30 | IMAGE_GC | Periodic | {"type":"Weekly","weekday":0,"offtime":57600} | pending | cb72010eb3e0faaf84f7a52b | 2019-09-08 12:53:56 | 2019-09-08 20:54:23.079588 | True |

| 29 | IMAGE_GC | Periodic | {"type":"Weekly","weekday":5,"offtime":64800} | pending | bde49175a470221ff5ce9fdf | 2019-09-06 23:58:29 | 2019-09-07 08:01:35.925956 | True |

| 28 | IMAGE_GC | Periodic | {"type":"Weekly","weekday":5,"offtime":21600} | pending | 50eeb61e4a984ae35f1778ab | 2019-09-06 23:57:01 | 2019-09-07 07:58:05.99436 | True |

| 27 | IMAGE_GC | Periodic | {"type":"Weekly","weekday":5,"offtime":54000} | pending | 59b85adee0cc1785accd036c | 2019-09-06 23:56:40 | 2019-09-07 07:57:01.975845 | True |

| 26 | IMAGE_GC | Periodic | {"type":"Weekly","weekday":5,"offtime":54000} | pending | 28bdf7572b569599b04af50c | 2019-09-06 23:54:03 | 2019-09-07 07:56:40.760134 | True |

| 25 | IMAGE_GC | Periodic | {"type":"Weekly","weekday":4,"offtime":54000} | finished | 79ae441ed31e54d35abe250b | 2019-08-09 04:05:33 | 2019-09-07 07:54:03.801789 | True |

| 24 | IMAGE_SCAN_ALL | Periodic | | finished | 167a6a12cc1cecf8a49a8019 | 2019-07-02 06:14:15 | 2019-09-09 03:31:09.001169 | False |

| 23 | IMAGE_GC | Periodic | {"type":"Weekly","weekday":5,"offtime":68400} | finished | 846159afa23916696bd6e416 | 2019-06-27 06:27:32 | 2019-09-07 04:56:48.075689 | True |

| 22 | IMAGE_GC | Generic | {"type":"Manual","weekday":0,"offtime":0} | finished | 19c5bb7b59ec85cbc42f5565 | 2019-06-16 03:44:35 | 2019-06-16 12:27:04.155119 | False |

| 21 | IMAGE_GC | Periodic | {"type":"Weekly","weekday":5,"offtime":61200} | finished | 9edea3622983a2004722749c | 2019-06-16 03:44:30 | 2019-09-07 03:07:52.989849 | True |

| 20 | IMAGE_GC | Periodic | {"type":"Daily","weekday":0,"offtime":61200} | finished | 3b4e71716bf50896f09a8ecd | 2019-06-14 06:23:37 | 2019-09-09 02:47:03.539696 | True |

| 19 | IMAGE_GC | Periodic | {"type":"Daily","weekday":0,"offtime":72000} | pending | | 2019-06-14 06:23:17 | 2019-06-14 14:23:17.783684 | True |

| 18 | IMAGE_GC | Generic | {"type":"Manual","weekday":0,"offtime":0} | finished | 0b1e5c266d20d4968f03e4fe | 2019-06-14 06:06:35 | 2019-06-14 14:56:58.352347 | False |

| 17 | IMAGE_GC | Generic | {"type":"Manual","weekday":0,"offtime":0} | pending | | 2019-04-11 09:52:29 | 2019-04-11 17:52:29.525763 | True |

| 16 | IMAGE_GC | Generic | {"type":"Manual","weekday":0,"offtime":0} | pending | | 2019-04-11 09:51:07 | 2019-04-11 17:51:07.879096 | True |

| 15 | IMAGE_GC | Generic | {"type":"Manual","weekday":0,"offtime":0} | pending | | 2019-04-11 09:50:09 | 2019-04-11 17:50:09.073626 | True |

| 14 | IMAGE_GC | Generic | {"type":"Manual","weekday":0,"offtime":0} | pending | | 2019-04-11 09:49:44 | 2019-04-11 17:49:44.413699 | True |

| 13 | IMAGE_GC | Generic | {"type":"Manual","weekday":0,"offtime":0} | pending | | 2019-04-11 09:49:31 | 2019-04-11 17:49:31.462649 | True |

| 12 | IMAGE_GC | Generic | {"type":"Manual","weekday":0,"offtime":0} | pending | | 2019-04-11 09:48:22 | 2019-04-11 17:48:22.36654 | True |

| 11 | IMAGE_GC | Generic | {"type":"Manual","weekday":0,"offtime":0} | pending | | 2019-04-11 09:48:01 | 2019-04-11 17:48:01.172248 | True |

+------+----------------+------------+-----------------------------------------------+----------+--------------------------+---------------------+----------------------------+-----------+

redis data:

127.0.0.1:6379[2]> ZRANGE {harbor_job_service_namespace}:period:policies 0 -1 withscores

1) "{\"job_name\":\"IMAGE_GC\",\"job_params\":{\"redis_url_reg\":\"redis://redis:6379/1\"},\"cron_spec\":\"0 0 20 * * *\"}"

2) "1547020500"

3) "{\"job_name\":\"IMAGE_GC\",\"job_params\":{\"redis_url_reg\":\"redis://redis:6379/1\"},\"cron_spec\":\"0 0 17 * * *\"}"

4) "1560493881"

5) "{\"job_name\":\"IMAGE_GC\",\"job_params\":{\"redis_url_reg\":\"redis://redis:6379/1\"},\"cron_spec\":\"0 0 17 * * 5\"}"

6) "1560657024"

7) "{\"job_name\":\"IMAGE_GC\",\"job_params\":{\"redis_url_reg\":\"redis://redis:6379/1\"},\"cron_spec\":\"0 0 19 * * 5\"}"

8) "1561617033"

9) "{\"job_name\":\"IMAGE_SCAN_ALL\",\"job_params\":null,\"cron_spec\":\"0 0 19 * * *\"}"

10) "1562048512"

11) "{\"job_name\":\"IMAGE_GC\",\"job_params\":{\"redis_url_reg\":\"redis://redis:6379/1\"},\"cron_spec\":\"0 0 15 * * 4\"}"

12) "1565324417"

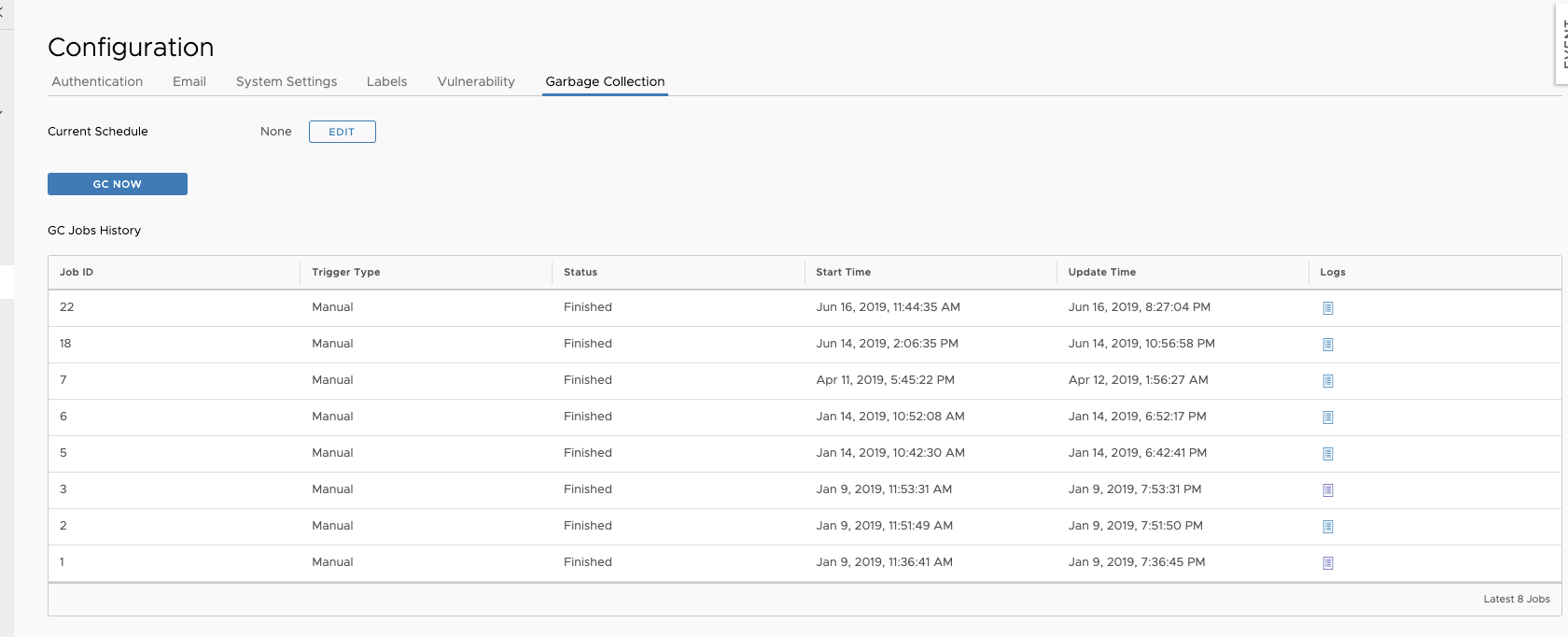

Harbor portal configuration.

It's a bug in jobservice; you have to clean up the GC schedules manually in Redis. @steven-zou, can you help?
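To see which entries such a manual cleanup would target, the members of the `{harbor_job_service_namespace}:period:policies` zset shown earlier can be filtered by job name. This is only a sketch: the function name `gc_policy_members` is made up for illustration, the members are plain JSON strings as Redis stores them (the backslashes in the `redis-cli` output are display escaping), and the thread does not confirm that removing these members (for example with Redis `ZREM`) is the complete procedure.

```python
import json


def gc_policy_members(members):
    """Return the raw zset members whose job_name is IMAGE_GC.

    `members` is a list of JSON strings, one per periodic job policy,
    in the form shown by the ZRANGE output in this thread.
    """
    matches = []
    for raw in members:
        policy = json.loads(raw)
        if policy.get("job_name") == "IMAGE_GC":
            matches.append(raw)
    return matches


# Two members copied from the ZRANGE output above (scores omitted).
members = [
    '{"job_name":"IMAGE_GC","job_params":{"redis_url_reg":"redis://redis:6379/1"},"cron_spec":"0 0 20 * * *"}',
    '{"job_name":"IMAGE_SCAN_ALL","job_params":null,"cron_spec":"0 0 19 * * *"}',
]

# List the cron specs of the GC policies that are still registered.
for raw in gc_policy_members(members):
    print(json.loads(raw)["cron_spec"])
```

Keeping the raw string matters because a zset member has to be matched byte for byte if it is later removed. Back up Redis before deleting anything.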

Is there any way to get the status of read-only mode via the API? We want to monitor this issue, as read-only mode leads to failed builds.

swagger.yaml is not available at the moment, so we cannot check for ourselves.

Hi, just curl the API:

curl -u "$USER:$PASSWORD" -vkXGET "https://$HARBOR_NAME/api/configurations" -H "content-type: application/json"

This gives you a JSON document with the whole configuration; you just need to check the specific field (if response['read_only']['value'] == True).
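For monitoring, the field check above could be wrapped in a small helper. This is a minimal sketch, assuming the response has the shape implied by the comment (a `read_only` object with a `value` field); the function name `is_read_only` and the sample payloads are hypothetical and were not captured from a real Harbor instance.

```python
import json


def is_read_only(config_json: str) -> bool:
    """Return True if the configuration payload reports read-only mode.

    Assumes the shape implied by the comment above:
    {"read_only": {"value": true, ...}, ...}
    """
    config = json.loads(config_json)
    # Treat a missing or null entry as "not read-only" instead of raising.
    entry = config.get("read_only") or {}
    return bool(entry.get("value", False))


# Hypothetical sample payloads for illustration only.
sample_on = '{"read_only": {"value": true, "editable": true}}'
sample_off = '{"read_only": {"value": false, "editable": true}}'

print(is_read_only(sample_on))   # True
print(is_read_only(sample_off))  # False
```

The body returned by the curl call can be passed to `is_read_only` as a string, and a monitoring job can alert when it returns True.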

We have had the same issue for a few days (running Harbor v1.9.1-c90c264f).

Indeed, we have a GC cron job running at 00:00 daily.

So the actual issue is that the GC either runs too long or does not unset the read-only flag?

We are experiencing the same read-only issue due to GC. No issues after disabling the GC cron schedule.

Any fix or suggestion for this issue?

We are also experiencing problems with a read-only registry. We have a cron schedule defined for GC (0 15 3 * * *), and I can see that the GC job starts and finishes (the cron schedule appears to be in UTC, while our logs are in local time, UTC+1):

Dec 23 04:15:02 172.27.0.1 jobservice[1650]: 2019-12-23T03:15:02Z [INFO] [/jobservice/worker/cworker/c_worker.go:73]: Job incoming: {"name":"IMAGE_GC","id":"7771b31f405ccfc4e59eff29","t":1577070900,"args":null}

Dec 23 05:48:11 172.27.0.1 jobservice[1650]: 2019-12-23T04:48:11Z [INFO] [/jobservice/runner/redis.go:61]: |^_^| Job 'IMAGE_GC:7771b31f405ccfc4e59eff29' exit with success

However, even after the job has finished, the registry stays in read-only mode until we go into the admin GUI -> Configuration and disable read-only mode.

We are running an instance that has been upgraded from 1.5 -> 1.7.1 -> 1.9.3.
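For reference, the two jobservice timestamps quoted in the comment above show how long that GC run took and how the UTC schedule maps to the UTC+1 syslog time. This is a small sketch using only the values from those log lines; the variable names are illustrative.

```python
from datetime import datetime, timedelta, timezone

FORMAT = "%Y-%m-%dT%H:%M:%SZ"

# UTC timestamps taken from the "Job incoming" and "exit with success" lines.
started = datetime.strptime("2019-12-23T03:15:02Z", FORMAT).replace(tzinfo=timezone.utc)
finished = datetime.strptime("2019-12-23T04:48:11Z", FORMAT).replace(tzinfo=timezone.utc)

# How long the GC job ran.
duration = finished - started
print(duration)  # 1:33:09

# The same start time in the UTC+1 zone used by the syslog prefix.
local_start = started.astimezone(timezone(timedelta(hours=1)))
print(local_start.strftime("%H:%M:%S"))  # 04:15:02
```

So the 03:15 schedule fired at 04:15 local time, and the job itself ran for roughly an hour and a half.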

Is there any update on this issue? It is forcing us to maintain otherwise unnecessary monitoring code that checks when any of our Harbor instances switches to read-only mode.

In case it helps, we are seeing this issue with v1.8.4 running on Azure VMs. We have other harbors on prem with the same version but we are not encountering the issue on these ones, at least as of now.

@muruganlnx @ThomasRasmussen @gonzalomk please refer to the comment at https://github.com/goharbor/harbor/issues/10413#issuecomment-586809368

Hi, I am also facing this issue. GC is scheduled to run every hour, and most of the time it works correctly and unsets read-only mode when it finishes. Occasionally, however, it fails to unset it and the registry stays in read-only mode.

Enhancements to jobservice for the problems that may cause this issue have been released in 1.10.1.

For further discussion, let's use the newer thread https://github.com/goharbor/harbor/issues/10413 to avoid duplication.