Hi~

I found several "bad epochs" entries (e.g. "bad epochs: 3") when browsing the training log, and I'm confused about what they mean.

One more question: I want to use bert-lstm-crf with an LSTM layer that is not bidirectional. I set bidirectional = False in Sequence_tagger.py, but it seems more needs to be modified (the error says the dimensions do not match).

Hope to get your reply. Thanks.

Hi, a bad epoch means your metric did not improve on the validation set for that epoch.

@gccome

Hi,

What does the 3 mean? Does it mean that the metric has not improved on the validation set for 3 epochs?

That’s correct.

@gccome

Thank you.

And how does flair define the best model?

I mean that the highest F1 on the validation set throughout the training process is 0.735, but the F1 of the best model is 0.71. (The validation set and the test set are the same.) I'm also confused about this.

@aslicedbread From reviewing the code, the best model is saved when you reach the best F1 on the validation set. At the end, the best model is applied to the test data. In my experiments, those two numbers match. Can I see your training log file?

@gccome

Of course. Here is the URL: https://share.weiyun.com/5U1NBWR

I'm not sure whether this web drive is available in your country.

@aslicedbread Unfortunately, I was not able to open it :(

Unfortunately no.

@gccome

Sorry to hear that.

I have uploaded it to Google Drive.

Here is the URL:

https://drive.google.com/file/d/1dnlOaIrFqO4hVWyqNRl-C7PDPF1L59t3/view?usp=sharing

@aslicedbread It seems you were not evaluating against the validation set. Could you please share your training code snippet? Did you set `anneal_against_train_loss` to `False`? From the log, it looks like you were evaluating against training loss.

@gccome

Of course.

Here is the URL:

https://drive.google.com/open?id=1niO_26tdOtV_tjdKdRA0bBSW1CXJtD3P

By the way, what does `anneal_against_train_loss` mean?

@aslicedbread Yes, you were evaluating against training loss, not the validation set. If you look at the `ModelTrainer.train` function, you will see that the default value of `anneal_against_train_loss` is `True`, meaning the best model is saved when you hit the lowest training loss. The validation set is not used for this unless you set the parameter to `False`.
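To illustrate why the two numbers above can differ, here is a minimal sketch in plain Python. It is not flair's code: `best_epoch` and the `history` records are hypothetical, and the training-loss values are made up for illustration. Only the two F1 values (0.735 and 0.71) come from this thread.

```python
def best_epoch(history, by_train_loss=True):
    """Return the index of the epoch that would be kept as the 'best' model.

    by_train_loss=True  -> pick the epoch with the lowest training loss
    by_train_loss=False -> pick the epoch with the highest validation F1
    """
    indices = range(len(history))
    if by_train_loss:
        return min(indices, key=lambda i: history[i]["train_loss"])
    return max(indices, key=lambda i: history[i]["dev_f1"])


# Hypothetical per-epoch records (loss values are invented for this example).
history = [
    {"train_loss": 2.10, "dev_f1": 0.690},
    {"train_loss": 1.40, "dev_f1": 0.735},  # highest validation F1
    {"train_loss": 0.90, "dev_f1": 0.710},  # lowest training loss
]

kept_by_loss = best_epoch(history, by_train_loss=True)
kept_by_f1 = best_epoch(history, by_train_loss=False)
print(history[kept_by_loss]["dev_f1"], history[kept_by_f1]["dev_f1"])
```

When the selection follows training loss, the kept model is the last epoch here, even though an earlier epoch scored higher on the validation set.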

@gccome

Thanks a lot. I get it.

In addition, what should I set if I want to use the bert-lstm-crf model with a unidirectional (not bidirectional) LSTM?

@aslicedbread What do you mean by bert-lstm-crf? In flair you can use BERT embeddings + LSTM + CRF for sequence tagging. Did you mean fine-tuning BERT?

@gccome

No. I mean that by default we use BERT + bidirectional LSTM + CRF. If we want to use BERT + unidirectional LSTM + CRF instead, what should we set? (I set bidirectional = False in Sequence_tagger.py, but it seems that is not enough.)

@aslicedbread I think setting bidirectional=False should be enough. Why do you think it is not?
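One possible cause of the dimension error, offered as an assumption rather than a confirmed diagnosis: an LSTM outputs `hidden_size` features per direction, so a bidirectional LSTM outputs `2 * hidden_size`. If the layer that follows the LSTM is sized for the bidirectional case, switching the flag alone leaves it expecting twice as many features. The sketch below only does the arithmetic; the function names are hypothetical and it does not touch flair or PyTorch.

```python
def lstm_output_size(hidden_size, bidirectional):
    """Feature size of an LSTM's output at each time step."""
    num_directions = 2 if bidirectional else 1
    return hidden_size * num_directions


def check_linear_input(hidden_size, bidirectional, linear_in_features):
    """Raise if the layer after the LSTM expects a different input size."""
    expected = lstm_output_size(hidden_size, bidirectional)
    if linear_in_features != expected:
        raise ValueError(
            f"LSTM outputs {expected} features, "
            f"but the next layer expects {linear_in_features}"
        )
    return expected


# A next layer sized for the bidirectional case (2 * 256 = 512 inputs)
# no longer matches once the LSTM is made unidirectional.
print(lstm_output_size(256, bidirectional=True))   # 512
print(lstm_output_size(256, bidirectional=False))  # 256
```

If this is the cause, the input size of the layer after the LSTM would need to change together with the flag.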

Closing due to inactivity, but feel free to reopen if there are more questions!

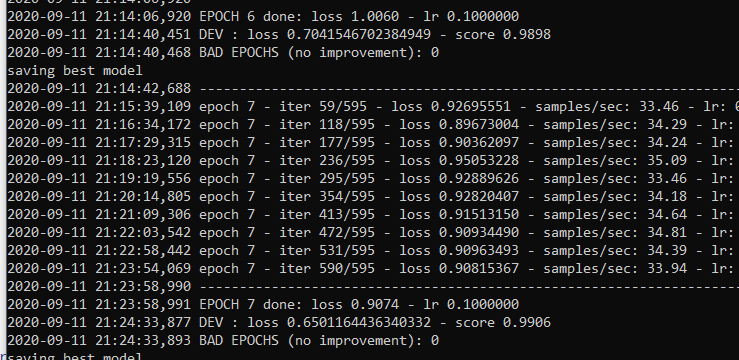

Could someone kindly explain why the log says BAD EPOCHS even though the loss decreased and the score increased on the validation set?

It is a bad epoch only if the number is greater than 0.

Thanks @djstrong. If I understand you correctly, this line does not indicate a bad epoch? It is only bad if it reads something like BAD EPOCHS (no improvement): 1, or any number above zero?

Yes, it is a counter of bad epochs.
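As a rough illustration of such a counter, here is a minimal sketch in plain Python. It is not flair's implementation: `count_bad_epochs` is a hypothetical helper and the scores are made-up values. The counter goes back to 0 whenever the monitored value improves on the best seen so far, and otherwise goes up by one.

```python
def count_bad_epochs(values, mode="max"):
    """Return the bad-epoch counter after each epoch.

    mode="max": higher is better (e.g. F1 score)
    mode="min": lower is better (e.g. loss)
    """
    best = None
    bad = 0
    counters = []
    for value in values:
        if best is None:
            improved = True
        elif mode == "max":
            improved = value > best
        else:
            improved = value < best
        if improved:
            best = value
            bad = 0      # improvement: reset the counter
        else:
            bad += 1     # no improvement: one more bad epoch
        counters.append(bad)
    return counters


# Made-up scores: epochs 3 and 4 do not beat the best so far (0.65).
print(count_bad_epochs([0.60, 0.65, 0.64, 0.63, 0.70]))  # [0, 0, 1, 2, 0]
```

A value of 0 therefore means the epoch improved on the best result so far, which matches the reading given above.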