Fairseq: CUDA out of Memory when fine-tuning mBART pre-trained CC25 model

❓ Questions and Help

While fine-tuning mBART pre-trained CC25 model on preprocessed data with the given sentencepiece model, was always running into out of memory problems.

Please help to resolve this -

denom = exp_avg_sq.sqrt().add_(group['eps'])

RuntimeError: CUDA out of memory. Tried to allocate 978.00 MiB (GPU 0; 10.76 GiB total capacity; 8.73 GiB already allocated; 659.12 MiB free; 9.26 GiB reserved in total by PyTorch)

Command -

python train.py $DEST_DIR --encoder-normalize-before --decoder-normalize-before --arch mbart_large --task translation_from_pretrained_bart --source-lang $SRC --target-lang $TGT --criterion label_smoothed_cross_entropy --label-smoothing 0.2 --dataset-impl mmap --optimizer adam --adam-eps 1e-06 --adam-betas '(0.9, 0.98)' --lr-scheduler polynomial_decay --lr 3e-05 --min-lr -1 --warmup-updates 2500 --total-num-update 40000 --dropout 0.3 --attention-dropout 0.1 --weight-decay 0.0 --max-tokens 128 --update-freq 2 --save-interval 1 --save-interval-updates 5000 --keep-interval-updates 10 --no-epoch-checkpoints --seed 222 --log-format simple --log-interval 2 --reset-optimizer --reset-meters --reset-dataloader --reset-lr-scheduler --restore-file $PRETRAIN --langs $langs --layernorm-embedding --ddp-backend no_c10d --memory-efficient-fp16

Tried -

1) Lowered no of max-tokens

2) memory-efficient-fp16

3) --empty-cache-freq

4) combinations of above on a single and multiple GPUs

Relating issue :

https://github.com/pytorch/fairseq/issues/1841

Reference :

https://github.com/pytorch/fairseq/tree/master/examples/mbart#download-model

Environment

- fairseq Version (e.g., 1.0 or master):

master - PyTorch Version (e.g., 1.0):

1.4.0 - OS (e.g., Linux):

Ubuntu 18.04.4 - How you installed fairseq (

pip, source):pip - Build command you used (if compiling from source):

pip install --editable . - Python version:

3.7.7 - GPU models and configuration: Geforce RTX 2080ti

- Any other relevant information:

All 8 comments

Tried the above in a P100 GPU in Google Colab.

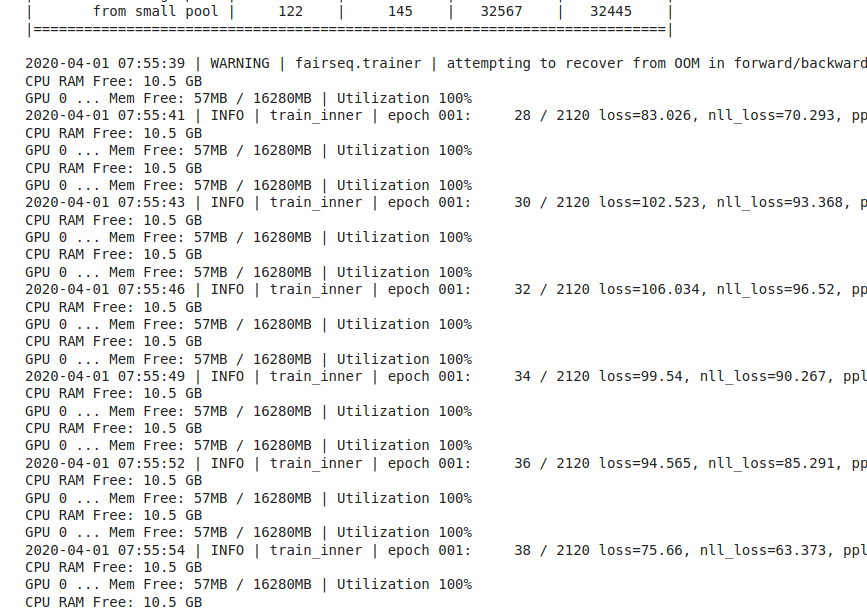

used the following snippet to print current GPU memory before calling train_step in train.py

```import os,sys,humanize,psutil,GPUtil

Define function

def mem_report():

print("CPU RAM Free: " + humanize.naturalsize( psutil.virtual_memory().available ))

GPUs = GPUtil.getGPUs()

for i, gpu in enumerate(GPUs):

print('GPU {:d} ... Mem Free: {:.0f}MB / {:.0f}MB | Utilization {:3.0f}%'.format(i, gpu.memoryFree, gpu.memoryTotal, gpu.memoryUtil*100))

Execute function

mem_report()

```

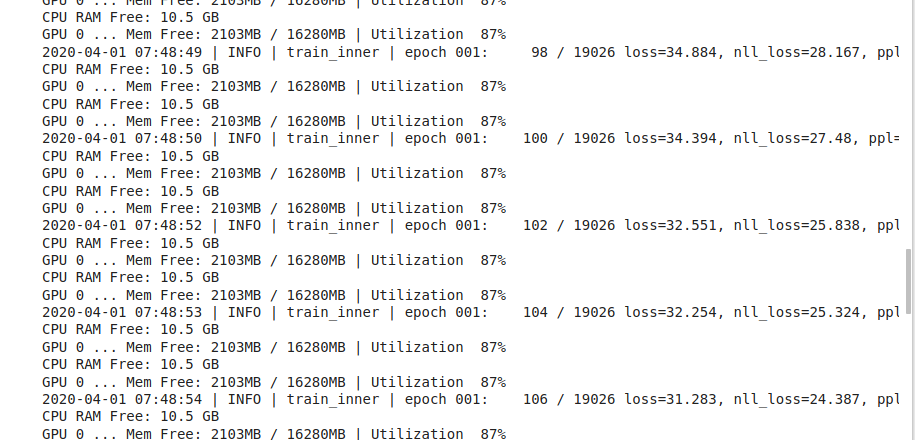

--max-tokens 128

--max-tokens 256

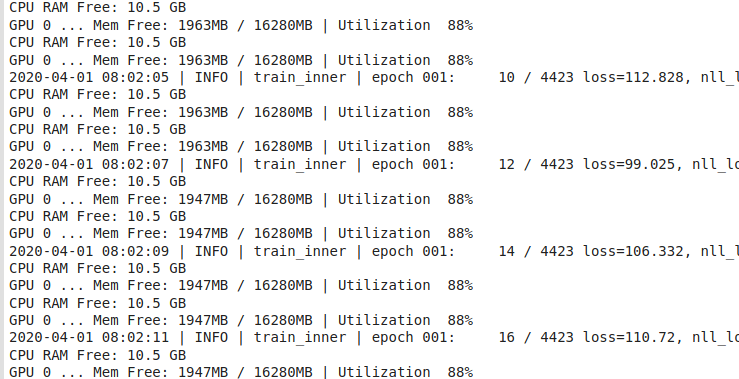

--max-tokens 1024

@yinhanliu @myleott can you consider releasing mbart base model which uses bart_base architecture

@ngoyal2707

@gvskalyan We are not planning to release mbart.base model as previous work has shown that bigger model size help to deal with the issue of interference when training on multiple languages.

To deal with the GPU OOM, I'd recommend looking into gradient checkpointing: https://pytorch.org/docs/stable/checkpoint.html

Which allows to trade compute for memory.

We don't have yet implemented it in fairseq as it has some generalization issues with fairseq abstraction, we will look into getting that in fairseq in future. But for now, I'd recommend trying to get gradient checkpointing working for fitting the model in GPU memory.

Having a smaller mBART available would be very helpful for quicker development and debugging on weaker devices, even if it performs poorly.

@gvskalyan We are not planning to release

mbart.basemodel as previous work has shown that bigger model size help to deal with the issue of interference when training on multiple languages.To deal with the GPU OOM, I'd recommend looking into gradient checkpointing: https://pytorch.org/docs/stable/checkpoint.html

Which allows to trade compute for memory.

We don't have yet implemented it in fairseq as it has some generalization issues with fairseq abstraction, we will look into getting that in fairseq in future. But for now, I'd recommend trying to get gradient checkpointing working for fitting the model in GPU memory.

Can you give us some suggestion to limit GPU usage ? And if we need to use torch.utils.checkpoint on fine-tune mbart , do you have examples for us?

I also got out of memory error even with a very small batch size of 8, num_beams = 1.

But I don't have such an error when I'm using the original BART-large. Not sure what happened

mBART is much larger than bart-large because of a much larger embeddings table.

Most helpful comment

@gvskalyan We are not planning to release

mbart.basemodel as previous work has shown that bigger model size help to deal with the issue of interference when training on multiple languages.To deal with the GPU OOM, I'd recommend looking into gradient checkpointing: https://pytorch.org/docs/stable/checkpoint.html

Which allows to trade compute for memory.

We don't have yet implemented it in fairseq as it has some generalization issues with fairseq abstraction, we will look into getting that in fairseq in future. But for now, I'd recommend trying to get gradient checkpointing working for fitting the model in GPU memory.