Fairseq: Installation via Hub with custom paths not working

🐛 Bug

Hi,

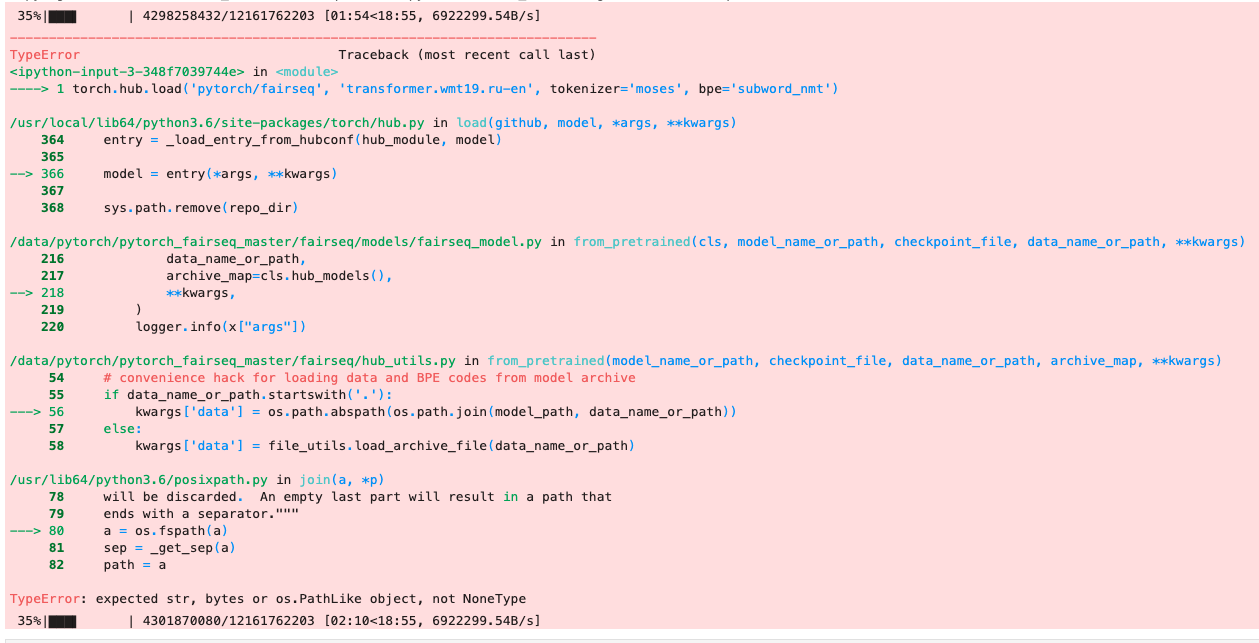

When running the code below, I get the following exception:

TypeError: expected str, bytes or os.PathLike object, not NoneType

To Reproduce

```python
import os
import torch

os.environ["TORCH_HOME"] = "/data/pytorch/"
os.environ["PYTORCH_FAIRSEQ_CACHE"] = "/data/pytorch/cache/"
torch.hub.set_dir("/data/pytorch/")

torch.hub.load('pytorch/fairseq', 'transformer.wmt19.ru-en', tokenizer='moses', bpe='subword_nmt')
```

Environment

- fairseq Version (e.g., 1.0 or master): 0.9.0
- PyTorch Version (e.g., 1.0): 1.4.0
- OS (e.g., Linux): Linux
- How you installed fairseq (pip, source): pip
- Build command you used (if compiling from source):
- Python version: 3.6
- CUDA/cuDNN version: None
- GPU models and configuration: None


I'm not able to reproduce this error. Have you tried recently?

I saw different errors when running your command, because it needs to be updated to match the instructions:

- pass `checkpoint_file='model1.pt:model2.pt:model3.pt:model4.pt'` to `torch.hub.load`
- use `bpe='fastbpe'`, not `bpe='subword_nmt'`
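For reference, here is the reproduction rewritten along the lines of those two points. This is an untested sketch: the keyword values come from the reply above and the cache paths from the original report, while the helper name `load_ru2en` and the sample sentence are illustrative only. Running it needs network access plus fairseq's extra dependencies (presumably `fastBPE` and `sacremoses` for `bpe='fastbpe'` and `tokenizer='moses'`).

```python
import os

# Keyword arguments from the maintainer's reply: all four checkpoints of the
# ensemble, and fastBPE instead of subword_nmt.
HUB_KWARGS = {
    "checkpoint_file": "model1.pt:model2.pt:model3.pt:model4.pt",
    "tokenizer": "moses",
    "bpe": "fastbpe",
}


def load_ru2en(cache_root: str = "/data/pytorch/"):
    """Load the WMT'19 ru-en ensemble through torch.hub using custom cache paths."""
    # Set cache locations before torch/fairseq are imported, since they may
    # read these variables at import time.
    os.environ["TORCH_HOME"] = cache_root
    os.environ["PYTORCH_FAIRSEQ_CACHE"] = os.path.join(cache_root, "cache")
    import torch  # imported lazily so this module loads without torch installed

    torch.hub.set_dir(cache_root)
    return torch.hub.load("pytorch/fairseq", "transformer.wmt19.ru-en", **HUB_KWARGS)


# Usage (downloads the model on first call):
#   ru2en = load_ru2en()
#   print(ru2en.translate("Машинное обучение - это здорово!"))
```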

Hi @myleott. I had the same issue. Switching from 'subword_nmt' to 'fastbpe' fixed it for me, though I'm translating English to French with 'transformer.wmt14.en-fr'.

In my case it was an SSL error: the hub wasn't able to download the model because of a corporate SSL proxy, and https://github.com/pytorch/fairseq/blob/9f92b05e2a10f1c559d44dc1c264af31723d1d76/fairseq/file_utils.py#L65 fails silently (at least when no logger is attached; I needed pdb to find this).
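Since the failure is only reported through Python's `logging` module, configuring logging before the hub call should make it visible without a debugger. A minimal sketch, assuming fairseq logs this message at INFO level or above (lower `level` to `logging.DEBUG` if nothing shows up); `enable_verbose_logging` is a hypothetical helper name.

```python
import logging


def enable_verbose_logging(level: int = logging.INFO) -> None:
    """Print log records from all libraries (fairseq included) to stderr."""
    # basicConfig attaches a stderr handler only if the root logger has none yet.
    logging.basicConfig(format="%(asctime)s | %(levelname)s | %(name)s | %(message)s")
    # Set the level separately so it applies even if handlers already existed.
    logging.getLogger().setLevel(level)


# Usage: call this before torch.hub.load(...), e.g.
#   enable_verbose_logging()
#   model = torch.hub.load("pytorch/fairseq", "transformer.wmt19.ru-en", ...)
```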

The solution was to export this environment variable before running the script:

```shell
export CURL_CA_BUNDLE=""
python script.py
```
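Because the reproduction already sets environment variables from Python, the same workaround can also be applied in-process. The sketch below (hypothetical helper `env_override`) sets `CURL_CA_BUNDLE` only for the duration of the download and restores the previous value afterwards. It assumes the download goes through `requests`, which reads this variable from the environment on each request. The empty bundle presumably works by skipping certificate verification rather than trusting the proxy's certificate, which is why keeping it narrowly scoped seems preferable.

```python
import contextlib
import os
from typing import Iterator, Optional


@contextlib.contextmanager
def env_override(name: str, value: str) -> Iterator[None]:
    """Temporarily set an environment variable, restoring the old state on exit."""
    previous: Optional[str] = os.environ.get(name)
    os.environ[name] = value
    try:
        yield
    finally:
        if previous is None:
            # The variable did not exist before; remove it again.
            os.environ.pop(name, None)
        else:
            os.environ[name] = previous


# Usage:
#   with env_override("CURL_CA_BUNDLE", ""):
#       model = torch.hub.load("pytorch/fairseq", "transformer.wmt19.ru-en", ...)
```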

In my case, I found that

https://github.com/pytorch/fairseq/blob/9f92b05e2a10f1c559d44dc1c264af31723d1d76/fairseq/file_utils.py#L286

uses `tempfile`, so when /tmp reaches its size limit, models cannot be downloaded.

My workaround is to move the temporary directory somewhere else.
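One way to do that without touching fairseq, assuming the download creates its temporary file with `tempfile`'s default directory: `tempfile` checks the `TMPDIR` environment variable first, so `export TMPDIR=/some/bigger/disk` in the shell works if set before Python starts. From inside a script, the sketch below (hypothetical helper `redirect_tempdir`) also overrides `tempfile`'s cached choice. The path in the usage comment is illustrative.

```python
import os
import tempfile


def redirect_tempdir(path: str) -> str:
    """Make Python's tempfile module create files under `path` instead of /tmp."""
    os.makedirs(path, exist_ok=True)
    # Child processes (and other tools that honour TMPDIR) inherit this.
    os.environ["TMPDIR"] = path
    # tempfile caches its choice in tempfile.tempdir after the first lookup,
    # so assign it directly to cover code that already ran gettempdir().
    tempfile.tempdir = path
    return tempfile.gettempdir()


# Usage (hypothetical path on a disk with enough free space),
# before calling torch.hub.load(...):
#   redirect_tempdir("/data/pytorch/tmp")
```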

In my case, the cause was that Docker Desktop had reached its disk size limit.
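Both of the last two causes come down to running out of disk space, so a quick free-space check on the temporary directory and the cache directory can rule them in or out before a long download. A sketch using `shutil.disk_usage` (the helper name `free_space_gib` is hypothetical); inside a container the numbers reflect whatever filesystem backs it, so a full Docker Desktop disk should show up here too.

```python
import os
import shutil
import tempfile
from typing import Dict, Iterable, Optional


def free_space_gib(paths: Optional[Iterable[str]] = None) -> Dict[str, float]:
    """Return the free disk space, in GiB, available at each given path."""
    if paths is None:
        paths = [tempfile.gettempdir()]
    report = {}
    for path in paths:
        # disk_usage needs an existing path, so walk up to the nearest
        # existing parent (e.g. a cache directory not created yet).
        probe = os.path.abspath(path)
        while not os.path.exists(probe):
            parent = os.path.dirname(probe)
            if parent == probe:
                break
            probe = parent
        report[path] = shutil.disk_usage(probe).free / 2**30
    return report


# Usage:
#   for path, gib in free_space_gib([tempfile.gettempdir(), "/data/pytorch/cache"]).items():
#       print(f"{path}: {gib:.1f} GiB free")
```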
