Fairseq: [QUESTION] XLM-RoBERTa for NER

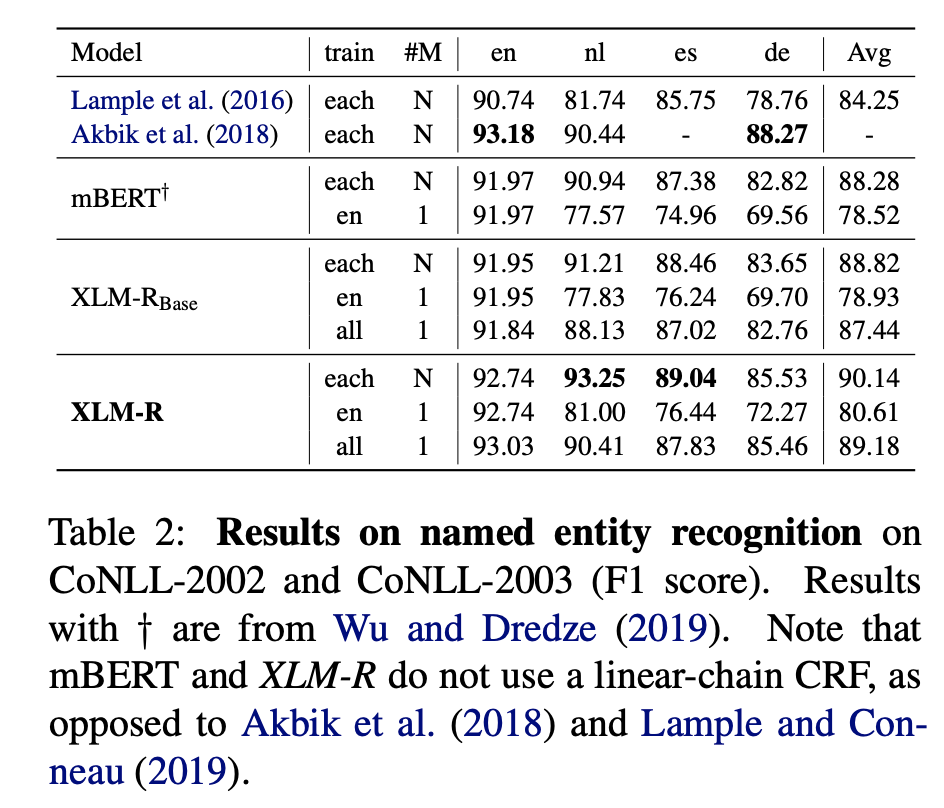

Would it be possible to provide an example of an NER task for XLM-RoBERTa? We would like to reproduce the NER results from the paper on CoNLL-2003 here:

and to run fine-tuning on a specific dataset.

Thanks


@kartikayk ?

@loretoparisi thanks for checking in! We'll share detailed pointers for how to fine-tune XLM-R on QA and NER tasks in PyText in a week or so.

@kartikayk that's amazing! Thank you so much.

@kartikayk any update on this?

@myleott the code should be in the PyText repo after a PyText release tomorrow/Wednesday, I believe. Will post a link here once it's done.

@kartikayk Any update on this? I am looking for examples of fine-tuning XLM-RoBERTa for NER (or sequence tagging in general). Thanks!

@rnd2110 Check out my code for this exact task. I hope it helps.

@mohammadKhalifa Thank you. This is so useful.

> @rnd2110 Check out my code for this exact task. I hope it helps.

Thanks for this. Your code requires fairseq, which requires GPUs. Are you aware of a CPU-only approach for fine-tuning? My search has been fruitless so far. :(

> Thanks for this. Your code requires fairseq, which requires GPUs. Are you aware of a CPU-only approach for fine-tuning? My search has been fruitless so far. :(

You can still run the code on CPU. It will be slower though.
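For anyone wondering what "run on CPU" looks like in practice, here is a minimal sketch of the usual PyTorch device fallback. It is not taken from the linked repository; the helper `pick_device` and the variable `model` are hypothetical names used only for illustration, and the sketch assumes the model is an ordinary `torch.nn.Module`.

```python
import importlib.util


def pick_device(prefer_gpu: bool = True) -> str:
    """Return "cuda" when PyTorch reports a usable GPU, otherwise "cpu"."""
    if not prefer_gpu:
        return "cpu"
    if importlib.util.find_spec("torch") is None:
        # PyTorch is not installed, so there is nothing to place on a GPU.
        return "cpu"
    import torch

    return "cuda" if torch.cuda.is_available() else "cpu"


# Force CPU explicitly, e.g. on a machine without a GPU.
device = pick_device(prefer_gpu=False)
print(device)

# Assumption: `model` is a torch.nn.Module loaded elsewhere.
# model.to(device)
```

Input tensors have to be moved to the same device as the model. Expect CPU fine-tuning to be considerably slower, as noted above.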
