Fairseq: Pretrained embedding file is not found when generating from a pretrained model

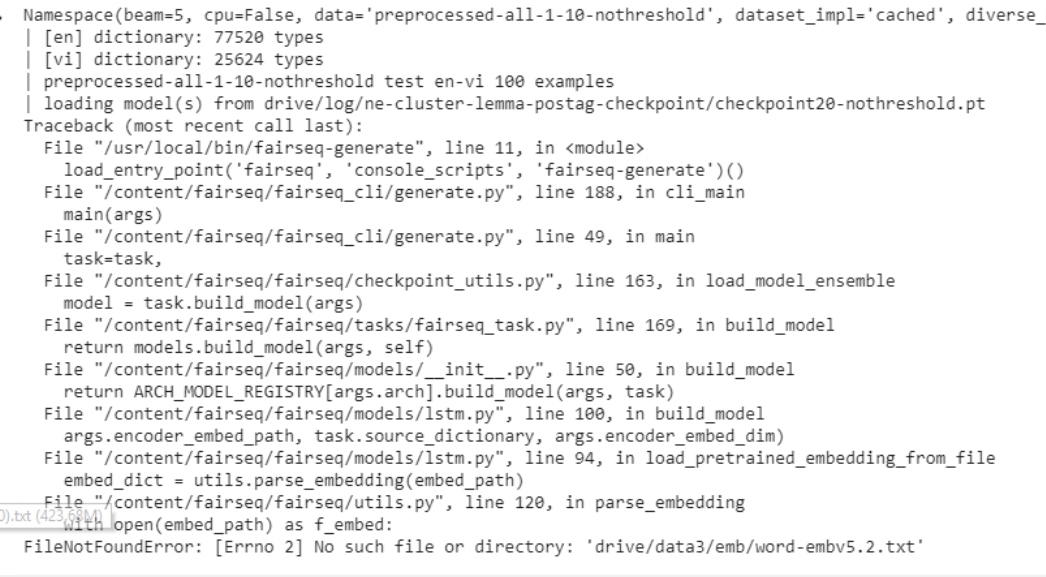

Hi fairseq team, I have a pretrained translation model that was trained with a pretrained embedding file located at "drive/data3/emb/word-embv5.2.txt". Later, for some reason, I renamed the embedding file to word-embv5-nothreshold.txt. Now, when I use the generate script to produce translations from my pretrained model, it gives me this error.

I worked around the error by renaming the file back to word-embv5.2.txt.

So my question is: is there an option to set the pretrained embedding path when loading a pretrained model?

All 4 comments

Did you try using --encoder-embed-path/--decoder-embed-path (same as when you trained the model)?

Yeah, I have tried both options, but they are reported as unrecognized arguments.

How about --model-overrides '{"encoder_embed_path": "<path_to_embeddings>"}'?

Oh, thanks @lematt1991, it works.
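
For anyone who lands here later, here is a rough sketch of how the --model-overrides argument could be assembled so the quoting comes out right. It is not an official recipe. The data directory and checkpoint path are made-up placeholders, the fairseq-generate entry point name is assumed (your checkout may expose the generate script differently), and the embedding path assumes the renamed file stayed in the same directory as in the question. The helper build_generate_command is hypothetical, not part of fairseq.

```python
import json
import shlex


def build_generate_command(data_dir, checkpoint, encoder_emb=None, decoder_emb=None):
    """Build an argument list for the generate script (hypothetical helper).

    Only the embedding paths that are passed in get overridden; everything
    else is read from the checkpoint as usual.
    """
    overrides = {}
    if encoder_emb is not None:
        # Key suggested in the thread above.
        overrides["encoder_embed_path"] = encoder_emb
    if decoder_emb is not None:
        # Assumed to mirror the --decoder-embed-path training option.
        overrides["decoder_embed_path"] = decoder_emb

    cmd = ["fairseq-generate", data_dir, "--path", checkpoint]
    if overrides:
        # A dict of plain strings serialized as JSON is also a valid
        # Python dict literal, which is the form the thread's answer uses.
        cmd += ["--model-overrides", json.dumps(overrides)]
    return cmd


cmd = build_generate_command(
    data_dir="data-bin/my_dataset",               # placeholder
    checkpoint="checkpoints/checkpoint_best.pt",  # placeholder
    encoder_emb="drive/data3/emb/word-embv5-nothreshold.txt",
)

# Print a shell-safe version of the command for copy and paste.
print(" ".join(shlex.quote(arg) for arg in cmd))
```

If the decoder was also trained with a pretrained embedding file, passing decoder_emb adds the matching key to the same dictionary. The list form can be handed directly to subprocess.run, which avoids shell quoting problems with the nested quotes.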