Fairseq: Support Removing BPE when encoding with Sentencepiece

I'm using SentencePiece instead of subword-nmt to tokenize the data.

Problem: During evaluation the flag --remove-bpe is useless:

In SentencePiece:

1. The bpe-token changes from @@ to ▁.
2. First, whitespace needs to be removed; second, the bpe-token needs to be replaced with whitespace.

Currently you can fulfill (1) by passing "▁" as an argument for the --remove-bpe flag, but that does not eliminate the additional whitespace from (2).
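The mismatch can be reproduced in a few lines of plain Python. This is only a sketch: `strip_symbol` is a hypothetical helper that assumes the flag simply deletes the given symbol from the space-joined hypothesis, it is not fairseq's actual implementation, and the example pieces are made up.

```python
def strip_symbol(tokens: str, symbol: str) -> str:
    # Assumed behaviour: plain deletion of `symbol` from the space-joined tokens.
    return (tokens + " ").replace(symbol, "").rstrip()


subword_nmt = "Hel@@ lo wor@@ ld"   # continuation marker at the end of a piece
sentencepiece = "▁Hel lo ▁wor ld"   # word-boundary marker at the start of a word

print(strip_symbol(subword_nmt, "@@ "))   # Hello world
print(strip_symbol(sentencepiece, "▁"))   # Hel lo wor ld
```

With subword-nmt, the symbol and the space after it disappear together, so pieces merge into words. With SentencePiece, deleting ▁ alone leaves the spaces between pieces in place and loses the word boundaries.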

All 4 comments

Hmm, they suggest doing this for detokenization: detokenized = ''.join(pieces).replace('▁', ' ')

I guess that won’t fit naturally into the current remove_bpe flag. We’ll add a new flag.
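The detokenization suggested above can be sketched as follows. The pieces are made up for illustration, `detokenize` is a hypothetical helper name, and the `lstrip()` call is an addition of this sketch to drop the space produced by the marker on the first piece.

```python
def detokenize(pieces):
    # Join without separators, then turn the word-boundary marker (U+2581) into a space.
    return "".join(pieces).replace("\u2581", " ").lstrip()


pieces = ["▁Hel", "lo", "▁wor", "ld"]
print(detokenize(pieces))  # Hello world
```

Because the join removes every separator between pieces before the marker is replaced, this is a two-step operation rather than the removal of a single symbol, which is why it does not fit the existing flag.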

Just following up on this, you can now detokenize sentencepiece with --remove-bpe=sentencepiece

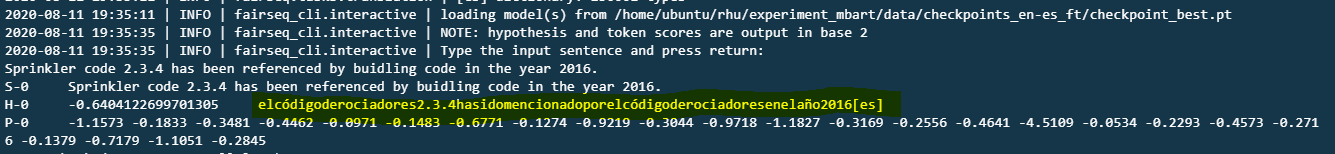

For me, when using --remove-bpe=sentencepiece, it's joining all words without a space between tokens, like below:

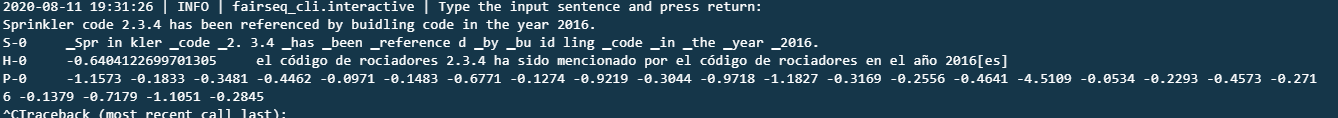

If I don't give anything it gives me the correct BPE tokens:

Command used:

fairseq-interactive "/home/ubuntu/rhu/experiment_mbart/data/use/es/test_proc" \
    --task translation_from_pretrained_bart \
    --path "/home/ubuntu/rhu/experiment_mbart/data/checkpoints_en-es_ft/checkpoint_best.pt" \
    -t es -s en \
    --bpe 'sentencepiece' \
    --sentencepiece-vocab "/home/ubuntu/rhu/experiment_mbart/data/cc25_pretrain/sentence.bpe.model" \
    --sacrebleu \
    --remove-bpe=sentencepiece \
    --cpu \
    --max-sentences 1 \
    --langs "ar_AR,cs_CZ,de_DE,en,es,et_EE,fi_FI,fr_XX,gu_IN,hi_IN,it_IT,ja_XX,kk_KZ,ko_KR,lt_LT,lv_LV,my_MM,ne_NP,nl_XX,ro_RO,ru_RU,si_LK,tr_TR,vi_VN,zh_CN" \
    --buffer-size 1 \
    --input -

Any reason it's not splitting out the words in the hypothesis correctly, @myleott?

Thanks!
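One possible explanation, which this thread does not confirm: the command enables both --bpe 'sentencepiece' and --remove-bpe=sentencepiece, so the hypothesis may end up being detokenized twice. The sketch below is plain Python, not fairseq code. `detok` is a hypothetical helper that assumes each detokenization step removes the spaces between pieces and then maps ▁ to a space, and the example hypothesis is made up.

```python
def detok(text: str) -> str:
    # Assumed detokenization step: drop piece separators, then restore word boundaries.
    return text.replace(" ", "").replace("\u2581", " ").strip()


hypothesis = "▁Ho la ▁mun do"
once = detok(hypothesis)
twice = detok(once)

print(once)   # Hola mundo
print(twice)  # Holamundo
```

Under that assumption, the second pass treats the real spaces as piece separators and deletes them, which would match the joined output described here. If this is the cause, running with only one of the two options would be a reasonable thing to try.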

I have observed a similar output for the Hindi language.
