Etcd: clientv3 sdk will send /etcdserverpb.Lease/LeaseKeepAlive twice every second for lease id after over time.

Version:

etcd Version: 3.2.22+git

Git SHA: dc7477e21

Go Version: go1.9.1

Go OS/Arch: darwin/amd64

All v3 client

Result:

- send /etcdserverpb.Lease/LeaseKeepAlive twice every second for lease id after over time.

reproduce:

code:

package main

import (

"fmt"

"log"

"github.com/coreos/etcd/clientv3"

"time"

"context"

)

const ETCD_CONNECT_TIMEOUT = time.Second * 5

func main() {

keeprun := make(chan bool)

getLeaseInfo()

<-keeprun

}

func getLeaseInfo () {

endpoints := []string{"http://127.0.0.1:2379"}

cfg := clientv3.Config{

Endpoints: endpoints,

TLS: nil,

DialTimeout:ETCD_CONNECT_TIMEOUT,

}

client, err := clientv3.New(cfg)

if err != nil {

log.Println(err)

return

}

putKeyName := "/cnxct/cfc4n/keyname1"

//申请lease id

ctx, cancel := context.WithTimeout(context.Background(), ETCD_CONNECT_TIMEOUT)

leaseResp, err := client.Grant(ctx, 30)

cancel()

//注册 KEY

ctx, cancel = context.WithTimeout(context.Background(), ETCD_CONNECT_TIMEOUT)

_, err = client.KeepAlive(context.TODO(), leaseResp.ID)

log.Println("注册成功 success:", putKeyName)

//keepalive

ctx, cancel = context.WithTimeout(context.Background(), ETCD_CONNECT_TIMEOUT)

_, err = client.KeepAlive(context.TODO(), leaseResp.ID)

cancel()

if err != nil {

log.Println(err)

return

}

go func(leaseId clientv3.LeaseID) {

t := time.NewTicker(time.Second * 3)

for {

select {

case _,ok := <- t.C:

if !ok {

return

}

ctx, cancel := context.WithTimeout(context.Background(), ETCD_CONNECT_TIMEOUT)

rs, err := client.TimeToLive(ctx,leaseId)

cancel()

if err != nil {

log.Println(err)

return

}

log.Println(fmt.Sprintf("rs.TTL:%d, rs.GrantedTTL:%d",rs.TTL , rs.GrantedTTL))

}

}

}(leaseResp.ID)

return

}

- Grant ttl 30s

- get ttl of lease id every 3 second

run result

2018/07/09 19:26:32 注册成功: /cnxct/cfc4n/keyname1

2018/07/09 19:26:35 rs.TTL:26, rs.GrantedTTL:30

2018/07/09 19:26:38 rs.TTL:23, rs.GrantedTTL:30

2018/07/09 19:26:41 rs.TTL:20, rs.GrantedTTL:30

2018/07/09 19:26:44 rs.TTL:28, rs.GrantedTTL:30

2018/07/09 19:26:47 rs.TTL:25, rs.GrantedTTL:30

2018/07/09 19:26:50 rs.TTL:22, rs.GrantedTTL:30

2018/07/09 19:26:53 rs.TTL:29, rs.GrantedTTL:30

2018/07/09 19:26:56 rs.TTL:26, rs.GrantedTTL:30

2018/07/09 19:26:59 rs.TTL:23, rs.GrantedTTL:30

2018/07/09 19:27:02 rs.TTL:20, rs.GrantedTTL:30

2018/07/09 19:27:05 rs.TTL:27, rs.GrantedTTL:30

2018/07/09 19:27:08 rs.TTL:24, rs.GrantedTTL:30

2018/07/09 19:27:11 rs.TTL:21, rs.GrantedTTL:30

2018/07/09 19:27:14 rs.TTL:28, rs.GrantedTTL:30

2018/07/09 19:27:17 rs.TTL:25, rs.GrantedTTL:30

2018/07/09 19:27:20 rs.TTL:22, rs.GrantedTTL:30

2018/07/09 19:27:23 rs.TTL:29, rs.GrantedTTL:30

2018/07/09 19:27:26 rs.TTL:26, rs.GrantedTTL:30

2018/07/09 19:27:29 rs.TTL:23, rs.GrantedTTL:30

2018/07/09 19:27:32 rs.TTL:20, rs.GrantedTTL:30

2018/07/09 19:27:35 rs.TTL:27, rs.GrantedTTL:30

2018/07/09 19:27:38 rs.TTL:24, rs.GrantedTTL:30

2018/07/09 19:27:41 rs.TTL:21, rs.GrantedTTL:30

2018/07/09 19:27:44 rs.TTL:28, rs.GrantedTTL:30

2018/07/09 19:27:47 rs.TTL:25, rs.GrantedTTL:30

2018/07/09 19:27:50 rs.TTL:22, rs.GrantedTTL:30

2018/07/09 19:27:53 rs.TTL:29, rs.GrantedTTL:30

2018/07/09 19:27:56 rs.TTL:26, rs.GrantedTTL:30

2018/07/09 19:27:59 rs.TTL:23, rs.GrantedTTL:30

2018/07/09 19:28:02 rs.TTL:20, rs.GrantedTTL:30

2018/07/09 19:28:05 rs.TTL:27, rs.GrantedTTL:30

2018/07/09 19:28:08 rs.TTL:24, rs.GrantedTTL:30

2018/07/09 19:28:11 rs.TTL:21, rs.GrantedTTL:30

2018/07/09 19:28:14 rs.TTL:28, rs.GrantedTTL:30

2018/07/09 19:28:17 rs.TTL:25, rs.GrantedTTL:30

2018/07/09 19:28:20 rs.TTL:22, rs.GrantedTTL:30

2018/07/09 19:28:23 rs.TTL:29, rs.GrantedTTL:30

2018/07/09 19:28:26 rs.TTL:26, rs.GrantedTTL:30

2018/07/09 19:28:29 rs.TTL:23, rs.GrantedTTL:30

2018/07/09 19:28:32 rs.TTL:20, rs.GrantedTTL:30

2018/07/09 19:28:35 rs.TTL:27, rs.GrantedTTL:30

2018/07/09 19:28:38 rs.TTL:24, rs.GrantedTTL:30

2018/07/09 19:28:41 rs.TTL:21, rs.GrantedTTL:30

2018/07/09 19:28:44 rs.TTL:28, rs.GrantedTTL:30

2018/07/09 19:28:47 rs.TTL:25, rs.GrantedTTL:30

2018/07/09 19:28:50 rs.TTL:22, rs.GrantedTTL:30

2018/07/09 19:28:53 rs.TTL:29, rs.GrantedTTL:30

2018/07/09 19:28:56 rs.TTL:26, rs.GrantedTTL:30

2018/07/09 19:28:59 rs.TTL:23, rs.GrantedTTL:30

2018/07/09 19:29:02 rs.TTL:20, rs.GrantedTTL:30

2018/07/09 19:29:05 rs.TTL:27, rs.GrantedTTL:30

2018/07/09 19:29:08 rs.TTL:24, rs.GrantedTTL:30

2018/07/09 19:29:11 rs.TTL:21, rs.GrantedTTL:30

2018/07/09 19:29:14 rs.TTL:29, rs.GrantedTTL:30

2018/07/09 19:29:17 rs.TTL:29, rs.GrantedTTL:30

2018/07/09 19:29:20 rs.TTL:29, rs.GrantedTTL:30

...

- at 19:29:14 , 19:29:17 , 19:29:20 and others, rs.TTL was 29 all of them.

- Grant ttl 30s

- 19:29:14 - 19:26:32 ≈ 160s

- lease keepalive request will send every

nextKeepAlive := time.Now().Add((time.Duration(karesp.TTL) * time.Second) / 3.0) - lease request was 10s in my case

- 10 * leaseResponseChSize = 160s

- rs.TTL was 29 always.

- clientv3 send leaseKeepalive request twice every second

cause:

- clientv3/lease.go line 472

// send update to all channels

nextKeepAlive := time.Now().Add((time.Duration(karesp.TTL) * time.Second) / 3.0)

ka.deadline = time.Now().Add(time.Duration(karesp.TTL) * time.Second)

for _, ch := range ka.chs {

select {

case ch <- karesp:

ka.nextKeepAlive = nextKeepAlive

default:

}

}

- NextKeepAlive is assigned to ka.nextKeepAlive only after the ch channel is successfully written.

- but the cap of ch channel was 1,It means that ch only be written once。

- And the length of ka.chs is fixed ,

leaseResponseChSize = 16,at clientv3/lease.go line 222ch := make(chan *LeaseKeepAliveResponse, leaseResponseChSize) - send request of lease keepalive at clientv3/lease.go line 514 , like this

// sendKeepAliveLoop sends keep alive requests for the lifetime of the given stream.

func (l *lessor) sendKeepAliveLoop(stream pb.Lease_LeaseKeepAliveClient) {

for {

var tosend []LeaseID

now := time.Now()

l.mu.Lock()

for id, ka := range l.keepAlives {

if ka.nextKeepAlive.Before(now) {

tosend = append(tosend, id)

}

}

l.mu.Unlock()

for _, id := range tosend {

r := &pb.LeaseKeepAliveRequest{ID: int64(id)}

if err := stream.Send(r); err != nil {

// TODO do something with this error?

return

}

}

select {

case <-time.After(500 * time.Millisecond):

case <-stream.Context().Done():

return

case <-l.donec:

return

case <-l.stopCtx.Done():

return

}

}

}

- every ch channel was full of chs slices, and

ka.nextKeepAlivenot to be assigned, Its value is still the last value, an expiration time . and matchif ka.nextKeepAlive.Before(now), It will add totosend slice. - clientv3/lease.go line 522 will send this request of lease keepalive

- After 500 millisecond ,run next loop,send it,and keep loop

fix

- what ever

nextKeepAliveshould be assigned toka.nextKeepAlivealways, - this bug is the cause of #9812 from my colleague,and I'll record the details of this bug this week.

All 15 comments

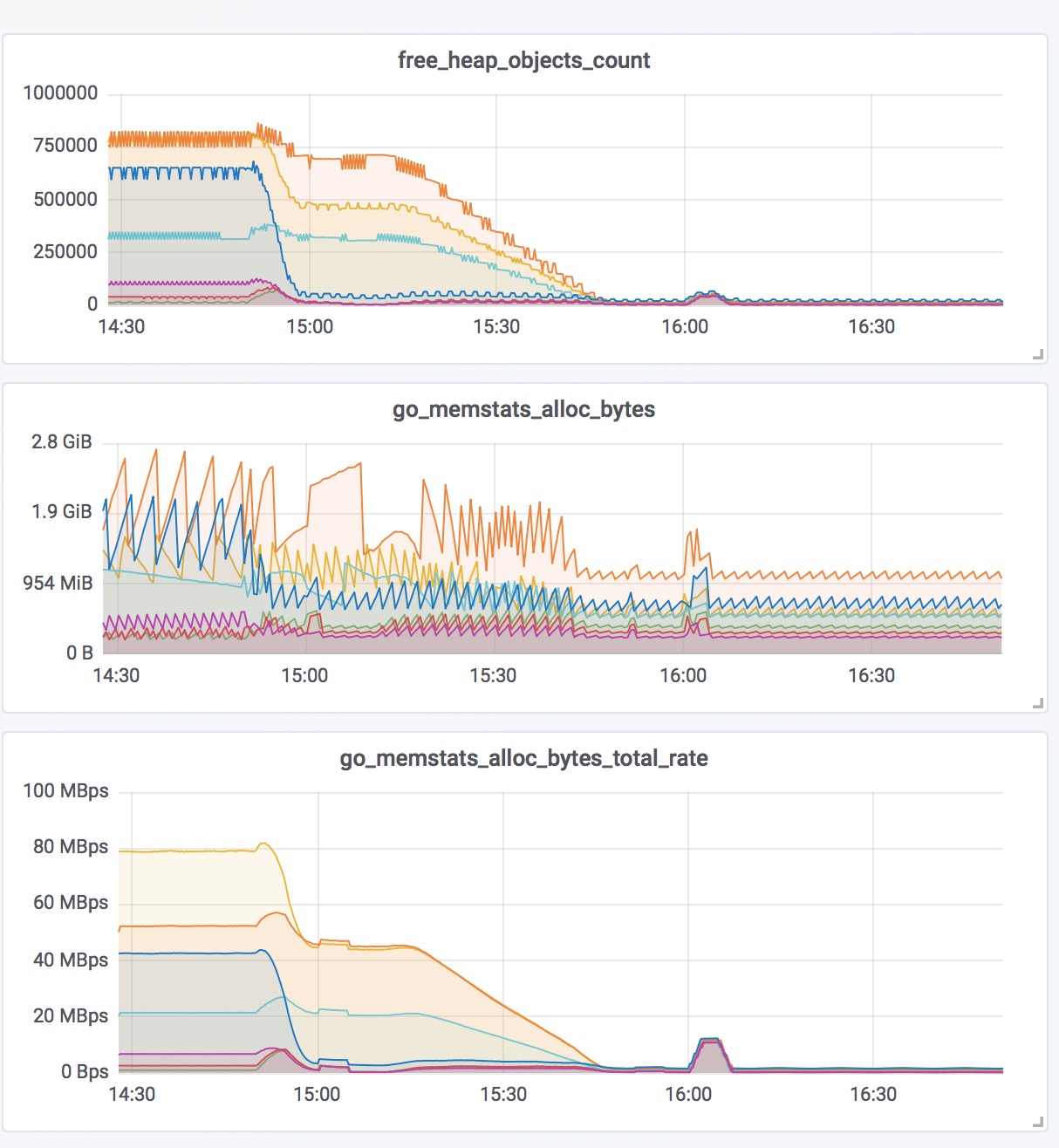

in this case (server memory highly load due to too many connections):

- 14K clients, grants a lease id everyone with 10s ttl,and keepalived

- 14k * 2 = 28k keepalive requests every second send to etcd member on port 2379

- etcd member create http post request for every lease keepalive request,and send to etcd leader on port 2380 with

http.RoundTripfunction - etcd leader recive them,and call

recvBuffer.putfunction to save tobacklog []recvMsg - etcd leader can't process these requests quickly,and it causing requests to accumulate,and causing memory usage more and more.

- the connection of client between etcd member use http2 grpc, so it can be reused

- the connection of etcd member between etcd leader use http1.1,It can't be reused when have not response from etcd leader.and it will create a tcp connection for every http request(lease keepalive).

- etcd leader will close tcp socket after return response ,and there are a lot of tcp socket with

TIME-WAITstatus - etcd member will close tcp socket with tls timeout limit, and there are more

CLOSE-WAITstatus tcp socket on etcd leader, becuse etcd leader was so busy..

so, It happened...

hi, @gyuho @xiang90 Is that right?

I deploy a new version without lease to my 14K servers.there are some graphs about performance monitoring of all servers.

If that is.

- can we need something to do for etcd overload protection?

- change

recvBuffer.backlogtochan recvMsg ,MAX_CHAN_SIZEfrom[]recvMsg? or others? - use

http2for member connection to leader ?

@cfc4n Could you also try latest 3.3?

OK,I'll try it today.

hi, @gyuho I test it in v3.3.8 ,and

2018/07/11 12:23:16 注册成功 success: /cnxct/cfc4n/keyname1

2018/07/11 12:23:19 rs.TTL:26, rs.GrantedTTL:30

2018/07/11 12:23:22 rs.TTL:23, rs.GrantedTTL:30

2018/07/11 12:23:25 rs.TTL:20, rs.GrantedTTL:30

2018/07/11 12:23:28 rs.TTL:28, rs.GrantedTTL:30

2018/07/11 12:23:31 rs.TTL:25, rs.GrantedTTL:30

2018/07/11 12:23:34 rs.TTL:22, rs.GrantedTTL:30

2018/07/11 12:23:37 rs.TTL:29, rs.GrantedTTL:30

2018/07/11 12:23:40 rs.TTL:26, rs.GrantedTTL:30

2018/07/11 12:23:43 rs.TTL:23, rs.GrantedTTL:30

2018/07/11 12:23:46 rs.TTL:20, rs.GrantedTTL:30

2018/07/11 12:23:49 rs.TTL:27, rs.GrantedTTL:30

2018/07/11 12:23:52 rs.TTL:24, rs.GrantedTTL:30

2018/07/11 12:23:55 rs.TTL:21, rs.GrantedTTL:30

2018/07/11 12:23:58 rs.TTL:28, rs.GrantedTTL:30

2018/07/11 12:24:01 rs.TTL:25, rs.GrantedTTL:30

2018/07/11 12:24:04 rs.TTL:22, rs.GrantedTTL:30

2018/07/11 12:24:07 rs.TTL:29, rs.GrantedTTL:30

2018/07/11 12:24:10 rs.TTL:26, rs.GrantedTTL:30

2018/07/11 12:24:13 rs.TTL:23, rs.GrantedTTL:30

2018/07/11 12:24:16 rs.TTL:20, rs.GrantedTTL:30

2018/07/11 12:24:19 rs.TTL:27, rs.GrantedTTL:30

2018/07/11 12:24:22 rs.TTL:24, rs.GrantedTTL:30

2018/07/11 12:24:25 rs.TTL:21, rs.GrantedTTL:30

2018/07/11 12:24:28 rs.TTL:28, rs.GrantedTTL:30

2018/07/11 12:24:31 rs.TTL:25, rs.GrantedTTL:30

2018/07/11 12:24:34 rs.TTL:22, rs.GrantedTTL:30

2018/07/11 12:24:37 rs.TTL:29, rs.GrantedTTL:30

2018/07/11 12:24:40 rs.TTL:26, rs.GrantedTTL:30

2018/07/11 12:24:43 rs.TTL:23, rs.GrantedTTL:30

2018/07/11 12:24:46 rs.TTL:20, rs.GrantedTTL:30

2018/07/11 12:24:49 rs.TTL:27, rs.GrantedTTL:30

2018/07/11 12:24:52 rs.TTL:24, rs.GrantedTTL:30

2018/07/11 12:24:55 rs.TTL:21, rs.GrantedTTL:30

2018/07/11 12:24:58 rs.TTL:28, rs.GrantedTTL:30

2018/07/11 12:25:01 rs.TTL:25, rs.GrantedTTL:30

2018/07/11 12:25:04 rs.TTL:22, rs.GrantedTTL:30

2018/07/11 12:25:07 rs.TTL:29, rs.GrantedTTL:30

2018/07/11 12:25:10 rs.TTL:26, rs.GrantedTTL:30

2018/07/11 12:25:13 rs.TTL:23, rs.GrantedTTL:30

2018/07/11 12:25:16 rs.TTL:20, rs.GrantedTTL:30

2018/07/11 12:25:19 rs.TTL:27, rs.GrantedTTL:30

2018/07/11 12:25:22 rs.TTL:24, rs.GrantedTTL:30

2018/07/11 12:25:25 rs.TTL:21, rs.GrantedTTL:30

2018/07/11 12:25:28 rs.TTL:28, rs.GrantedTTL:30

2018/07/11 12:25:31 rs.TTL:25, rs.GrantedTTL:30

2018/07/11 12:25:34 rs.TTL:22, rs.GrantedTTL:30

2018/07/11 12:25:37 rs.TTL:29, rs.GrantedTTL:30

2018/07/11 12:25:40 rs.TTL:26, rs.GrantedTTL:30

2018/07/11 12:25:43 rs.TTL:23, rs.GrantedTTL:30

2018/07/11 12:25:46 rs.TTL:20, rs.GrantedTTL:30

2018/07/11 12:25:49 rs.TTL:27, rs.GrantedTTL:30

2018/07/11 12:25:52 rs.TTL:24, rs.GrantedTTL:30

2018/07/11 12:25:55 rs.TTL:21, rs.GrantedTTL:30

2018/07/11 12:25:58 rs.TTL:29, rs.GrantedTTL:30

2018/07/11 12:26:01 rs.TTL:29, rs.GrantedTTL:30

2018/07/11 12:26:04 rs.TTL:29, rs.GrantedTTL:30

2018/07/11 12:26:07 rs.TTL:29, rs.GrantedTTL:30

2018/07/11 12:26:10 rs.TTL:29, rs.GrantedTTL:30

in my test-case , TTL always was 29 after 160s (30/3*16) , because client will send lease keepalive every 500ms, So this bug also exists in v3.3.8 , maybe in all v3.3.* ...

@cfc4n Ok, will take a look. Currently busy with fixing some other issues in client. Thanks!

waiting for your good news...

@cfc4n

your application should consume the chan returned here: https://github.com/coreos/etcd/blob/master/clientv3/lease.go#L133

you are right about the assignment issue

Can you send a PR to fix it? Please also add an integration test. Thanks!

@gyuho This is a bug. We need to backport the fix.

@cfc4n @xiang90 Ack. Will investigate this with high priority, and backport whenever fix is ready.

@xiang90 @gyuho OK,I'll send a PR later. Thanks for reply.

@cfc4n I can reproduce. Let me fix soon.

@cfc4n We have released https://github.com/coreos/etcd/blob/master/CHANGELOG-3.3.md#v339-2018-07-24 with the fix. Please try.

@gyuho It is fixed.Thanks.

Can you tell me what this tool is ?It's your own developed?

Most helpful comment

@cfc4n Ok, will take a look. Currently busy with fixing some other issues in client. Thanks!