Esp-idf: PSRAM Cache Issue still exists (IDFGH-31)

We stumbled upon the fact that a cache issue with PSRAM still exists, even in the newest development environment. This can produce random crashes, even if the code is 100% valid.

This very small example program reproduces the problem easily, at least if compiled with the newest ESP-IDF and toolchain under Mac OS X (we did not try other environments):

https://github.com/neonious/memcrash-esp32/

(As a side note: We noticed this problem when we implemented the dlmalloc memory allocator in a fork of ESP-IDF. We worked around this problem (hopefully you can fix it correctly), and now have an ESP-IDF with far faster allocations. Take a look at the blog post here: https://www.neonious-basics.com/index.php/2018/12/27/faster-optimized-esp-idf-fork-psram-issues/ ).


@neoniousTR Hi, neoniousTR, thanks for reporting this. We will look into it and update you when we have any feedback. There is also a topic about the issue on our forum at http://bbs.esp32.com/viewtopic.php?f=13&t=8628&sid=1acc8bd897e72cf450ad9eb71491d732. Thanks.

We updated the example project at https://github.com/neonious/memcrash-esp32

It is now leaner, more to the point, and most importantly, compiles out of the box.

We think this problem is urgent to fix, as random crashes can occur to anyone using the PSRAM of the ESP32.

There seem to be only two workarounds:

- Use only the first 2 MB of 4 MB of PSRAM (big penalty)

- End every function which stores to PSRAM with a memw instruction (slow). nops do not help.
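The second workaround can be sketched roughly as below. This is a minimal illustration, not the code from the example project: `PSRAM_STORE_BARRIER` and `psram_fill_words` are hypothetical names, the buffer is only assumed to live in PSRAM, and on non-Xtensa targets the macro falls back to a compiler-only barrier so the file still builds on a host.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* On Xtensa, MEMW orders all earlier memory accesses before later ones.
 * On any other target this sketch falls back to a compiler-only barrier
 * so that it still builds on a host machine. */
#if defined(__XTENSA__)
#define PSRAM_STORE_BARRIER() __asm__ __volatile__("memw" ::: "memory")
#else
#define PSRAM_STORE_BARRIER() __asm__ __volatile__("" ::: "memory")
#endif

/* Hypothetical helper: fill a buffer that is assumed to live in PSRAM,
 * then issue one barrier before returning to the caller. */
static void psram_fill_words(uint32_t *dst, uint32_t value, size_t count)
{
    assert(dst != NULL);
    for (size_t i = 0; i < count; i++) {
        dst[i] = value;
    }
    PSRAM_STORE_BARRIER(); /* the "memw at the end of the function" */
}
```

The cost is one extra serialising instruction per call, which is why it is described as slow for small, frequently called functions.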

Please take a look at the project, and hopefully you have a better idea.

Fyi, we're working on this. For what it's worth, it seems to be caused by an interrupt (in your example, the FreeRTOS tick interrupt) firing while some cache activity is going on. We have our digital team running simulations to see what exactly is going on in the hardware; we hope to create a better workaround than the memw solution from that.

Good that you can reproduce this.

Interrupts are a good explanation for why this happens only randomly.

Hoping for the best.

We seem to be seeing this as a std::string memory corruption (all zeros, on a 4 byte boundary).

In our case, disabling the top 2MB of SPIRAM didn't seem to work. But pre-allocating 2MB (which we then never use) seemed to workaround the problem. Our code runs primarily on core #1.
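A rough sketch of such a pre-allocation is shown below. It assumes ESP-IDF's `heap_caps_malloc` with `MALLOC_CAP_SPIRAM`; the helper name `reserve_psram_half` is hypothetical, and whether the reserved block actually lands in the intended 2 MB half depends on heap layout and allocation order, which this sketch does not verify. Outside ESP-IDF it falls back to plain `malloc` so it still compiles.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

#ifdef ESP_PLATFORM
#include "esp_heap_caps.h"
#define RESERVE_ALLOC(size) heap_caps_malloc((size), MALLOC_CAP_SPIRAM)
#else
/* Host fallback so the sketch builds outside ESP-IDF. */
#define RESERVE_ALLOC(size) malloc(size)
#endif

#define RESERVED_BYTES (2u * 1024u * 1024u)

/* Block that is allocated once and never touched again. */
static void *s_reserved_block = NULL;

/* Hypothetical helper: call once, early at startup, before any other
 * PSRAM allocation. Returns 1 on success, 0 if the allocation failed. */
static int reserve_psram_half(void)
{
    assert(s_reserved_block == NULL); /* only reserve once */
    s_reserved_block = RESERVE_ALLOC(RESERVED_BYTES);
    return s_reserved_block != NULL;
}
```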

This is impacting us quite badly. Lots of random corruptions and crashes with devices in the field.

Maybe whether the top or bottom of the RAM works depends on the core used.

Confirmed: running our test project from #3006 with that 2 MB allocation and also starting the test task on core 0 shows the corruptions again. It seems core 0 can only work reliably with the lower 2 MB and core 1 only with the higher 2 MB.

@Spritetm or @Alvin1Zhang

As this issue does not happen in single core mode, do you know if the original PSRAM cache issue, which is fixed with the flag that adds many nops and memws, also occurs only in dual core mode?

If so, we will try to switch low.js to single core mode. This might even be faster in the end, because the JavaScript itself is single core anyhow and has the most load.

Also, how is the progress going? I'd think the best chance of getting this fixed is by modifying the interrupt handlers or the cache fetchers and savers (are they part of the ROM?).

Hello, I wanted to add an error I observed in my application using PSRAM: a random error while retrieving the amount of free PSRAM memory. In my application, I check the amount of free PSRAM memory in the main loop. Occasionally the reply was, for example, 16 bytes of free memory, but at the next check it reported the actual value of ~4 MB.

In my opinion, there must have been an erroneous random reading from PSRAM.

Our current status:

Load to cache/Write from cache does not seem to be interrupt-based. Might be 100% hardware-based?

Adding memw to interrupt handlers does not change anything.

Currently we believe Dual Core + PSRAM is a broken combination.

So we will completely switch to Unicore now.

Please answer:

Do you know if the original PSRAM cache issue also exists in unicore mode? Would be great if we can get rid of the nops and memws with this once and for all...

I ran the following experiment: I rewrote the String class from the Arduino project to use PSRAM memory. I changed the name and changed the realloc in the changebuffer function. It soon turned out that my application irregularly showed 0x00 in one cell.

here is this changed String class: https://github.com/xbary/xb_StringPSRAM

If you replace the String class with StringSRAM as part of your tests, you may be able to reproduce the error repeatably.

I have to confirm that the original PSRAM workaround is still required in Unicore mode.

So Workaround + Unicore is the only combination which works reliably with PSRAM. If I am wrong, I hope somebody will post. Otherwise we have to take this as a fact ...

Can dual core chip be switched to unicore mode?

Does this error manifest itself in Arduino framework? As far as I know, Arduino task is pinned to core 0, therefore, it can be effectively viewed as unicore. Am I right?

Yes the CPU can be configured for unicore.

Pinning to one core does not help, as in dual core mode the other 2 MB are handled by the other core.

FWIW, we have a tentative solution for this; the existing workaround solution does actually seem to work but doesn't take calls/returns into account properly. We'll ship a toolchain with improved workaround code soon, but we want to have this fairly well tested so we don't have any other edge cases sneaking past us. I'll see if I can post a preliminary patch as soon as I have something halfway stable.

Do you have any idea of the schedule for this, or an ability to get us a pre-release toolchain?

This is impacting us quite badly. The 2MB pre-allocation solves the problem for our code, but just shifts the problem to wifi running on the first core (which now experiences random errors and throughput problems).

I confirm, the error still occurs at random moments, even hangs completely.

Do you have any idea of the schedule for this, or an ability to get us a pre-release toolchain?

@Spritetm We do appreciate your efforts in making sure your patch is perfect. But meanwhile our system has to bear a huge performance hit by the workaround, while the stability is still impacted by the bug. We're more than willing to help you in beta testing your patch by using it on our project. Please do a pre-release or share some update on the status. Thanks!

I also think an update is in order. We are waiting to see whether you are able to fix this bug or whether it makes the PSRAM feature unusable.

We need more RAM than internal available in the ESP32, so this is a deal breaker for our product.

Just wondering why, if the original workaround should work in this case, forcing nops does not resolve it.

400d4b3c: 1047a5 call8 400e4fb8 <crash_set_both>

400d4b3f: f03d nop.n

400d4b41: f03d nop.n

400d4b43: f03d nop.n

400d4b45: f03d nop.n

400d4b47: 0228 l32i.n a2, a2, 0

400e4fb8 <crash_set_both>:

400e4fb8: 004136 entry a1, 32

400e4fbb: 0249 s32i.n a4, a2, 0

400e4fbd: 0349 s32i.n a4, a3, 0

400e4fbf: f03d nop.n

400e4fc1: f03d nop.n

400e4fc3: f03d nop.n

400e4fc5: f03d nop.n

400e4fc7: f01d retw.n

400e4fc9: 000000 ill


400d4e86: 03a9 s32i.n a10, a3, 0

400d4e88: 0020c0 memw

400d4e8b: 01a9 s32i.n a10, a1, 0

400d4e8d: f03d nop.n

400d4e8f: f03d nop.n

400d4e91: f03d nop.n

400d4e93: f03d nop.n

400d4e95: 0020c0 memw

400d4e98: 0138 l32i.n a3, a1, 0

From what I can see, the load-store inversion doesn't occur there exactly... the issue has something to do with a cache miss around that time that has delayed effects later. Because a cache miss takes a while to resolve, you can fix it with nops but you'd need to put a gazillion of them there.

I also noticed the nop/memw interaction... will see if I can get rid of that as well, insofar as gcc marks it. (As in: I can probably detect volatiles that cause an implicit memw, but a literal asm("memw") is harder to spot.)

Ok thanks. Other things I noticed when playing with the memcrash-esp32 example:

- If running on core 0 error will occur with mem2 (lower) in HIGH-LOW mode

- If running on core 1 error will occur with mem1 (upper) in HIGH-LOW mode

- If running on core 0 error will occur with both mem1 & mem2 even in EVEN-ODD mode

- If running on core 1 error will occur with both mem1 & mem2 odd in EVEN-ODD mode

- Error does not seem to happen in NORMAL mode on either core ( I don't know if this is supported or just giving a false result)

Assuming running NORMAL mode is valid workaround with a performance trade-off, how will it compare to performance of the planned workaround?

More info: the memw in the example actually does not prevent the error when the routine is running in parallel on both cores. It is much more infrequent but still happens.

Normal mode does have a performance cost as it is around 24% fewer tries/ms with the example on both cores, but no errors.

Normal mode has cache coherency issues according to documentation, so not an option.

Yes I see, makes sense. Back to the drawing board then.

Okay, I have a toolchain update that has a different psram workaround. You can grab the binaries for Linux, Windows or OSX. By default, it should still use the existing workaround; switch to the new one by putting

CFLAGS+=-mfix-esp32-psram-cache-dupldst

CXXFLAGS+=-mfix-esp32-psram-cache-dupldst

into your Makefile (or do the equivalent for cmake), recompile and test.

Note that this is not a 100% fix, as the binary libraries (wifi/bt/libstdc/libc++/libgcc) at the moment are not compiled with this workaround, but I don't think I have seen any issues that originated there yet, so as a first test this should cover most use cases.

Thank you.

Can you explain the workaround? What does it do / what penalty does it have?

It's actually almost shamefully trivial for how well it works... every store of a 32-bit value to a memory location that is not volatile will have a load from that memory location appended to it. I haven't run any benchmarks, but I don't think it affects speed more than the NOP solution. In theory, it may break storing a word to a peripheral (as the load after may trigger something), but as those should be declared volatile, I don't think that happens in practice.

It also includes a fix for a different issue, by the way: there's a workaround where a memw is inserted after an 8/16-bit store in some situations, but the workaround code didn't handle the end of functions correctly. That's been fixed as well.

Thank you.

Is there a schedule for when the fixed binaries will be available? Is it realistic that all code in ROM will be patched, too? (Btw, was this already done completely with the last psram workaround? This would be good, because then we should not expect more code to be patched, just a new patch, correct?)

We first need to know if this fix 1. actually fixes the issue and 2. doesn't introduce more problems than it fixes. Once that's established, we'll also recompile all binary libraries with it. The amount of fixed code should indeed be the same as the existing psram fix.

The windows binaries have symlinks instead of copies.

The good news: using the workaround my test project from #3006 no longer produces the corruptions in the char buffer tests (run_test_buffer).

The bad news: the std::string tests have corruptions as before. Did you build the included libstdc++ using the workaround? As we mostly use std::string for buffers, we won't see much of an improvement without a fixed std::string class.

Update: looking into the toolchain I found a secondary build of the libs in xtensa-esp32-elf/xtensa-esp32-elf/sysroot/lib/esp32-psram, so I gave those linker precedence and voila: the std::string tests now also work without corruptions!

So it seems the -m flag misses setting that path. I've added this to my main/component.mk:

COMPONENT_ADD_LDFLAGS = -lmain -L$(HOME)/esp/xtensa-esp32-elf/xtensa-esp32-elf/sysroot/lib/esp32-psram

There surely is a better method to add this to the project Makefile I haven't found yet.

I'll now try building our main project with the workaround.

Not a single data corruption yet & no new issues so far, I'm publishing the build to our beta testers now.

~Yeah it only happens on the order of 1 per million tries when hammering the cache from both cores so it may be very rare in a practical application~

@neoniousTR makes an interesting point about passing an extram pointer to a rom function which may perform a store and then return to user code which performs a load

My other question would be whether the compiler is aware of our extra load and since a store/load is the trigger can we guarantee that the register will maintain its value since it may be used again in subsequent instructions?

@negativekelvin That doesn't mesh with my tests. What setup do you use? I tried the sources at https://github.com/neonious/memcrash-esp32/ with only the Makefile modified to use the master esp-idf and to add the -mfix-esp32-psram-cache-dupldst flag. I've run the test for a good million ticks now and haven't seen any errors.

Wrt ROM functions: All the relevant ROM functions (mostly Newlib) get placed in flash with the workaround compiled in when the PSRAM workaround is enabled. That's the case for the old workaround and will also be done for the new workaround as soon as I have an indication it works well enough and doesn't lead to other issues. Same goes for all other libraries.

@Spritetm my version here https://github.com/negativekelvin/memcrash-esp32

Thanks, that one also fails for me. I'll look into what the issue is there...

Edit: Errm... Wouldn't your test fail anyway if both cores decide to write to the same random address, even if the hardware works OK? I think that's the issue, as if I modify the task on core 0 to only write to even words and core 1 to only write to odd words, I get no errors anymore.
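The even/odd split described here could look roughly like the following. This is a sketch rather than the code from either test repository: `pick_word_index` is a hypothetical helper, and it assumes an even number of words and exactly two cores.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Map a random number to a word index that only one core may write:
 * core 0 gets even indices, core 1 gets odd indices, so the two test
 * tasks can never race on the same word.
 * word_count is assumed to be even and at least 2. */
static size_t pick_word_index(uint32_t rnd, size_t word_count, unsigned core_id)
{
    assert(word_count >= 2 && (word_count % 2) == 0);
    assert(core_id < 2);
    size_t pair = rnd % (word_count / 2); /* which even/odd pair */
    return pair * 2 + core_id;            /* even for core 0, odd for core 1 */
}
```

With disjoint index sets, any mismatch between the value written and the value read back can no longer be explained by the other task overwriting the word.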

Ah ok I think you got me there, ...brain fart. I guess it is working then!

Just want to mention I am still seeing an error in EVENODD mode on the order of 1 in 100 million tries. I don't have any particular need to use this mode though.

That EVENODD does not work 100% makes me wonder: could the fix fail if the address of the appended load is in the other half of the memory from the address being written? Right on the boundary?

Not sure, there are definitely more boundaries in EVENODD but the extra generated load is from the same address as the store.

As of now I also have observed 11/3 errors respectively on each core out of 18 billion tries using LOWHIGH.

Can anyone else reproduce this on their hardware?

Test results so far: improved stability & network performance, no new issues. Looks very good.

@negativekelvin I can replicate the few-times-in-a-billion error with your code. It's a weird error: while the previous errors could be influenced by interrupts (as in: more interrupts = more errors), this error is impervious to interrupt flooding, which makes me think it's caused by something else.

New version of the toolchain is here: Linux MacOS Windows (note the Windows toolchain probably still has symlinks instead of copies)

In this version, the load-after-store is the default psram fix; there's no need to use -mfix-esp32-psram-cache-dupldst in your Makefile anymore. The old fix still is available: specify -mfix-esp32-psram-cache-nops to use it. Because of this change, all the compiler libraries (libgcc, libstdc++ etc) should also be compiled with the new fix.

A few times in a billion IMHO is too much. Customers expect uptimes of years (our PC servers manage that easily), and if such an error propagates to the file system, data might even be corrupted.

Have you tried turning down the MHz? Maybe it is simply a bad signal problem.

Question 2: I think there are binary blobs in ESP-IDF, too, such as Wifi? If I am correct, how are these updated?

@neoniousTR Agreed entirely, and we're not done with this issue yet. However, seeing that this already is a pretty large improvement on the previous situation and I can see the remaining issue potentially taking a fair while to catch, I'd rather start giving out beta tests and updating early. WiFi and Newlib need to be updated in an esp-idf release; as soon as the gcc release is stable I'll see if I can get those recompiled as well.

@Spritetm: I just sent you a message via the contact form of your website SpritesMod.com, as I did not find any other way to contact you in private. Please look for this mail. Thank you!

Another build; this one should be pretty close to final. The once-a-billion problem is fixed, thanks to some simulation and creative thinking on the side of my digital colleagues. Seems doing store-load-store is a robust solution here. Also included in this new build is a fix for an edge case where the original patch erroneously thought a memw wasn't necessary.

Linux

MacOS

Windows (note the Windows toolchain probably still has symlinks instead of copies)

Either we need to do something different than before or something's wrong with that -95 version compared to the previous -93 version: simply rebuilding our project with the -95 leads to a crash reboot loop.

The crash happens right after the scheduler startup:

I (395) cpu_start: Starting scheduler on PRO CPU.

I (0) cpu_start: Starting scheduler on APP CPU.

/home/balzer/esp/esp-idf/components/freertos/queue.c:1445 (xQueueGenericReceive)- assert failed!

abort() was called at PC 0x40094b17 on core 0

0x40094b17: xQueueGenericReceive at /home/balzer/esp/esp-idf/components/freertos/queue.c:2037

Backtrace: 0x400906fc:0x3ffcbbf0 0x400908d3:0x3ffcbc10 0x40094b17:0x3ffcbc30 0x40094c55:0x3ffcbc70 0x400855b1:0x3ffcbc90 0x400856a9:0x3ffcbcc0 0x40123e9d:0x3ffcbce0 0x40118e1d:0x3ffcbfa0 0x40118919:0x3ffcbff0 0x40092e89:0x3ffcc020 0x40093b32:0x3ffcc050 0x40094db1:0x3ffcc070 0x400959a7:0x3ffcc090 0x40095aac:0x3ffcc0c0

0x400906fc: invoke_abort at /home/balzer/esp/esp-idf/components/esp32/panic.c:670

0x400908d3: abort at /home/balzer/esp/esp-idf/components/esp32/panic.c:670

0x40094b17: xQueueGenericReceive at /home/balzer/esp/esp-idf/components/freertos/queue.c:2037

0x40094c55: xQueueTakeMutexRecursive at /home/balzer/esp/esp-idf/components/freertos/queue.c:2037

0x400855b1: lock_acquire_generic at /home/balzer/esp/esp-idf/components/newlib/locks.c:155

0x400856a9: _lock_acquire_recursive at /home/balzer/esp/esp-idf/components/newlib/locks.c:169

0x40123e9d: _vfiprintf_r at /home/jeroen/esp8266/esp32/newlib_xtensa-2.2.0-bin/newlib_xtensa-2.2.0/xtensa-esp32-elf/newlib/libc/stdio/../../../.././newlib/libc/stdio/vfprintf.c:860 (discriminator 2)

0x40118e1d: fiprintf at /home/jeroen/esp8266/esp32/newlib_xtensa-2.2.0-bin/newlib_xtensa-2.2.0/xtensa-esp32-elf/newlib/libc/stdio/../../../.././newlib/libc/stdio/fiprintf.c:50

0x40118919: __assert_func at /home/jeroen/esp8266/esp32/newlib_xtensa-2.2.0-bin/newlib_xtensa-2.2.0/xtensa-esp32-elf/newlib/libc/stdlib/../../../.././newlib/libc/stdlib/assert.c:59 (discriminator 8)

0x40092e89: vPortCPUAcquireMutexIntsDisabledInternal at /home/balzer/esp/esp-idf/components/freertos/tasks.c:3564

(inlined by) vPortCPUAcquireMutexIntsDisabled at /home/balzer/esp/esp-idf/components/freertos/portmux_impl.h:98

(inlined by) vTaskEnterCritical at /home/balzer/esp/esp-idf/components/freertos/tasks.c:4267

0x40093b32: vTaskPlaceOnEventListRestricted at /home/balzer/esp/esp-idf/components/freertos/tasks.c:3564

0x40094db1: vQueueWaitForMessageRestricted at /home/balzer/esp/esp-idf/components/freertos/queue.c:2429

0x400959a7: prvProcessTimerOrBlockTask at /home/balzer/esp/esp-idf/components/freertos/timers.c:484

0x40095aac: prvTimerTask at /home/balzer/esp/esp-idf/components/freertos/timers.c:484

Rebooting...

Chip is ESP32D0WDQ6 (revision 1)

The build from version -93 works flawlessly.

Btw, for the record: the previous builds had a few crashes left, most of them happening at random places within the LWIP subsystem while running on Wifi. Since yesterday I'm testing with CONFIG_WIFI_LWIP_ALLOCATION_FROM_SPIRAM_FIRST disabled; too little data (read: no crashes) on that config yet.

I assume the next big step in stability can now be achieved by replacing the idf blobs.

Can these patches be made available for gcc 8 as well? I'd like to test if they solve #3624.

Can we please have an update on this? I still can't use TLS on the ESP as per my previous post (tried with today's IDF, v4.0-dev-1275-gfdab15dc7, xtensa-esp32-elf-gcc (crosstool-NG esp32-2019r1) 8.2.0).

rebuilding our project with the -95 leads to a crash reboot loop.

Solved: after upgrading our esp-idf clone to v3.3-beta3, builds with toolchain -95 now work without any problems so far.

Sorry, I was too quick assuming no problems. My build just began crashing and won't stop. The -93 build before has been running fine for two days, so there still is an issue with -95.

For those of us who can't use a binary toolchain could we get the source version that results in crosstool-ng-1.22.0-93-gf6c4cdf? (And if we could get the -95 version we could investigate the boot crash that Michael reports.)

I'm currently using 1.22.0-80-g6c4433a5

A fix for version -95 would be nice, as the once-in-a-billion problem apparently occurs in real life (I've had it this morning on my production module).

On Windows using esp-idf 3.3rc, how do I update the toolchain from the xtensa-esp32-elf-win32-1.22.0-95-g5a1864d-5.2.0-psramfix-20190621.tar.gz download to test it, and how do I go back if it doesn't work?

One more update. This should fix some more edge issues (hopefully the boot crash as well...) Apologies for the slow update speed, but internal tests aren't too fast as it takes a while to generate the psram crashes, so we can only be sure after a long time of no crashing...

Linux

MacOS

Windows (note the Windows toolchain probably still has symlinks instead of copies)

Also, a new set of Newlib binaries, recompiled with the above toolchain and with some manual fixes to some assembly routines.

@Spritetm,

After updating to the provided toolchain and newlib (on ESP-IDF v4.1-dev-104-gaa087667d-dirty) I got the following:

I (0) cpu_start: Starting scheduler on APP CPU.

I (1440) spiram: Reserving pool of 32K of internal memory for DMA/internal allocations

Guru Meditation Error: Core 1 panic'ed (Interrupt wdt timeout on CPU1)

Core 1 register dump:

PC : 0x40096dd9 PS : 0x00060634 A0 : 0x80097eed A1 : 0x3ffb4d60

0x40096dd9: vPortCPUAcquireMutexIntsDisabledInternal at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:3507

(inlined by) vPortCPUAcquireMutexIntsDisabled at /home/user/esp/esp-mdf/esp-idf/components/freertos/portmux_impl.h:99

(inlined by) vTaskEnterCritical at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:4201

A2 : 0x3ffb0cac A3 : 0x0000abab A4 : 0xb33fffff A5 : 0x00000001

A6 : 0x00060623 A7 : 0x0000cdcd A8 : 0x0000cdcd A9 : 0x3ffb4d70

A10 : 0x00000002 A11 : 0x3ffc0334 A12 : 0x0001fc68 A13 : 0x00000000

A14 : 0x3ffdfff8 A15 : 0x3ffc038c SAR : 0x00000000 EXCCAUSE: 0x00000006

EXCVADDR: 0x00000000 LBEG : 0x00000000 LEND : 0x00000000 LCOUNT : 0x00000000

ELF file SHA256: 52a740c3ecf03d065edf990d89dc118a9675be21de44999e99560e357e5317ca

Backtrace: 0x40096dd6:0x3ffb4d60 0x40097eea:0x3ffb4d90 0x40095b4e:0x3ffb4db0 0x4009601c:0x3ffb4dd0 0x40096105:0x3ffb4e10 0x4009611d:0x3ffb4e30 0x400856ba:0x3ffb4e50 0x400856e8:0x3ffb4e70 0x40085849:0x3ffb4ea0 0x4013399e:0x3ffb4ec0 0x40132345:0x3ffb4fa0 0x40132299:0x3ffb4ff0 0x40096ee3:0x3ffb5020 0x40097962:0x3ffb5040 0x40085a3a:0x3ffb5060 0x400849db:0x3ffb5080 0x400953fd:0x3ffb50a0

0x40096dd6: uxPortCompareSet at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:3507

(inlined by) vPortCPUAcquireMutexIntsDisabledInternal at /home/user/esp/esp-mdf/esp-idf/components/freertos/portmux_impl.inc.h:86

(inlined by) vPortCPUAcquireMutexIntsDisabled at /home/user/esp/esp-mdf/esp-idf/components/freertos/portmux_impl.h:99

(inlined by) vTaskEnterCritical at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:4201

0x40097eea: xTaskPriorityDisinherit at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:4097

0x40095b4e: prvCopyDataToQueue at /home/user/esp/esp-mdf/esp-idf/components/freertos/queue.c:2044

0x4009601c: xQueueGenericSend at /home/user/esp/esp-mdf/esp-idf/components/freertos/queue.c:2044

0x40096105: prvInitialiseMutex at /home/user/esp/esp-mdf/esp-idf/components/freertos/queue.c:2044

0x4009611d: xQueueCreateMutex at /home/user/esp/esp-mdf/esp-idf/components/freertos/queue.c:2044

0x400856ba: lock_init_generic at /home/user/esp/esp-mdf/esp-idf/components/newlib/locks.c:79

0x400856e8: lock_acquire_generic at /home/user/esp/esp-mdf/esp-idf/components/newlib/locks.c:134

0x40085849: _lock_acquire_recursive at /home/user/esp/esp-mdf/esp-idf/components/newlib/locks.c:171

0x4013399e: _vfiprintf_r at /builds/idf/crosstool-NG/.build/src/newlib-2.2.0/newlib/libc/stdio/vfprintf.c:860 (discriminator 2)

0x40132345: fiprintf at /builds/idf/crosstool-NG/.build/src/newlib-2.2.0/newlib/libc/stdio/fiprintf.c:50

0x40132299: __assert_func at /builds/idf/crosstool-NG/.build/src/newlib-2.2.0/newlib/libc/stdlib/assert.c:59 (discriminator 8)

0x40096ee3: vPortCPUReleaseMutexIntsDisabledInternal at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:3507

(inlined by) vPortCPUReleaseMutexIntsDisabled at /home/user/esp/esp-mdf/esp-idf/components/freertos/portmux_impl.h:110

(inlined by) vTaskExitCritical at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:4261

0x40097962: xTaskResumeAll at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:3507

0x40085a3a: spi_flash_op_block_func at /home/user/esp/esp-mdf/esp-idf/components/spi_flash/cache_utils.c:201

0x400849db: ipc_task at /home/user/esp/esp-mdf/esp-idf/components/esp_common/src/ipc.c:62

0x400953fd: vPortTaskWrapper at /home/user/esp/esp-mdf/esp-idf/components/freertos/port.c:435

Core 0 register dump:

PC : 0x40096dd0 PS : 0x00060334 A0 : 0x800856a1 A1 : 0x3ffb6270

0x40096dd0: uxPortCompareSet at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:3507

(inlined by) vPortCPUAcquireMutexIntsDisabledInternal at /home/user/esp/esp-mdf/esp-idf/components/freertos/portmux_impl.inc.h:86

(inlined by) vPortCPUAcquireMutexIntsDisabled at /home/user/esp/esp-mdf/esp-idf/components/freertos/portmux_impl.h:99

(inlined by) vTaskEnterCritical at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:4201

A2 : 0x3ffb11fc A3 : 0x0000cdcd A4 : 0xb33fffff A5 : 0x00000001

A6 : 0x00060123 A7 : 0x0000abab A8 : 0x0000cdcd A9 : 0x3ffb6470

A10 : 0x3ffb0cac A11 : 0x00000000 A12 : 0x00060023 A13 : 0x00000001

A14 : 0x00000000 A15 : 0x00000000 SAR : 0x0000001e EXCCAUSE: 0x00000006

EXCVADDR: 0x00000000 LBEG : 0x4008d090 LEND : 0x4008d0a6 LCOUNT : 0xffffffff

0x4008d090: memcpy at /builds/idf/crosstool-NG/.build/src/newlib-2.2.0/newlib/libc/machine/xtensa/memcpy.S:146

0x4008d0a6: memcpy at /builds/idf/crosstool-NG/.build/src/newlib-2.2.0/newlib/libc/machine/xtensa/memcpy.S:161

ELF file SHA256: 52a740c3ecf03d065edf990d89dc118a9675be21de44999e99560e357e5317ca

Backtrace: 0x40096dcd:0x3ffb6270 0x4008569e:0x3ffb62a0 0x400856e8:0x3ffb62c0 0x40085849:0x3ffb62f0 0x4013399e:0x3ffb6310 0x40132345:0x3ffb63f0 0x40132299:0x3ffb6440 0x40095911:0x3ffb6470 0x40096a16:0x3ffb64a0 0x40095a30:0x3ffb64c0 0x400959e6:0x00000001 |<-CORRUPTED

0x40096dcd: vPortCPUAcquireMutexIntsDisabledInternal at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:3507

(inlined by) vPortCPUAcquireMutexIntsDisabled at /home/user/esp/esp-mdf/esp-idf/components/freertos/portmux_impl.h:99

(inlined by) vTaskEnterCritical at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:4201

0x4008569e: lock_init_generic at /home/user/esp/esp-mdf/esp-idf/components/newlib/locks.c:51

0x400856e8: lock_acquire_generic at /home/user/esp/esp-mdf/esp-idf/components/newlib/locks.c:134

0x40085849: _lock_acquire_recursive at /home/user/esp/esp-mdf/esp-idf/components/newlib/locks.c:171

0x4013399e: _vfiprintf_r at /builds/idf/crosstool-NG/.build/src/newlib-2.2.0/newlib/libc/stdio/vfprintf.c:860 (discriminator 2)

0x40132345: fiprintf at /builds/idf/crosstool-NG/.build/src/newlib-2.2.0/newlib/libc/stdio/fiprintf.c:50

0x40132299: __assert_func at /builds/idf/crosstool-NG/.build/src/newlib-2.2.0/newlib/libc/stdlib/assert.c:59 (discriminator 8)

0x40095911: vPortCPUAcquireMutexIntsDisabledInternal at /home/user/esp/esp-mdf/esp-idf/components/freertos/portmux_impl.inc.h:106

(inlined by) vPortCPUAcquireMutexIntsDisabled at /home/user/esp/esp-mdf/esp-idf/components/freertos/portmux_impl.h:99

(inlined by) vPortCPUAcquireMutex at /home/user/esp/esp-mdf/esp-idf/components/freertos/port.c:394

0x40096a16: vTaskSwitchContext at /home/user/esp/esp-mdf/esp-idf/components/freertos/tasks.c:3507

0x40095a30: _frxt_dispatch at /home/user/esp/esp-mdf/esp-idf/components/freertos/portasm.S:406

0x400959e6: _frxt_int_exit at /home/user/esp/esp-mdf/esp-idf/components/freertos/portasm.S:206

Our experience is similar, right back to the same unacceptable random data corruption in the field that @dexterbg saw.

-93 at least executes and is in testing. Both -95 and -98 fail with Mutex Assertion failures as noted by @no1seman .

In our case v3.3rc with the patch for issue 3865.

Thanks for reporting this. I think I saw this before, has something to do with a stub that exists in newlib that sometimes does not get linked correctly. Let me quash this, should be a pretty quick fix.

@no1seman @stuarthatchbaby In esp-idf/components/freertos/include/freertos/portmacro.h , around line 149, can you change uint32_t owner to volatile uint32_t owner, and a bit below, uint32_t count to volatile uint32_t count? That should fix it. When this issue is fixed, I'll change it in master on our side.

@Spritetm Any thoughts on xtensa-gcc 8 in regards to this issue?

@Spritetm,

Short answer: again NO. Boot now succeeds, but PSRAM errors are still present.

Long answer:

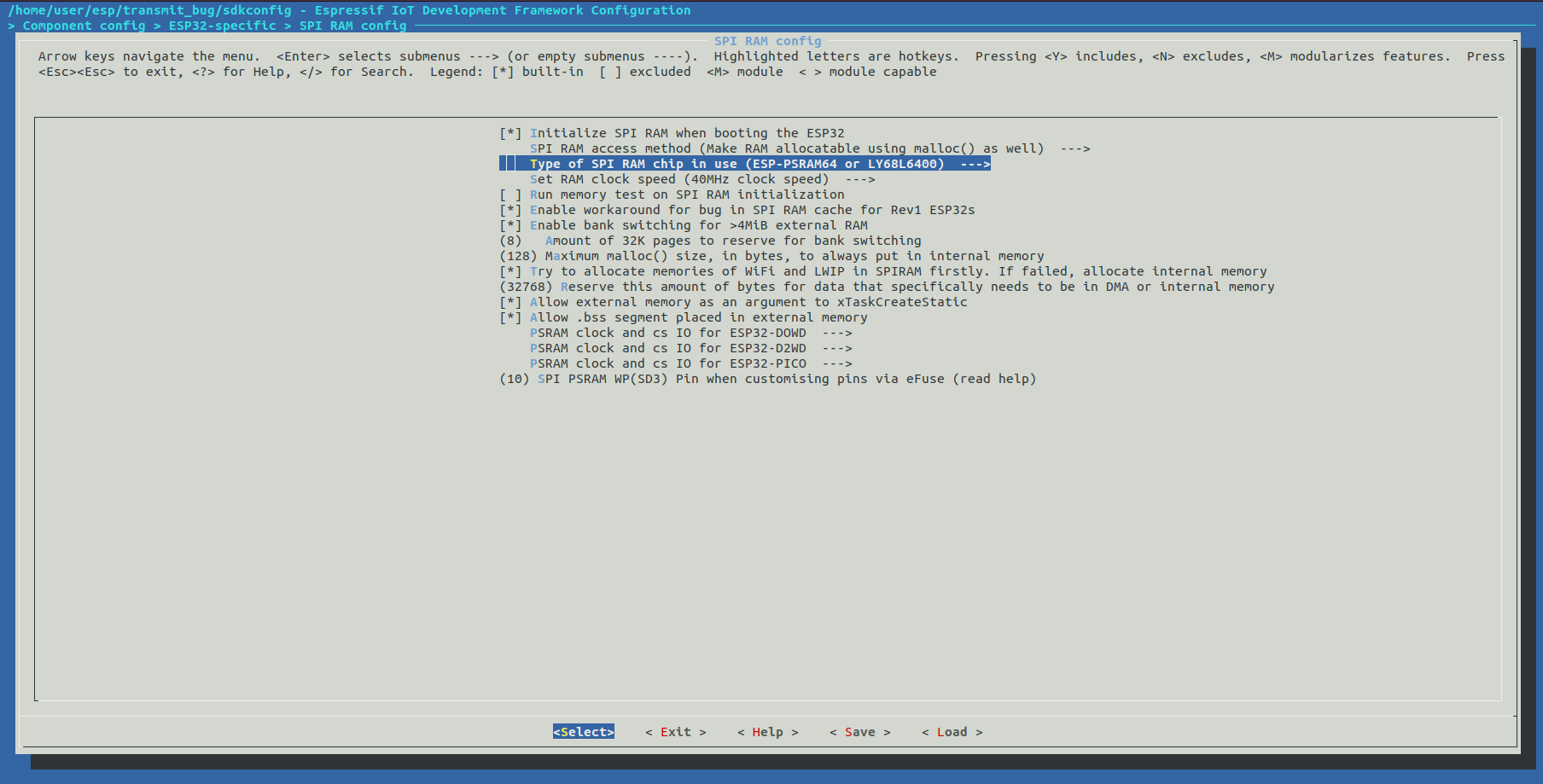

For testing I'm using an app that I wrote some weeks ago when I was exploring an HTTP bug (https://github.com/espressif/esp-idf/issues/3851): https://github.com/no1seman/transmit_bug

The logic is the following:

- Bring up WiFi STA

- Create 3 random buffers and put them into external RAM (CONFIG_SPIRAM_MALLOC_ALWAYSINTERNAL = 128, and the buffers are slightly larger)

- Bring up the HTTP server

- On each HTTP GET request, the app adds the MD5 hash of the transmitted data to the HTTP response header

- The receiving script (http_test.sh) calculates the MD5 hash after each receive and compares it with the hash from the HTTP response header.

Environment:

- Chip: ESP32D0WDQ6 (revision 1)

- PSRAM: ESP-PSRAM64H (3.3V)

- FLASH: Winbond 25Q64JVSIQ (3.3V)

- Compiler: xtensa-esp32-1.22.0-98-g4638c4f-5.2.0-20190827: https://dl.espressif.com/dl/xtensa-esp32-elf-linux64-1.22.0-98-g4638c4f-5.2.0-20190827.tar.gz

- IDF ESP-IDF v4.1-dev-104-gaa087667d-dirty

- newlib PSRAM fix: https://dl.espressif.com/dl/newlib-psram-fix-ldstld-14aug.zip

- In esp-idf/components/freertos/include/freertos/portmacro.h 2 lines changed:

uint32_t owner; => volatile uint32_t owner;

uint32_t count; => volatile uint32_t count;

esp32 log:

esp32.zip

http_test.sh script log:

script.zip

PS: If PSRAM is switched off, the test runs perfectly without any errors for several days.

Gotcha. Thanks for that, test cases that can quickly reproduce the issue are always welcome.

@Spritetm,

You're welcome!

You can also find another test, for an mqtt bug, here: https://github.com/espressif/esp-mqtt/issues/126

I'm 100% sure that bug is related to the PSRAM bug, but it's a little more difficult to reproduce.

In a few words:

The MQTT client connects to the MQTT server and the application periodically sends some messages, with esp_mqtt_client->run == 1 (located in external RAM). Then, at some unpredictable moment, esp_transport_write() returns ESP_FAIL and esp_mqtt_task() sets esp_mqtt_client->run to 0, and ... no breakpoint on writes to esp_mqtt_client->run fires. As a result, the mqtt client thinks that the connection exists, but the socket is actually already closed, so reconnection never occurs.

PS: If you have any questions or need my involvement, just let me know.

@Spritetm The change to FreeRTOS enabled us to get past the boot issue with -98. No feedback on data corruption yet.

Version -98 + volatile patch builds our main project bootable and my corruption test runs without any issues. I'll collect some more run data from our main project tomorrow.

no1seman: Wrt your http testcase: I'm having trouble reproducing it... on my system it works perfectly fine: SUCCESS: 3000 - 100%. Apart from the WiFi AP name and password, there's nothing I need to change in the config, right?

Also, small note wrt the 98 build: we have some issues with interrupt watchdog timeouts in some of the code we tested. Be aware that while it may help with the psram corruption issues, it's still a work-in-progress.

@Spritetm,

that's strange, because the problem reproduces for me on different boards (I'm using several TTGO T8 v1.7 boards: https://github.com/LilyGO/TTGO-T8-ESP32/blob/master/Schematic.pdf). Unfortunately I can't test on an Espressif DevKit right now, because I burned out 2 DevKits a few weeks ago and the new ones are on their way to me. On my test boards, this test app works perfectly only if SPIRAM is switched off.

Here is my efuse table:

efuse1.zip

Here are my SPI RAM settings:

Have you used sdkconfig provided from my test app repo?

PS: I'm away for today; I can look at the problem more deeply only tomorrow.

UPD: What test results do you get with a clean ESP-IDF v4.1-dev-104-gaa087667d & esp32-2019r1-8.2.0?

Yes, I used the sdkconfig of the test repo. And I have tried with the -98 release I posted earlier in this issue. The 8.2.0 toolchain can indeed lead to issues as it doesn't have all the fixes yet; we'll update that as soon as the 5.2.0 workaround is entirely fleshed out.

@Spritetm,

excuse me, it's my fault: I set the wrong SPI PSRAM WP (SD3) pin (it's GPIO7 for the DevKit, but for the TTGO it should be GPIO10, because WP is connected to GPIO10).

After fixing that, with toolchain xtensa-esp32-elf-linux64-1.22.0-98-g4638c4f-5.2.0-20190827, newlib fix newlib-psram-fix-ldstld-14aug and the portmacro.h fix (uint32_t owner; => volatile uint32_t owner; uint32_t count; => volatile uint32_t count;), the test became more stable, but I got the following errors in a 10K-iteration test (it happened after 3336 iterations):

I (1677479) example: Processing request 'index.html.gz' took 20 ms

W (1677539) wifi: alloc eb len=24 type=3 fail, heap:4040872

W (1677539) wifi: m f null

W (1677579) wifi: alloc eb len=24 type=3 fail, heap:4040540

W (1677579) wifi: m f null

I (1753049) example_connect: Wi-Fi disconnected, trying to reconnect...

W (1753049) wifi: alloc eb len=76 type=2 fail, heap:4041656

W (1753049) wifi: m f probe req l=0

I (1753049) example: Stopping webserver

I (1754139) tcpip_adapter: sta ip: 192.168.3.129, mask: 255.255.255.0, gw: 192.168.3.1

I (1754139) example: Starting webserver

I (1754139) example: Starting server on port: '80'

I (1754149) example: Registering URI handlers

I (1754169) example: GET /index.css

I (1754169) example: Get static file: index.css.gz

W (1754249) wifi: alloc eb len=36 type=3 fail, heap:4034300

W (1754249) wifi: mem fail

I (1754369) example: Sending file 'index.css.gz' took 200 ms

I (1754369) example: Processing request 'index.css.gz' took 200 ms

I (1754479) example: GET /index.js

I (1754479) example: Get static file: index.js.gz

I (1754779) example: Sending file 'index.js.gz' took 300 ms

I (1754779) example: Processing request 'index.js.gz' took 300 ms

I (1754809) example: GET /index.html

I (1754819) example: Get static file: index.html.gz

I (1754839) example: Sending file 'index.html.gz' took 20 ms

I (1754839) example: Processing request 'index.html.gz' took 30 ms

W (1754909) wifi: alloc eb len=24 type=3 fail, heap:4040872

W (1754909) wifi: m f null

W (1754919) wifi: alloc eb len=24 type=3 fail, heap:4040516

W (1754919) wifi: m f null

Here is the full log:

http_bug_psram_bug_update_0.zip

The build with -98 and volatile patch has been running for 24h without issues, I'm rolling this out to beta testers now.

@Spritetm , @negativekelvin ,

oh no, again... you are right, it's my fault again: it's a memory leak in my test app. After fixing it, a 12-hour run was OK.

Waiting for final release for GCC 5.2.0 and also for GCC 8.2.0

Thanks in advance

What can I do now to update software built with ESP IDF 3.3 release that is going into the field in the next week, heavily uses PSRAM, but doesn't crash that I know of? Using Windows and unsure of the toolchain update procedure and how to revert if something goes wrong. Or if I should do nothing and will fixes be rolled out some other way?

Real world feedback from our beta testers: the build from toolkit -98 with the new libs is the most stable build we ever had. It will be rolled out to all users in a few days.

@dexterbg,

that's really good news, but -98 is the solution for GCC 5.2.0. What's going on with the GCC 8.2.0 solution?

Thanks for the feedback. As soon as we have time, we'll make an official release for the 5.2.0 GCC and also forward-port the changes to 8.2.0.

@Spritetm Any chance that this fix will be included in the upcoming release of gcc 8 which includes #3624 ? It'd be really great as these two issues are such show stoppers for anyone wanting to use PSRAM and gcc8.

We all get it - analysing and ensuring that whatever solution is found actually solves the underlying issue takes time; especially for problems of this nature. It needs to take time, this can't be rushed. But when you after nearly two years seemingly dangle the solution in front of the community - people who believe in the product and spend huge amount of time giving feedback, reporting bugs and submitting pull requests for the betterment of your product, it raises a few eyebrows.

Believe me, I know full well that it often is not up to the developers what to fix first - some large customer wants this or that and some manager pushes resources towards that instead of what the development team knows is actually important for the product.

I hold no grudges towards the Espressif developers frequenting GH, I'm sure they are doing their best within whatever constraints they have. In fact, I think they deserve praise for putting up with all the questions and (often user-caused) problems being thrown at them.

However, the hard truth is that this issue effectively renders the ESP32 unusable for what is likely a large part of the user base, more so than any other reported issue I've seen, be it a bug in a peripheral driver, some quirk with the new CMake system or whatever.

_A device that can't be relied upon not to corrupt itself is simply unusable for production._

I don't know how to put it in clearer terms than that - we need this issue resolved to make the devices usable. And we need it sooner rather than later.

So can we please have some feedback on when the solution will be available to us (gcc 5 and 8 both)?

Well, someone had to write that. Unfortunately. Should be obvious, perhaps it's not.

Apparently rev3 will fix these issues in hardware but it is unclear what production plan is for rev2/rev3

https://github.com/espressif/esp-idf/commit/751b60b17188d28732ee64443063d644c925cd44

I'm using GCC 5.2 and the ESP-IDF 3.3 release on Windows and need to do something now for beta testing, so I used 7zip in admin mode to extract the toolchain to C:\msys32\opt\xtensa-esp32-elf-new. It is 300MB smaller than the 80 toolchain in C:\msys32\opt\xtensa-esp32-elf. I can rename that older folder and compilation still works (after changing the path, and it gives a warning that it is using 98). I presumed that, given its smaller size, 98 was using symbolic links to reuse parts of the 80 toolchain, but if I can rename the 80 toolchain folder, it seems not? I need a sanity check. I've never used symbolic links and they seem like shortcuts, but in this case it seems to be getting 300MB of toolchain from thin air?

That is awesome, but also raises doubt whether the issues will ever be fully resolved for rev1. ESP32 is supposed to be production ready, not a public beta.

At this point I won't trust the new toolchain will actually fix all the problems for rev1, even after an official release. Perhaps it'll work better, but still not 100% stable.

Espressif is going to have a not so fun time explaining that to existing customers. Just think about how many devices are out there.

I must agree that we have no proof that this fix really is a fix, rather than something that just makes a failure less likely: writing twice => 1% chance of failure * 1% chance of failure => 0.01% chance of failure

It seems stable, but there is just a smaller chance of failure.
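To put numbers on that argument, here is a rough sketch. It takes the 1% per-access figure from the comment above and additionally assumes that the duplicated accesses fail independently, which nobody in this thread has shown. The figures are illustrative, not measured.

```python
def residual_failure_chance(p_single: float, copies: int = 2) -> float:
    """Chance that all `copies` redundant accesses fail, assuming independence."""
    return p_single ** copies


def chance_of_any_failure(p_per_op: float, operations: int) -> float:
    """Chance of at least one failure over many independent operations."""
    return 1.0 - (1.0 - p_per_op) ** operations


p = residual_failure_chance(0.01)  # 1% * 1%
print(f"per access: {p:.4%}")
print(f"over 10,000 accesses: {chance_of_any_failure(p, 10_000):.1%}")
```

Under these assumptions the per-access chance drops to 0.01%, but over 10,000 accesses the chance of at least one failure is still about 63%: a workaround that only lowers the odds can look stable in short tests and still fail in the field.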

This problem does not seem to occur in unicore mode. Maybe that is why the upcoming flagship ESP32-S2 is reported to be single core only.

Because of all of this, I would be more interested in an official statement on whether Espressif knows of any other problems that occur in unicore mode. If not, we will just continue to treat the ESP32 as a single-core platform, as we do right now with low.js.

No, it definitely is not stable yet. It's much more stable than before, but the bug still occurs. It's been reported by users and just an hour ago it happened on my own production module, leading to a false alert. A single cleared sensor value within an array of values that are read in blocks → most probably the bug.

@dexterbg Worst part is, you never really know if it's the bug, or some other race.

In this case, there is just one writer to that array, that gets the values in blocks directly from the CAN bus (single frames), and if it was some pointer running wild it would trash more than one byte. But I agree, in most cases you will simply get some random data corruption and/or crashes that simply cannot be tracked down.

@neoniousTR restricting to single core isn't sufficient, you also need to restrict the RAM usage to the corresponding "safe" half of the SPIRAM → https://github.com/espressif/esp-idf/issues/2892#issuecomment-459120605

Our tests showed it is sufficient. The 2 MB split only happens on dual core. We've tested lots of scenarios (the test app and the starting post of this issue were written by me)... And low.js has been extremely stable since we went to single core.

Wonder why you think differently..?

PS: It is not sufficient to just use one core, you have to configure unicore mode.

Sorry, my fault, just read through your later findings – I didn't test unicore mode.

That's sad news @dexterbg. I really wish Espressif could wake up and give this some needed attention and tell us how they intend to handle this going forward. This goes far beyond it being an inconvenience, but I think I've made that clear already.

Is there anything to suggest that 8 and 16 bit operations on PSRAM are corrupted, or just 32 bit?

I need to revise my last post (https://github.com/espressif/esp-idf/issues/2892#issuecomment-543833524).

As @szmodz said so wisely: you never really know if it's the bug. On further investigation I traced that wrong sensor value down to a hardware bug in the ESP32 CAN controller. I've submitted an issue & workaround on that: https://github.com/espressif/esp-idf/issues/4276

So that actually wasn't the SPIRAM bug, and therefore I need to revert my statement about the stability of the fix.

TL;DR: there are currently no detectable stability issues with toolkit -98 on our project.

That makes me very happy. I just finished something else and was about to get to packaging the toolchain for more general release, and I already was afraid to have to go through the entire process of finding something to get this stable all over again. Thanks for the update.

@Spritetm Will there be an update to the ESP32 errata regarding this issue?

https://www.espressif.com/sites/default/files/documentation/eco_and_workarounds_for_bugs_in_esp32_en.pdf

It would be nice to have an official statement regarding effects on unicore vs multicore operation, etc.

Preferably including performance impact regarding issue 3.10

I've tried the -98 toolchain with updated newlib and volatile patches on ESP-IDF v3.3. Unfortunately, my tests (verifying data received over MQTT) still fail with some regularity.

Based on some comments above, I removed the -mfix-esp32-psram-cache-issue flag. Is that still required?

Or does the fix only work for ESP-IDF v4.0?

@rohansingh mfix-esp32-psram-cache-issue is still required, but even then your best bet is running in single core mode. If you still get issues in single core mode, it's probably (but not certainly) an issue on your side.

@szmodz Got it. I added the flag back but still hit the issue. I'm actually able to reproduce it very quickly, under a normal non-benchmark workload.

I'll try with unicore now. If that doesn't work, I guess I'm just going to have to update critical operations to use internal memory and treat external RAM as an untrustworthy cache.

@rohansingh if you're able to reproduce it quickly, then it's most likely (really) something on your side. This is not something that is easily reproducible (and that's part of the problem).

@szmodz Hmm, the symptoms I'm seeing are that bytes become zeroed out after a memcpy, and that this only happens when using PSRAM. Additional details here:

https://github.com/GoogleCloudPlatform/iot-device-sdk-embedded-c/issues/90

Basically though, it's the same issue as #3006. I'm very happy to consider the possibility that it's not that issue and that it's something else on my end. But having buffers corrupted by a simple memcpy makes me think that it's not my code at fault.

We are 100% stable with single core + mfix-esp32-psram-cache-issue in hundreds of installations. To be honest, however, low.js is not based on the newest ESP-IDF: we forked off 3.0 a long time ago, because most development in ESP-IDF seemed either not relevant for us or dangerous for stability, and we did many fixes in-house.

@rohansingh perhaps you should check that the correct versions of libc and libgcc are getting linked in. There were some issues with the idf linking incorrect versions.

@neoniousTR Thanks for the info, good to hear that things are working for someone.

I spent this morning testing with ESP-IDF v3.3, volatile patch, updated newlib binaries, the -mfix-esp32-psram-cache-issue flag, the -98 toolchain, and CONFIG_FREERTOS_UNICORE. Basically, everything and the kitchen sink. The issue still occurs.

I'm using an MQTT library that copies received message payloads into a buffer, 32 bytes at a time. This results in a large number of memcpy operations for a single message. A significant percentage of these messages end up corrupted by one of those operations, with some bytes that were previously copied successfully being replaced with \0. This happens more under load.
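One way to characterise that symptom on the host side is to compare each received payload against the payload that was sent and list the positions where a non-zero byte arrived as \0. This is a hypothetical diagnostic helper, not part of the MQTT library or ESP-IDF; the 32-byte chunk size is taken from the description above.

```python
def zeroed_offsets(expected: bytes, actual: bytes) -> list:
    """Offsets where a non-zero expected byte arrived as 0x00."""
    return [
        i
        for i, (e, a) in enumerate(zip(expected, actual))
        if e != 0 and a == 0
    ]


def affected_chunks(offsets, chunk_size: int = 32) -> list:
    """Indices of the chunk_size-byte copy operations containing a zeroed byte."""
    return sorted({off // chunk_size for off in offsets})
```

Sending payloads without any zero bytes makes every \0 in the received data suspicious, and mapping offsets to chunk indices shows whether the damage lines up with individual copy operations.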

So either this is some completely different issue, or this toolchain fix is not effective. For now I've just switched to only use MALLOC_CAP_INTERNAL for this MQTT library. It masks the issue but sucks to have to use internal memory.

@rohansingh That's interesting. Do you think you could reduce that code as much as possible and post here?

That shouldn't be too difficult, it's a pretty bland usage of the esp-google-iot library. The only thing I'm doing to trigger the issue is sending a bunch of MQTT messages to a device very quickly.

@rohansingh perhaps you should check that the correct versions of libc and libgcc are getting linked in. There were some issues with the idf linking incorrect versions.

Good call, I'll double-check. Hopefully my build system just has some issue and is linking in the wrong version, that would be a good result :)

Turns out that the wrong version of libc was getting linked in, without the PSRAM fixes. Major mea culpa, apologies to everyone and thanks a ton to @szmodz for pointing me down that path.

I've been using PlatformIO as my build system, and it had a number of issues that I had to address to make sure the correct libs are linked in. Everything is running beautifully now.

@rohansingh No worries, we all jump to conclusions sometimes and I think it's even more understandable in this case. That being an understatement.

Is there an update to when this will be officially available in the ESP-IDF or some official instructions on using it, and whether or not it's actually fixed? I'm experiencing an issue in production that I think may be related to this bug and I'm wondering if it's time to try to integrate the changes.

See also https://github.com/espressif/esp-idf/issues/4561#issuecomment-571756918

Is the gcc8 version still happening? With rev3 there will be decreasing motivation (ha) to ship this.

The lack of response really is weird. I am really wondering if you found a case which still is not fixed and if you are trying to somehow silence this...

Please confirm this is NOT the case...

In case I am lucky, I am currently reviewing the status, checking if it is OK to switch back to dual core. GCC5 is fine for me, apart from one compilation error with asio it seems to compile the newest ESP-IDF master.

However, I am wondering, what about libstdc++ and the binary blobs (wifi?). Don't they need to be updated, too, to be stable?

I really hope for an answer here...

Hi folks, update on the status (was some delay because of CNY and stuff):

- The fix will be included in gcc8. We're preparing a release as we speak.

- We decided to go with the alternative memw-based workaround by default (mfix-esp32-psram-cache-memw on gcc5-98 toolchain) as the dupldst workaround had some side-effects affecting hardware encryption (specifically, it interacted with the workaround for a dport bug we used there). The dupldst workaround will still be selectable if you have more faith in that and enable software encryption.

Binary blobs for at least the git master of esp-idf will be recompiled with this fix as well; I think a workaround-enabled version of libstdc++ is automatically generated in the same way as newlib by the toolchain build process.

So you are saying the dupldst workaround will interact with mbedtls? Can you give more information on how exactly? Which dport bug workaround exactly?

The bug is https://www.espressif.com/sites/default/files/documentation/eco_and_workarounds_for_bugs_in_esp32_en.pdf section 3.10. For most peripherals, we work around this by halting the other CPU, and this works fine. However, for the encryption hardware, this gives a significant speed penalty, so instead we use some fine-tuned assembly to make sure we don't trigger the bug. However, the dupldst workaround interferes with this in some as-of-yet unknown way. Note that we know this because some testcases specifically designed to squirrel out this failure failed; it's unknown how often this problem happens in practice.

(Note that we also have been testing the memw workaround in production code internally for a while; this is less of a last-minute change than the announcement here may make it look.)

It is important for me to understand this..

Please explain one more detail: Which code are you talking about exactly with hardware encryption? mbedtls has aes.c and sha.c, but this code is not exactly timed ASM code, just normal C code.

Can you point exactly to the code which behaves weird?

Thank you.

Sorry, I misquoted the ECO, it's not 3.3 but item 3.10. The testcase that fails is in esp-idf/components/mbedtls/test/test_apb_dport_access.c . Somewhere in the mbed-tls logic, some assembly is called, I'll see if I can find it back...

It would be great if you can find it. Because I am not sure what ASM has to do with this, the test case is also C (maybe some ASM in a macro?)

I would like to test a few things myself and understand this better, because I expect the memw workaround to be slower than the dupldst version. Do you think the same?

When writing/reading large bits of data, the hardware encryption port bits in mbedtls access the hardware using e.g. esp_dport_access_read_buffer, which is defined in esp-idf/components/esp32/dport_access.c. The assembly snippets used are there as well. The memw and dupldst seem to differ in speed depending on what the workload is, in my experience; in a fair amount of cases, memw actually seems to be the faster workaround.

For someone like me who has an increasing number of devices in the field using ESP IDF 3.3 release with all the fixes I could find here about 2 months ago, and no problems AFAIK, but heavily using PSRAM, do I need to take any action?

Hi there,

first things first: Thank you all for your investigations and hard work on fixing this issue. We have been following you and reporting about this at [1] and [2] since August 2019 after being hit by the same issue when working on a versatile MicroPython datalogger for Pycom devices [3].

When we found @pycom updated their most recent development firmware release 1.20.2 to be based upon IDF v3.3.1 just a few minutes ago [4], we compared the changes we made to our Dragonfly builds [5] coming from this conversation with the changes now coming from upstream [6,7]. It looks like most of the details outlined within this discussion have been incorporated here and there.

However, we found two details which might still be unaddressed.

a) We found @pycom would still use the 1.22.0-80 toolchain in contrast to the 1.22.0-98 toolchain recommended here and used for building our Dragonfly firmware images. At least, this is what the commit message within https://github.com/pycom/pycom-micropython-sigfox/commit/35d737fc says. I am thinking about telling them about this detail. cc @husigeza, @Xykon

b) @Spritetm: We don't see your recommendations at https://github.com/espressif/esp-idf/issues/2892#issuecomment-525697255 regarding the volatile changes within portmacro.h anywhere within the Espressif IDF yet as outlined by @dexterbg within https://github.com/openvehicles/esp-idf/commit/8c015a9fffe566eb22c5df2892caa3ac352be6cd, by @rohansingh within https://github.com/tidbyt/esp-idf/commit/61a766cb21c4bcb40d2a511b2455215106eed42c and (just recently) by @husigeza within https://github.com/pycom/pycom-esp-idf/pull/18. Might this be just another glitch or isn't this required anymore to mitigate this issue?

Thanks already for taking the time to look into this.

With kind regards,

Andreas.

[1] https://community.hiveeyes.org/t/random-memory-corruption-faults-on-esp32-wrover-rev-1-and-rev-2-when-running-in-dual-core-mode/2515

[2] https://community.hiveeyes.org/t/investigating-random-core-panics-on-pycom-esp32-devices/2480

[3] https://github.com/hiveeyes/terkin-datalogger

[4] https://community.hiveeyes.org/t/pycom-firmware-release-1-20-2/2945

[5] https://community.hiveeyes.org/t/dragonfly-firmware-for-pycom-esp32/2746

[6] https://github.com/pycom/pycom-micropython-sigfox/pull/394

[7] https://github.com/pycom/pycom-esp-idf/tree/idf_v3.3.1

Hi @Spritetm, I am trying to understand if this bug is likely present in my work. ECO ( https://www.espressif.com/sites/default/files/documentation/eco_and_workarounds_for_bugs_in_esp32_en.pdf ) section 3.9 says "This bug is automatically worked around when external SRAM use is enabled in ESP-IDF V3.0 and newer." but my understanding is that this fix requires a new toolchain, and the ESP-IDF docs for v3.3.1, which is what I am using, do not reference a new toolchain.

Can you please provide a summary of the state of this bug, whether it is really fixed in v3.3.1 or not, and if there is anything users need to do to get the fix, if it is available?

@szmodz the toolchain update has been merged and will appear on GitHub as soon as we resolve some unrelated CI failures. That should not take more than a couple of days. After that we will also backport the update to v4.1 and v4.0.

@igrr Thanks. I've noticed the toolchain archives are already up.

Updated on the master branch in https://github.com/espressif/esp-idf/commit/e5dab771dd31c3b3034c3a18975bd2fd4b5fd085.

So far dupldst seems to be much more stable in my experience.

@igrr what is the latest commit containing static libs built with 2019r2? Anything earlier than https://github.com/espressif/esp-idf/commit/e5dab771dd31c3b3034c3a18975bd2fd4b5fd085 ?

@szmodz I think Wi-Fi/bt libraries are not rebuilt with 2020r1 yet. So, all commits up until the tip of the master branch.

@Spritetm @igrr I've been following from the start, and it is still not clear if this issue is documented in the ECO document. Is it considered the same as bug 3.10? If so it clearly isn't fixed in IDF 3.0.

Is silicon v3 affected or can it be used safely?

Hello!

I see that the esp-2020r2 toolchain is out, and it states that it "Fixes the PSRAM workaround". What exactly does this mean?

When linking an application binary which uses PSRAM, should the libraries from the "esp32-psram" folder(s) be used (e.g. libc.a, libgcc.a, libstdc++.a)?

Hi @geza-pycom, the previous release (2020r1) had two issues related to the PSRAM workaround, which were identified and fixed by @szmodz: https://github.com/espressif/esp-idf/issues/5090. The fixes were incorporated and released in 2020r2.

When linking the application, GCC will automatically use correct library paths when -mfix-esp32-psram-cache-issue flag is present on the linker command line. You can verify this as follows:

$ xtensa-esp32-elf-gcc -print-file-name=libc.a

/home/user/.espressif/tools/xtensa-esp32-elf/esp-2020r2-8.2.0/xtensa-esp32-elf/bin/../lib/gcc/xtensa-esp32-elf/8.2.0/../../../../xtensa-esp32-elf/lib/libc.a

$ xtensa-esp32-elf-gcc -mfix-esp32-psram-cache-issue -print-file-name=libc.a

/home/user/.espressif/tools/xtensa-esp32-elf/esp-2020r2-8.2.0/xtensa-esp32-elf/bin/../lib/gcc/xtensa-esp32-elf/8.2.0/../../../../xtensa-esp32-elf/lib/esp32-psram/libc.a

If you are using a custom build system, ensure that -mfix-esp32-psram-cache-issue is passed to the final linking command.
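For a custom build system, that check can be automated. The following is a minimal sketch, assuming POSIX-style paths like the ones printed above and that the compiler is reachable under the name passed in; the helper names are made up for illustration and are not part of ESP-IDF or the toolchain.

```python
import subprocess
from pathlib import PurePosixPath


def uses_psram_libs(lib_path: str) -> bool:
    """True if the resolved library lives in an 'esp32-psram' directory."""
    return "esp32-psram" in PurePosixPath(lib_path.strip()).parts


def resolve_lib(gcc: str, flags: list, lib: str = "libc.a") -> str:
    """Ask GCC which file it would link for `lib` with the given flags."""
    out = subprocess.run(
        [gcc, *flags, f"-print-file-name={lib}"],
        check=True, capture_output=True, text=True,
    )
    return out.stdout.strip()
```

A build script could then fail early if `uses_psram_libs(resolve_lib("xtensa-esp32-elf-gcc", ["-mfix-esp32-psram-cache-issue"]))` is false, using the same flags it passes to the final link step.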

@sinope-db You are right, we'll update the ECO document to mention the actual versions where the issue is fixed.

In the v3 silicon, the issue has been fixed, and the workaround is no longer required.

@igrr Are these fixes available for the LTS 3.3.x releases? The docs for 3.3.2 seem to still refer to the older toolchain: https://dl.espressif.com/dl/xtensa-esp32-elf-osx-1.22.0-80-g6c4433a-5.2.0.tar.gz

For 3.x releases, we don't have an updated toolchain build, yet. It is in progress, and this issue will be updated when the update happens. We will be keeping the GCC 5.2 + newlib 2.2.0 based toolchain in 3.x, since an update to GCC 8 + newlib 3 would be a breaking change.

Sounds good @igrr - thank you for the update!

@vonnieda the toolchain has been updated in release/v3.3 branch by commit https://github.com/espressif/esp-idf/commit/f4333c8e3a554e8bb4210d825cbdf4bcaa1fc1b8, this will be included into the next bugfix release.

That's great @igrr - thank you very much! I will start testing immediately!

@igrr Why toolchain 1.22.0-96-g2852398 instead of 1.22.0-98-g4638c4f?

@dexterbg I think that @Spritetm built the earlier version from commit 4638c4f of crosstool-ng, which happened to be 98 commits ahead of the 1.22.0 tag. When the commit history was cleaned up (and some additional fixes were cherry-picked), we ended up with a smaller number of commits, resulting in 1.22.0-96-g2852398, i.e. commit 2852398, 96 commits on top of the 1.22.0 tag.

We recognize this git describe based versioning scheme may be confusing. Starting from IDF v4.0, toolchain releases use a simpler scheme (year + release number). When backporting these fixes to IDF 3.x branches, we have opted for keeping the original versioning scheme, as changing it could cause breakage in various scripts relying on specific version string format.
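To make the scheme concrete, here is a small sketch that splits such a version string into its parts. The regular expression covers only the `<tag>-<count>-g<hash>` form quoted in this thread; the type and function names are made up for illustration.

```python
import re
from typing import NamedTuple


class ToolchainVersion(NamedTuple):
    tag: str       # crosstool-ng base tag, e.g. "1.22.0"
    commits: int   # number of commits on top of the tag
    commit: str    # abbreviated commit hash


_DESCRIBE = re.compile(r"^(?P<tag>\d+(?:\.\d+)*)-(?P<n>\d+)-g(?P<sha>[0-9a-f]+)$")


def parse_describe(version: str) -> ToolchainVersion:
    """Split a git-describe style toolchain version into its components."""
    m = _DESCRIBE.match(version)
    if m is None:
        raise ValueError(f"not a git-describe style version: {version!r}")
    return ToolchainVersion(m["tag"], int(m["n"]), m["sha"])
```

Note that the commit count is not a release ordering: `parse_describe("1.22.0-96-g2852398").commits` is smaller than that of `1.22.0-98-g4638c4f`, even though -96 is the later build, so scripts should not compare these numbers to decide which toolchain is newer.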

@igrr The volatile fix from https://github.com/espressif/esp-idf/issues/2892#issuecomment-525697255 is not included in the current esp-idf 3.3 release. Is this no longer needed?

@Spritetm can probably say for sure (i'm not very familiar with that part), but i think the default workaround used in the new toolchain is "memw", which doesn't need this volatile fix.

Hello @igrr,

As a final verdict, does this mean that to fix the issue:

- On esp-idf 3.x: crosstool-ng-1.22.0-96-g2852398 should be used instead of 1.22.0-98-g4638c4f ?

- On esp-idf 4.x: latest crosstool to be used

Thanks !

@geza-pycom That's right!

For 3.x versions, one additional step which hasn't been done yet is to update all the prebuilt libraries (libc, Wi-Fi and Bluetooth libraries). This issue will be updated when that step is finished.

It seems that the latest changes (https://github.com/espressif/esp-idf/issues/5090#issuecomment-613669668) added in the 2020r2 and crosstool-ng-1.22.0-96-g2852398 releases have introduced a regression in the PSRAM fixes. In some cases where a memory barrier has to be inserted, it is no longer inserted.

This has been reported in https://github.com/espressif/esp-idf/issues/5423, and we have now reproduced this situation in our testing. We are looking for the cause and will update the issue as a solution is available. Most likely, it will involve making another toolchain release. Sorry for the extra time this will take.

@igrr do you have an ETA for the toolchain release?

@stuarthatchbaby Which IDF version are you using at the moment? If it is v4.0 and later, we recommend using the 2020r1 release for now. For v3.3, we can prepare a release early next week.

@igrr We're on 4.0.1 now, but seeing possible VFS and select errors per https://github.com/espressif/esp-idf/issues/5423 . 2020r2 solves these issues in our testing but has the PSRAM regression mentioned above and causes us the tiT aborts referenced.

I see, in that case I suggest temporarily going back to the 2020r1 release, as that is going to fix both the issue with 8-bit load/store (tiT abort) and the issue with 16-bit load/store (VFS/select errors).

@igrr We're using crosstool-ng-1.22.0-96 currently and have had a few strange issues we haven't been able to track down yet. Would you recommend switching back to the crosstool-ng-1.22.0-98 pre-release until the fix is available?

@igrr, hi, I have tested three toolchains: 2020-r2 (has szmodz's commits), 2020-r1 (up to Jeroen's commit), and 2020-r1.5 (like 2020-r2, but without szmodz's commits). The test results show that 2020-r2 reproduces the PSRAM bug, while 2020-r1 and 2020-r1.5 both solve it. Besides, I cannot reproduce the bug szmodz mentioned. So I think we can now switch to 2020-r1.5.

Hi @wanglei-esp

For most users here, it's difficult to understand your reply without knowing the commit you mentioned.

Could you provide a link to szmodz's commit?

@AxelLin, sorry for that. szmodz's commits are: https://github.com/espressif/gcc/commit/f015747f8dfbd582a0e1f9b49764618cab97a36b

https://github.com/espressif/gcc/commit/71e42061265b39b20bbc8e3f7530405ad9068236

https://github.com/espressif/gcc/commit/1a58a05701edeca928f667175c938ca319b3d6db

I think these three commits introduced a regression in the PSRAM fixes, so I have built a toolchain based on 2020-r2 with these three commits reverted. I have tested it, and it solves the PSRAM bug.

2020r3 toolchain has been released, which has above mentioned commits reverted. Release notes are here: https://github.com/espressif/crosstool-NG/releases/tag/esp-2020r3.

release/v4.0 branch has been updated to use the new toolchain: https://github.com/espressif/esp-idf/commit/6093407d7876a9ea43f2a23ac9e8d22e2e1a60d0.

Similar updates to master, v4.1, v4.2, v3.3 are in progress.

Toolchain updated in master branch with https://github.com/espressif/esp-idf/commit/439f4e4f70ef5423ccfdc706902372ac3aded144.

Toolchain updated in release/v4.1 branch with https://github.com/espressif/esp-idf/commit/c7ba54ed73b95406c6bcc9fc0220c99eeabe0445.

Hello @igrr, we're working with v3.3 but also experience issues with regard to PSRAM (interestingly enough, with and without a PSRAM chip attached). It only happens in release mode; the debug build works just fine.

Could this be the same issue? What is the roadmap for updating the toolchain/fix for v3.3?

v4.2 9f0c564de4ca91634083c209d152794063ff123c

v3.3 81da2bae2aa3e15a1793d40bb9648620f8cd2555 @Curclamas, does this fix the issue in v3.3?

@AxelLin I started using the PSRAM a couple of days ago and started having weird issues with MQTT (from the IDF) on version v3.3. I tried updating the compiler to the one from the linked commit, but that didn't help... After that I tried forcing the MQTT allocation to use the DMA RAM and the issue disappeared. So my guess is the issue is not fixed :(
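For readers who want to try the same diagnostic step, here is a minimal sketch of forcing an allocation into DMA-capable RAM, which is internal on the ESP32, instead of PSRAM. It uses `heap_caps_malloc()` and `heap_caps_free()` from ESP-IDF's `esp_heap_caps.h`. The wrapper names `app_alloc_internal` and `app_free_internal` are hypothetical, and how the MQTT component was actually redirected is not stated in the comment above. On a host build without ESP-IDF, the sketch falls back to plain `malloc`.

```c
#include <stddef.h>
#include <stdlib.h>

/* Use the ESP-IDF capability allocator when its header is available. */
#if defined(__has_include)
#if __has_include("esp_heap_caps.h")
#include "esp_heap_caps.h"
#define APP_HAVE_HEAP_CAPS 1
#endif
#endif

/* Hypothetical wrapper: allocate from DMA-capable (internal) RAM so the
 * buffer never lands in external PSRAM. Returns NULL on failure. */
void *app_alloc_internal(size_t size)
{
#ifdef APP_HAVE_HEAP_CAPS
    return heap_caps_malloc(size, MALLOC_CAP_DMA | MALLOC_CAP_8BIT);
#else
    return malloc(size); /* host fallback, no PSRAM to avoid */
#endif
}

/* Hypothetical wrapper: release memory obtained from app_alloc_internal(). */
void app_free_internal(void *ptr)
{
#ifdef APP_HAVE_HEAP_CAPS
    heap_caps_free(ptr);
#else
    free(ptr);
#endif
}
```

Moving buffers to internal RAM only sidesteps the symptom and costs scarce internal memory, so it is better suited to narrowing down a suspected PSRAM problem than to serving as a permanent fix.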

@tmihovm2m Did you enable CONFIG_SPIRAM_CACHE_WORKAROUND and do a full rebuild?

@dexterbg Hmm, I thought it was enabled, but I guess I accidentally reverted the change when testing with the updated toolchain.

Sorry guys :(
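One way to guard against this kind of accidental revert is a compile-time check, sketched below. It assumes that PSRAM support is signalled by `CONFIG_SPIRAM_SUPPORT` (v3.3) or `CONFIG_ESP32_SPIRAM_SUPPORT` (v4.x) in `sdkconfig.h`; those two names are my understanding of the Kconfig options rather than something stated in this thread. The macro `APP_USES_PSRAM` and the helper `app_get_psram_state` are hypothetical.

```c
/* Pull in the project configuration when building inside ESP-IDF.
 * On a host build the header is absent and all CONFIG_* macros stay undefined. */
#if defined(__has_include)
#if __has_include("sdkconfig.h")
#include "sdkconfig.h"
#endif
#endif

/* Assumed option names: CONFIG_SPIRAM_SUPPORT (v3.3),
 * CONFIG_ESP32_SPIRAM_SUPPORT (v4.x). */
#if defined(CONFIG_SPIRAM_SUPPORT) || defined(CONFIG_ESP32_SPIRAM_SUPPORT)
#define APP_USES_PSRAM 1
#else
#define APP_USES_PSRAM 0
#endif

/* Stop the build if PSRAM is on but the cache workaround is off. */
#if APP_USES_PSRAM && !defined(CONFIG_SPIRAM_CACHE_WORKAROUND)
#error "PSRAM is enabled without CONFIG_SPIRAM_CACHE_WORKAROUND; enable it and do a full rebuild"
#endif

enum app_psram_state {
    APP_PSRAM_UNUSED = 0,          /* PSRAM support not configured */
    APP_PSRAM_WITH_WORKAROUND = 1  /* PSRAM configured, workaround enabled */
};

/* Hypothetical helper: report the configuration this file was built with. */
enum app_psram_state app_get_psram_state(void)
{
    return APP_USES_PSRAM ? APP_PSRAM_WITH_WORKAROUND : APP_PSRAM_UNUSED;
}
```

A project that targets only silicon where the cache issue is fixed in hardware may legitimately build without the workaround, in which case the `#error` guard would need to be relaxed.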

Given that the toolchain has been updated in all currently maintained releases, I will close this issue. Please open a new issue if you are seeing a PSRAM-related problem.

Thanks everyone for all the help reproducing the issue and for the great deal of patience while we were releasing the fixes.

@igrr It seems premature to close this when the toolchain released contains a critical bug as described at https://github.com/espressif/esp-idf/releases/tag/v3.3.4. I will note that the Known Issue says "difficult to reproduce", but in my use case, which is heavy WiFi and BLE, it crashes within minutes and usually under a minute. I have had to revert to a prior revision to keep my app stable.

@vonnieda Do you still see this with toolchain 1.22.0-97-gc752ad5?

@dexterbg I hadn't seen 97 yet, I will try it next week. On the commit, though, it says "Revert a part of PSRAM workaround because of regression"; so, does this mean the PSRAM issue is still not fixed in this version?

No, that means toolchain 97-gc752ad5 reverts the regression of the fix introduced in toolchain 96-g2852398 (see above). The fix is now supposed to be fully functional.

Sorry that I didn't make it clear: all release _branches_ now contain the fix, i.e. a commit which updates the toolchain version. These commits are:

- master: https://github.com/espressif/esp-idf/commit/439f4e4f70ef5423ccfdc706902372ac3aded144.

- release/v4.2: https://github.com/espressif/esp-idf/commit/9f0c564de4ca91634083c209d152794063ff123c. This commit will be part of v4.2-rc, due to be released soon.

- release/v4.1: https://github.com/espressif/esp-idf/commit/c7ba54ed73b95406c6bcc9fc0220c99eeabe0445. This commit will be part of the next v4.1.1 bugfix release.

- release/v4.0: https://github.com/espressif/esp-idf/commit/6093407d7876a9ea43f2a23ac9e8d22e2e1a60d0. This commit is part of v4.0.2 release.

- release/v3.3: https://github.com/espressif/esp-idf/commit/81da2bae2aa3e15a1793d40bb9648620f8cd2555. This commit will be part of the next v3.3.5 bugfix release.

The toolchain versions which contain the fix are esp-2020r3 based on GCC 8.4 (used in 4.x releases), and 1.22.0-97-gc752ad5 based on GCC 5.2.0 (used in 3.3 release).
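As a rough illustration, a build script or test harness could check a toolchain version string against the two identifiers named above. The sketch below is a hypothetical helper, not part of ESP-IDF. It assumes the identifier appears somewhere in the text printed by the compiler's `--version` output, and it treats any version not on the list as unverified rather than as broken.

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* Toolchain identifiers named in this thread as containing the fix. */
static const char *const k_fixed_toolchains[] = {
    "esp-2020r3",         /* GCC 8.4, used by the 4.x releases */
    "1.22.0-97-gc752ad5", /* GCC 5.2.0, used by the 3.3 release */
};

/* Hypothetical helper: returns true if version_text mentions one of the
 * toolchains listed above. Unknown or newer versions return false,
 * meaning "not verified by this list". */
bool toolchain_has_psram_fix(const char *version_text)
{
    if (version_text == NULL) {
        return false;
    }
    size_t count = sizeof k_fixed_toolchains / sizeof k_fixed_toolchains[0];
    for (size_t i = 0; i < count; i++) {
        if (strstr(version_text, k_fixed_toolchains[i]) != NULL) {
            return true;
        }
    }
    return false;
}
```

For example, `toolchain_has_psram_fix("crosstool-ng-1.22.0-96-g2852398")` returns false, matching the regression described earlier for toolchain 96.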

@igrr do you happen to have any timeline when the v3.3.5 bugfix release is scheduled?

At the moment QA is testing two bugfix releases: 4.1.1 and 3.3.5. Testing will be finished around Dec 25; if no issues are found, we will proceed with the release. 4.1.1 currently has higher priority for us, so if issues are found we will work on fixing them in release/v4.1 first. Early January is probably viable for the release. We'll try to keep you updated (cc @Alvin1Zhang).

So, this has been a problem FOR TWO YEARS. How certain are you that the early January release you speak of will actually fix the problem permanently?

It's early January now, by the way.

Hi @etherfi, some issues (unrelated to PSRAM) have been found while testing the v4.1.1 release candidate, so the v3.3.5 release is still pending while we work on fixing the issues in v4.1.1. To the best of our knowledge, no new PSRAM issue reports have appeared since we switched to this version of the compiler. That said, for new designs it is recommended to use ESP32 silicon revision 3, as it fixes the PSRAM cache issue in hardware.

Thanks!

Most helpful comment

We all get it - analysing and ensuring that whatever solution is found actually solves the underlying issue takes time, especially for problems of this nature. It needs to take time; this can't be rushed. But when, after nearly two years, you seemingly dangle the solution in front of the community - people who believe in the product and spend huge amounts of time giving feedback, reporting bugs and submitting pull requests for the betterment of your product - it raises a few eyebrows.

Believe me, I know full well that it is often not up to the developers what to fix first - some large customer wants this or that and some manager pushes resources towards that instead of what the development team knows is actually important for the product.

I hold no grudges towards the Espressif developers frequenting GH; I'm sure they are doing their best within whatever constraints they have. In fact, I think they deserve praise for putting up with all the questions and (often user-caused) problems being thrown at them.

However, the hard truth is that this issue effectively renders the ESP32 unusable for what is likely a large part of the user base, more so than any other reported issue I've seen, be it a bug in a peripheral driver, some quirk with the new CMake system or whatever.

_A device that can't be relied upon not to corrupt itself is simply unusable for production._

I don't know how to put it in clearer terms than that - we need this issue resolved to make the devices usable. And we need it sooner rather than later.

So can we please have some feedback on when the solution will be available to us (gcc 5 and 8 both)?