Eksctl: create nodegroup fails with EKS 1.18

What happened?

I upgraded my EKS cluster to 1.18 via the web console. I then tried to create a nodegroup in the cluster, but it failed. I tried with eksctl 0.29.2 and 0.30.0-rc1.

What you expected to happen?

A nodegroup to be created

How to reproduce it?

1. I had previously created a cluster with eksctl on Kubernetes 1.17.

2. Upgraded the cluster via the web console.

3. Ran eksctl create nodegroup --cluster stage

Anything else we need to know?

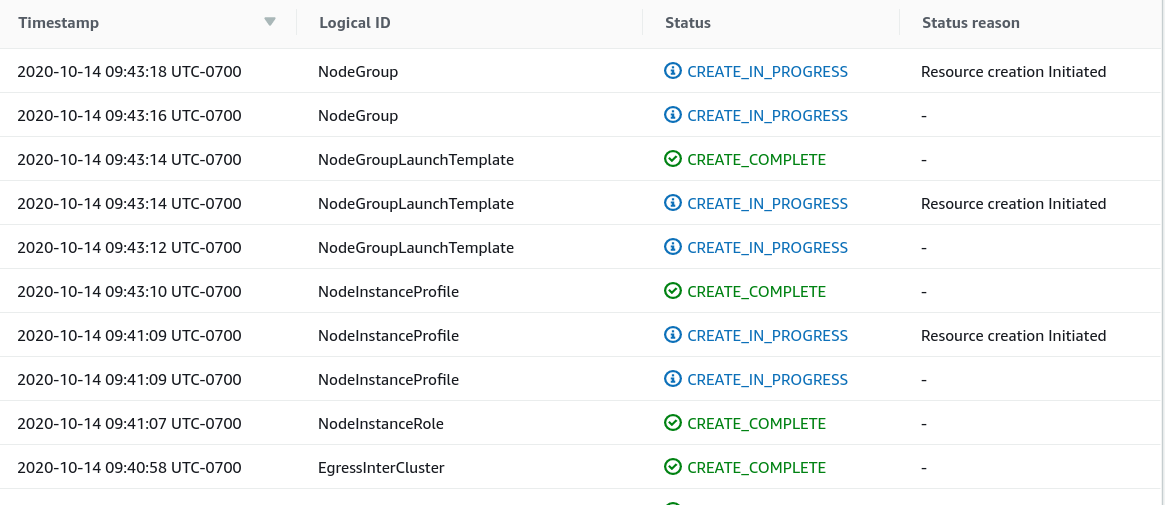

It appears the problem may actually be with CloudFormation (CFN): the stack shows no errors, but multiple resources remain in the CREATE_IN_PROGRESS state after eksctl fails.
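For anyone wanting to confirm the same symptom from the command line, here is a minimal sketch. It assumes the AWS CLI is installed and configured; the helper name `in_progress_resources` is hypothetical, and the stack name is the one that appears in the logs below. The helper only filters tab-separated `LogicalResourceId` / `ResourceStatus` pairs, which is what `aws cloudformation describe-stack-resources` prints with `--output text` and the query shown in the comment.

```shell
# Hypothetical helper: reads "LogicalResourceId<TAB>ResourceStatus" lines
# on stdin and prints the IDs of resources still in CREATE_IN_PROGRESS.
in_progress_resources() {
  awk -F '\t' '$2 == "CREATE_IN_PROGRESS" { print $1 }'
}

# Possible usage (needs AWS credentials; stack name taken from the logs):
#   aws cloudformation describe-stack-resources \
#     --region us-west-2 \
#     --stack-name eksctl-stage-nodegroup-ng-cd4f772c \
#     --query 'StackResources[].[LogicalResourceId,ResourceStatus]' \
#     --output text | in_progress_resources
```

An empty result would mean no resource in the stack is still in CREATE_IN_PROGRESS at the time of the call.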

Versions

eksctl 0.30.0-rc1

kubectl 1.19.2

Logs

[ℹ] eksctl version 0.29.2

[ℹ] using region us-west-2

[ℹ] will use version 1.18 for new nodegroup(s) based on control plane version

[ℹ] nodegroup "ng-cd4f772c" present in the given config, but missing in the cluster

[ℹ] nodegroup "ng-63dcd78d" present in the cluster, but missing from the given config

[ℹ] nodegroup "ng-655ee1b7" present in the cluster, but missing from the given config

[ℹ] 2 existing nodegroup(s) (ng-63dcd78d,ng-655ee1b7) will be excluded

[ℹ] nodegroup "ng-cd4f772c" will use "ami-04f0f3d381d07e0b6" [AmazonLinux2/1.18]

[ℹ] 1 nodegroup (ng-cd4f772c) was included (based on the include/exclude rules)

[ℹ] will create a CloudFormation stack for each of 1 nodegroups in cluster "stage"

[ℹ] 2 sequential tasks: { fix cluster compatibility, 1 task: { 1 task: { create nodegroup "ng-cd4f772c" } } }

[ℹ] checking cluster stack for missing resources

[ℹ] cluster stack has all required resources

[ℹ] building nodegroup stack "eksctl-stage-nodegroup-ng-cd4f772c"

[ℹ] --nodes-min=2 was set automatically for nodegroup ng-cd4f772c

[ℹ] --nodes-max=2 was set automatically for nodegroup ng-cd4f772c

[ℹ] deploying stack "eksctl-stage-nodegroup-ng-cd4f772c"

[✖] unexpected status "CREATE_IN_PROGRESS" while waiting for CloudFormation stack "eksctl-stage-nodegroup-ng-cd4f772c"

[ℹ] fetching stack events in attempt to troubleshoot the root cause of the failure

[ℹ] 1 error(s) occurred and nodegroups haven't been created properly, you may wish to check CloudFormation console

[ℹ] to cleanup resources, run 'eksctl delete nodegroup --region=us-west-2 --cluster=stage --name=<name>' for each of the failed nodegroup

[✖] waiting for CloudFormation stack "eksctl-stage-nodegroup-ng-cd4f772c": RequestCanceled: waiter context canceled

caused by: context deadline exceeded

Error: failed to create nodegroups for cluster "stage"

@rothgar It looks like the creation may have timed out, did the status on CREATE_IN_PROGRESS ever change?

Facing similar issue

That's a different error, please see https://github.com/weaveworks/eksctl/issues/2735. Please provide more information like the commands you're using and/or your config.

> @rothgar It looks like the creation may have timed out, did the status on CREATE_IN_PROGRESS ever change?

No, it never changed. It was definitely a timeout error, but the CLI gave no visibility into why.

@michaelbeaumont

I have the same problem.

The aws-node pod is stuck at "Checking for IPAM connectivity ... "

And the nodegroup's role doesn't include AmazonEKS_CNI_Policy.

Edit:

- It only happens when I create the nodegroup with a config file.

- eksctl v0.30.0

@quantm241 same problem as who? You must include the config file/commands you're using as well as their output.

@michaelbeaumont

Config file:

apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: my-cluster
  region: ap-northeast-1
  version: "1.18"
iam:
  withOIDC: true
managedNodeGroups:
  - name: dev-system
    instanceType: t3.small
    desiredCapacity: 2
    minSize: 2
    maxSize: 5
    volumeSize: 80
  - name: test
    instanceType: t3.small
    desiredCapacity: 1
    minSize: 1
    maxSize: 5
    volumeSize: 80

Command:

eksctl create nodegroup -f config.yaml

Outputs:

[ℹ] eksctl version 0.30.0

[ℹ] using region ap-northeast-1

[ℹ] nodegroup "test" present in the given config, but missing in the cluster

[ℹ] 1 existing nodegroup(s) (dev-system) will be excluded

[ℹ] combined include rules: test

[ℹ] 1 nodegroup (test) was included (based on the include/exclude rules)

[ℹ] will create a CloudFormation stack for each of 1 managed nodegroups in cluster "my-cluster"

[ℹ] 2 sequential tasks: { fix cluster compatibility, 1 task: { 1 task: { create managed nodegroup "test" } } }

[ℹ] checking cluster stack for missing resources

[ℹ] cluster stack has all required resources

[ℹ] building managed nodegroup stack "eksctl-my-cluster-nodegroup-test"

[ℹ] deploying stack "eksctl-my-cluster-nodegroup-test"

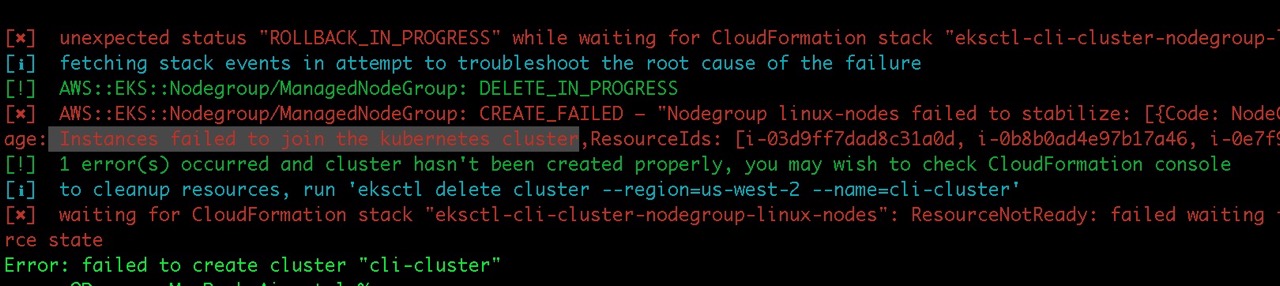

[✖] unexpected status "ROLLBACK_IN_PROGRESS" while waiting for CloudFormation stack "eksctl-my-cluster-nodegroup-test"

[ℹ] fetching stack events in attempt to troubleshoot the root cause of the failure

[✖] AWS::EKS::Nodegroup/ManagedNodeGroup: CREATE_FAILED – "Nodegroup test failed to stabilize: [{Code: NodeCreationFailure,Message: Unhealthy nodes in the kubernetes cluster,ResourceIds: [i-0d35703c6bfdd07ab]}]"

[ℹ] 1 error(s) occurred and nodegroups haven't been created properly, you may wish to check CloudFormation console

[ℹ] to cleanup resources, run 'eksctl delete nodegroup --region=ap-northeast-1 --cluster=my-cluster --name=<name>' for each of the failed nodegroup

[✖] waiting for CloudFormation stack "eksctl-my-cluster-nodegroup-test": ResourceNotReady: failed waiting for successful resource state

Error: failed to create nodegroups for cluster "my-cluster"

I investigated in the AWS console and saw that the nodegroup's role doesn't include AmazonEKS_CNI_Policy.
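The same check can probably be done without the console. The sketch below assumes the AWS CLI is available; `has_cni_policy` is a hypothetical helper and `<nodegroup-role-name>` is a placeholder for the role that the nodegroup stack created. The helper just looks for the policy name in the tab-separated list that `aws iam list-attached-role-policies` prints with `--output text`.

```shell
# Hypothetical helper: reads attached policy names on stdin
# (tab- or newline-separated) and succeeds only if
# AmazonEKS_CNI_Policy is one of them.
has_cni_policy() {
  tr '\t' '\n' | grep -qx 'AmazonEKS_CNI_Policy'
}

# Possible usage (needs AWS credentials; the role name is a placeholder):
#   aws iam list-attached-role-policies \
#     --role-name <nodegroup-role-name> \
#     --query 'AttachedPolicies[].PolicyName' \
#     --output text | has_cni_policy \
#     && echo "CNI policy attached" \
#     || echo "CNI policy missing"
```

This only inspects managed policies attached directly to the role; it says nothing about inline policies or permissions granted through IAM roles for service accounts.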

@quantm241 Do you know which version of eksctl you created the cluster with?

> @quantm241 Do you know which version of eksctl you created the cluster with?

eksctl v0.27.0

@quantm241 I'm still doing testing but feel free to build & try https://github.com/weaveworks/eksctl/tree/withoidc_old

@rothgar This may or may not be related to your issue, but feel free to try it and report back.

@quantm241 @rothgar Please try out https://github.com/weaveworks/eksctl/releases/tag/0.31.0-rc.1!

0.31.0-rc.1 worked for me :+1:
