Eksctl: Possible bug deleting managed nodegroups

We have a failure in integration tests:

(Integration) Create, Get, Scale & Delete when creating a cluster with 1 node

should not return an error

/src/integration/creategetdelete_test.go:62

Using kubeconfig: /src/integration/kubeconfig-451144843

starting '../eksctl "--region" "us-west-2" "create" "cluster" "--verbose" "4" "--name" "scrumptious-gopher-1582686390" "--tags" "alpha.eksctl.io/description=eksctl integration test" "--nodegroup-name" "ng-0" "--node-labels" "ng-name=ng-0" "--nodes" "1" "--version" "1.14" "--kubeconfig" "/src/integration/kubeconfig-451144843"'

.

.

.

2020-02-26T05:15:04Z [✖] unexpected status "DELETE_FAILED" while waiting for CloudFormation stack "eksctl-managed-hilarious-wardrobe-1582686390-nodegroup-managed-ng-0"

2020-02-26T05:15:04Z [ℹ] fetching stack events in attempt to troubleshoot the root cause of the failure

2020-02-26T05:15:04Z [✖] AWS::CloudFormation::Stack/eksctl-managed-hilarious-wardrobe-1582686390-nodegroup-managed-ng-0: DELETE_FAILED – "The following resource(s) failed to delete: [SG]. "

2020-02-26T05:15:04Z [✖] AWS::EC2::SecurityGroup/SG: DELETE_FAILED – "resource sg-0161c1e34bd99c2e6 has a dependent object (Service: AmazonEC2; Status Code: 400; Error Code: DependencyViolation; Request ID: 80f4c7fb-1b9a-4fdc-b6ab-fd4e7ef5fdf8)"

https://app.circleci.com/jobs/github/weaveworks/eksctl/6620/parallel-runs/0/steps/0-107
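To find out which security group is blocking the stack deletion, the ID can be pulled straight out of the DependencyViolation event. This is a minimal sketch that assumes the message wording matches the events quoted above; the helper name `blocked_security_groups` is made up for illustration and is not part of eksctl.

```python
import re
from typing import List

# Matches the EC2 DependencyViolation wording seen in the stack events above.
# Assumption: the message format stays "resource sg-... has a dependent object".
_SG_DEPENDENCY = re.compile(r"resource (sg-[0-9a-f]+) has a dependent object")


def blocked_security_groups(log_text: str) -> List[str]:
    """Return security group IDs named in DependencyViolation events.

    IDs are returned in order of first appearance, without duplicates.
    """
    seen: List[str] = []
    for sg_id in _SG_DEPENDENCY.findall(log_text):
        if sg_id not in seen:
            seen.append(sg_id)
    return seen


event = (
    'AWS::EC2::SecurityGroup/SG: DELETE_FAILED – "resource sg-0161c1e34bd99c2e6 '
    "has a dependent object (Service: AmazonEC2; Status Code: 400; "
    'Error Code: DependencyViolation)"'
)
print(blocked_security_groups(event))  # ['sg-0161c1e34bd99c2e6']
```

The returned ID is what you would then search for among the network interfaces in the cluster's VPC.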


Hi,

I am facing the same error using eksctl with managed node groups:

eksctl delete cluster -f my-cluster.yml

[ℹ] eksctl version 0.14.0

[ℹ] using region eu-west-3

[ℹ] deleting EKS cluster "my-cluster-dev"

[ℹ] either account is not authorized to use Fargate or region eu-west-3 is not supported. Ignoring error

[✔] kubeconfig has been updated

[ℹ] cleaning up LoadBalancer services

[ℹ] 2 sequential tasks: { 2 parallel sub-tasks: { cleanup for nodegroup "managed-ng-1", delete nodegroup "managed-ng-1" }, delete cluster control plane "my-cluster-dev" [async] }

[ℹ] trying to cleanup dangling network interfaces

[ℹ] will delete stack "my-cluster-dev-nodegroup-managed-ng-1"

[ℹ] waiting for stack "my-cluster-dev-nodegroup-managed-ng-1" to get deleted

[✖] unexpected status "DELETE_FAILED" while waiting for CloudFormation stack "my-cluster-dev-nodegroup-managed-ng-1"

[ℹ] fetching stack events in attempt to troubleshoot the root cause of the failure

[✖] AWS::CloudFormation::Stack/my-cluster-dev-nodegroup-managed-ng-1: DELETE_FAILED – "The following resource(s) failed to delete: [ManagedNodeGroup]. "

[✖] AWS::EKS::Nodegroup/ManagedNodeGroup: DELETE_FAILED – "Nodegroup managed-ng-1 failed to stabilize: Internal Failure"

[✖] AWS::CloudFormation::Stack/my-cluster-dev-nodegroup-managed-ng-1: DELETE_FAILED – "The following resource(s) failed to delete: [ManagedNodeGroup]. "

[✖] AWS::EKS::Nodegroup/ManagedNodeGroup: DELETE_FAILED – "Nodegroup managed-ng-1 failed to stabilize: Internal Failure"

[ℹ] 1 error(s) occurred while deleting cluster with nodegroup(s)

[✖] waiting for CloudFormation stack "my-cluster-dev-nodegroup-managed-ng-1": ResourceNotReady: failed waiting for successful resource state

Error: failed to delete cluster with nodegroup(s)

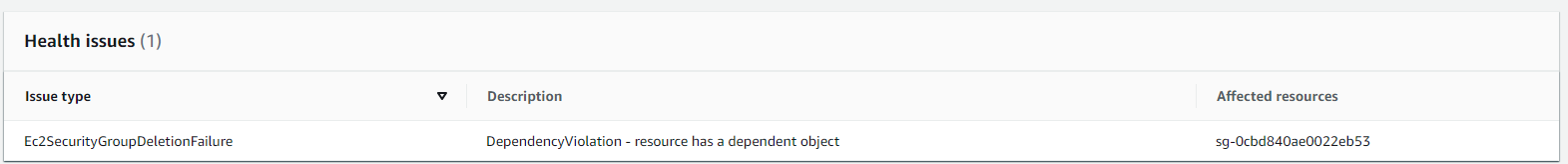

I get this error on AWS console:

edit 1: Apparently, the related security group is the one created to get remote SSH access

SG Group Name: eks-remoteAccess-*******

SG Description: Security group for all nodes in the nodeGroup to allow SSH access

edit 2: After deleting the ENI bound to this security group (the ENI was "available", not in use), I ran the command again and it completed without errors

[✔] all cluster resources were deleted

edit 3: I reused the same config file. The cluster creation and the cluster deletion worked fine...

I am facing the same issue with an unmanaged node group as well. What I did was simply re-run delete cluster.

Configuration

apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig

metadata:
  name: test-delete
  region: ap-southeast-2

nodeGroups:
  - name: standard-workers
    instanceType: t3.medium
    desiredCapacity: 1
    minSize: 1
    maxSize: 2
  - name: private-workers
    instanceType: t3.medium
    privateNetworking: true
    desiredCapacity: 1
    minSize: 1
    maxSize: 2

managedNodeGroups:
  - name: managed-workers
    instanceType: t3.medium
    desiredCapacity: 1
    minSize: 1
    maxSize: 2

Delete output

./eksctl delete cluster -f test_private.yaml --wait

[ℹ] eksctl version 0.16.0-dev+239507a8.2020-03-09T20:24:27Z

[ℹ] using region ap-southeast-2

[ℹ] deleting EKS cluster "test-delete"

[ℹ] either account is not authorized to use Fargate or region ap-southeast-2 is not supported. Ignoring error

[✔] kubeconfig has been updated

[ℹ] cleaning up LoadBalancer services

[ℹ] 2 sequential tasks: { 6 parallel sub-tasks: { cleanup for nodegroup "standard-workers", delete nodegroup "standard-workers", cleanup for nodegroup "private-workers", delete nodegroup "private-workers", cleanup for nodegroup "managed-workers", delete nodegroup "managed-workers" }, delete cluster control plane "test-delete" }

[ℹ] trying to cleanup dangling network interfaces

[ℹ] trying to cleanup dangling network interfaces

[ℹ] trying to cleanup dangling network interfaces

[ℹ] will delete stack "eksctl-test-delete-nodegroup-managed-workers"

[ℹ] waiting for stack "eksctl-test-delete-nodegroup-managed-workers" to get deleted

[ℹ] will delete stack "eksctl-test-delete-nodegroup-standard-workers"

[ℹ] waiting for stack "eksctl-test-delete-nodegroup-standard-workers" to get deleted

[ℹ] will delete stack "eksctl-test-delete-nodegroup-private-workers"

[ℹ] waiting for stack "eksctl-test-delete-nodegroup-private-workers" to get deleted

[✖] unexpected status "DELETE_FAILED" while waiting for CloudFormation stack "eksctl-test-delete-nodegroup-standard-workers"

[ℹ] fetching stack events in attempt to troubleshoot the root cause of the failure

[✖] AWS::CloudFormation::Stack/eksctl-test-delete-nodegroup-standard-workers: DELETE_FAILED – "The following resource(s) failed to delete: [SG]. "

[✖] AWS::EC2::SecurityGroup/SG: DELETE_FAILED – "resource sg-0badfc766ec4a0369 has a dependent object (Service: AmazonEC2; Status Code: 400; Error Code: DependencyViolation; Request ID: 88abb1ef-d5f5-4cfb-aefb-c5e3bd3f9b79)"

[ℹ] 1 error(s) occurred while deleting cluster with nodegroup(s)

[✖] waiting for CloudFormation stack "eksctl-test-delete-nodegroup-standard-workers": ResourceNotReady: failed waiting for successful resource state

Delete output (again)

./eksctl delete cluster -f test_private.yaml --wait

[ℹ] eksctl version 0.16.0-dev+239507a8.2020-03-09T20:24:27Z

[ℹ] using region ap-southeast-2

[ℹ] deleting EKS cluster "test-delete"

[ℹ] either account is not authorized to use Fargate or region ap-southeast-2 is not supported. Ignoring error

[✔] kubeconfig has been updated

[ℹ] cleaning up LoadBalancer services

[ℹ] 2 sequential tasks: { 2 parallel sub-tasks: { cleanup for nodegroup "standard-workers", delete nodegroup "standard-workers" }, delete cluster control plane "test-delete" }

[ℹ] trying to cleanup dangling network interfaces

[ℹ] will delete stack "eksctl-test-delete-nodegroup-standard-workers"

[ℹ] waiting for stack "eksctl-test-delete-nodegroup-standard-workers" to get deleted

[ℹ] will delete stack "eksctl-test-delete-cluster"

[ℹ] waiting for stack "eksctl-test-delete-cluster" to get deleted

[✔] all cluster resources were deleted

Maybe that is why we have a condition for stacks that failed to delete: https://github.com/weaveworks/eksctl/blob/master/pkg/cfn/manager/delete_tasks.go#L79. However, would it be better to avoid such delete failures in the first place (unless we can't)?
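For illustration, the "just run it again" workaround amounts to a bounded retry around the stack deletion. The sketch below is not eksctl's code and does not reflect what delete_tasks.go does; `delete_stack` is a hypothetical callable that triggers the deletion and returns the final CloudFormation status. A retry like this only helps when the blocking dependency clears on its own between attempts.

```python
import time
from typing import Callable


def delete_with_retry(
    delete_stack: Callable[[], str],
    attempts: int = 3,
    delay_seconds: float = 0.0,
) -> str:
    """Call delete_stack until it reports DELETE_COMPLETE or attempts run out.

    delete_stack is a hypothetical stand-in for "delete the stack and wait";
    it must return the final stack status as a string.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    status = "DELETE_FAILED"
    for attempt in range(1, attempts + 1):
        status = delete_stack()
        if status == "DELETE_COMPLETE":
            return status
        if attempt < attempts:
            # Give the dependent object (for example an ENI) time to be released.
            time.sleep(delay_seconds)
    return status


# Simulated run mirroring the two delete outputs above: fail once, then succeed.
simulated = iter(["DELETE_FAILED", "DELETE_COMPLETE"])
print(delete_with_retry(lambda: next(simulated)))  # DELETE_COMPLETE
```

If the status is still DELETE_FAILED after the last attempt, the caller gets that status back and has to clean up the dependency by hand.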

I encountered the same issue:

$ eksctl delete cluster --config-file aws_cluster.yaml

[ℹ] eksctl version 0.16.0

[ℹ] using region ap-southeast-2

[ℹ] deleting EKS cluster "learn-kube"

[ℹ] either account is not authorized to use Fargate or region ap-southeast-2 is not supported. Ignoring error

[✔] kubeconfig has been updated

[ℹ] cleaning up LoadBalancer services

[ℹ] 2 sequential tasks: { 2 parallel sub-tasks: { cleanup for nodegroup "ng-1", delete nodegroup "ng-1" }, delete cluster control plane "learn-kube" [async] }

[ℹ] trying to cleanup dangling network interfaces

[ℹ] will delete stack "eksctl-learn-kube-nodegroup-ng-1"

[ℹ] waiting for stack "eksctl-learn-kube-nodegroup-ng-1" to get deleted

[✖] unexpected status "DELETE_FAILED" while waiting for CloudFormation stack "eksctl-learn-kube-nodegroup-ng-1"

[ℹ] fetching stack events in attempt to troubleshoot the root cause of the failure

[✖] AWS::CloudFormation::Stack/eksctl-learn-kube-nodegroup-ng-1: DELETE_FAILED – "The following resource(s) failed to delete: [ManagedNodeGroup]. "

[✖] AWS::EKS::Nodegroup/ManagedNodeGroup: DELETE_FAILED – "Nodegroup ng-1 failed to stabilize: Internal Failure"

[✖] AWS::CloudFormation::Stack/eksctl-learn-kube-nodegroup-ng-1: DELETE_FAILED – "The following resource(s) failed to delete: [ManagedNodeGroup]. "

[✖] AWS::EKS::Nodegroup/ManagedNodeGroup: DELETE_FAILED – "Nodegroup ng-1 failed to stabilize: Internal Failure"

[✖] AWS::CloudFormation::Stack/eksctl-learn-kube-nodegroup-ng-1: DELETE_FAILED – "The following resource(s) failed to delete: [ManagedNodeGroup]. "

[✖] AWS::EKS::Nodegroup/ManagedNodeGroup: DELETE_FAILED – "Nodegroup ng-1 failed to stabilize: Internal Failure"

[✖] AWS::CloudFormation::Stack/eksctl-learn-kube-nodegroup-ng-1: DELETE_FAILED – "The following resource(s) failed to delete: [NodeInstanceRole, ManagedNodeGroup]. "

[✖] AWS::IAM::Role/NodeInstanceRole: DELETE_FAILED – "Cannot delete entity, must detach all policies first. (Service: AmazonIdentityManagement; Status Code: 409; Error Code: DeleteConflict; Request ID: 35067978-5029-4e0b-a4be-c74030e1590b)"

[✖] AWS::EKS::Nodegroup/ManagedNodeGroup: DELETE_FAILED – "Nodegroup ng-1 failed to stabilize: Internal Failure"

[ℹ] 1 error(s) occurred while deleting cluster with nodegroup(s)

[✖] waiting for CloudFormation stack "eksctl-learn-kube-nodegroup-ng-1": ResourceNotReady: failed waiting for successful resource state

Error: failed to delete cluster with nodegroup(s)

I followed step 2 above from @lliknart, and then eksctl delete cluster worked successfully. I too had a dangling ENI (it was showing as available).

I have exactly the same issue. I also opened an internal support ticket. Let's see the outcome.

Had the exact same problem. Tried re-running the delete, did not work. Deleting the dangling ENI did work. Here is how you can delete the ENI using the console:

- Go to EC2 > Network Interfaces

- Sort by VPC, find the interfaces assigned to your VPC

- The interface to delete should be the only one that is "available". It should also be the only one assigned to the problematic remote access SG. If more than one interface matches this description, delete them all.
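The console steps above can also be scripted. The following is a rough sketch, assuming boto3 is installed and credentials are configured; the function names and sample IDs are made up for illustration. The selection logic is kept separate from the AWS calls so it can be checked without touching an account. For brevity it reads only the first page of results, so a VPC with many interfaces would need pagination.

```python
from typing import Dict, List


def dangling_enis(interfaces: List[Dict], security_group_id: str) -> List[str]:
    """Pick interfaces that are "available" (not attached) and use the given SG.

    `interfaces` has the shape of the "NetworkInterfaces" list returned by
    EC2 DescribeNetworkInterfaces.
    """
    return [
        eni["NetworkInterfaceId"]
        for eni in interfaces
        if eni.get("Status") == "available"
        and any(g.get("GroupId") == security_group_id for g in eni.get("Groups", []))
    ]


def delete_dangling_enis(vpc_id: str, security_group_id: str, region: str) -> List[str]:
    """Delete the matching interfaces in one VPC and return their IDs."""
    import boto3  # third-party; imported here so dangling_enis works without it

    ec2 = boto3.client("ec2", region_name=region)
    response = ec2.describe_network_interfaces(
        Filters=[{"Name": "vpc-id", "Values": [vpc_id]}]
    )
    targets = dangling_enis(response["NetworkInterfaces"], security_group_id)
    for eni_id in targets:
        ec2.delete_network_interface(NetworkInterfaceId=eni_id)
    return targets


# Offline check with made-up data: only the unattached ENI on the SG is selected.
sample = [
    {"NetworkInterfaceId": "eni-0aaa", "Status": "available",
     "Groups": [{"GroupId": "sg-remote"}]},
    {"NetworkInterfaceId": "eni-0bbb", "Status": "in-use",
     "Groups": [{"GroupId": "sg-remote"}]},
    {"NetworkInterfaceId": "eni-0ccc", "Status": "available",
     "Groups": [{"GroupId": "sg-other"}]},
]
print(dangling_enis(sample, "sg-remote"))  # ['eni-0aaa']
```

After the interfaces are gone, re-running eksctl delete cluster should be able to remove the security group, as reported in the comments above.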
