Eksctl: When creating a cluster, subnets are listed multiple times [0.7.0]

What happened?

When creating a cluster, eksctl 0.7.0 lists the same subnets multiple times. I was testing with a config file that defines two node groups, but ran `eksctl create cluster --config-file=foo-config.yaml --without-nodegroup`, so no node groups were created. The subnets are listed only once in the `vpc` section of the config file. I am guessing the subnets are incorrectly duplicated once per node group in the config file?

For example, here each private subnet is listed twice, although in a different order:

```
[ℹ] eksctl version 0.7.0
[ℹ] using region ap-southeast-2
[✔] using existing VPC (vpc-012345678901234) and subnets (private:[subnet-0000000000000 subnet-111111111111 subnet-22222222222 subnet-1111111111111 subnet-222222222222 subnet-000000000000] public:[])
[!] custom VPC/subnets will be used; if resulting cluster doesn't function as expected, make sure to review the configuration of VPC/subnets
[ℹ] using Kubernetes version 1.14
```

What you expected to happen?

Each subnet to be listed once.

How to reproduce it?

- Create a config file `foo-config.yaml` with definitions for two node groups.
- Run `eksctl create cluster --config-file=foo-config.yaml --without-nodegroup`.

Anything else we need to know?

I'm using the downloaded 0.7.0 release.

Versions

Please paste in the output of these commands:

```
$ eksctl version
[ℹ] version.Info{BuiltAt:"", GitCommit:"", GitTag:"0.7.0"}
```

```
$ kubectl version
Client Version: version.Info{Major:"1", Minor:"16", GitVersion:"v1.16.2", GitCommit:"c97fe5036ef3df2967d086711e6c0c405941e14b", GitTreeState:"clean", BuildDate:"2019-10-15T19:18:23Z", GoVersion:"go1.12.10", Compiler:"gc", Platform:"linux/amd64"}
Server Version: version.Info{Major:"1", Minor:"14+", GitVersion:"v1.14.6-eks-5047ed", GitCommit:"5047edce664593832e9b889e447ac75ab104f527", GitTreeState:"clean", BuildDate:"2019-08-21T22:32:40Z", GoVersion:"go1.12.9", Compiler:"gc", Platform:"linux/amd64"}
```

Logs

I don't have a `-v 4` log example, sorry.


Please share your config YAML file. I tried the config below and the behavior is as expected:

```yaml
# A simple example of ClusterConfig object with two nodegroups:
---
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig

metadata:
  name: cluster-1
  region: ap-southeast-2

vpc:
  id: "vpc-XXX" # (optional, must match VPC ID used for each subnet below)
  subnets:
    private:
      ap-southeast-2a:
        id: "subnet-XXX"
      ap-southeast-2b:
        id: "subnet-XXX"
      ap-southeast-2c:
        id: "subnet-XXX"

nodeGroups:
  - name: ng1-public
    instanceType: m5.xlarge
    desiredCapacity: 4
  - name: ng2-private
    instanceType: m5.large
    desiredCapacity: 10
    privateNetworking: true
```

```
eksctl create cluster -v 4 --config-file=conf.yaml --without-nodegroup
...
2019-10-22T23:11:55+11:00 [✔] using existing VPC (vpc-0d2c525c13e574a83) and subnets (private:[subnet-XXX subnet-XXX subnet-XXX] public:[])
...
```

Thanks @sayboras, my config is below, used with:

```
eksctl create cluster --config-file=foo-config.yaml --without-nodegroup
```

```yaml
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig

metadata:
  name: foo
  region: ap-southeast-2
  version: '1.14'

vpc:
  subnets:
    private:
      PrivateSubnet1: { 'id': 'subnet-000000000000' }
      PrivateSubnet2: { 'id': 'subnet-111111111111' }
      PrivateSubnet3: { 'id': 'subnet-222222222222' }
  nat:
    gateway: Disable
  # Not yet available in 0.7.0
  #clusterEndpoints:
  #  publicAccess: true
  #  privateAccess: true

cloudWatch:
  clusterLogging:
    enableTypes:
      - api
      - audit
      - authenticator
      - controllerManager
      - scheduler

iam:
  withOIDC: true
  serviceAccounts:
    - metadata:
        name: efs-provisioner
        namespace: kube-system
        labels:
          aws-usage: cluster-ops
      attachPolicyARNs:
        - 'arn:aws:iam::aws:policy/AmazonElasticFileSystemReadOnlyAccess'
    - metadata:
        name: cluster-autoscaler
        namespace: kube-system
        labels:
          aws-usage: cluster-ops
      attachPolicyARNs:
        - 'arn:aws:iam:::policy/kube2-EKSClusterAutoscaler'

nodeGroups:
  - name: group-1
    ami: auto
    amiFamily: AmazonLinux2
    labels:
      example.com/lifecycle: on-demand
    instanceType: m5.xlarge
    desiredCapacity: 1
    minSize: 0
    maxSize: 6
    volumeSize: 80
    volumeType: gp2
    volumeEncrypted: true
    privateNetworking: true
    availabilityZones:
      - ap-southeast-2a
    securityGroups:
      withShared: true
      withLocal: true
      attachIDs: [ 'sg-XXX', 'sg-YYY' ]
    kubeletExtraConfig:
      kubeReserved:
        cpu: "300m"
        memory: "300Mi"
        ephemeral-storage: "1Gi"
      kubeReservedCgroup: "/kube-reserved"
      systemReserved:
        cpu: "300m"
        memory: "300Mi"
        ephemeral-storage: "1Gi"
      evictionHard:
        memory.available: "200Mi"
        nodefs.available: "10%"
      featureGates:
        RotateKubeletServerCertificate: true
    ssh:
      publicKeyName: my-key
    tags:
      Cluster: foo
      NodeGroup: group-1

  - name: group-2
    ami: auto
    amiFamily: AmazonLinux2
    labels:
      example.com/lifecycle: on-demand
    instanceType: m5.xlarge
    desiredCapacity: 1
    minSize: 0
    maxSize: 6
    volumeSize: 80
    volumeType: gp2
    volumeEncrypted: true
    privateNetworking: true
    availabilityZones:
      - ap-southeast-2c
    securityGroups:
      withShared: true
      withLocal: true
      attachIDs: [ 'sg-XXX', 'sg-YYY' ]
    kubeletExtraConfig:
      kubeReserved:
        cpu: "300m"
        memory: "300Mi"
        ephemeral-storage: "1Gi"
      kubeReservedCgroup: "/kube-reserved"
      systemReserved:
        cpu: "300m"
        memory: "300Mi"
        ephemeral-storage: "1Gi"
      evictionHard:
        memory.available: "200Mi"
        nodefs.available: "10%"
      featureGates:
        RotateKubeletServerCertificate: true
    ssh:
      publicKeyName: my-key
    tags:
      Cluster: foo
      NodeGroup: group-2
```

@whereisaaron thanks for the details. This looks like a bug to me.

As a workaround, please update your configuration so that each subnet is keyed by its actual availability zone:

```yaml
vpc:
  subnets:
    private:
      <actual AZ for this subnet>: { 'id': 'subnet-000000000000' }
      <actual AZ for this subnet>: { 'id': 'subnet-111111111111' }
      <actual AZ for this subnet>: { 'id': 'subnet-222222222222' }
```

@martina-if this issue is caused by https://github.com/weaveworks/eksctl/blob/master/pkg/apis/eksctl.io/v1alpha5/vpc.go#L138, which adds the subnet details into the map while the original entry from the config file is not removed.

I need your input on this. A simple fix is to check for duplicates before calling https://github.com/weaveworks/eksctl/blob/master/pkg/apis/eksctl.io/v1alpha5/vpc.go#L91 and https://github.com/weaveworks/eksctl/blob/master/pkg/apis/eksctl.io/v1alpha5/vpc.go#L102. Another approach is #1477.

Thanks for looking into this @sayboras @martina-if! I notice that the CloudFormation (CF) stack also includes the duplication: `eksctl-foo-cluster::SubnetsPrivate` lists the subnets twice.

Thanks also for the workaround. I guess I have to delete and recreate the clusters to clean this up, or else manually update the stack template, although there is a chance CF will want to recreate the cluster if I change the subnet list to remove the duplicate entries.

Strangely, the subnet list export in the stack is just hard-coded values, and the list is hard-coded a second time in the cluster resource (again with the duplication). Wouldn't a mapping or a parameter be better style? The same goes for the VPC ID in node pools, which is hard-coded in about a dozen places. It is generated code, I guess, but a generated mapping would simplify things by reducing the number of injection points.

Yeah, I noticed the issue in CF while testing the fix as well.

I don't think you need to delete the existing stack; a normal CF update should work as well.

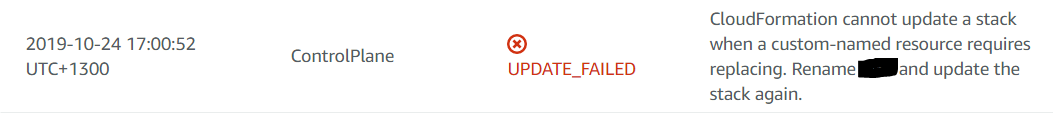

No, I cannot update the subnet list for clusters, even just to remove the duplicates: CF wants to replace the control plane with a new one.

To clean this up, it appears I need to delete and recreate the clusters, although I can patch the export, since it is just hard-coded values.

I don't know whether the duplicates affect the EKS control plane or its behavior. It looks like EKS filters out the duplicates: they don't appear in the output of `aws eks describe-cluster`.