Dataverse: File Ingest: Large dta file partially completes ingest before failing, leaves file in incorrect state.

User reported an issue uploading a file; see https://help.hmdc.harvard.edu/Ticket/Display.html?id=215169

Initially, the file had ingested but showed a red triangle. Updated ingeststatus in the db to remove the triangle. The file was reporting as dta, stata13 but was actually the tab file. This has since been corrected.

File: CNR_Latvia_IMFER.dta (~7GB)

Dataset: http://dx.doi.org/10.7910/DVN/HHHM5E

Added 2018-06-12 by @pdurbin

connects to #2301


Update: this does not appear to be a problem exclusive to Stata 13 files. Large Stata 12 and 11 files have similar issues. We are currently trying to solve problems in a related dataset: https://dataverse.harvard.edu/dataset.xhtml?persistentId=doi:10.7910/DVN/E4NE50&version=DRAFT

File size seems to be the problem. Thanks

@bppmit

Fixed the download issue with the file CNR_QJE_ikea.dta in doi:10.7910/DVN/E4NE50; the file should now be downloadable in the original stata format, as uploaded by the user.

Still need to attempt to re-ingest the file as fully functional tabular data.

Apologies for the delays with this issue.

In order to close this issue, I tried to reingest this giant Stata file as tabular data, but it hasn't completed yet. The ingest itself - i.e. the process that converts and saves the data in a tab-delimited file and produces the datatable and datavariable objects in the database - actually completes in reasonable time (under an hour). But the post-ingest tasks - summary statistics and the UNF - are taking a suspiciously long time. I can't close this issue until it finishes, and I can't keep it open under 4.2, so I'm bumping it up to 4.3 and will continue working on it this week.

The generation of summary stats may in fact be one remaining bottleneck in the ingest process.

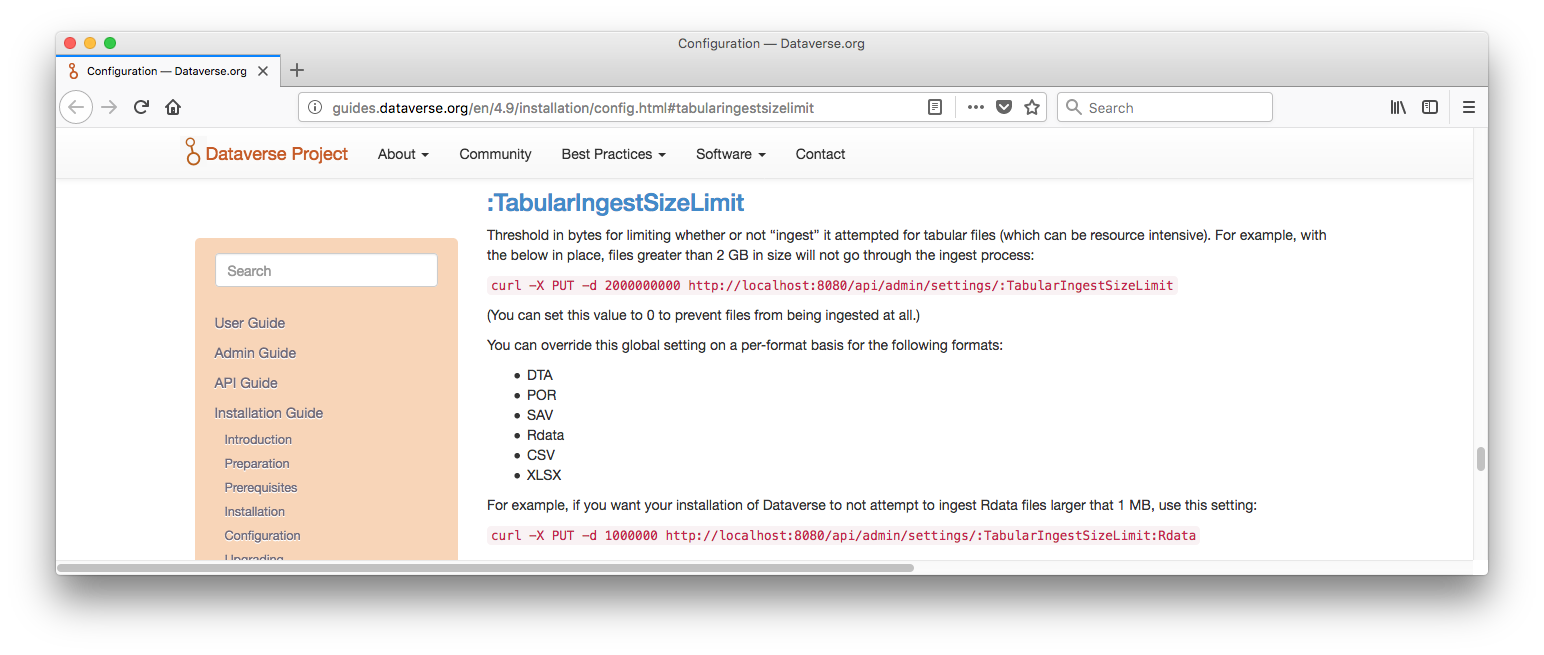

@kcondon and I spoke about this issue this morning and decided to put it through QA along with pull request #4708. The issue is so old that we had not yet implemented "Add threshold size for ingest file" in #2268 (55ea90c seems to be the main commit). These days we would never even attempt to ingest a tabular file that is 9 GB in size. In production at https://dataverse.harvard.edu we have ":TabularIngestSizeLimit" set to 2684354560 bytes, which is 2.5 GB. The only specific format that we limit even further is Rdata. We have ":TabularIngestSizeLimit:Rdata" set to 1000000 bytes, or 1 MB. I was going to make a commit because I suspected these settings weren't documented, but they actually are, and the documented examples are close enough to the numbers above that I think we're explaining well enough what we recommend. Here's a screenshot from http://guides.dataverse.org/en/4.9/installation/config.html#tabularingestsizelimit
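
To make the two settings above concrete, here is a minimal sketch of how a format-specific limit could take precedence over the general one. This is not Dataverse's actual (Java) code: the function names, the dictionary standing in for the settings table, the format labels, and the rule that a missing setting means "no limit" are all assumptions made for illustration. Only the two setting names and their values come from this comment.

```python
# Hypothetical sketch; names and fallback rules are assumptions, not Dataverse's API.
GENERAL_KEY = ":TabularIngestSizeLimit"

# Stand-in for the settings table, using the production values quoted above.
settings = {
    ":TabularIngestSizeLimit": 2684354560,     # 2.5 * 1024**3 bytes
    ":TabularIngestSizeLimit:Rdata": 1000000,  # 1 MB
}


def ingest_size_limit(settings, format_name):
    """Return the byte limit for a format, or None if no limit is configured.

    A format-specific setting wins; otherwise the general limit applies.
    """
    specific = settings.get(f"{GENERAL_KEY}:{format_name}")
    if specific is not None:
        return specific
    return settings.get(GENERAL_KEY)


def should_attempt_ingest(settings, format_name, size_bytes):
    """True if the file is small enough to try ingesting as tabular data."""
    limit = ingest_size_limit(settings, format_name)
    # Assumption: no configured limit means every file is attempted.
    return limit is None or size_bytes <= limit
```

With these values, a 7 GB file labelled "DTA" would be skipped, while the same file would be attempted if no limits were configured at all.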

> @kcondon and I spoke about this issue this morning and decided to put it through QA along with pull request #4708.

Is what I wrote above still true? I see the pull request was merged.

Phil, I don't think this ticket is impacted by either our current ingest file size limit or support for stata 14, 15, based on Leonid's testing comments. It seemed he was actively testing it at one point and saw that part of the ingest process completed normally but part did not seem to complete. I'd keep this open for comment by Leonid when he is back tomorrow.

@kcondon sounds good. I moved it over to code review so we don't forget to discuss it.

@kcondon @pdurbin

Yes, Phil is correct - the size of the file is way above our threshold for DTA files. So if somebody tries to upload it now, we'll never even attempt to ingest it as tabular data. It is in fact larger than our threshold for any upload, so it will not be uploaded as an uningested .dta file either. (But we do occasionally upload files this large for specific users, on request, using system backdoors.)

I'm assuming the only reason we allowed it to upload, and then tried to ingest it is that it was uploaded during the early days of the initial Dataverse 4 deployment, when all sorts of chaos was happening. We must have been running without any limits set up.

The only real problem with that upload was that after the ingest failure, the DataFile was stuck in this incomplete/"partial success" state - the main physical file had already been replaced by the generated tabular file; but the metadata was showing the original, uningested state - including type "Stata" and the ".dta" extension. For this specific file this was fixed manually (luckily, the original stata file was properly saved).

In general, the issue of failed ingests leaving the file in this partial state was addressed last year. So this should not be happening anymore.

On my own dev. build, attempting to ingest the file failed with "java.lang.OutOfMemoryError: GC overhead limit exceeded". This apparently happened after the file was successfully parsed and the tab file produced, meaning it was the calculation of the summary stats and/or UNFs that ran out of memory. The failure was more or less predictable (this is the reason we have size limits on tabular ingests). But I confirmed that it was a "nice" failure - the DataFile was left in the proper, uningested state, with the physical file downloadable as stata/dta - but with the ingest error report shown to the owner.
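
As an illustration of what a "nice" failure means here, the sketch below writes the tab file to a temporary location and only swaps files and metadata once every post-ingest step has succeeded. It is a simplified model, not the Dataverse implementation: the `record` dictionary, its keys, and the `convert` and `post_process` callables are hypothetical names invented for this example.

```python
import os
import tempfile


def ingest(record, convert, post_process):
    """Ingest record["path"] as tabular data without leaving a partial state.

    record       -- hypothetical dict with "path" and "content_type" keys
    convert      -- callable(src_path, dst_path) that writes the tab file
    post_process -- callable(tab_path) returning summary stats; may raise
    """
    directory = os.path.dirname(os.path.abspath(record["path"]))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tab.tmp")
    os.close(fd)
    try:
        convert(record["path"], tmp_path)
        stats = post_process(tmp_path)  # summary stats / UNF stand-in
    except Exception as err:  # includes MemoryError in Python
        # Failure: discard the temp file; original file and metadata are untouched.
        os.remove(tmp_path)
        record["ingest_error"] = str(err)
        return False

    # Success: keep the original alongside, then promote the tab file.
    original_copy = record["path"] + ".orig"
    os.replace(record["path"], original_copy)
    os.replace(tmp_path, record["path"])
    record.update(
        content_type="text/tab-separated-values",
        original_path=original_copy,
        summary=stats,
        ingest_error=None,
    )
    return True
```

The point of the ordering is that nothing visible changes until the most failure-prone step (the summary statistics) has finished, so an out-of-memory error leaves the file downloadable in its original format with only an error report attached.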

I'm moving this to QA for closure (no code to review).

@landreev great write up.