Dataverse: UZH - UI Testing / Additional Test Improvements

Hi all!

We have received our final assignment regarding testing improvements in the context of #5619. This will be the "root" issue for our tasks, which are the following:

- Implement UI tests for some parts of the application (e.g., using Cypress or Selenium)

- Improve some of the code we have already committed/merged

- And finally, add some more new testing/coverage

We were wondering if you have any previous experience with UI testing in the dataverse project or if there is any interest in starting to do something like it? We do not have to get anything merged for that task but could obviously create PRs if it were of interest to you. What it would/will probably look like is that the UI interactions are executed against mocked API responses to ensure their correct behavior (not "end-to-end").

In the same context we are also wondering if it is possible to only start the web interface without having to bootstrap the entire stack? Our experience with Java EE etc. is limited to this project. We managed to run the entire application with docker-aio, but if it were possible to run the web interface separately, that would ease the process a fair bit.

A final question: do you still want us to create one issue per PR, now that you have migrated to GitHub Projects? It seems that you can add pull requests to the board as well and that issues are no longer grouped in any way. It might now be better to relate all PRs to this issue?

All 21 comments

> We were wondering if you have any previous experience with UI testing in the dataverse project or if there is any interest in starting to do something like it?

Yes, we are extremely interested in automated UI testing. If you look at 0d8150c you can see that we used to have a folder called "sauce_test" because we were playing around with Selenium and free test execution (free for open source) through "Open Sauce": https://saucelabs.com/blog/Announcing-Open-Sauce-free-unlimited-testing-for-Open-Source-projects

That was years ago. The automated UI tests have fallen into disrepair.

More to come but I wanted to show you the old broken tests that are still in the code base with that commit above. Thanks!!

@rschlaefli I wanted to mention that I left a couple of comments in #4202 and #4207 and that on Tuesday's community call, I asked @4tikhonov if now is a good time for him to coordinate with you. Unfortunately it isn't. He's busy working with @poikilotherm on getting the infrastructure in place to host Dataverse on Docker (that's my understanding anyway). It's not very well captured in the notes at https://groups.google.com/d/msg/dataverse-community/hekvbHfD-3w/uaTHr5rEAgAJ but I thanked you and @alexscheitlin and @nikzaugg for all your great work!

Also, yesterday I shared some thoughts on automated testing here if that's of interest: https://groups.google.com/d/msg/dataverse-dev/ISot5k4VjZQ/t-hzPk8tAwAJ

Let me try to answer some more of your questions above.

> In the same context we are also wondering if it is possible to only start the web interface without having to bootstrap the entire stack?

If this is possible, I have no idea how to do it. I'm sorry. Also, #3958 talks about some difficulties in testing JSF.

> A final question: do you still want us to create one issue per PR, now that you have migrated to GitHub Projects? It seems that you can add pull requests to the board as well and that issues are no longer grouped in any way. It might now be better to relate all PRs to this issue?

This is an excellent question. Honestly, now that we've switched to GitHub Projects you are welcome to simply create pull requests for now without bothering to create issues as well. I say this because unlike with Waffle, we don't have an easy way to visually connect issues and pull requests anymore on the board. So if it's easier to just make pull requests, that's totally fine. We plan to work out some of the kinks with the move from Waffle over in #5845.

I hope this helps! Please keep the questions coming! Thank you!!

Hi @pdurbin,

Thanks for all of your responses and mentioning us in the call. It has been a pleasure to support your project and work with you!

I have actually started experimenting with some UI testing, even though not in the way you did it a while back. More specifically, I have been trying out Cypress (www.cypress.io) instead of Selenium, which is a JavaScript-based alternative without much of the overhead. It is possible to run the entire "thing" in CI without needing a server-side counterpart and it is much easier to get started. I have encountered some issues with JSF behavior and UI testing in general that I am currently trying to figure out (e.g., reloading behavior that breaks test flows, or the weird naming of inputs you have mentioned).

Also, it seems to be necessary to run the entire Dataverse stack, as far as I was able to conclude. However, it works quite smoothly with docker-aio.

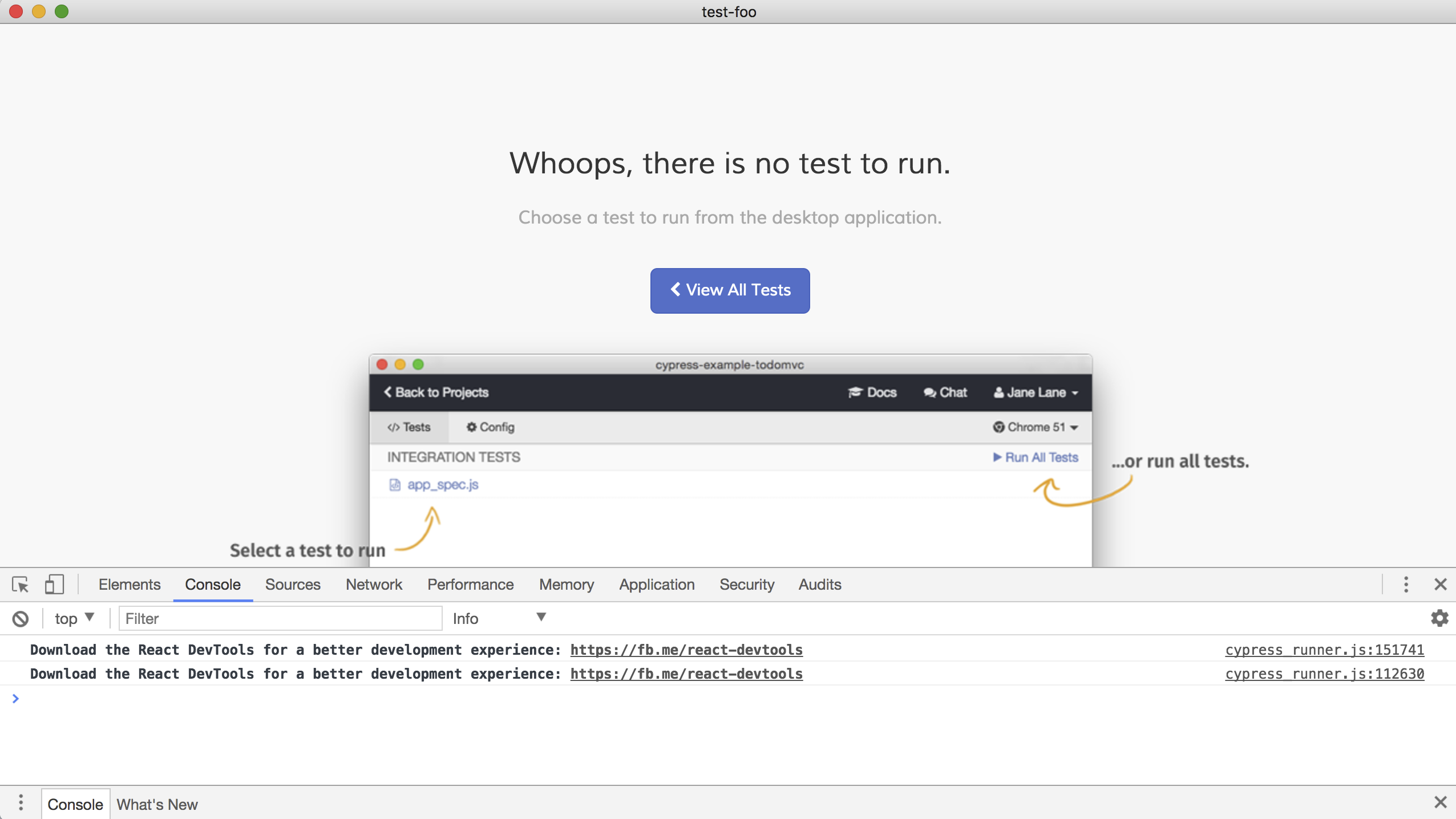

This is what it would look like if run locally:

And the current code for this:

https://github.com/IQSS/dataverse/compare/develop...rschlaefli:5846-UI-Testing

Depending on the goals you have with UI testing, this might or might not be an alternative you could actually consider. For example, Cypress is not (yet) able to do cross-browser testing (it runs only in Chromium/Chrome/Electron).

Are there any application flows that would be particularly interesting for you to have covered? Even if you do not end up using Cypress, the flow of the test would probably be applicable to Selenium as well.

@rschlaefli this looks fantastic! Do you have ideas of how we can run it in CI? Would you add it to our .travis.yml file in the root of this repo? Would you like us to add a job to our (new) Jenkins server at https://jenkins.dataverse.org ?

The workflow you're showing in the animated gif (the dataverseAdmin user logging in) looks like a great start! Maybe the next step would be to create a dataverse? And then a dataset inside that dataverse? Honestly, I'm more interested in getting some CI in place even with what you have working now. Then after that we can write more tests. Thanks!!! 🎉 🎉

@pdurbin Sure, I can look into CI and how this could work. Do you have a staging instance or similar that the UI tests could run against? I can see that the instance you had used in the old Selenium tests is no longer available. I am also working on Dataverse creation and can extend it after that :)

Also, is there some way to "reset state" once tests have run, such that they can start fresh?

Also, if you were to use Cypress going forward, they also have an offering that is free for OSS: https://www.cypress.io/dashboard. This does not execute the tests itself (they run in CI) but stores recordings of test sessions and could overall be quite useful.

Maybe you could try using dataverse-kubernetes in Travis? There are some handy tutorials for running Minikube with the none-driver in their VMs.

> Do you have a staging instance or similar that the UI tests could run against?

Sure, you can use https://demo.dataverse.org for testing. That's the server we use at http://guides.dataverse.org/en/4.14/api/intro.html#testing

> Also, is there some way to "reset state" once tests have run, such that they can start fresh?

Can you please elaborate on what you mean? There's a server at http://phoenix.dataverse.org that rises from the ashes on each run. The database is dropped. All uploaded files are deleted. Is that what you mean? You can read more about this server at http://guides.dataverse.org/en/4.14/developers/testing.html#the-phoenix-server

You could also spin up Dataverse in Docker. There are instructions on how to do so at https://github.com/IQSS/dataverse/blob/v4.14/conf/docker-aio/readme.md and it's also described at http://guides.dataverse.org/en/4.14/developers/testing.html#running-the-full-api-test-suite-using-docker

Finally, perhaps you could use dataverse-kubernetes, like @poikilotherm is suggesting.

Cypress Dashboard looks really cool! Thanks for letting us know about it!

@pdurbin @poikilotherm Thanks for your comments! The Phoenix instance seems to be what I initially meant (a clean slate that can be used as a basis for testing). I can look into spinning up a dockerized instance in Travis too (it seems doable from what I have seen, and might be nice as there is currently a lot of work on Dataverse and K8s). Either of docker-aio in Travis (as I use locally) or minikube should work. I would imagine that this would drive up the overall pipeline duration by quite a bit. Maybe we would have to restrict UI tests to only run on merges and not on every commit, or live with the additional time it takes?

I have also managed to find the reason for one of the issues I encountered yesterday: the UI testing framework was breaking for many forms once they were submitted (e.g., the password reset or dataverse creation form). The framework was then getting redirected to an empty page:

It seems to be related to framebusting (breaking out of an iframe) and redirection techniques, as described in https://github.com/cypress-io/cypress/issues/3642 and many of the other issues listed at https://github.com/cypress-io/cypress/issues?utf8=%E2%9C%93&q=label%3A%22topic%3A+whoops+%F0%9F%98%B3%22+. Once I comment out the following in dataverse_template.xhtml, the tests no longer break (and I could continue working on flows like dataverse creation and password reset):

    <ui:fragment rendered="#{!widgetWrapper.widgetView}">
        <script>
            // Break out of iframe
            // if (window !== top) top.location = window.location;
        </script>
    </ui:fragment>

Cypress seems to wrap the UI of the application in an iframe, and once a redirect is performed as shown above, the wrapping Cypress browser redirects, not the application itself. I am not familiar what would be the reason for this code. If it needs to be there, is there any way to disable this protection in testing? The most naive solution would probably be to sed it away before running UI tests, but I would hope there is another possibility.
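
As a rough illustration of the "sed it away" idea, the following is a minimal sketch in plain JavaScript (runnable with Node) that comments out the framebusting statement in a template string. The helper name `disableFramebusting` and the sample text are hypothetical, and the pattern assumes the statement appears on its own line exactly as quoted above. Reading and writing dataverse_template.xhtml itself is left out.

```javascript
// Hypothetical helper, not part of Dataverse or Cypress.
// Assumption: the framebusting statement sits on its own line in the template.
const FRAMEBUSTER =
  /^([ \t]*)(if\s*\(\s*window\s*!==\s*top\s*\)\s*top\.location\s*=\s*window\.location\s*;?)[ \t]*$/gm;

// Prefixes the matching statement with "// " and keeps its indentation.
// Lines that are already commented out do not match, so the call is repeatable.
function disableFramebusting(template) {
  return template.replace(FRAMEBUSTER, "$1// $2");
}

// Sample input that mimics the relevant part of the template.
const sample = [
  "<script>",
  "    // Break out of iframe",
  "    if (window !== top) top.location = window.location;",
  "</script>",
].join("\n");

console.log(disableFramebusting(sample));
```

Because the function leaves already-commented lines alone, it could be run as a pre-test step in CI without changing the committed template.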

> I am not familiar what would be the reason for this code.

This is where git blame helps. 😄 It looks like 5fdb872 ("Break out of iframe") was added shortly after https://github.com/IQSS/dataverse/issues/2499#issuecomment-197999204 so I'm assuming it has to do with the "widgets" feature: http://guides.dataverse.org/en/latest/user/dataverse-management.html#widgets (widgets run in an iframe in @openscholar sites, for example, I think). @mheppler and @scolapasta would know more. Ideally we wouldn't have to remove code with sed to get UI tests to run but for now that's fine with me. 😄

@pdurbin I have added a basic CI pipeline and extended the UI tests to some initial cases that should serve as a good base for further additions. The CI is currently based on docker-aio, which is probably not the optimal solution but was the easiest to get started with. One thing that would save some time here is that the tests run during the Docker build would not actually need to run, as they are also executed in the parallel Travis job.

You can see my Travis pipelines here: https://travis-ci.org/rschlaefli/dataverse

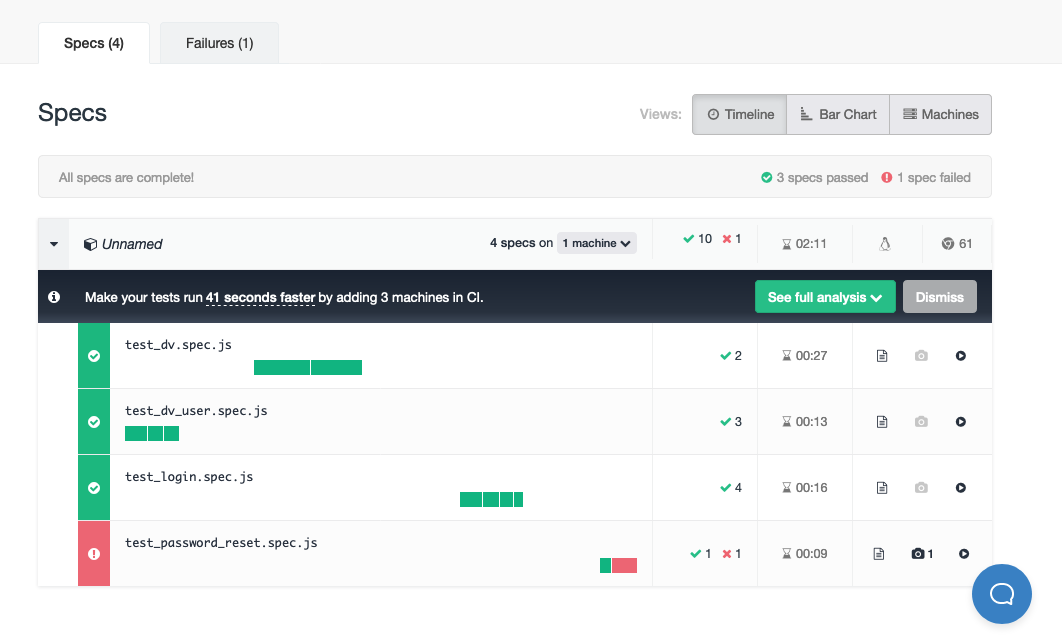

I have also set up the Cypress dashboard: https://dashboard.cypress.io/#/projects/ufkudc/runs

Including the bootup time of the entire Dataverse stack in docker-aio, the total runtime in Travis amounts to more than 10 minutes for the UI testing stage (of which max. 2 minutes are actually spent executing UI tests, as can be seen in the Cypress Dashboard). The Java tests are not slowed down by this, as they run in parallel and finish as fast as previously.

Also, note that the line I commented out above is currently committed as such. I will need to fix this or change it to a better solution. I would generally like to improve on the current state, but some initial feedback would probably be helpful, now that you can also look at the videos in the Dashboard and see how Cypress actually executes in CI.

Some possible improvements:

- Each test should be independent of the others (as is best practice in all testing)

- E.g., Test for creation of a dataset currently depends on the Dataverse that is created in the dataverse creation test. Instead, the dataverse should be programmatically created before the dataset is added.

- It can be quite painful to create stuff programmatically by directly calling the API, as it is necessary to extract the ViewState from the DOM and append it to the API request. I managed to do this for the login such that the login does not need to be executed via the login form before every test (but the login page still needs to be called to get a ViewState value and session id).

- ...
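
The ViewState handling mentioned in the list above can be sketched roughly as follows. This is plain JavaScript rather than the code from the branch, and it assumes the page contains the standard JSF hidden input named `javax.faces.ViewState`. The helper names (`extractViewState`, `buildFormBody`) and the `username` field in the usage lines are hypothetical; the real form field names would have to be read from the rendered page.

```javascript
// Hypothetical helpers for illustration only.

// Returns the value of the hidden javax.faces.ViewState input, or null if absent.
// Assumption: the value is a quoted attribute on the same <input> tag.
function extractViewState(html) {
  const input = html.match(
    /<input\b[^>]*\bname=["']javax\.faces\.ViewState["'][^>]*>/i
  );
  if (!input) return null;
  const value = input[0].match(/\bvalue=["']([^"']*)["']/i);
  return value ? value[1] : null;
}

// Builds a URL-encoded form body from the given fields plus the ViewState.
function buildFormBody(fields, viewState) {
  const body = new URLSearchParams(fields);
  body.set("javax.faces.ViewState", viewState);
  return body.toString();
}

// Usage with a made-up page fragment and a made-up field name.
const page =
  '<form><input type="hidden" name="javax.faces.ViewState" ' +
  'id="j_id1:javax.faces.ViewState:0" value="-42:7" autocomplete="off" /></form>';
const viewState = extractViewState(page);
console.log(viewState);
console.log(buildFormBody({ username: "dataverseAdmin" }, viewState));
```

In a Cypress spec, the page HTML would come from a request to the login page, and the resulting body would be posted back in the same session.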

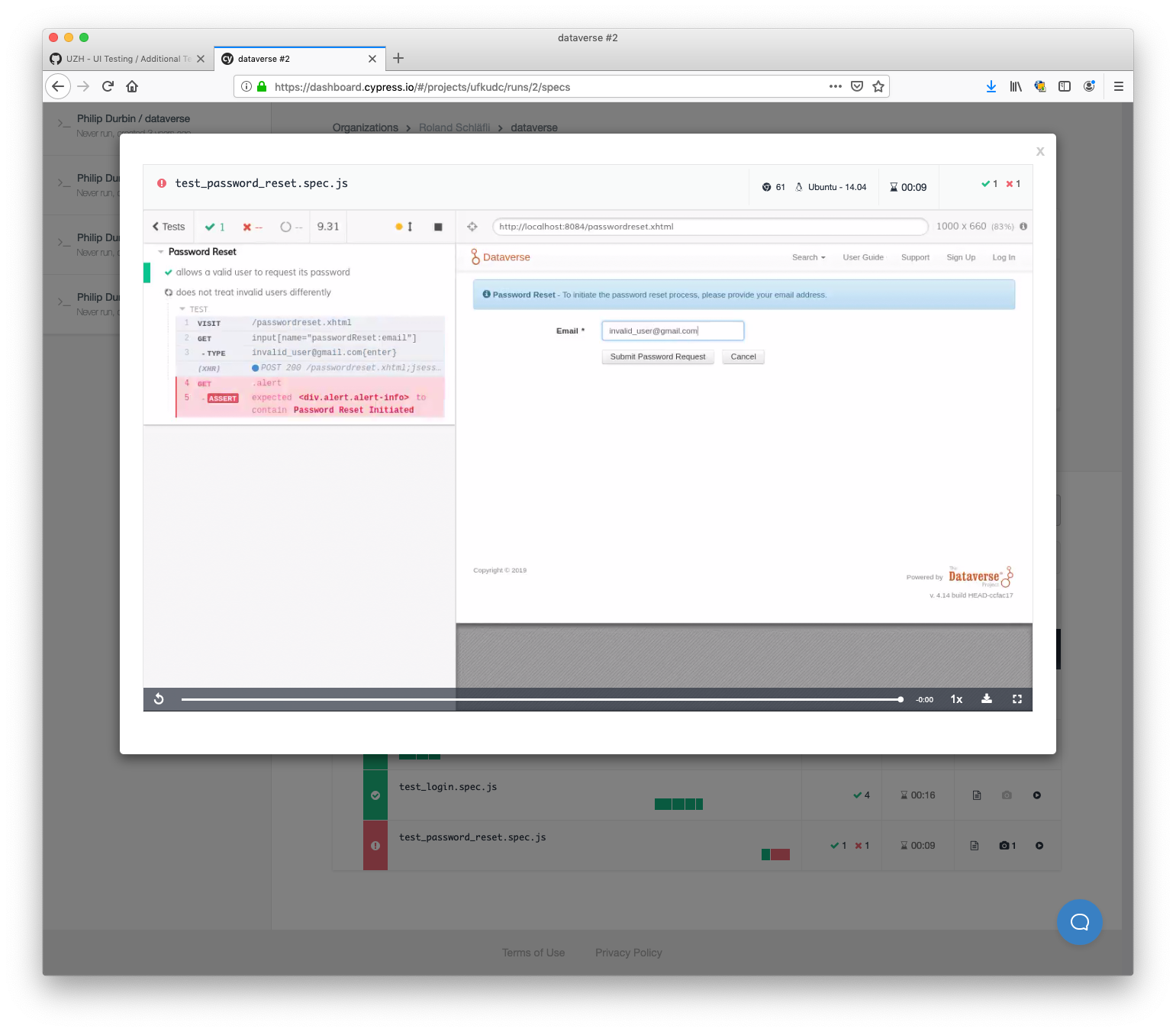

@rschlaefli wow. I logged in and got curious about the failure I saw at https://dashboard.cypress.io/#/projects/ufkudc/runs/2/specs and here's a screenshot of what I saw:

I clicked test_password_reset.spec.js and watched the video (awesome) and see that you have reproduced #5462 with a test. Here's a screenshot of the end of the video:

This is really powerful stuff! Thank you!! 🎉

Yes, I saw that this behavior is not consistent across valid and invalid emails when performing a reset and tried to capture it in this test (I did not know about the other issue regarding that problem).

On a more general note, I am not completely satisfied with how the videos are "cut" (e.g., the final screens are either not shown or for a very short amount of time). Maybe there are some settings I can change to make them less "jumpy".

Hi @rschlaefli, it looks fantastic. I'm just wondering if you have some plans to add Selenium support to the pipeline, we can share some tests.

@rschlaefli hi! Is https://github.com/IQSS/dataverse/compare/develop...rschlaefli:5846-UI-Testing still the branch you're working on? As I believe we discussed above it seems to depend on docker-aio but I was wondering how hard it would be (or if it's already possible) to point your Cypress tests at the URL of an installation of Dataverse that's already running. For example, at the moment I have Dataverse deployed to Payara 5 instead of Glassfish 4.1 for #4172 over at http://ec2-34-207-192-222.compute-1.amazonaws.com:8080 . I have not yet made any changes to the Docker files in docker-aio to get Dataverse running on Payara. I hope you see what I'm asking. I'm wondering if I can point your UI test suite against an arbitrary Dataverse server.

@4tikhonov same question but for Selenium, if you have any tests written yet. :smile:

Hi @pdurbin, yes, that's the current state. I have not had time to extend it further over the last week.

Changing the url is no problem and could trivially be extracted to a configuration parameter (e.g., an environment variable that could be set in Travis, or something else).
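
A minimal sketch of what such a configuration parameter could look like, in plain JavaScript. The variable name `DATAVERSE_BASE_URL` and the helper `resolveBaseUrl` are hypothetical, and the default of `http://localhost:8084` is an assumption about where docker-aio exposes the application locally. Cypress also has its own `baseUrl` setting, which as far as I know can be overridden with a `CYPRESS_BASE_URL` environment variable, so a helper like this may not even be needed.

```javascript
// Hypothetical configuration helper for illustration only.
// Assumption: docker-aio serves Dataverse at this address on a local machine.
const DEFAULT_BASE_URL = "http://localhost:8084";

// Reads the target URL from the environment, falls back to the default,
// and strips trailing slashes so that paths can be appended consistently.
// Throws a TypeError if the configured value is not a valid URL.
function resolveBaseUrl(env = process.env) {
  const raw = (env.DATAVERSE_BASE_URL || "").trim() || DEFAULT_BASE_URL;
  const url = new URL(raw);
  return url.origin + url.pathname.replace(/\/+$/, "");
}

// Usage: no variable set, then an already running installation.
console.log(resolveBaseUrl({}));
console.log(resolveBaseUrl({ DATAVERSE_BASE_URL: "https://demo.dataverse.org/" }));
```

In Travis, the variable could then be set per job, while local runs would keep using the default.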

If we were to point the tests at a real instance, the instance would have to be reset after each execution, as the UI test depends on the pristine state as available once docker-aio is started (i.e., the admin user can login, has one notification to mark as read, etc.). The tests can be amended as well, but there needs to be an initial state that is always the same.

Just in general, would you like me to make a PR (if yes, what changes should I make to make it "mergeable"? Documentation? What to do with the framebusting code?), or would you like to keep this separate just as a basis for future investigation? Either is fine with me; I do not explicitly have to get anything merged. I probably do not have time to rebuild everything in Selenium (and chose Cypress explicitly because it is easy to get started with and less of a burden when setting up the architecture and CI).

@rschlaefli I'm definitely interested in a pull request for all the great work you've done. When I look at https://github.com/IQSS/dataverse/compare/develop...rschlaefli:5846-UI-Testing I'm wondering how you feel about making a new branch with the following changes:

- No changes to .travis.yml but I'd still love to figure out a good place to put your changes. Maybe you could include your version as .travis.yml.future so that we have your config handy for reference.

- No changes to src/main/webapp/dataverse_template.xhtml . I know you need this hack but I'd want your pull request to only include the tests you added.

- Add tests/package-lock.json to a .gitignore file, if possible.

To summarize, I'd love to capture and merge the work you've done even if we don't use it right away. This is what we did with the old Selenium tests from years ago.

Docs would be nice but I can add them later so please don't worry about it.

Please let me know if this makes sense! The main idea is to have a new branch from "develop" similar to your existing branch. No problem if you don't have time and want me to create it. Thanks again!

@pdurbin I have started drafting a PR based on your feedback at https://github.com/IQSS/dataverse/pull/5922. I will extend it with some of my thoughts and comments and submit it as a PR later today.

@rschlaefli from a quick glance pull request #5922 looks like exactly what I asked for above!! Thank you!! 🎉

I have marked the PR as ready for review (https://github.com/IQSS/dataverse/pull/5922). Please feel free to add any comments you have about what should be added/extended. I found it quite interesting to develop these tests and have a bit of time left to do some improvements.

Pull request #5923 was merged so I'm closing this. Thanks, @rschlaefli ! Cypress is cool and modern and I especially appreciate all the continuous integration work you put into this effort. Have a good weekend! 🎉
