Cxbx-reloaded: X_D3DFMT_L6V5U5 breaks if not supported by hardware

BumpEarth uses a bump texture stored as L6V5U5

If the host hardware does not support L6V5U5 natively, Cxbx converts the texture to ARGB using its R6G5B5 to A8R8G8B8 conversion.
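For reference, here is a minimal sketch of what a 16-bit R6G5B5 to A8R8G8B8 expansion could look like. The bit layout (R in the top 6 bits, then G, then B) and the bit-replication scaling are assumptions for illustration, and the function names are hypothetical; this is not the actual Cxbx conversion routine.

```cpp
#include <cstdint>

// Scale an n-bit channel (n = 5 or 6) to 8 bits by replicating its top bits
// into the low bits, so 0 stays 0 and the maximum value becomes 255.
constexpr uint32_t ExpandBits(uint32_t value, unsigned bits)
{
    return ((value << (8 - bits)) | (value >> (2 * bits - 8))) & 0xFF;
}

// Assumed layout of one texel: RRRRRRGG GGGBBBBB
// (R in bits 15..10, G in bits 9..5, B in bits 4..0).
constexpr uint32_t R6G5B5ToA8R8G8B8(uint16_t texel)
{
    return 0xFF000000u                                   // opaque alpha
         | (ExpandBits((texel >> 10) & 0x3F, 6) << 16)   // red
         | (ExpandBits((texel >> 5) & 0x1F, 5) << 8)     // green
         |  ExpandBits(texel & 0x1F, 5);                 // blue
}

static_assert(R6G5B5ToA8R8G8B8(0xFFFF) == 0xFFFFFFFFu, "white stays white");
static_assert(R6G5B5ToA8R8G8B8(0xFC00) == 0xFFFF0000u, "top 6 bits are red");
```

Applied to L6V5U5 data, a conversion like this treats the signed U and V bits as plain unsigned colour, which is where the trouble starts.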

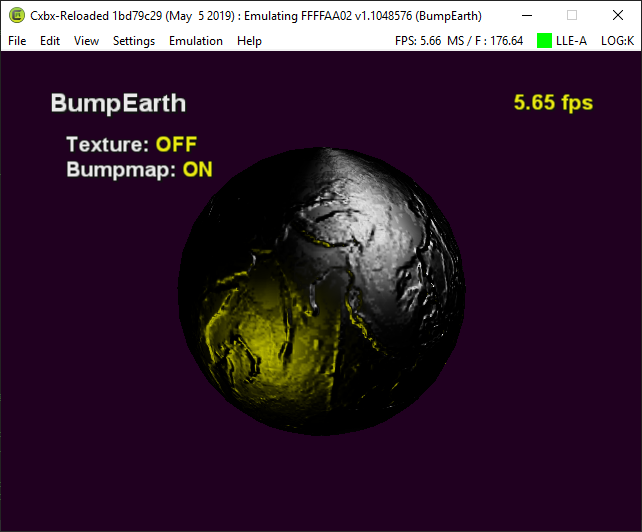

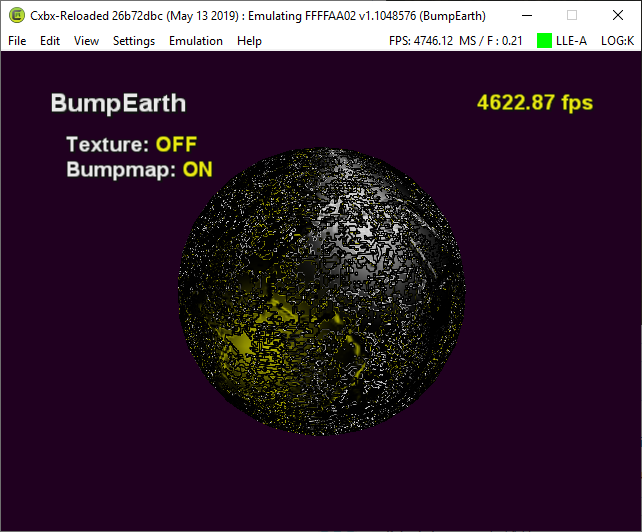

BumpEarth comparison

Hardware without L6V5U5

Software D3D Reference Device

It's tricky because this is one of a few formats that shares a value with another:

X_D3DFMT_L6V5U5 == X_D3DFMT_R6G5B5

So if there are other textures actually encoded as R6G5B5 then they should currently convert correctly

Related formats and test cases:

```
// Test case BumpEarth -> is stored as L6V5U5
D3DFMT_L6V5U5 : TEXTURE_FORMAT_COLOR_SZ_R6G5B5 // Alias D3DFMT_R6G5B5

// Test case JSRF boost dash -> seems to be stored as X8R8G8B8, works correctly
D3DFMT_X8L8V8U8 : TEXTURE_FORMAT_COLOR_SZ_X8R8G8B8 // Alias D3DFMT_X8R8G8B8

// Test case ???
D3DFMT_LIN_L6V5U5 : TEXTURE_FORMAT_COLOR_LU_IMAGE_R6G5B5 // Alias D3DFMT_LIN_R6G5B5
```

Some observations:

- Seems all Xbox LVU formats are aliases

- Seems all Xbox TEXTURE_FORMATs are RGB

I don't know enough about textures to confidently fix this.

I suspect:

- there can only be one actual format per Xbox GPU TEXTURE_FORMAT

- we should always convert to RGB textures

It could be worth testing a build that fixes BumpEarth's L6V5U5 texture, as it would break textures stored as R6G5B5, which should be visually obvious.

Then we can know if there's a way other than D3DFMT to determine how to interpret/convert textures.

However, converting to ARGB does not preserve the texture's bits per component, so it might cause issues anyway.

All 23 comments

It'd also be worthwhile to find out (which bits of) which channels are read by the bump mapping texture fetch operation. Perhaps we can suffice by using R6G5B5 on host instead, and using the Xbox texture data as-is?

In the pixel shader, LVU textures get their channels reversed, so:

R=U5

G=V5

B=L6

where U and V are offsets and L is luminance.

All channels can be used for bump operations
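To make the reversal concrete, here is a small sketch that reads the same 16-bit texel both ways. It assumes L sits in the top 6 bits and U in the low 5 bits (the same storage layout as R6G5B5); the struct and function names are made up for illustration, and the fields are returned as raw bits with no sign handling.

```cpp
#include <cstdint>

// Raw channel bits as the pixel shader would receive them (no sign handling).
struct ShaderChannels { uint8_t r, g, b; };

// RGB interpretation: R = top 6 bits, G = middle 5 bits, B = low 5 bits.
constexpr ShaderChannels ReadAsR6G5B5(uint16_t t)
{
    return { uint8_t((t >> 10) & 0x3F), uint8_t((t >> 5) & 0x1F), uint8_t(t & 0x1F) };
}

// LVU interpretation as described above: R = U5, G = V5, B = L6.
constexpr ShaderChannels ReadAsL6V5U5(uint16_t t)
{
    return { uint8_t(t & 0x1F), uint8_t((t >> 5) & 0x1F), uint8_t((t >> 10) & 0x3F) };
}

// Same bits, different channel: the top 6 bits are red in one view, blue in the other.
static_assert(ReadAsR6G5B5(0xFC00).r == 63, "top bits are R when read as RGB");
static_assert(ReadAsL6V5U5(0xFC00).b == 63, "top bits are L, seen as B, when read as LVU");
```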

You're suggesting we convert to R5G6B5 and drop some L precision?

I'm not sure of the reasons for the texture conversion constraints. Would it be buggy in certain situations because of the component size mismatch?

It occurs to me that this texture is then actually R5G5B6, yet the internal format is TEXTURE_FORMAT_COLOR_SZ_R6G5B5

Xbox has shared memory, so the texture data we fetch/convert is already guaranteed to be in the gpu internal format? Or?

This doesn't make much sense, I'm probably missing something

What I am thinking is this: since I can see no way in the API to explicitly specify which alias you are trying to use for a resource, the only way to use one of them correctly is through the corresponding render state or shader stage specifications that know how to handle the format.

I believe the D3DFORMATs for aliases specify only the storage layout and an explicit component order, while usage determines which component mapping is implied.

So R6G5B5 and L6V5U5 have the same storage layout but different implied component orders, RGB and BGR respectively, determined solely through their usage. Unless I am missing something in the API, I think an L6V5U5 texture is not treated as such until a bump mapping instruction (texbem, texbeml, ...) is encountered or a shader manually treats it as such.

Implied ordering (from shader perspective):

- X8R8G8B8: r = R8, g = G8, b = B8

- X8L8V8U8: r = U8, g = V8, b = L8

More specifically, i do not think it is until a texture stage is used with

- PS_TEXTUREMODES_BUMPENVMAP

- PS_TEXTUREMODES_BUMPENVMAP_LUM

- D3DTOP_BUMPENVMAP

- D3DTOP_BUMPENVMAPLUMINANCE

or any other method that indicates bump map usage, that the texture is treated as BGR ordered instead of RGB ordered. Until then, I think any of the aliased formats behaves as its RGB counterpart. For example, a (0, 255, 0, 0) value stored as X8L8V8U8 will simply show as a red texture if it is not used in a bump mapping operation, because the alias implies X8R8G8B8 ordering, even though under the component order implied by X8L8V8U8 it should be treated as (0, 0, 0, 255).
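The (0, 255, 0, 0) example can be sketched like this. It is only an illustration of the usage-driven idea: `SampleAliased32` and its `usedForBumpMapping` flag are hypothetical, and whether real hardware behaves this way is exactly what has not been verified.

```cpp
#include <cstdint>

// Channel values as seen by the shader.
struct ShaderColor { uint8_t r, g, b; };

// Hypothetical helper: one 32-bit texel, two implied component orders.
// Storage is (X, R/L, G/V, B/U) from the high byte down to the low byte.
constexpr ShaderColor SampleAliased32(uint32_t texel, bool usedForBumpMapping)
{
    const uint8_t hi  = (texel >> 16) & 0xFF;  // R8 or L8
    const uint8_t mid = (texel >> 8) & 0xFF;   // G8 or V8
    const uint8_t lo  = texel & 0xFF;          // B8 or U8
    // Bump usage implies r = U, g = V, b = L; otherwise plain r = R, g = G, b = B.
    return usedForBumpMapping ? ShaderColor{ lo, mid, hi } : ShaderColor{ hi, mid, lo };
}

// (0, 255, 0, 0): red as X8R8G8B8, but (0, 0, 255) in shader order as X8L8V8U8.
static_assert(SampleAliased32(0x00FF0000u, false).r == 255, "shows as red");
static_assert(SampleAliased32(0x00FF0000u, true).b == 255, "L ends up in b");
```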

As such i think we may be forced to only selectively apply this logic in the cases that definitively specify it should be used.

Hopefully i am making sense.

Unfortunately i cannot test this logic on an actual xbox to verify whether this would hold up in all cases.

Xqemu solves this for PS_TEXTUREMODES_BUMPENVMAP(_LUM) by sampling UV from the textures' RG channels - see https://github.com/xqemu/xqemu/blob/729e52662fcadc04bb790465e99c3779e6e88393/hw/xbox/nv2a/nv2a_psh.c#L630 and https://github.com/xqemu/xqemu/blob/729e52662fcadc04bb790465e99c3779e6e88393/hw/xbox/nv2a/nv2a_psh.c#L644

What do the bumpmapping samples look like in xqemu?

Can't find any on YouTube (only Dolphin and such : https://www.youtube.com/watch?v=Z-Y_rF2SS2o&t=227s )

WRT usage defining the texture

Note it is not just the order of the channels breaking bumpearth, but the format of L6V5U5 is 8bit signed instead of unsigned

Also, JSRF bump texture with X8R8G8B8/X8L8V8U8 is correct without flipping channel order

It is a bit different though as a render target and having an X component, not sure if that's significant

So how the actual bits from the texture end up in the pixel shader seems case-by-case for aliased formats?

L6V5U5 is both signed and unsigned (mixed format). L is unsigned, U and V are signed.

Once the components are used in correct order the code we have for biasing works fine.
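As a rough illustration of the signed part, the sketch below sign-extends a 5-bit U or V field and shows a bias that maps it onto an unsigned range. It assumes the fields are two's complement; the helper names are made up and this is not the existing Cxbx biasing code.

```cpp
#include <cstdint>

// Interpret a raw 5-bit field as a two's complement value in [-16, 15].
constexpr int SignExtend5(unsigned raw)
{
    return (raw & 0x10) ? int(raw & 0x1F) - 32 : int(raw & 0x1F);
}

// Bias the same field into unsigned [0, 31] so that a zero offset lands on 16,
// the middle of the range. Flipping the sign bit is equivalent to adding 16.
constexpr unsigned BiasSigned5(unsigned raw)
{
    return (raw & 0x1F) ^ 0x10;
}

static_assert(SignExtend5(0x1F) == -1, "all ones is -1, not 31");
static_assert(BiasSigned5(0x00) == 16, "zero offset maps to the midpoint");
static_assert(BiasSigned5(0x1F) == 15, "-1 sits just below the midpoint");
```

Without the bias, a raw -1 reads as 31 and a raw 0 reads as 0, so values that should cluster around the middle end up at the two extremes of the unsigned range.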

The code i posted in your pull request is from my local cxbx. Simply swapping the components worked for me, for bumpearth at least.

Need to test more.

Will update xqemu and see how it handles the bumpearth sample.

Hmm, do you have a comparison of JSRF with swapped components? i will see if i can try that as well.

Ah ok, will have to double check tomorrow and see where I went wrong with my conversion 🤔

The main thing i believe is that the components only need to be swapped if it was not converted to a similar format on the PC side.

If the driver supports L6V5U5 we may have an issue in the other direction if the texture was supposed to be R6G5B5.

Will have to see if any of my computers support it to verify that case as well.

An X indicates 'discarded' channel bits - they're there to help alignment (and could potentially be used for when texture data is shared between this, and another texture that DOES use the Alpha channel)

As for the differentiation between host support or not, please consider extracting this code block into a helper function, so that it can be called for this bump-mapping purpose too : https://github.com/Cxbx-Reloaded/Cxbx-Reloaded/blob/26b72dbc11fd31c3c9a62c21ddc2e664da86424e/src/core/hle/D3D8/Direct3D9/Direct3D9.cpp#L4813-L4855

Said function would require three arguments : XboxResourceType, D3DUsage and X_Format (best renamed into XboxFormat), and would return a bool.

i have something similar, that is how i was able to make my version work.

Have not reused it in the location i copied it from yet. Will have to check what parameters i gave it.

I have one for getting the PCFormat as well, but at the moment it goes a roundabout way through the Xbox resource and format instead of directly from the PC resource.

```cpp
inline const char * GetResourceTypeName(XTL::X_D3DRESOURCETYPE XboxResourceType);

inline bool IsHostSupportedFormat(XTL::X_D3DRESOURCETYPE XboxResourceType, DWORD D3DUsage, XTL::X_D3DFORMAT XboxFormat);

XTL::D3DFORMAT GetHostPixelContainerFormat(const XTL::X_D3DPixelContainer *pXboxPixelContainer, DWORD D3DUsage, bool* bConvertToARGB);
```

Have not gotten around to properly refactoring things. Your suggestion makes more sense. Ok modified

All just copied logic from CreateHostResource for now, but seems to work for this purpose so far.

And i guess for reference, this is what worked for me:

```cpp
bool bias = false;
bool swap_components = false;

// Only adjust when the Xbox bump format was not mapped to the matching host format
if ((format == XTL::X_D3DFMT_X8L8V8U8 && pc_format != XTL::D3DFMT_X8L8V8U8)
    || (format == XTL::X_D3DFMT_L6V5U5 && pc_format != XTL::D3DFMT_L6V5U5)) {
    bias = true;
    swap_components = true;
}

// Exchange the red and blue masks when the components need to be swapped
auto red_mask = swap_components ? MASK_B : MASK_R;
auto blue_mask = swap_components ? MASK_R : MASK_B;
```

But as mentioned, need to run through more test cases.

@revel8n The software ref D3D device should support L6V5U5, change in video settings

I can confirm using L6V5U5 works correctly with no adjustment - The components are flipped when it comes to the pixel shader, and the mixed-format is handled, AND the bias is not needed. Pic in first post

This is what I am seeing on my hardware with L6V5U5 conversion to A8R8G8B8:

Note the histogram is inverted, instead of centred around 128 / 0.5

Which is why I'm confused when you say BumpEarth works with just swapping components?

JSRF:

In JSRF we have bump info in Red Channel so swap in TEXBEM is not needed

Component swap looks like this unless I messed it up:

JSRF component swap (this definitely has the bump information unbiased in RG, not GB):

@revel8n It's probably that the JSRF test case is broken and should be disregarded..?

You seem to be absolutely right about how this should work.

If it's the case then we don't need to bias, actually we need to adjust for signed data being flipped around?

How's this coming along?

My thinking is:

- We are getting a bad texture out of JSRF currently, the bias should be considered a game-specific hack

- We should always read all ambiguous LVU textures as ARGB

- Operations requiring LVU should always swizzle and sign-correct the texture value

I haven't been able to get the shader instructions correct for this though

https://github.com/NZJenkins/Cxbx-Reloaded/commit/544e92d0bfe1a65810e7b35107a2f07e644eddad

Result:

I can't be bothered trying to improve until I can get Intel GPA shader debugging working again on my PC

Just realized doing this in a shader doesn't make sense because of filtering - we need to actually read the texture as the other format

Maybe we could load bump textures under both formats using extra texture stages available, assuming ps_2_0?

If we upgrade the video backend this won't be an issue anymore though

Reading unsupported formats in a fragment (pixel) shader is kind of tricky when no native format is available with the same bitwise memory layout. In that case, the shader language must offer bitwise operations (shifting and masking to restore channel data), which are only available from shader model 4.0 onwards, and that in turn is only supported from DirectX 10 and up.

And yes, it would certainly be possible to convert an Xbox texture towards more than one host format, and register those via additional (shadow) texture registers (since Xbox only has 4 texture stages, while Direct3D 9 has at least twice that). Do realise that this would require extending the shader conversion code too.

In this case I think we can get away with just cmp but would have to use nearest neighbour filtering, which is no good. But I haven't tried it yet.

In D3D10 apparently textures are typeless so you can use a view with a different format. Not sure what you can do if the format is unsupported though?

Can you do bitwise ops on the texture before sampling? Or load as ints and transform on the gpu?

It'd be best to first rewrite our register-combiner-to-pixel-shader conversion code to generate HLSL, so that we can drop our current pixel-shader assembly optimizing code while still using Direct3D 9.

Next, texture sampling should indeed be made such that it fetches texels by converting data words (either 8, 16 or 32 bit, possibly even more esoteric formats) towards channel data in the shader itself.

For formats that require bitwise operations, we either have to come up with a working solution that still uses floating point math (which seems unlikely, but we haven't really tried yet), OR we have to port to DirectX 10 first, to be able to interpret texels using bitwise operations.
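For what it's worth, the field split itself can be written with floor and division only. The C++ sketch below mimics the arithmetic a shader without bitwise operations would have to do; it assumes the unfiltered 16-bit texel value somehow reaches the shader as an exact number (which is the hard part, given the filtering problem mentioned earlier), and the names are hypothetical.

```cpp
#include <cmath>

// Raw (unsigned) field values recovered from one 16-bit texel.
struct LvuFields { float l, v, u; };

// Split a texel value in [0, 65535] into L (top 6 bits), V (middle 5 bits)
// and U (low 5 bits) using only floating point math, no shifts or masks.
inline LvuFields SplitL6V5U5WithFloatMath(float texelValue)
{
    const float l = std::floor(texelValue / 1024.0f);  // same as texel >> 10
    const float rest = texelValue - l * 1024.0f;       // same as texel & 0x3FF
    const float v = std::floor(rest / 32.0f);          // same as (texel >> 5) & 0x1F
    const float u = rest - v * 32.0f;                  // same as texel & 0x1F
    return { l, v, u };
}
```

All values involved stay below 2^24, so single-precision floats represent them exactly. Sign handling for U and V would still have to be applied afterwards.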

I already wrote something like that (although simpler) in our OpenGL LLE YUY renderer, see this : https://github.com/Cxbx-Reloaded/Cxbx-Reloaded/blob/0077eb7ab296a1636bac54f32fb44f6d2c35e3ae/src/devices/video/nv2a.cpp#L446-L456
