Cockroach: Backup/Restore to return job_id for tracking

Is your feature request related to a problem? Please describe.

Saw this in the testing file in the jobutils package:

133 var runningStatus gosql.NullString

134 var runningStatusString string

135 // We have to query for the nth job created rather than filtering by ID,

136 // because job-generating SQL queries (e.g. BACKUP) do not currently return

137 // the job ID.

138 db.QueryRow(t, `

139 SELECT description, user_name, descriptor_ids, status, running_status

140 FROM crdb_internal.jobs WHERE job_type = $1 ORDER BY created LIMIT 1 OFFSET $2`,

141 filterType.String(),

142 offset,

143 ).Scan(

144 &actual.Description, &actual.Username, &rawDescriptorIDs,

145 &statusString, &runningStatus,

146 )

147 if runningStatus.Valid {

148 runningStatusString = runningStatus.String

149 }

But then I saw this:

https://github.com/cockroachdb/cockroach/blob/master/pkg/jobs/jobs.go

Which has:

// ID returns the ID of the job that this Job is currently tracking. This will

// be nil if Created has not yet been called.

func (j *Job) ID() *int64 {

return j.id

}

// Created records the creation of a new job in the system.jobs table and

// remembers the assigned ID of the job in the Job. The job information is read

// from the Record field at the time Created is called.

func (j *Job) Created(ctx context.Context) error {

return j.insert(ctx, j.registry.makeJobID(), nil /* lease */)

}

... which looks it could be adapted to return the job_id upon running either a BACKUP or RESTORE command that doesn't error due to syntax.

Describe the solution you'd like

Return the job_id of a RESTORE or BACKUP command.

Describe alternatives you've considered

Everything from CDC on the jobs table and then polling an external queue to polling the table via queries against the CRDB itself adding extra load. If I had the job_id returned in the same vein as insert into foo(a , b , c ) select 1 union all 2 union all 3 returning id if foo has an auto generated id column I could then take that id and even CDC on the jobs table and never have to issue any extra job queries to the DB but query the external queue that CRDB is pushing job statuses to instead.

Additional context

Add any other context or screenshots about the feature request here.

All 30 comments

I think in general every bulk i/o statement (all of them produce jobs) should have this behavior.

All the bulk I/O statement _do_ return the job ID when they return, however currently that is when the job completes. It sounds like what you're looking for is something we've discussed a few times -- an optional syntax to spawn the job and then immediately return the ID rather than waiting for it to complete.

Some prior discussion of this over here: https://github.com/cockroachdb/cockroach/issues/25129#issuecomment-407647876

@dt That's exactly what I am hoping for, a job_id to be returned, so that I can poll for success.

Did this get added to a timeline? Or any update if possible would be nice. I imagine everyone is busy just would like to know if this is getting looked at or not -- basically how it is triaged so I can take next steps.

@gigatexal this hasn't been scheduled on our roadmap yet. Is this is something that you'd like to contribute to? thanks

Yeah I will make an attempt with a PR in some days, weeks, hopefully days.

thanks! we're happy to help you.

Looking forward to it!

On Tue, Mar 19, 2019 at 5:11 PM vivekmenezes notifications@github.com

wrote:

thanks! we're happy to help you.

—

You are receiving this because you were assigned.

Reply to this email directly, view it on GitHub

https://github.com/cockroachdb/cockroach/issues/35048#issuecomment-474448735,

or mute the thread

https://github.com/notifications/unsubscribe-auth/AAk8rVIEeeS9hJlgQVqK33D14SM7Os6yks5vYQwmgaJpZM4bChjY

.

Backed up doing our mysql to crdb migration so not enough free time to look at this will update when I start.

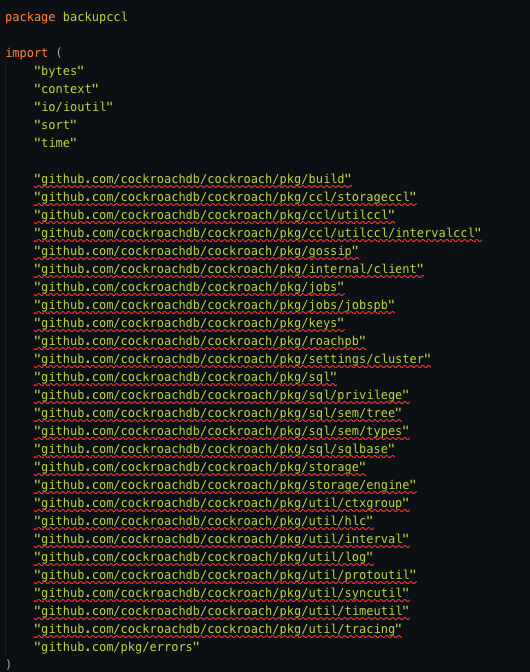

A bit of a go noob here and have only ever done stuff with project using go mod files. How do I get around this, none of the packages are seen in my fork of CRDB

@gigatexal all those packages do exist.

Perhaps you didn't check out the project into your GOPATH?

Ahh you know what that’s likely it! I’ll fix that.

On Thu, Apr 11, 2019 at 5:47 AM Jordan Lewis notifications@github.com

wrote:

Perhaps you didn't check out the project into your GOPATH?

—

You are receiving this because you were mentioned.

Reply to this email directly, view it on GitHub

https://github.com/cockroachdb/cockroach/issues/35048#issuecomment-481954128,

or mute the thread

https://github.com/notifications/unsubscribe-auth/AAk8rdffUq6jjVdo6bBHpAc0NfOBtGyaks5vfrBRgaJpZM4bChjY

.

So I've got some time on my vacation and I want to take a stab at this. Is there a doc on how to do local builds of CRDB (I'm only familiar with go mod based workflows aka Golang 1.12 and above)?

Yep! CONTRIBUTING.md and first-pr.md talk a bit about getting local development setup and how to get started!

For this one, I think a we'd want to start with a grammar change in sql.y to add an optional RETURNING ID or something modifier (we could also use the existing options syntax and add an opt to the map, which would look like e.g. WITH returning = 'id' or similar, but that could get confusing since the opts are unordered and are strings, the possible options and values are not in the parser and thus missing from the generated docs, etc.)

If the returning ID option is passed, the question is if we should _only_ return the ID in an immediate OK response, or if we should return it as the first row of a streaming result and still send the rest of the output (or error) as the job runs and finishes. The later seems nice, since you'd still get the result if you wanted it, but many drivers don't have great support for streaming results, and might handle the simpler approach of the former better. I don't have a great sense for the current state of pq driver support for streaming so I don't really know which makes more sense. @rolandcrosby ?

One of the main motivations I've seen behind feature requests like this (for all kinds of bulk I/O jobs) is to provide a consistent, predictable experience that can be relied on in scripts and other external tools interacting over SQL. As such, I would rather err on the side of always immediately returning a job ID instead of starting a long-running streaming response. (If an error occurs during planning before a job ID is created, that's fine - we should return that error immediately instead.)

Thanks for looking into this, @gigatexal!

I’m a still a Golang rookie but hopefully I can make some headway and the code I contribute doesn’t look too terrible.

I’ll go the always return the ID route.

@gigatexal Sorry if I was unclear - I was responding to @dt's comment. We should definitely retain the existing behavior by default (block until the job is done). David and I were discussing whether RETURNING ID should return the ID immediately, or return it and keep a streaming connection open to send progress back. We decided that RETURNING ID should return immediately after the job is created.

Ahh...makes sense.

If I have issues building CRDB from source where should I go? the Gitter? It probably doesn't make sense to crowd this ticket with unrelated things.

Yep that works! The gitter channel usually has a couple Cockroach Labs engineers hanging out as does the #cockorachdb channel over on the Gophers slack for those that prefer to stick to Slack. Neither of those are _officially_ supported channels, but there are _usually_ a few engineers who have volunteered to hang out in them if you're looking for real-time chat.

The _official_ place for developer questions is the web-based forums -- the forums are a bit less real-time than the chat channels, but we make a bit more of an official effort to monitor them.

From what I currently understand the file backup.go found in pkg/sql/sem/tree/backup.go defines the structure of a backup statement and which is where I found the WITH and how that works.

My thought is that if I could figure out where in pkg/ccl/backupccl/backup.go to add some logic I could check for a Key called returning and a value of id using the KVOption struct that I think gets parsed and then have a sort of async path that puts the actual backup in a goroutine (not sure if that's needed yet, thinking out loud still) and returns just the id.

Am I on the right track? Are there other areas to look at?

Close. sem/tree is where the AST is defined, so if you wanted to add a new, separate flag in the SQL grammar. If you just want to add it as one of the options that can be passed via WITH, you don't actually need to edit the AST or parser at all -- you can actually just add background or whatever to the backupOptionExpectValues map of allowed options in pkg/ccl/backupccl/backup.go (with false as the value since it does not expect a value -- it is just present or not present).

pkg/ccl/backupccl/backup.go is also where you'd make the actual behavior change -- I'd read the flag from the opts map in backupPlanHook and then depending on the that value you'll need to do something a little different from the usual StartJob call -- you basically just want to skip the <-errCh which actually blocks on the job running but you'll also need to actually get the job ID and push a row (with the right types) onto resultsCh containing the job ID.

I think that's probably a good starting point -- a slightly fancier approach would be to add an actual flag to the AST node and extend the parser in sql.y, instead of just using the WITH kvoption map. I think that approach could let you switch on the AST flag to return a different header before getting into the statement execution (unlike the opts which aren't available until execution because of details around prepared statements and bound args), so that approach could let the result columns types actually different when running in background (e.g. just one job_id column). That seems nice, but I'd probably start with the simpler one to make sure it does what you want before investing in messing with new syntax.

I'm still trying to wrap my head around what all goes on and how in the backup.go file, but I've made to my local fork the first change:

var backupOptionExpectValues = map[string]sql.KVStringOptValidate{

backupOptRevisionHistory: sql.KVStringOptRequireNoValue,

returningID: sql.KVStringOptRequireNoValue, // eg. WITH return_id;

}

If one wanted to trace the execution of code in CRDB during something like a backup being executed how would one go about that? Add logging statements?

URI: to,

BackupDescriptor: descBytes,

},

Progress: jobspb.BackupProgress{},

})

if err != nil {

return err

}

return <-errCh

}

return fn, header, nil, false, nil

I don't see how if/else'ing around the return <-errCh won't prevent the job from completing -- could be my rookie Golang skills or that I don't understand the codebase all that well (likely both).

StartJob above there fires the job off, but it is being run _by the job registry_, and not by the thread here.

errCh is a channel on which the an error from the job execution will be sent.

<-errCh blocks the thread running that hook -- which started the job -- until something is sent on that channel or until the channel closes. That <- is what waits until the job is done before returning.

I think what we probably want to do is tell StartJob about this though, so that it a) sends the job ID on resultsCh right away and then b) closes errCh (to unblock the caller). That might end up clearer than having a conditional block on errCh in the caller. We'd need to make sure that the execution code handles errCh and resultsCh being closed when it does get to the actual end of the job too in that case.

Ahh I can work with that

I’m super behind on this as I’ve not had time to really touch it now that I’ve been back at work

Cockroach 20.2 supports DETACHED keyword for Backup and restore, which executes the job, but does not wait for it to finishing, immediately returning the job ID. https://www.cockroachlabs.com/docs/v20.2/backup.html