Botframework-webchat: Error synthesizing speech when a QnA Maker answer includes a QR code image

Hello!

Our team was working on a project that uses the Web Chat bot in a React application, with speech-to-text and text-to-speech enabled for a bot connected to QnA Maker.

I was able to connect the speech features, but I noticed a strange bug: when the response contains a QR code, speech synthesis fails entirely. The QR code is embedded as an image in the QnA Maker response; I'm not sure whether this is expected behavior. You can reproduce it as follows.

Navigate to:

https://wow-kiosk.netlify.app/kiosk

In the bot field you can test the speech functionality by just saying "Hello" and it should synthesize speech correctly.

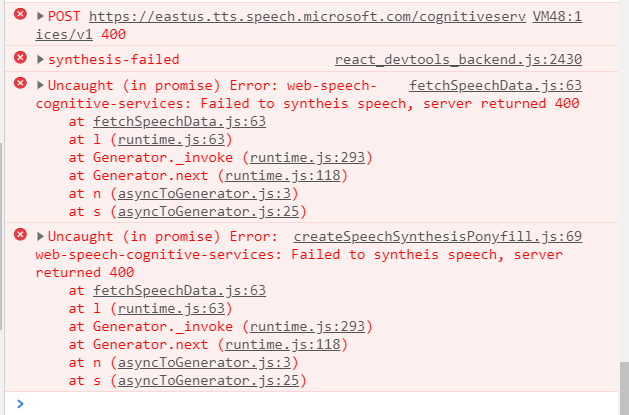

However, when you ask "Tell me about Week of Welcome", the following error pops up in the console.

I would appreciate any feedback on this issue. What could be a possible workaround? Should the library be able to handle embedded images?

Best,

Oleg T.


Screenshots

Version

To determine what version of Web Chat you are running, open your browser's development tools, and paste the following line of code into the console.

    [].map.call(document.head.querySelectorAll('meta[name^="botframework-"]'), function (meta) { return meta.outerHTML; }).join('\n')

If you are using Web Chat outside of a browser, please specify your hosting environment. For example, React Native on iOS, Cordova on Android, SharePoint, PowerApps, etc.

Describe the bug

Steps to reproduce

- Go to '...'

- Click on '....'

- Scroll down to '....'

- See error

Expected behavior

Additional context

[Bug]


@tielushko It isn't clear how you're integrating speech, since you haven't provided any repro steps. However, this doesn't sound like a Web Chat issue; it sounds more like a limitation of the speech service you're using, so unfortunately it's not something our team can directly help you with.

Additionally, the QnA Maker service itself doesn't support images at this time. You can work around this limitation by hosting the image elsewhere and linking to it with Markdown, but then you need to make sure you're using a client that renders Markdown. (I'm not 100% certain whether Web Chat does, but you can test this quickly by having your bot send an image using Markdown. If Web Chat does not, you can add logic in your bot code to add the image as an attachment to the reply activity, which Web Chat definitely knows how to render.)
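A minimal sketch of the attachment approach, written as a plain JavaScript object that follows the Bot Framework Activity schema (`type`, `text`, and `attachments` entries with `contentType` and `contentUrl`). The helper name `buildImageReply`, the image URL, and the file name are hypothetical placeholders; in a botbuilder-js bot you would typically pass an object like this to `context.sendActivity`.

```javascript
// Hypothetical helper: build a reply that carries the QR code as an image
// attachment instead of embedding it in the answer text.
function buildImageReply(text, imageUrl) {
  return {
    type: 'message',
    text, // short text shown (and spoken) alongside the image
    attachments: [
      {
        contentType: 'image/png', // assumes the hosted QR code is a PNG
        contentUrl: imageUrl,     // image hosted outside QnA Maker
        name: 'qr-code.png'
      }
    ]
  };
}

const reply = buildImageReply(
  'Scan the QR code for details!',
  'https://example.com/wow-qr.png' // placeholder URL
);
console.log(JSON.stringify(reply, null, 2));
```

Keeping the image out of `text` also keeps it out of whatever is sent to speech synthesis.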

See:

- this answer on Stack Overflow by one of our support engineers for more info on QnA service + images

- 06.using-cards bot in our samples repo for how to use attachments in activities

If you want additional help, I encourage you to leverage our community on Stack Overflow, as speech synthesis behavior is out of the purview of this team.

@Zerryth Sorry, I didn't fill out the reproduction steps under the template header; I pasted them in the main body of the issue instead. I'll repeat them in this comment.

Navigate to:

https://wow-kiosk.netlify.app/kiosk

In the bot field you can test the speech functionality by just saying "Hello" and it should synthesize speech correctly.

However, when you ask "Tell me about Week of Welcome", the following error pops up in the console.

I added the image in QnA Maker using the Insert Image option (Ctrl+G) in the qnamaker.ai knowledge base editor and pasted the src attribute from the QR code generator tool. The bot has no problem rendering the QR code on the screen. The problem is with the speech capabilities, which I implemented using createCognitiveServicesSpeechServicesPonyfillFactory() as part of the ReactWebChat component.

Would you recommend submitting the issue to the Cognitive Services team? I would appreciate your help.

Best Regards,

Oleg T.

I'm not even certain how to contact the Speech Services team (for example, whether they have a GitHub repo); however, following a link in their documentation brought up this page:

https://feedback.azure.com/forums/932041-azure-cognitive-services

And on the right-hand side you can see different cognitive services, including speech.

You can leave your feedback there, and they will prioritize issues based on popularity and severity.

Their documentation also mentions a Stack Overflow tag that they monitor.

@Zerryth For QnA Maker, do you know whether the speak field can be customized when the underlying bot responds?

Specifically, when the bot replies (schema here), can QnA Maker return a different value for the speak field while keeping the QR code in the text field?

Currently, the bot response is too long or complex for Cognitive Services to synthesize. The Activity object is copied below:

    {
      "type": "message",
      "id": "3cg...|0000006",
      "timestamp": "2021-04-09T17:18:15.0723723Z",
      "channelId": "directline",
      "from": {
        "id": "...",
        "name": "..."
      },
      "conversation": {
        "id": "3cg..."
      },
      "locale": "en-US",
    + "text": "**Week of Welcome (WOW)** is week-long USF celebration to welcome new and returning students each fall and spring! WOW features a series of events and programs for new and returning Bulls to make lasting connections and have a successful start to your semester. New Student Connections (NSC) coordinates Week of Welcome (WOW) on the Tampa campus. SCAN THE QR Code for details!\n\n",
      "inputHint": "acceptingInput",
      "suggestedActions": {
        "actions": []
      },
      "replyToId": "3cg...|0000005"
    }

Without the speak field set, the text field (marked with + above) is used for synthesis. That means we send SSML like the following to Cognitive Services, which is probably too long or complex for it to synthesize.

    <speak version="1.0" xml:lang="en-US">
      <voice xml:lang="en-US" name="Microsoft Server Speech Text to Speech Voice (en-US, AriaNeural)">
        <prosody pitch="+0%" rate="+0%" volume="+0%">
          **Week of Welcome (WOW)** is week-long USF celebration to welcome new and returning students each fall and spring! WOW features a series of events and programs for new and returning Bulls to make lasting connections and have a successful start to your semester. New Student Connections (NSC) coordinates Week of Welcome (WOW) on the Tampa campus. SCAN THE QR Code for details!
        </prosody>
      </voice>
    </speak>

As a result, Cognitive Services returned HTTP 400.
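To make the point above concrete, here is a rough sketch of how a speak string ends up inside an SSML envelope of the shape shown. This is not Web Chat's internal code; `escapeXml` and `toSsml` are hypothetical names, and the voice name is simply copied from the example. It escapes XML special characters, because raw markup such as an image tag in the text would otherwise make the SSML document invalid. Note that Markdown such as `**` is not markup to SSML, so it passes through untouched and still reaches the speech service.

```javascript
// Escape the five XML special characters so arbitrary answer text cannot
// break the SSML document (for example, a raw "<img ...>" tag or a URL with "&").
function escapeXml(text) {
  return text
    .replace(/&/g, '&amp;') // must run first so later entities are not re-escaped
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&apos;');
}

// Wrap plain text in an SSML envelope shaped like the example above.
function toSsml(
  text,
  voice = 'Microsoft Server Speech Text to Speech Voice (en-US, AriaNeural)',
  lang = 'en-US'
) {
  return [
    `<speak version="1.0" xml:lang="${lang}">`,
    `<voice xml:lang="${lang}" name="${voice}">`,
    '<prosody pitch="+0%" rate="+0%" volume="+0%">',
    escapeXml(text),
    '</prosody>',
    '</voice>',
    '</speak>'
  ].join('');
}

console.log(toSsml('Week of Welcome starts Monday & runs all week.'));
```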

@compulim Good point, I had failed to mention the speak field. QnA Maker itself doesn't provide a way to set the speak field, but the customer could definitely add business logic to handle QR code responses properly before sending the reply activity to the user.

@tielushko See this line in sample 11.qnamaker. You would use QnA Maker to get the QnA result; your bot can then add custom logic to extract the specific words you actually want spoken, instead of the long, garbled text that William gave above as an example of what can break Cognitive Services.

@Zerryth @compulim Thank you for helping me figure it out. I can now see how the link for the image would fail to be synthesized by the speech service. I will try to find a workaround in the bot logic that prevents the link elements from being sent to the speech service.
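A minimal sketch of that workaround, assuming the bot receives the QnA Maker answer as a Markdown string. The hypothetical `toSpeakableText` helper removes images (Markdown images or raw HTML tags such as `<img>`), keeps only the labels of Markdown links, drops bare URLs and emphasis characters, and collapses whitespace. The regular expressions are deliberately simple and will not cover every Markdown construct. The result would go into the reply's speak field, while text keeps the original answer.

```javascript
// Hypothetical helper (not part of any SDK): reduce a Markdown/HTML answer
// to plain text that is friendlier to speech synthesis.
function toSpeakableText(markdown) {
  return markdown
    // Remove Markdown images such as ![QR code](https://...) or data: URIs.
    .replace(/!\[[^\]]*\]\([^)]*\)/g, '')
    // Remove raw HTML tags such as <img src="...">.
    .replace(/<[^>]+>/g, '')
    // Replace Markdown links [label](url) with just their label.
    .replace(/\[([^\]]*)\]\([^)]*\)/g, '$1')
    // Remove any remaining bare URLs.
    .replace(/https?:\/\/\S+/g, '')
    // Strip Markdown emphasis characters (* and _).
    .replace(/[*_]/g, '')
    // Collapse runs of whitespace (including newlines) into single spaces.
    .replace(/\s+/g, ' ')
    .trim();
}

const answer =
  '**Week of Welcome (WOW)** is week-long USF celebration. ' +
  'SCAN THE QR Code for details!\n\n![QR code](https://example.com/wow-qr.png)';

console.log(toSpeakableText(answer));
// Week of Welcome (WOW) is week-long USF celebration. SCAN THE QR Code for details!
```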

Thank you so much! Have a wonderful rest of your week!

You want to set the Activity.speak field. See the Activity schema in botbuilder-js (I'm not sure what language your bot is in, but the speak property is available in all SDK languages).
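A minimal sketch of a reply that sets both fields, written as a plain object following the Activity schema; `buildReplyWithSpeak` and the sample strings are hypothetical. Per the discussion above, when speak is set it is used for synthesis instead of text, so the QR code can stay in the displayed answer. In botbuilder-js, `MessageFactory.text(text, speak)` produces a message activity with both fields set in one call.

```javascript
// Hypothetical helper: display the full Markdown answer (including the QR code)
// while giving speech synthesis a short, plain string.
function buildReplyWithSpeak(displayText, spokenText) {
  return {
    type: 'message',
    text: displayText,  // rendered by Web Chat
    speak: spokenText,  // used for speech synthesis instead of `text`
    inputHint: 'acceptingInput'
  };
}

const reply = buildReplyWithSpeak(
  '**Week of Welcome (WOW)** is a week-long USF celebration. SCAN THE QR Code for details!\n\n' +
    '![QR code](https://example.com/wow-qr.png)', // placeholder image URL
  'Week of Welcome is a week-long USF celebration. Scan the QR code on screen for details.'
);
console.log(JSON.stringify(reply, null, 2));
```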