Azure-sdk-for-net: Service Bus subscriber stops processing messages

Describe the bug

After 5000 messages are processed by the subscriber, the subscriber doesn't accept any more messages.

No exception are thrown by the subscriber.

Async message delegate doesn't receive any more messages.

Any sync/async operation locks indefinitely.

Exception or Stack Trace

No exception are thrown.

To Reproduce

Execute snippet below

Code Snippet

static readonly ConcurrentBag<Tuple<string, string>> messageRefs = new ConcurrentBag<Tuple<string, string>>();

static void Main(string[] args)

{

/*

* Subscription Details

* Max delivery count: 10

* Lock Duration: 2minutes

*/

var receiver = CreateSubscriptionClient();

var options = new MessageHandlerOptions(ExceptionReceivedHandler)

{

MaxConcurrentCalls = 5,

AutoComplete = false,

};

receiver.RegisterMessageHandler(async (message, cancellation) =>

{

try

{

messageRefs.Add(new Tuple<string, string>(message.MessageId.ToString(), message.SystemProperties.LockToken));

throw new Exception("something bad happens...");

await receiver.CompleteAsync(message.SystemProperties.LockToken);

}

catch (Exception)

{

//we do not want to return the message immediately (receiver.AbandonAsync)

//let the message lock (2min) expire before this message being re-processed

}

}, options);

while (true) //monitor

{

Console.WriteLine($"Message read: {messageRefs.Count}");

if (MONITOR_VERBOSE)

Console.WriteLine(

String.Join(

Environment.NewLine,

messageRefs

.GroupBy(x => x.Item1)

.OrderByDescending(x => x.Count())

.Take(10)

.Select(x => $"Id: {x.Key} | Locks: {x.Count()}")));

Thread.Sleep(5000);

}

}

Expected behavior

We are aware of the max 5000 concurrent connections to subscriber entities while using the amqp protocol. We are although expecting those connections to be released, when the message lock expires.

Specifically, we want the lock (30secs) to expire before the message being visible again.

As indeed highlighted from the previous snippet, after the lock expires, the message is picked up again by the same subscriber, but the previous connection is not released, bringing the subscriber into a locked state

Additional context

Same behavior from WindowsAzure.ServiceBus library.

Setup (please complete the following information):

- OS: Win10

- 3.4.0

All 32 comments

Thank you for opening this issue! We are routing it to the appropriate team for follow up.

CC: @AlexGhiondea

@federicobarera could you please share a repository with a simple repro? Thanks.

Hi @SeanFeldman

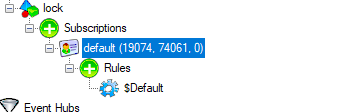

You can find a repro with instructions here:

https://github.com/federicobarera/bus-lock

Thanks

@federicobarera I'm somewhat confused by your code as I don't quite understand what are you trying to demonstrate. The messages sent to the topic are retrieved in your code. The code throws an exception, which means the retrieved messages must have their lock duration to expire to be retried again. Which is what's happening. Those messages are all eventually ending up in a DLQ.

Some of your assumptions seem to be wrong. Specifically:

- Alternatively, as per this docs, we would expect service bus to throw Number of concurrent connections on a namespace exception to be thrown.

You have a single connection in this code. Each retrieved message is not a connection.

If all of your messages will dead-letter, you will not be receiving anything. Is that the issue?

@SeanFeldaman

No, the issue is: after 5000 messages are processed and failed, the service bus stops processing any further message, as after 5000 messages AMQP is not pulling any more messages

I'll try to get you a service bus connection with the right configuration to replicate

@SeanFeldman

I could not find an email address where to privately send a connection string, but here some more clarification on the behavior we are experiencing.

The receiver stops receiving messages after 5000 messages are processed and failed:

My assumption is, because we are not calling .Abandon() on failures, we are reaching the limit of 5000 concurrent connection to sb entities limit. If that is the case, why the message lock expiration doesn't trigger an automatic abandon (freeing up 1 connection) and/or throws exception regarding reaching the quota

Those messages are all eventually ending up in a DLQ.

Yes, but that is not the issue. The issue is that legit messages are not processed anymore since the receiver is locked.

EDIT:

Regarding the console output, I can now see why the output could be found confusing. Probably renaming to

Total messages read: 5000

MessageId: [...] | This message was locked: [n] times.

P.s The output contains the number of times the same MessageId got locked just to prove that the messages' locks are indeed expiring and get re-processed.

The reason I'm questioning the assumption of 5,000 connections is that you only have 1 connection in your code. It's created once when the subscription client is created. The connection is either stay as-is and you receive all messages through that connection. Or it gets closed and then you'd see an exception when trying to use it.

Have you tried a different entity/namespace? If you want to share anything over email - feldman.sean at gmail.com

Hi @SeanFeldman ,

This is a problem that has been haunting us in production for a long time, on different entities and namespaces. The only hints of this occurring were, high volumes + high volume of failures = receiver locked up not processing messages.

We finally managed to reproduce (code provided) and the constant that is always coming back is this magic number: 5000.

A quick google: "azure service bus 5000", points to: https://docs.microsoft.com/en-us/azure/service-bus-messaging/service-bus-quotas

Subsequent receive requests are rejected, and an exception is received by the calling code. This quota applies to the combined number of concurrent receive operations across all subscriptions on a topic.

That is why I assume that letting the lock expire, rather than abandoning the failing messages, is essentially causing the internals of the protocol to lock.

My only note in this case is that we are not receiving any exception by the subscriber, it just stops receiving messages in the delegate.

I'll send you the ConnectionString of our test sb if you want to run the replica against it.

EDIT:

maybe is this ticket better suited here? https://github.com/Azure/azure-amqp

maybe is this ticket better suited here? Azure/azure-amqp

Perhaps. Note that additional connections would not just lock up your receiver, but you'd get an exception. And, as I mentioned earlier, with a single receiver it's a single connection, not 5000. You can take a memory dump when it happens and see how many connections were created. Or, look at the TCP connections and how many you have established from your machine.

Was this every resolved or figured out? I am seeing the exact same issue. I use the SubscriptionClient to gain access to a topic and register an event handler using the RegisterMessageHandler method.

The topic in question has currently around 10k messages but on the 5000th message it simply stops processing them with no exception.

I am using the default Peak setting and not abandoning or completing the messages.

Basically:

private async Task ProcessMessagesAsync(Message message, CancellationToken arg2)

{

await _subscriptionClient.AbandonAsync(message.SystemProperties.LockToken);

}

Vs:

private Task ProcessMessagesAsync(Message message, CancellationToken arg2)

{

return Task.CompletedTask;

}

In contrast, if I do call AbandonAsync, the message handler is called past the 5000th message and seems to work as expected.

Of note however is that calling AbandonAsync vs simply completing successfully is significantly slower.

I am using version 3.4 of the Microsoft.Azure.ServiceBus package.

Hi @james-jw , no i fear we did not get to the bottom of it.

The only reasonable explanation is that not "completing" or "abandoning" the messages keeps them "in transit" which eventually brings amqp to a lock when 5000 messages have been engaged.

On another note, I opened the ticket since this strategy of not abandoning failing messages is something that we intentionally wanted to use to automatically retry the message once the lock timeout expired. What is your reason behind non abandoning/completing messages?

We have a very similar experience but ours is more intermittent and difficult to get any reproducible steps. My collegeue has documented some of this here https://github.com/Azure/azure-sdk-for-net/issues/8291

Thanks for the feedback! We are routing this to the appropriate team for follow-up. cc @jfggdl

Any good working solution of this problem yet? I am also facing the same issue but the interesting part is that now it is happening with a random number, not with 5000th message.

No, this is a specific behavior and, right or wrong, most likely lies in amqp. I have provided a console app to replicate the issue.

We ended up manually abandoning the messages and rely on delay visibility and custom retry count

@federicobarera

Sorry for extremely late response. Somehow lost the visibility of this thread.

This is the behavior on our implementation of AMQP.

AMQP is a stateful link and keeps the state of all the messages received in its cache. So it is always advisable to abandon/complete the message when you are done processing it. If you don't the state reaches its limit of 5000. This is unfortunately by design.

Fixes -

- Abandon / Complete / DeadLetter the message to free up the "amqp-delivery" on your receiver.

- Alternatively, recycle the receiver which will create a new link, and hence a new 5000 limit.

Based on this discussion, I am going to say you are only on 1 connection and this has nothing to do with connection limit.

I'll add a backlog item on the service team's board to figure out a better solution for this so that it becomes evident on the client.

Two approaches for long term fixes -

- Client realizes it reached 5000 window size and next Receive() will throw an exception.

- Alternatively, server will close the link when this happens.

Given this is a client issue, I'd think it's better for the client to log/throw.

This is a client bug which impacts the server as well. Think about customers using native AMQP library instead of our library which sets the value to 5000. If they set the value to int.MaxValue and forget to acknowledge the message, the service will also end up keeping the whole state in memory (as is required by AMQP). I don't want to propose final solution right now as it needs some discussion and thinking through.

Hi @nemakam , thanks for coming back on this one. Late is better than ever :)

As explained in this thread, we initially intentionally avoided releasing the messages to leverage the peak lock timeout before the message becoming visible again. That was a crude way to time retries over transient errors, using the oob delivery count increase and the automatic deadlettering after n retries.

We then misinterpreted the docs on that 5000 limit.

Finally we ended up implementing our own logic for delayed retries and dead lettering.

Regarding the point you made about protecting the server from "dumb" clients, I can give you my 5cent as the library consumer.

We would have really preferred having the library throwing exceptions back to us, rather than having production systems locking up because of a "unintended" utilization of the library itself.

That would have saved us time and headaches trying to get to the bottom of it.

I hope my feedback helped!

Cheers

@federicobarera ,

Don't get me wrong. Its clear that the experience needs to get better and client needs to get an exception somehow, and just keeping it blocked without having an understanding of what's happening within is unintended.

It was never "chosen" to get blocked like this. Its not even a decision of our client that this is happening. This is how AMQP as a protocol works. Protocol itself puts a hard limit on how many active messages can be there without being acknowledged.

As a SDK library, we can just try to make the experience smoother.

Hi, we're sending this friendly reminder because we haven't heard back from you in a while. We need more information about this issue to help address it. Please be sure to give us your input within the next 7 days. If we don't hear back from you within 14 days of this comment the issue will be automatically closed. Thank you!

Excuse me, but why was @nemakam unassigned and this issue was closed? Has this been fixed through a pull request that is not linked from here?

I got a feeling that everyone agreed that this should be addressed in some way but then @jsquire added lables that probably prompted the bot to close this?

Can this be repoened so that we can decide on the solution and implement it? This issue is sure to surface for many more people yet if not addressed, it sure did not so long ago for me.

Also as it stands, AbandonAsync throws MessageLockLostException right now when old lock token is provided which in conjunction with what was discussed here would mean these messages cannot be discarded anymore and therefore any application (or reciever) cannot clear lost messages from the AMQP cache and is bound to carry them around, again freezing when 5000 messages are reached. Did I get that right?

I've done some more testing and it actually turns out that AbandonAsync deletes the message from the AMQP cache even if it throws MessageLockLostException. Therefore the limit is not there. We just need to finish the messages properly.

That said I believe this should still be reopened and the underlying issue that the SDK silently stops working should be addressed. I don't want to force solutions since @nemakam was before wanted to think about this more deeply before making changes. but I believe the SDK should raise an exception after the 5000 messages.

Hi, we're sending this friendly reminder because we haven't heard back from you in 7 days. We need more information about this issue to help address it. Please be sure to give us your input. If we don't hear back from you within 14 days of this comment the issue will be automatically closed. Thank you!

Ok, what more information about this issue do you need to help address it? I can't think of anything that needs to be added so I don't understand the needs-author-feedback tag, can it be removed?

I have the exact same issue. We have an Azure Function with a service bus trigger. We only run CompleteAsync when a message is successfully handled. If there is an error, the exception is hidden and we just let the code run out. This is desired by us because then the message will be retried after the lock timeout.

If we see a lot of failures in the function, e.g. because some external resource is down, the function stops working after a while and we need to restart it. There are no errors recorded anywhere.

The alternative, that we are using for queues, is to complete the message and add a new message with a schedule to the queue. This approach cannot however be using with a topic that could have several subscribers.

@axisc, is this something the service team should investigate?

+1

I'd suggest that this limitation is at least documented here. And if this limit can be configured on the backend, that this is exposed as a namespace property.

I have the exact same issue. We have an Azure Function with a service bus trigger. We only run CompleteAsync when a message is successfully handled. If there is an error, the exception is hidden and we just let the code run out. This is desired by us because then the message will be retried after the lock timeout.

If we see a lot of failures in the function, e.g. because some external resource is down, the function stops working after a while and we need to restart it. There are no errors recorded anywhere.

The alternative, that we are using for queues, is to complete the message and add a new message with a schedule to the queue. This approach cannot however be using with a topic that could have several subscribers.

We are facing the same issue. Is there a solution to this?

We are facing the same issue. Is there a solution to this?

It looks like it was fixed in PR https://github.com/Azure/azure-sdk-for-net/pull/16163 by @yvgopal.

Most helpful comment

This is a client bug which impacts the server as well. Think about customers using native AMQP library instead of our library which sets the value to 5000. If they set the value to int.MaxValue and forget to acknowledge the message, the service will also end up keeping the whole state in memory (as is required by AMQP). I don't want to propose final solution right now as it needs some discussion and thinking through.