Azure-sdk-for-js: Pause and resume options for Azure Blob uploads in JavaScript

How can I pause an in-progress upload of browser files to an Azure Storage account, and resume it when required, using the Azure Storage Blob JavaScript (v12) library?

Thanks for the feedback! We are routing this to the appropriate team for follow-up. cc @xgithubtriage

Add @ljian3377

The Storage JS SDK doesn't support pause and resume natively.

However, if you are using a method like uploadFromStream() in Node.js, you can pause the Node.js Readable stream and resume it later.

For a browser method like uploadFromBrowserData(), which accepts a File or Blob, you can create a custom PauseResume policy and inject it into the pipeline. The policy can simply hold outgoing requests until the upload is resumed.

Refer to this sample for how to create a custom policy: https://github.com/Azure/azure-sdk-for-js/blob/master/sdk/storage/storage-blob/samples/javascript/customizedClientHeaders.js
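A minimal sketch of the PauseResume policy idea, assuming the v12 (core-http) pipeline contract that the sample above relies on: a factory's `create(nextPolicy, options)` returns an object whose `sendRequest(request)` forwards to `nextPolicy.sendRequest(request)`. `PauseGate` and `createPauseResumePolicyFactory` are hypothetical names, not SDK APIs. While the gate is paused, requests that have not been sent yet wait before entering the rest of the pipeline; requests already on the wire are not interrupted.

```javascript
// Hypothetical promise-based gate: while paused, wait() returns a promise
// that only resolves when resume() is called.
class PauseGate {
  constructor() {
    this.paused = false;
    this.waiters = [];
  }

  pause() {
    this.paused = true;
  }

  resume() {
    this.paused = false;
    const waiters = this.waiters;
    this.waiters = [];
    waiters.forEach((release) => release()); // let every held request continue
  }

  wait() {
    if (!this.paused) return Promise.resolve();
    return new Promise((resolve) => this.waiters.push(resolve));
  }
}

// Hypothetical policy factory, assuming the core-http RequestPolicyFactory
// shape: create(nextPolicy) returns an object with sendRequest(request).
function createPauseResumePolicyFactory(gate) {
  return {
    create(nextPolicy) {
      return {
        async sendRequest(request) {
          await gate.wait(); // hold the request here while paused
          return nextPolicy.sendRequest(request);
        },
      };
    },
  };
}
```

Following the pattern in that sample, the factory would be registered with something like `pipeline.factories.unshift(createPauseResumePolicyFactory(gate))` on a pipeline created by `newPipeline(credential)`, and that pipeline passed to the `BlockBlobClient` constructor. Calling `gate.pause()` and `gate.resume()` would then hold and release the browser upload's remaining block requests. This sketch has not been tested against the SDK itself.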

English

@ljian3377

Would you mind providing a more detailed example/demo of how to pause, resume, and cancel an upload?

The documentation for blockBlobClient.uploadStream (Doc Here) doesn't describe how to do these actions.

Chinese

I'm uploading files to Azure Storage with blockBlobClient.uploadStream. I've figured out how to listen for progress, but after searching for half an hour I still haven't figured out how to pause / resume.

I tried:

```javascript
var readStream = fs.createReadStream(file_path);

// ...some code omitted
```

and then

```javascript
readStream.pause()
```

but found that it doesn't work.

Then I took a look:

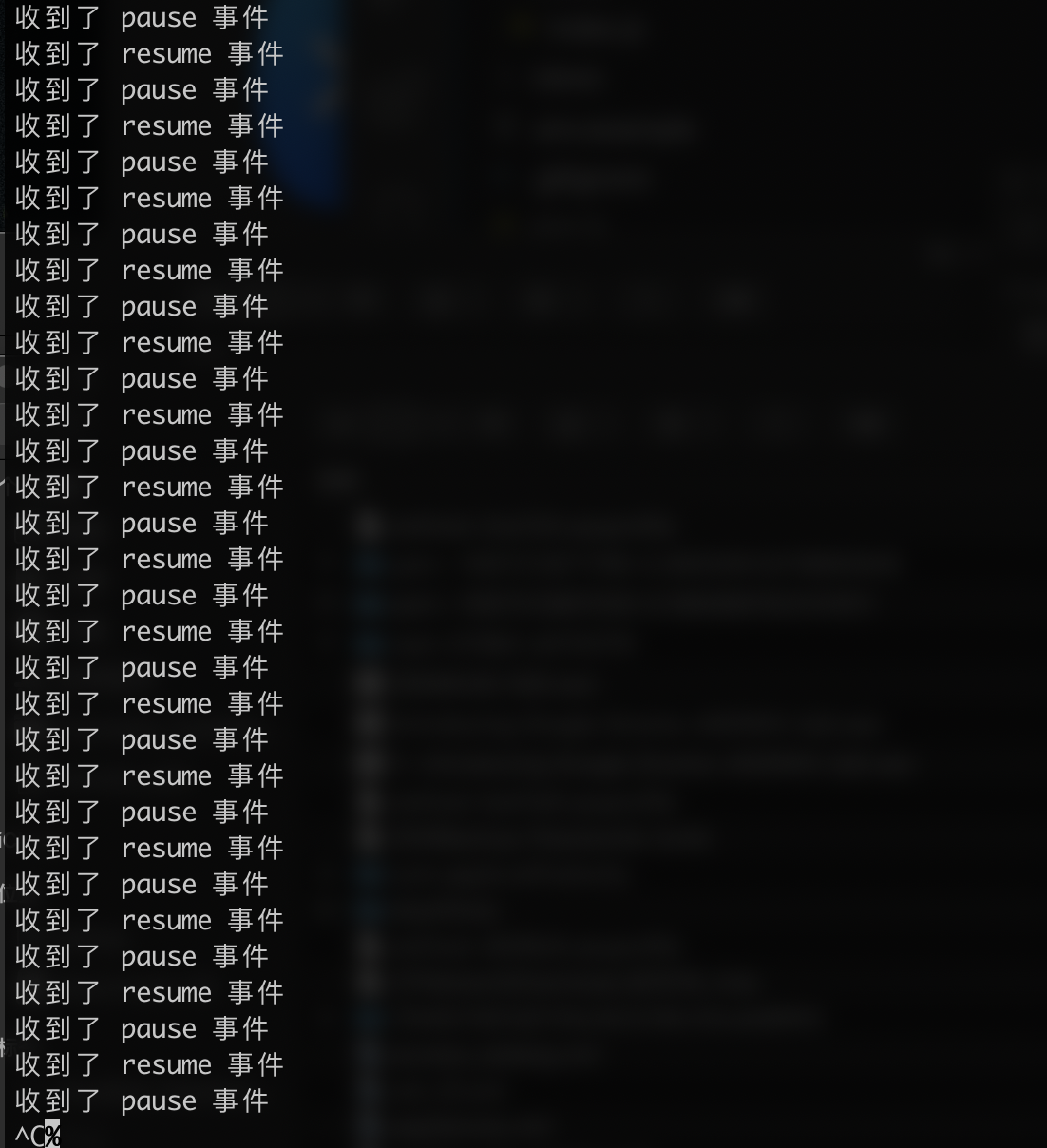

```javascript
readStream.on('pause', () => {
  console.log('Received the pause event');
});

readStream.on('resume', () => {
  console.log('Received the resume event');
});

setTimeout(() => {
  readStream.pause();
  console.log(readStream.isPaused());
}, 5000);
```

I found that it does.

My code looks like this:

```javascript
const ONE_MEGABYTE = 1024 * 1024;
const uploadOptions = {
  bufferSize: 4 * ONE_MEGABYTE,
  maxBuffers: 20
};

var readStream = fs.createReadStream(file_path);

await blockBlobClient.uploadStream(readStream,
  uploadOptions.bufferSize, uploadOptions.maxBuffers, {
    onProgress: (ev) => { }
  });
```

(Copied from the official example, then modified a bit.)

Sorry, we should have tested this before advising you to use uploadStream. The internal handling of parallel uploads interferes with pausing the stream. However, uploadStream with a single concurrency (uploadOptions.maxBuffers = 1) seems to work fine.

Also, could you tell us more about the scenario where you need this pausing and resuming functionality?

@ljian3377

I am building a desktop app that uses the Azure "Speech to Text" API.

The app works like this:

- Transcode the file into a format accepted by Azure (for example, mp4 -> wav)

- Upload to Azure -> Storage Account -> Container

- Get a URL (publicly accessible)

- Send that URL to the Azure Speech to Text REST API to create a transcription job

From the user's perspective:

- Drag and drop a file (.mp4, .wav, .mp3, .mkv, etc.) into the desktop app

- See status changed to "Transcoding"

- See status changed to "Uploading"

- See status changed to "Processing"

- Show successful result

Pause and resume would be a "nice to have" feature during the "Uploading" stage.

Right now I need "Cancel" more than "Pause" and "Resume".

Any help? Thank you!

@1c7

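To cancel, I think you can use the abortSignal option. Refer to these test cases to get started:

https://github.com/Azure/azure-sdk-for-js/blob/ea765db3922dc6ee2aa85a020ce7d4ac2d9c2dcc/sdk/storage/storage-blob/test/aborter.spec.ts#L42

https://github.com/Azure/azure-sdk-for-js/blob/ea765db3922dc6ee2aa85a020ce7d4ac2d9c2dcc/sdk/storage/storage-blob/test/node/highlevel.node.spec.ts#L189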
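A sketch of wiring a Cancel button to the abortSignal option. It assumes a runtime with the global `AbortController` (browsers, Node.js 15+); `startCancellableUpload` is a hypothetical helper, and the `upload` callback is where the SDK call would go, forwarding the signal as `abortSignal`. Rather than matching on a specific error type, it treats any rejection that happens after `cancel()` as a cancellation.

```javascript
// Hypothetical helper: starts an upload and returns a handle for cancelling it.
// `upload` receives an AbortSignal and must return a promise; with the Storage
// SDK it would pass that signal along as the `abortSignal` option.
function startCancellableUpload(upload) {
  const controller = new AbortController(); // global in browsers and Node.js 15+
  const done = upload(controller.signal).then(
    (result) => ({ status: "completed", result }),
    (err) => {
      // Any failure after cancel() is reported as a cancellation;
      // other errors (network, auth, ...) are rethrown to the caller.
      if (controller.signal.aborted) return { status: "cancelled" };
      throw err;
    }
  );
  return { done, cancel: () => controller.abort() };
}
```

For example, `const handle = startCancellableUpload((abortSignal) => blockBlobClient.uploadStream(readStream, bufferSize, maxConcurrency, { abortSignal, onProgress }))`, then call `handle.cancel()` from the Cancel button and await `handle.done` to update the status.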

@ljian3377, @XiaoningLiu Is this still a feature request in the backlog or should the issue be closed with the workarounds mentioned above?

@ramya-rao-a It's a feature request for pausing and resuming. The guidance given is for cancelling.

A potential use case is to (automatically?) pause the upload of a large file when the network drops, then resume it once connectivity is restored, so the upload doesn't have to start over from the beginning.

Or is there something already that supports this use case?

azcopy may be of use, and it already has an npm package.

If we pause the upload stream body for a while within a single REST API call, the request will soon time out, which I guess won't be satisfying.

Another way is to upload the data in parts with several stageBlock calls followed by a commitBlockList call, keeping track of which blocks have already been staged. This is better done in your own code, since our SDKs are designed to be stateless.
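A minimal sketch of that approach for Node.js (it uses `Buffer`), assuming `client` is a `BlockBlobClient`, whose `stageBlock(blockId, body, contentLength)` and `commitBlockList(blocks)` methods return promises. `uploadResumable`, `blockIdFor`, and the `{ nextIndex }` state object are hypothetical. Blocks are staged one at a time, and `state.nextIndex` records how far the upload got, so after a pause or a failed request, calling the function again with the same state continues from the first unstaged block.

```javascript
// Block IDs must be base64 strings, and all IDs in one blob must have the
// same length before encoding, so the block index is zero-padded.
function blockIdFor(index) {
  return Buffer.from(String(index).padStart(6, "0")).toString("base64");
}

// Uploads `data` (a Buffer) in `blockSize` chunks, one stageBlock call at a time.
// `state.nextIndex` is the first block not yet staged; `shouldStop` is checked
// before each block and returning true pauses the upload.
// Resolves to true once committed, or false if it was paused.
async function uploadResumable(client, data, blockSize, state, shouldStop = () => false) {
  const blockCount = Math.ceil(data.length / blockSize);
  while (state.nextIndex < blockCount) {
    if (shouldStop()) return false; // paused: state keeps our position
    const start = state.nextIndex * blockSize;
    const chunk = data.subarray(start, Math.min(start + blockSize, data.length));
    // If this throws, nextIndex is unchanged, so a retry re-stages this block.
    await client.stageBlock(blockIdFor(state.nextIndex), chunk, chunk.length);
    state.nextIndex += 1;
  }
  // Every block is staged: commit them in order to form the final blob.
  const ids = Array.from({ length: blockCount }, (_, i) => blockIdFor(i));
  await client.commitBlockList(ids);
  return true;
}
```

Because the state is a plain object, it could also be saved to disk (for example as JSON) so that an upload can continue after the app restarts, provided the same source data and block size are used.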

Does azcopy solve your issue? We may not address this in the SDK.

Hi, we're sending this friendly reminder because we haven't heard back from you in a while. We need more information about this issue to help address it. Please be sure to give us your input within the next 7 days. If we don't hear back from you within 14 days of this comment the issue will be automatically closed. Thank you!

I will add a pause-and-resume sample using stageBlock.

Thank you @ljian3377, the sample code will be helpful, please post it.

I added a sample here: https://github.com/ljian3377/azure-storage-tools/blob/sample/uploadWithPauseAndResume.ts

The basic idea is to issue multiple stageBlock calls and then one commitBlockList call. To pause, you stop issuing new HTTP requests and wait until the ongoing HTTP requests are done.

It got a bit more complicated because I used a Batch to run stageBlock calls in parallel. We will see if we can improve the sample and get it into the official repo.
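The linked sample is not reproduced here. As a rough sketch of the same idea, assuming a `BlockBlobClient`-like object whose `stageBlock(blockId, body, contentLength)` and `commitBlockList(blockIds)` calls return promises, the hypothetical `PausableBlockUpload` class below keeps a few stageBlock calls in flight at once. `pause()` stops handing out new blocks and resolves once the in-flight calls have settled, and `start()` resumes with the blocks that have not been staged yet. It uses a simple worker pool instead of the Batch helper mentioned above, and relies on `Buffer`, so it targets Node.js.

```javascript
class PausableBlockUpload {
  constructor(client, blockCount, readBlock, concurrency = 4) {
    this.client = client; // assumed to expose stageBlock() and commitBlockList()
    this.blockCount = blockCount;
    this.readBlock = readBlock; // hypothetical: (index) => Buffer for that block
    this.concurrency = concurrency;
    this.pending = Array.from({ length: blockCount }, (_, i) => i); // not yet staged
    this.stopping = false;
    this.running = null;
  }

  blockId(index) {
    // Base64 IDs with equal pre-encoding length, as block blobs require.
    return Buffer.from(String(index).padStart(6, "0")).toString("base64");
  }

  async worker() {
    // Each worker takes the next unstaged block until paused or out of blocks.
    while (!this.stopping && this.pending.length > 0) {
      const index = this.pending.shift();
      try {
        const body = this.readBlock(index);
        await this.client.stageBlock(this.blockId(index), body, body.length);
      } catch (err) {
        this.pending.push(index); // keep the block for the next start()
        this.stopping = true; // stop the other workers from issuing new requests
        throw err;
      }
    }
  }

  async run() {
    const workers = Array.from({ length: this.concurrency }, () => this.worker());
    // Wait for every in-flight request to settle, not just the first failure.
    const results = await Promise.allSettled(workers);
    const failure = results.find((r) => r.status === "rejected");
    if (failure) throw failure.reason;
    if (this.stopping || this.pending.length > 0) return false; // paused
    const ids = Array.from({ length: this.blockCount }, (_, i) => this.blockId(i));
    await this.client.commitBlockList(ids);
    return true; // committed
  }

  // Starts or resumes; resolves to true once committed, false if paused.
  start() {
    if (!this.running) {
      this.stopping = false;
      this.running = this.run().finally(() => { this.running = null; });
    }
    return this.running;
  }

  // Stops issuing new requests; resolves once in-flight requests are done.
  pause() {
    this.stopping = true;
    return this.running || Promise.resolve(false);
  }
}
```

In this sketch, `start()` should only be called again after the promise returned by `pause()` has settled; calling it while the previous run is still draining just returns that run's promise.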
