Aws-sdk-js: Latency increased after updating aws-sdk@2.600.0

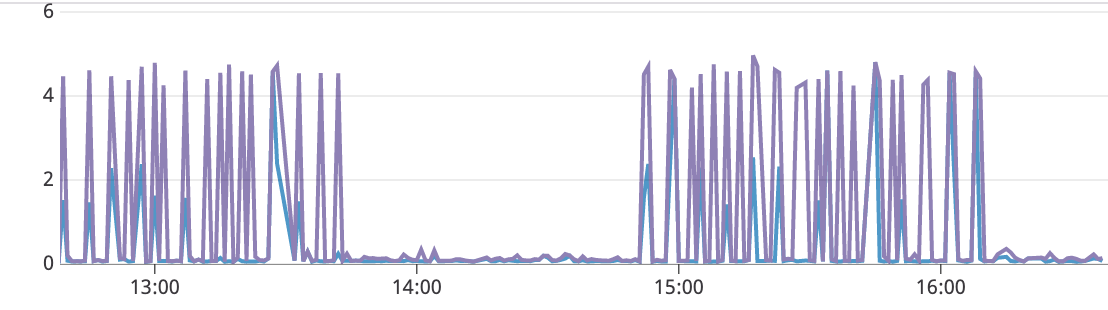

After updating the module to version 2.600.0, we noticed an increase in API latency from 100 ms to 4-5 seconds (95th percentile). After rolling back the update, the latency returned to normal.

We are only using the SDK for S3 requests.

All 10 comments

Hey @wjsc, can you please provide some reproduction steps showing how you got these metrics?

This is a real problem, but it started before v2.600.0. We have a project that was using v2.586.0 and found that SSM and S3 calls (and probably calls to all AWS services) took 4+ seconds to execute. Here are the log statements generated by the AWS SDK v2.586.0:

    [AWS ssm 200 4.583s 0 retries] getParameter({
      Name: '/services/parameter1',
      WithDecryption: true
    })

    [AWS ssm 200 4.388s 0 retries] getParameter({
      Name: '/services/parameter2',
      WithDecryption: true
    })

    [AWS ssm 200 4.551s 0 retries] getParameter({
      Name: '/services/parameter3',
      WithDecryption: true
    })

    [AWS s3 200 4.604s 0 retries] getObject({
      Bucket: 'our-bucket',
      Key: 'our-key'
    })

After downgrading to v2.507.0 (the same version as one of our other projects), we get the times below. The only change we made was the AWS SDK version.

    [AWS ssm 200 0.067s 0 retries] getParameter({
      Name: '/services/parameter1',
      WithDecryption: true
    })

    [AWS ssm 200 0.077s 0 retries] getParameter({
      Name: '/services/parameter2',
      WithDecryption: true
    })

    [AWS ssm 200 0.035s 0 retries] getParameter({
      Name: '/services/parameter3',
      WithDecryption: true
    })

    [AWS s3 200 0.225s 0 retries] getObject({
      Bucket: 'our-bucket',
      Key: 'our-key'
    })

We're running in Kubernetes pods on EC2. This is a big issue, and at some point we'll want to move to newer versions of the SDK.
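To put a number on the difference between the two sets of log lines above, here is a minimal sketch that parses the bracketed header of each line and averages the reported durations. The function names `parseSdkLogLine` and `meanSeconds` are hypothetical helpers written for this illustration, not part of the SDK, and the regular expression assumes the header format shown in the logs above.

```javascript
// Parse the bracketed header of an SDK log line such as
// "[AWS ssm 200 4.583s 0 retries] getParameter({".
// Returns null when the line does not match that shape.
function parseSdkLogLine(line) {
  const match = /^\[AWS (\S+) (\d{3}) ([\d.]+)s (\d+) retries\]\s*(\w+)/.exec(line);
  if (!match) return null;
  return {
    service: match[1],
    status: Number(match[2]),
    seconds: Number(match[3]),
    retries: Number(match[4]),
    operation: match[5],
  };
}

// Average the durations of all lines that parse successfully.
function meanSeconds(lines) {
  const entries = lines.map(parseSdkLogLine).filter(Boolean);
  if (entries.length === 0) return NaN;
  const total = entries.reduce((sum, entry) => sum + entry.seconds, 0);
  return total / entries.length;
}

// Header lines copied from the v2.586.0 logs above.
const slow = [
  "[AWS ssm 200 4.583s 0 retries] getParameter({",
  "[AWS ssm 200 4.388s 0 retries] getParameter({",
  "[AWS ssm 200 4.551s 0 retries] getParameter({",
  "[AWS s3 200 4.604s 0 retries] getObject({",
];

// Header lines copied from the v2.507.0 logs above.
const fast = [
  "[AWS ssm 200 0.067s 0 retries] getParameter({",
  "[AWS ssm 200 0.077s 0 retries] getParameter({",
  "[AWS ssm 200 0.035s 0 retries] getParameter({",
  "[AWS s3 200 0.225s 0 retries] getObject({",
];

console.log("mean with v2.586.0 (s):", meanSeconds(slow).toFixed(2));
console.log("mean with v2.507.0 (s):", meanSeconds(fast).toFixed(2));
```

With these eight samples the averages come out at roughly 4.5 seconds versus roughly 0.1 seconds. Every call reports 0 retries, so the extra time does not show up as retried service requests.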

@ajredniwja I tried to reproduce this bug in an isolated codebase, but I couldn't. It seems to work fine locally.

We're running kubernetes in EKS.

In our case, we rolled back aws-sdk to v2.562.0.

Is this one the same as https://github.com/aws/aws-sdk-js/issues/3024 ?

@lukiano Sure looks like it could be.

This problem is probably not caused by the SDK itself. I have reached out to the EKS service team, since they recently made some changes to how they evaluate credentials. I will update once I hear back from them.

@wjsc and @jeksmith are you using IAM roles for service accounts?

Any update on this? Facing the same exact issue in our EKS clusters.

We were able to download the AWS CLI (v1) and run it directly from our cluster's container. It showed a single request to the metadata endpoint, and it seems it fell back after that. So the CLI added a constant 1 second of latency to every single request. My guess is that aws-sdk-js is somehow doing 3 timeouts (confirmed by the comment in the linked issue).

Additionally, we configured our instances to require the new token-based authentication flow (IMDSv2) for EC2 metadata, like so:

    aws ec2 modify-instance-metadata-options --profile default --http-endpoint enabled --http-tokens required --instance-id i-2834fn

The aws-sdk-js still takes approximately 4 seconds to time out, but now throws a Credentials error instead. This confirms what's already been said in other comments and the linked issue, but I'm adding more context and detail in case it helps.

I'm adding the workarounds we found for anyone else suffering from this:

- Set the metadata timeout to be much lower:

      const AWS = require('aws-sdk');

      AWS.config.credentials = new AWS.EC2MetadataCredentials({
        httpOptions: { timeout: 500 }, // half a second, or whatever you want
        maxRetries: 1
      });

- Downgrade to aws-sdk-js version 2.574.0, which predates the commit that introduced the new signed IMDSv2 metadata flow (ref: https://github.com/aws/aws-sdk-js/commit/4ed556871d347b07e95da4ec5dd0ffe1bcafe87f).

- Use an alternative credentials source that resolves before the SDK attempts to hit the metadata API (hardcoded credentials, environment variables, config files, etc.). The metadata credentials source is checked last by default, so using any other source will work around this problem for now.
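As a rough way to reason about the first workaround above, the following sketch estimates an upper bound on how long a failing metadata lookup could block a request. It assumes each attempt waits for the full timeout, that `maxRetries` counts retries after the first attempt, and that any backoff delay between attempts is ignored. The function `worstCaseCredentialDelayMs` is a hypothetical helper, not an SDK API, and the 1000 ms / 3 retries pair is an illustrative assumption chosen because it would produce the roughly 4 seconds reported in this thread, not a confirmed SDK default.

```javascript
// Upper bound on time spent waiting for a metadata endpoint that never answers.
// Assumptions: every attempt runs into the full timeout, maxRetries counts
// retries after the initial attempt, and backoff between attempts is ignored.
function worstCaseCredentialDelayMs({ timeoutMs, maxRetries }) {
  const attempts = 1 + maxRetries;
  return attempts * timeoutMs;
}

// Values from the workaround above: 500 ms timeout, 1 retry.
const workaroundMs = worstCaseCredentialDelayMs({ timeoutMs: 500, maxRetries: 1 });

// Illustrative values only (an assumption, not confirmed defaults).
const illustrativeMs = worstCaseCredentialDelayMs({ timeoutMs: 1000, maxRetries: 3 });

console.log("workaround upper bound (ms):", workaroundMs);
console.log("illustrative upper bound (ms):", illustrativeMs);
```

Under these assumptions the workaround settings cap the wait at about one second. Lowering the timeout reduces the delay but does not remove it, so the other two workarounds may be preferable where they are an option.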

+1, also having 4-5 second latency for SSM getParameter calls from ECS. Any updates on this?
