Argo: Race conditions when persisting Workflows causing undefined behavior

Users are reporting the following symptoms:

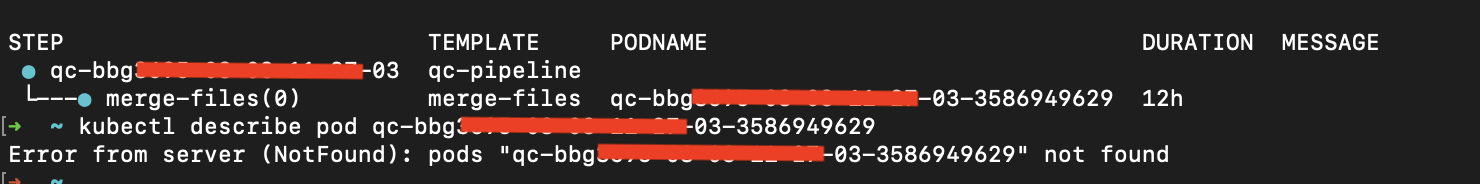

Fabio Rigato: "stuck with deleted pods"

- v2.9.2 workflows can get stuck with deleted pods:

`Error from server (NotFound): pods "..." not found`. Changes #3097 and #3469 in v2.9.3 could fix this.

Prateek Khera: "deadline exceeded"

- v2.9.3 workflows get stuck in progress, logging `level=warning msg="Deadline exceeded" namespace=XXXX workflow=XXX` followed by `level=error msg="error in entry template execution" error="Deadline exceeded" namespace=ci workflow=XXX`

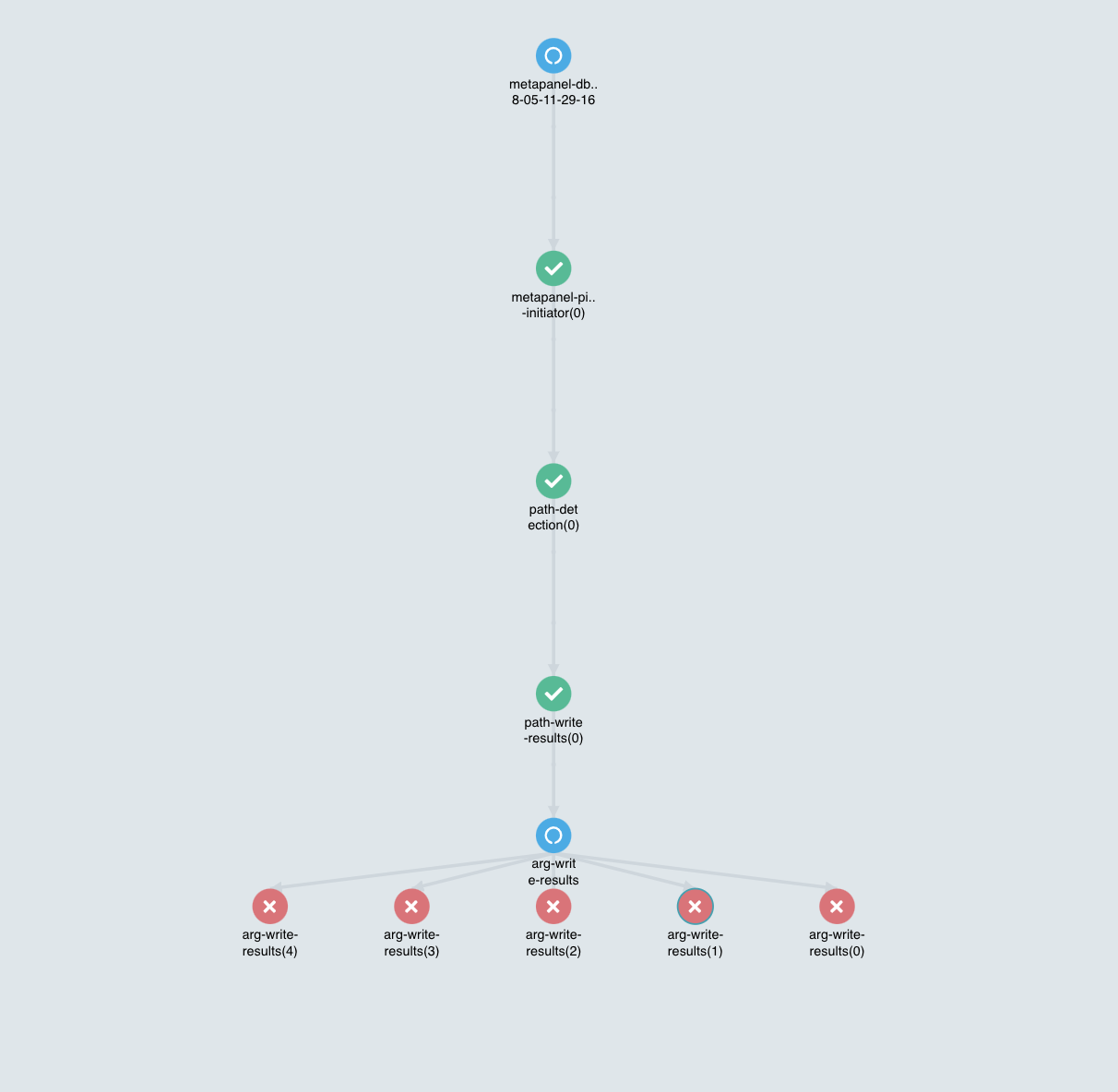

Jonathan Steele: "stuck workflow parent task"

I upgraded to 2.9.4 and am now experiencing "stuck" workflows where all of the tasks are complete but the parent task is still running.


Prateek's workflow:

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Workflow
metadata:
  generateName: ci-
  namespace: ci
spec:
  entrypoint: ci
  onExit: final-steps
  arguments:
    parameters:
    - name: branch
      value: "ci" #default value
    - name: build_develop
      value: "false" #default value
    - name: build_release
      value: "false" #default value
    - name: image_tag
      value: "na" #default value
    - name: refid
      value: "na" #default value
    - name: latest-commit
      value: "na" #default value
    - name: change_type
      value: "na" #default value
    - name: user_slug
      value: "na" #default value
  imagePullSecrets:
  - name: XXX
  templates:
  - name: ci
    dag:
      tasks:
      - name: ci-inprogress
        template: build-status
        arguments:
          parameters:
          - name: status
            value: INPROGRESS
      - name: ci-make-lint
        template: ci-make-lint-template
        dependencies: [ci-inprogress]
      - name: ci-make-unit-test
        template: ci-make-unit-test-template
        dependencies: [ci-make-lint]
      - name: ci-make-integration-test
        template: ci-make-integration-test-template
        dependencies: [ci-make-lint]
      - name: ci-build-image
        template: docker-build-template
        when: "{{workflow.parameters.build_develop}} == true || {{workflow.parameters.build_release}} == true"
        dependencies: [ci-make-integration-test,ci-make-unit-test]
  - name: build-status
    inputs:
      parameters:
      - name: status
    metadata:
      labels:
        app: ci
        commit: '{{workflow.parameters.latest-commit}}'
        stage: '{{workflow.name}}-notification-{{inputs.parameters.status}}'
        workflow: '{{workflow.name}}'
    container:
      name: build-status
      image: XXX
      imagePullPolicy: Always
      command: ["python3"]
      args: ["ops/k8s/dev/ci/status.py"]
      env:
      - name: WORKFLOW
        value: '{{workflow.name}}'
      - name: LATEST_COMMIT
        value: '{{workflow.parameters.latest-commit}}'
      - name: STATUS
        value: '{{inputs.parameters.status}}'
      envFrom:
      - secretRef:
          name: dev-secret-ci
      resources:
        requests:
          cpu: '1'
          memory: 1Gi
  - name: ci-make-lint-template
    inputs:
      artifacts:
      - name: argo-source
        path: /src
        git:
          repo: XXX
          revision: "{{workflow.parameters.latest-commit}}"
          usernameSecret:
            name: dev-secret-ci
            key: GIT_USERNAME
          passwordSecret:
            name: dev-secret-ci
            key: GIT_PASSWORD
    metadata:
      labels:
        app: ci
        commit: '{{workflow.parameters.latest-commit}}'
        stage: '{{workflow.name}}-lint-test'
        workflow: '{{workflow.name}}'
    container:
      image: XXX
      command: ["bash", "-c"]
      args: ["pip3 install -r src/test_requirements.txt --quiet && make lint"]
      workingDir: /src
      resources:
        requests:
          cpu: '1'
          memory: 1Gi
  - name: ci-make-unit-test-template
    inputs:
      artifacts:
      - name: argo-source
        path: /src
        git:
          repo: XXX
          revision: "{{workflow.parameters.latest-commit}}"
          usernameSecret:
            name: dev-secret-ci
            key: GIT_USERNAME
          passwordSecret:
            name: dev-secret-ci
            key: GIT_PASSWORD
    metadata:
      labels:
        app: ci
        commit: '{{workflow.parameters.latest-commit}}'
        stage: '{{workflow.name}}-unit-test'
        workflow: '{{workflow.name}}'
    container:
      image: XXXX
      command: ["bash", "-c"]
      args: ["pip3 install -r src/test_requirements.txt --quiet && make unit_test"]
      workingDir: /src
      resources:
        requests:
          cpu: '8'
          memory: 25Gi
  - name: ci-make-integration-test-template
    inputs:
      artifacts:
      - name: argo-source
        path: /src
        git:
          repo: XXX
          revision: "{{workflow.parameters.latest-commit}}"
          usernameSecret:
            name: dev-secret-ci
            key: GIT_USERNAME
          passwordSecret:
            name: dev-secret-ci
            key: GIT_PASSWORD
    metadata:
      labels:
        app: ci
        commit: '{{workflow.parameters.latest-commit}}'
        stage: '{{workflow.name}}-integration-test'
        workflow: '{{workflow.name}}'
    container:
      image: XXXX
      command: ["bash", "-c"]
      args: ["pip3 install -r src/test_requirements.txt --quiet && make integration_test"]
      workingDir: /src
      resources:
        requests:
          cpu: '8'
          memory: 25Gi
  - name: docker-build-template
    inputs:
      artifacts:
      - name: argo-source
        path: /src
        git:
          repo: XXX
          revision: "{{workflow.parameters.latest-commit}}"
          usernameSecret:
            name: dev-secret-ci
            key: GIT_USERNAME
          passwordSecret:
            name: dev-secret-ci
            key: GIT_PASSWORD
    retryStrategy:
      limit: 2
    container:
      image: gcr.io/kaniko-project/executor:debug
      args: ["XX"]
      workingDir: /src
      resources:
        requests:
          cpu: '2'
          memory: 4Gi
      volumeMounts:
      - name: kaniko-secret
        mountPath: /kaniko/.docker
    volumes:
    - name: kaniko-secret
      secret:
        secretName: XX
        items:
        - key: ".dockerconfigjson"
          path: config.json
  - name: cd-trigger-template
    metadata:
      labels:
        app: ci
        commit: '{{workflow.parameters.latest-commit}}'
        stage: '{{workflow.name}}-cd-argo-trigger'
        workflow: '{{workflow.name}}'
    container:
      image: XXX
      command: ["bash", "-c"]
      args: ["ops/scripts/cd-trigger.sh"]
      resources:
        requests:
          cpu: '1'
          memory: 1Gi
      env:
      - name: BRANCH
        value: "{{workflow.parameters.branch}}"
      - name: REFID
        value: "{{workflow.parameters.refid}}"
      - name: LATEST_COMMIT
        value: "{{workflow.parameters.latest-commit}}"
      - name: CHANGE_TYPE
        value: "{{workflow.parameters.change_type}}"
      - name: USER_SLUG
        value: "{{workflow.parameters.user_slug}}"
      envFrom:
      - secretRef:
          name: dev-secret-ci
```
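To make the shape of the `ci` DAG in Prateek's workflow concrete, here is a minimal Python sketch (3.9+ for the standard library's `graphlib`). It transcribes the task dependencies by hand, lists one valid start order, and checks the condition Jonathan Steele describes for v2.9.4: once every task has reached a final phase, the parent DAG node should finish too. The set of final phases and the helper names `execution_order` and `dag_should_be_done` are simplifying assumptions for illustration, not Argo's controller logic.

```python
from graphlib import TopologicalSorter

# Task -> set of tasks it depends on, transcribed by hand from the `ci` DAG.
CI_DAG = {
    "ci-inprogress": set(),
    "ci-make-lint": {"ci-inprogress"},
    "ci-make-unit-test": {"ci-make-lint"},
    "ci-make-integration-test": {"ci-make-lint"},
    "ci-build-image": {"ci-make-integration-test", "ci-make-unit-test"},
}

# Node phases this sketch treats as final (a simplified assumption).
TERMINAL_PHASES = {"Succeeded", "Failed", "Error", "Skipped"}


def execution_order(dag):
    """Return one valid order in which the tasks could start."""
    return list(TopologicalSorter(dag).static_order())


def dag_should_be_done(dag, phases):
    """True when every task in the DAG has reached a final phase."""
    return all(phases.get(task) in TERMINAL_PHASES for task in dag)


if __name__ == "__main__":
    print(execution_order(CI_DAG))
    # With the default parameters, the `when` on ci-build-image is false,
    # so that task ends up Skipped rather than run.
    phases = {task: "Succeeded" for task in CI_DAG}
    phases["ci-build-image"] = "Skipped"
    print(dag_should_be_done(CI_DAG, phases))  # True
```

With the defaults, `build_develop` and `build_release` are both `"false"`, so the `when` expression reduces to `false == true || false == true` and `ci-build-image` is skipped. A skipped task still counts as finished, so a parent that stays Running in that state is exactly the "stuck" symptom reported above.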

Can we ask this user for a "live" Workflow with Workflow.Status included?

I don't think the introduction of `SignificantPodChange` caused @Prateek khera's issue; I think his issue existed before v2.9.

Hi Guys,

I just would like to add my comments on this issue.

With a load of ~450 simultaneous WFs on Argo v2.9.2, ~80 WFs were suffering from the same problem.

In particular, as you can see in the attached screenshot, the WF is stuck: it looks like it is still running, but the "running pod" no longer exists.

I went through both the pod logs and the controller log, and both are clean: no errors and no panics.

From the controller's log it is possible to follow the pod status updates up to the "Running" state (as in the picture).

The pod logs (both main and wait) look fine as well: the execution completed successfully (I can find the expected results in the GCS bucket). Happy to share them with you in a private channel if you need.

Last night I updated to v2.9.4 (the latest release), on which I had previously hit #3655 (I just commented out the `maxDuration` key because it doesn't work properly in v2.9.4), ran around 300 WFs, and the cluster looks stable.

Happy to help investigate this issue further; I'm available for a chat or to provide you with more details.

Thanks, guys, for all your effort in fixing these issues!

Cheers,

Fabio

Hi @simster7,

that's an update. I'm facing the same issue in the v2.9.4 but this time is stuck on "Pending" status from 13 hours. I sent you on slack the "Live" yaml output and a screenshot of the UI (maybe is useful for debugging)

I suspended and resume the WF manually (from the UI) in order to continue the execution of the WF.

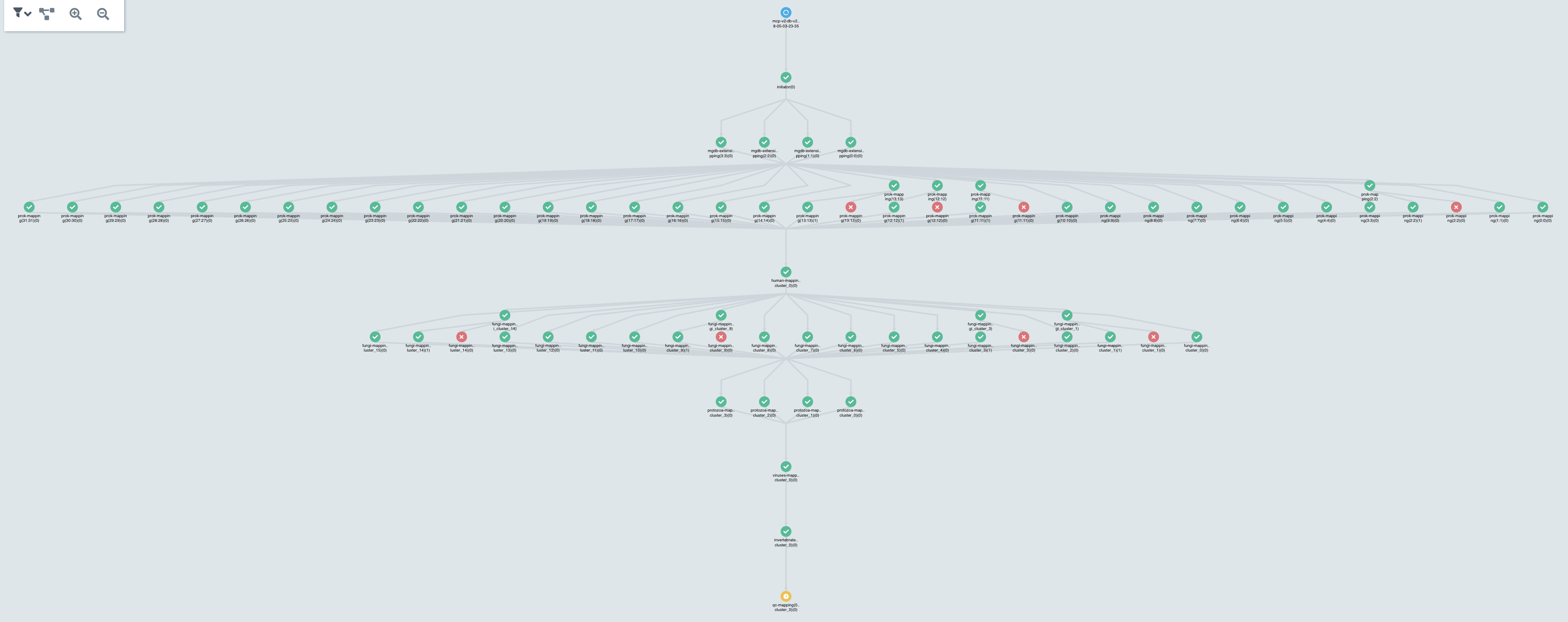

I also had many samples stuck on "Running" after all retry attempts, in this case the WF should be updated to status "Failed", this is the screenshot and I sent you @simster7 on slack the "Live" yaml file.

I'm really hoping to find a stable version soon if not we have to rollback to v2.2.1.

Cheers,

Fabio

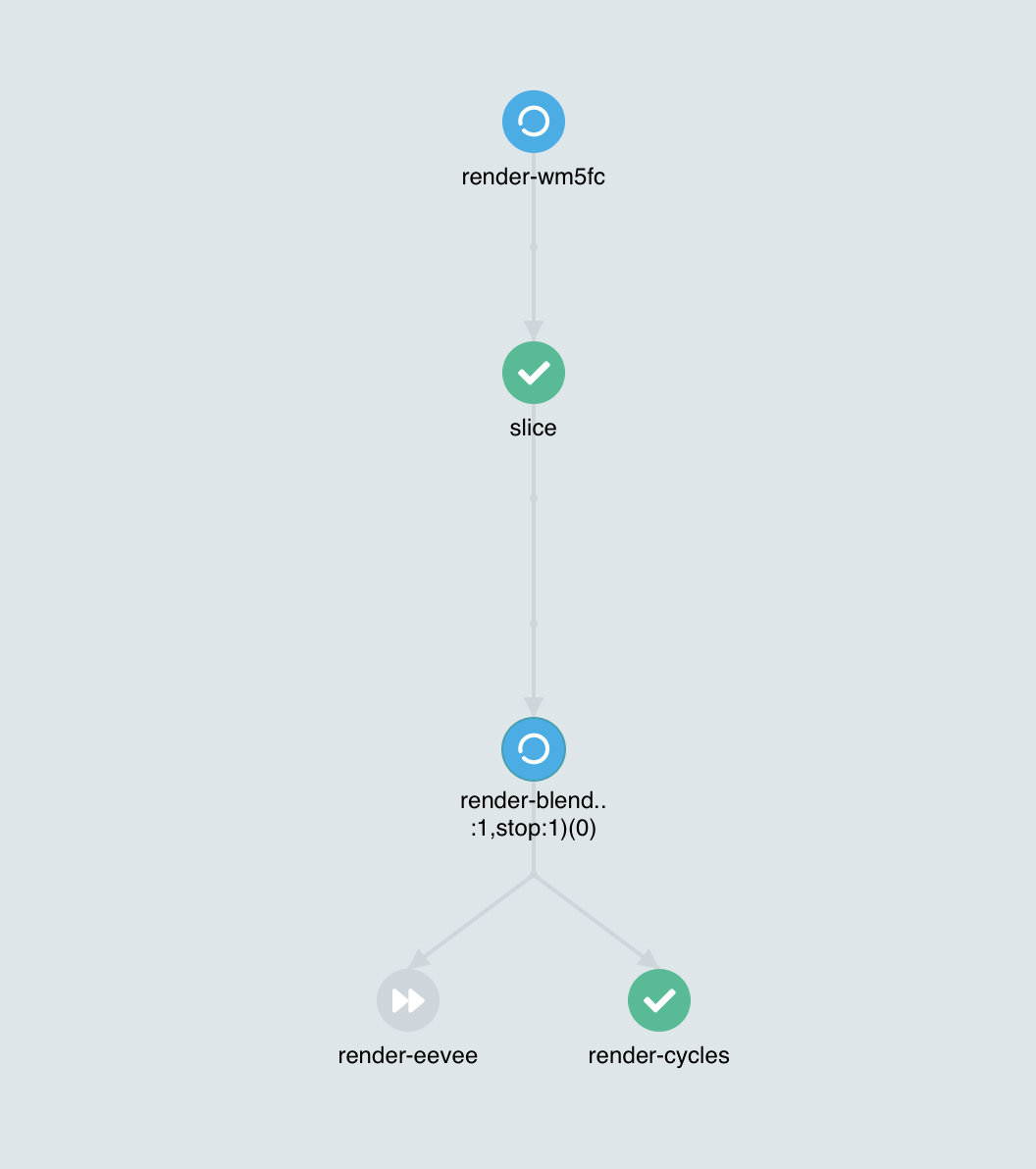

I have been having similar issues with 2.9.

- Pods that are Running in Argo but Terminated/Terminating in Kubernetes.

- Pods that do not exist in Kubernetes but are Running in Argo.

I just stumbled upon something similar to the screenshot above in 2.9.4: a pod that has completed, but the Workflow and Retry are still running.
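All of these symptoms come down to the Workflow status and the cluster disagreeing about a pod. A minimal Python sketch of that cross-check follows. It assumes you have already extracted node phases from the Workflow status (for example via `kubectl get wf <NAME> -o yaml`) and pod phases from the cluster, and that both are keyed by pod name; that keying, the function name `find_inconsistencies`, and the pod names are hypothetical simplifications.

```python
def find_inconsistencies(node_phases, pod_phases):
    """Flag pods the Workflow still treats as active but the cluster does not.

    node_phases: {pod_name: phase recorded in the Workflow status}
    pod_phases:  {pod_name: phase reported by Kubernetes}
    Returns a list of (pod_name, problem) tuples, sorted by pod name.
    """
    problems = []
    for pod, node_phase in sorted(node_phases.items()):
        if node_phase not in ("Pending", "Running"):
            continue  # the Workflow already considers this node finished
        pod_phase = pod_phases.get(pod)
        if pod_phase is None:
            problems.append((pod, "pod no longer exists"))
        elif pod_phase in ("Succeeded", "Failed"):
            problems.append((pod, f"pod already {pod_phase}"))
    return problems


if __name__ == "__main__":
    # Hypothetical pod names and phases, for illustration only.
    nodes = {"ci-abc-1": "Running", "ci-abc-2": "Running", "ci-abc-3": "Succeeded"}
    pods = {"ci-abc-2": "Succeeded", "ci-abc-3": "Succeeded"}
    for pod, problem in find_inconsistencies(nodes, pods):
        print(pod, problem)
```

Note that "Terminating" is not a pod phase in Kubernetes: a pod being deleted keeps reporting its phase and gains a deletion timestamp. This sketch therefore only catches pods that are missing or already finished, not ones mid-deletion.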

I've spent a good amount of time today working on this issue and have a fair idea of what the cause is. @salanki, if you could send me the Workflow from that screenshot (`kubectl get wf <NAME> -o yaml`), that would be appreciated.

Thanks for the detailed reports, @fabio-rigato.

For everyone affected by this issue: I am making test builds with possible fixes. If you're interested in helping us test to quickly fix this issue, please reach out to me on the Argo Slack @simon. Thanks!

A fix is available in v2.9.5.

Hey guys (@alexec @simster7),

I'm facing the same issue in v2.9.5

I have already shared the YAML and screenshots with @simster7 on Slack.

All the best.

How can I reopen this issue?

Should I just create a new one?

Asked @fabio-rigato to confirm whether lines containing `the object has been modified; please apply your changes to the latest version and try again` (conflict errors) are present in his logs. If not, this issue can be closed again and a new issue should be opened.
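That conflict message is how the Kubernetes API server rejects an update based on a stale `resourceVersion` (HTTP 409 Conflict). This optimistic concurrency check stops one writer from silently overwriting another writer's update to the same Workflow. The toy Python model below illustrates only that general mechanism; `InMemoryStore`, `ConflictError`, and `update_with_retry` are hypothetical names, and this is not Argo's controller code.

```python
import copy


class ConflictError(Exception):
    """Raised when an update is based on a stale resourceVersion."""


class InMemoryStore:
    """Toy stand-in for an API server that versions every object."""

    def __init__(self):
        self._objects = {}

    def create(self, name, obj):
        self._objects[name] = {"resourceVersion": 1, "data": copy.deepcopy(obj)}

    def get(self, name):
        stored = self._objects[name]
        return stored["resourceVersion"], copy.deepcopy(stored["data"])

    def update(self, name, resource_version, obj):
        stored = self._objects[name]
        if stored["resourceVersion"] != resource_version:
            # Same wording as the tail of the API server's 409 Conflict message.
            raise ConflictError(
                "the object has been modified; please apply your changes "
                "to the latest version and try again"
            )
        stored["resourceVersion"] += 1
        stored["data"] = copy.deepcopy(obj)
        return stored["resourceVersion"]


def update_with_retry(store, name, mutate, attempts=5):
    """Re-read the latest object and re-apply `mutate` on each conflict."""
    for _ in range(attempts):
        version, obj = store.get(name)
        mutate(obj)
        try:
            return store.update(name, version, obj)
        except ConflictError:
            continue  # another writer got there first; retry on fresh state
    raise ConflictError(f"gave up after {attempts} attempts")


if __name__ == "__main__":
    store = InMemoryStore()
    store.create("ci-abc", {"phase": "Running"})
    # Two writers read the same version of the Workflow...
    v1, wf_a = store.get("ci-abc")
    v2, wf_b = store.get("ci-abc")
    wf_a["phase"] = "Succeeded"
    store.update("ci-abc", v1, wf_a)  # the first write wins
    wf_b["phase"] = "Running"
    try:
        store.update("ci-abc", v2, wf_b)  # the stale write is rejected
    except ConflictError as err:
        print("conflict:", err)
```

The design point is that a retry re-reads the object and re-applies the change to the fresh copy instead of resubmitting the stale one; otherwise the rejected "Running" write above could clobber the newer "Succeeded" state. In Go controllers this loop is commonly written with `retry.RetryOnConflict` from `k8s.io/client-go/util/retry`.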

Talked with @fabio-rigato and identified this as a separate issue. Asked him to open a different issue to track it.

Thanks @simster7,

I created a new issue (#3857)

Cheers,

Fabio