Argo: Retry Strategy doesn't work in GKE preemptible node (pod deleted)

Checklist:

- [x] I've included the version.

- [ ] I've included reproduction steps.

- [ ] I've included the workflow YAML.

- [x] I've included the logs.

What happened:

I configured the retry strategy as follows

retryStrategy:
  limit: 4
  retryPolicy: "Always"
  backoff:
    duration: "12s"
    factor: 5
    maxDuration: "200m"

and ran the Argo workflow on GKE preemptible nodes.

When GKE reclaimed the resources by deleting the nodes, the retry strategy did not work.

I received a red warning /!\ and no retry was attempted.
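For reference, here is a rough sketch of the wait schedule these backoff settings imply. It assumes plain exponential backoff, where the wait before retry n is `duration * factor**(n-1)`; that formula and the helper name `backoff_schedule` are assumptions for illustration, not taken from the Argo source.

```python
from datetime import timedelta


def backoff_schedule(duration_s, factor, limit):
    """Wait before each retry, ASSUMING wait_n = duration * factor**(n-1)."""
    return [timedelta(seconds=duration_s * factor ** n) for n in range(limit)]


# Values from the retryStrategy in this issue.
waits = backoff_schedule(duration_s=12, factor=5, limit=4)
total = sum(waits, timedelta())
max_duration = timedelta(minutes=200)

for attempt, wait in enumerate(waits, start=1):
    print(f"retry {attempt}: wait {wait}")
print(f"total wait: {total}, within maxDuration: {total < max_duration}")
```

Under that assumption, the four waits together stay well below the configured maxDuration, so the limit should not be reached by backoff alone.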

What you expected to happen:

I expected the retry strategy to work properly because I also set retryPolicy: "Always".

How to reproduce it (as minimally and precisely as possible):

Create a workflow with the following retryStrategy and run it on a preemptible node in GKE (GCP):

retryStrategy:
  limit: 4
  retryPolicy: "Always"
  backoff:
    duration: "12s"
    factor: 5
    maxDuration: "200m"
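Since the full workflow YAML was not posted, the following is only a minimal sketch of a manifest that carries this retryStrategy. The template name, image, sleep command, and `generateName` prefix are hypothetical placeholders, and the node selector assumes the standard GKE preemptible-node label. The script prints the manifest as JSON, which `kubectl create -f` accepts.

```python
import json


def build_workflow():
    """Build a minimal Workflow manifest; names and image are hypothetical."""
    retry_strategy = {
        "limit": 4,
        "retryPolicy": "Always",
        "backoff": {"duration": "12s", "factor": 5, "maxDuration": "200m"},
    }
    template = {
        "name": "sleep",  # hypothetical template name
        "retryStrategy": retry_strategy,
        # Assumes the GKE label that marks preemptible nodes.
        "nodeSelector": {"cloud.google.com/gke-preemptible": "true"},
        # Long-running placeholder step so the node can be preempted mid-run.
        "container": {"image": "alpine:3.11", "command": ["sleep", "600"]},
    }
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Workflow",
        "metadata": {"generateName": "retry-preempt-"},  # hypothetical prefix
        "spec": {"entrypoint": "sleep", "templates": [template]},
    }


print(json.dumps(build_workflow(), indent=2))
```

Saving the output to a file (say `wf.json`, a made-up name) and running `kubectl create -f wf.json` should submit it; `create` is used rather than `apply` because the manifest relies on `generateName`.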

Anything else we need to know?:

Environment:

GKE (GCP)

- Argo version: v2.7.6

$ argo version
argo: v2.7.6+70facdb.dirty
  BuildDate: 2020-04-28T17:09:06Z
  GitCommit: 70facdb67207dbe115a9029e365f8e974e6156bc
  GitTreeState: dirty
  GitTag: v2.7.6
  GoVersion: go1.13.4
  Compiler: gc
  Platform: darwin/amd64

- Kubernetes version :

$ kubectl version -o yaml
clientVersion:
  buildDate: "2020-02-06T03:31:35Z"
  compiler: gc
  gitCommit: f5757a1dee5a89cc5e29cd7159076648bf21a02b
  gitTreeState: clean
  gitVersion: v1.14.10-dispatcher
  goVersion: go1.12.12b4
  major: "1"
  minor: 14+
  platform: darwin/amd64
serverVersion:
  buildDate: "2020-02-21T18:01:40Z"
  compiler: gc
  gitCommit: 145f9e21a4515947d6fb10819e5a336aff1b6959
  gitTreeState: clean
  gitVersion: v1.14.10-gke.27
  goVersion: go1.12.12b4
  major: "1"
  minor: 14+
  platform: linux/amd64

Other debugging information (if applicable):

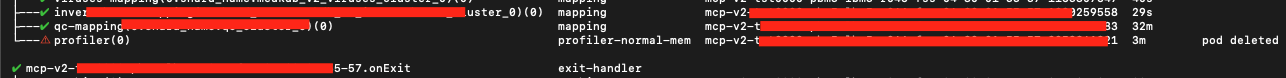

- workflow result:

Message from the maintainers:

If you are impacted by this bug please add a 👍 reaction to this issue! We often sort issues this way to know what to prioritize.

All 10 comments

This is an update

After digging through the logs with @sarabala1979, we concluded that there is probably an issue with how maxDuration is handled in the retryStrategy.

As you can see in my retryStrategy configuration, maxDuration is "200m", yet the pod had been running for only about 3m before it was reclaimed by GKE.

We analysed the controller's logs, and these are the 3 log entries written after the pod deletion:

1) time="2020-04-30T02:47:47Z" level=info msg="node &NodeStatus{ID:xxxxxxxxxxxxx-xxxxxxxxxxxx,Name:xxxxxxxxxxxx[8].profiler,DisplayName:profiler,Type:Retry,TemplateName:profiler-normal-mem,TemplateRef:nil,Phase:Running,BoundaryID:xxxxxxxxxxxx,Message:,StartedAt:2020-04-30 02:44:30 +0000 UTC,FinishedAt:0001-01-01 00:00:00 +0000 UTC,PodIP:,Daemoned:nil,Inputs:nil,Outputs:nil,Children:[xxxxxxxxxxxx],OutboundNodes:[],StoredTemplateID:,WorkflowTemplateName:,TemplateScope:,ResourcesDuration:ResourcesDuration{},} phase Running -> Error" namespace=default workflow=xxxxxxxxxxxx

2) time="2020-04-30T02:47:47Z" level=info msg="node &NodeStatus{ID:xxxxxxxxxxxx,Name:xxxxxxxxxxxx[8].profiler,DisplayName:profiler,Type:Retry,TemplateName:profiler-normal-mem,TemplateRef:nil,Phase:Error,BoundaryID:xxxxxxxxxxxx,Message:,StartedAt:2020-04-30 02:44:30 +0000 UTC,FinishedAt:0001-01-01 00:00:00 +0000 UTC,PodIP:,Daemoned:nil,Inputs:nil,Outputs:nil,Children:[xxxxxxxxxxxx],OutboundNodes:[],StoredTemplateID:,WorkflowTemplateName:,TemplateScope:,ResourcesDuration:ResourcesDuration{},} message: Max duration limit exceeded" namespace=default workflow=xxxxxxxxxxxx

3) time="2020-04-30T02:47:47Z" level=info msg="node &NodeStatus{ID:xxxxxxxxxxxx,Name:xxxxxxxxxxxx[8].profiler,DisplayName:profiler,Type:Retry,TemplateName:profiler-normal-mem,TemplateRef:nil,Phase:Error,BoundaryID:xxxxxxxxxxxx,Message:Max duration limit exceeded,StartedAt:2020-04-30 02:44:30 +0000 UTC,FinishedAt:2020-04-30 02:47:47.59984196 +0000 UTC,PodIP:,Daemoned:nil,Inputs:nil,Outputs:nil,Children:[xxxxxxxxxxxx],OutboundNodes:[],StoredTemplateID:,WorkflowTemplateName:,TemplateScope:,ResourcesDuration:ResourcesDuration{},} finished: 2020-04-30 02:47:47.59984196 +0000 UTC" namespace=default workflow=xxxxxxxxxxxx

It's weird that there is a "Max duration limit exceeded" message even though the pod had been running for only about 3m.
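As a quick sanity check, the elapsed time can be computed from the StartedAt and FinishedAt values in the third log entry and compared with the configured maxDuration. This is only arithmetic on the logged timestamps (fractional seconds dropped); it says nothing about how the controller itself evaluates the limit.

```python
from datetime import datetime, timedelta

FMT = "%Y-%m-%d %H:%M:%S"

# Timestamps copied from the third log entry; fractional seconds dropped.
started_at = datetime.strptime("2020-04-30 02:44:30", FMT)
finished_at = datetime.strptime("2020-04-30 02:47:47", FMT)

# maxDuration: "200m" from the retryStrategy.
max_duration = timedelta(minutes=200)

elapsed = finished_at - started_at
print(f"elapsed: {elapsed}")
print(f"exceeds maxDuration: {elapsed > max_duration}")
```

The elapsed time is a little over three minutes, nowhere near 200 minutes, which is why the message looks wrong.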

By the way, thanks @sarabala1979 for your time and for your effort in deep debugging the issue.

@simster7 can you own this issue?

@fabio-rigato Do you still have access to the logs that caused this? With the logs provided it's hard to diagnose the max duration issue.

Really helpful would be:

- The full Workflow object after it fails:

kubectl get wf <NAME> -o yaml

- Logs for the controller when the operation takes place:

kubectl logs deploy/workflow-controller

I see that you redacted some data in your logs. If you don't feel comfortable posting these on GitHub but are comfortable sharing them privately, feel free to message them to me on Slack: @simon

I have the same problem on AWS EKS.

A good way to reproduce it is to delete the pod while the workflow is running.

Hi @simster7,

thanks for reaching out.

I still have access to the controller log, but unfortunately, when I run kubectl get wf <NAME> -o yaml I get "pod not found", probably because the node was preempted by GCP?

But I have the whole YAML in MySQL and can share it with you if you need it.

Please let me know =)

with all the best,

Fabio

Hi @fabio-rigato, I spent some time debugging today. If you could share the yaml with me it would be very helpful.

Another note to you and @raanand-home: What is the message that gets tagged on when the Node gets preempted? Is it always pod deleted?

Hi @simster7,

thanks for your effort in debugging the issue.

I shared with you in Slack more information about the workflow.

Happy to have a chat if you need more details.

Hope you have an amazing day, guys.

Cheers,

Fabio

Has this resolved the issue?

I still see this happening on the latest version when used in Kubeflow

Would anyone have any information on whether argo will retry tasks based on retryStrategy that are interrupted by GCE preemptible / EC2 spot nodes shutting down?

If I _delete_ the pod manually, argo seems not to retry the task — but that's an imperfect replication of a node shutting down.

I can confirm it retries with version 2.11.6 when the error message is "pod deleted". You need to have backoff configured. The only issue I found is "Timeout: request did not complete within requested timeout", which happens when Argo times out while waiting to create a pod or during another Kubernetes API operation, but that's a separate issue that I think is not related to preemptible instances. In that case the workflow fails without a retry.
