Aiohttp: memory leak when doing requests against AWS

Long story short

While investigating a leak in botocore (https://github.com/boto/botocore/issues/1464), I decided to check whether it also affected aiobotocore. I found that even the get_object case was leaking in aiobotocore, so I tried the underlying aiohttp GET request directly and found that it triggers a leak in aiohttp itself.

Expected behaviour

no leak

Actual behaviour

leaking ~800kB over 300 requests

Steps to reproduce

update the below script with your credentials and run like so:

PYTHONMALLOC=malloc python3 `which mprof` run --interval=1 /tmp/test_leak.py -test aiohttp_test

script: https://gist.github.com/thehesiod/dc04230a5cdac70f25905d3a1cad71ce

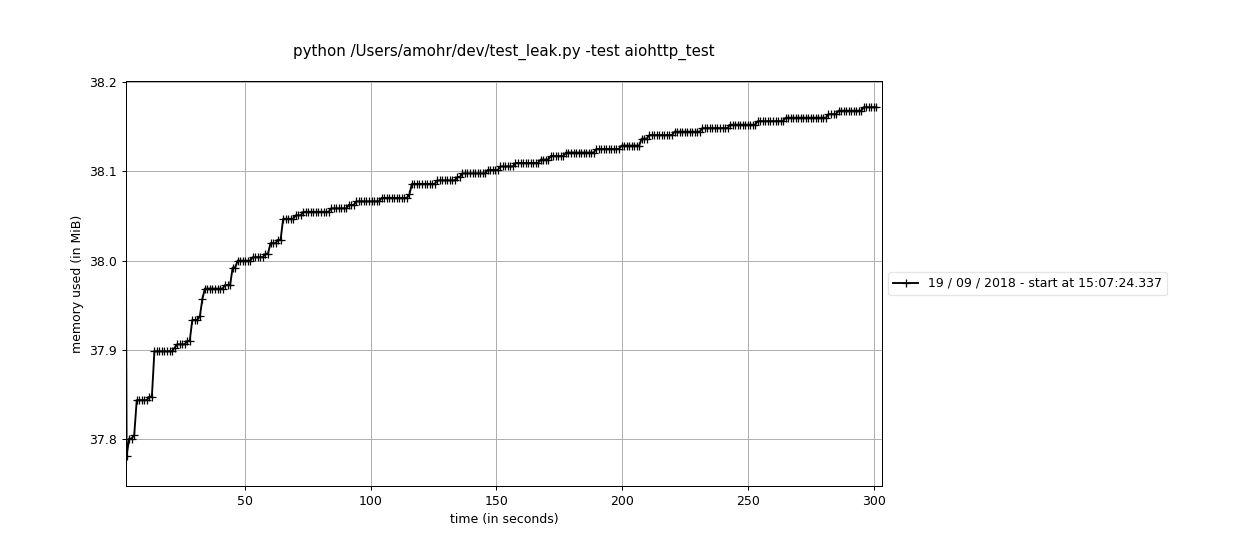

you should get a memory usage graph like so:

Your environment

bash-4.2# /valgrind/bin/python3 -m pip freeze

aiobotocore==0.8.0

aiohttp==3.1.3

APScheduler==3.3.1

async-timeout==2.0.1

asynctest==0.12.0

attrs==18.1.0

aws-xray-sdk==1.0

botocore==1.10.12

cachetools==2.1.0

certifi==2018.4.16

chardet==3.0.4

cycler==0.10.0

datadog==0.20.0

decorator==4.3.0

dill==0.2.7.1

docutils==0.14

editdistance==0.3.1

future==0.16.0

gapic-google-cloud-pubsub-v1==0.15.4

google-api-python-client==1.6.7

google-auth==1.4.1

google-auth-httplib2==0.0.3

google-cloud-core==0.25.0

google-cloud-pubsub==0.26.0

google-gax==0.15.16

googleapis-common-protos==1.5.3

graphviz==0.8.3

grpc-google-iam-v1==0.11.4

grpcio==1.12.0

httplib2==0.11.3

idna==2.6

idna-ssl==1.0.1

iso8601==0.1.12

jmespath==0.9.3

jsonpickle==0.9.6

kiwisolver==1.0.1

matplotlib==2.2.2

memory-profiler==0.52.0

multidict==4.3.1

nameparser==0.5.6

numpy==1.14.3

oauth2client==4.1.2

objgraph==3.4.0

packaging==17.1

ply==3.8

proto-google-cloud-pubsub-v1==0.15.4

protobuf==3.5.2.post1

psutil==5.4.5

pyasn1==0.4.2

pyasn1-modules==0.2.1

PyGithub==1.39

PyJWT==1.6.1

pyparsing==2.2.0

python-dateutil==2.7.3

pytz==2018.4

requests==2.18.4

rsa==3.4.2

setproctitle==1.1.10

simplejson==3.15.0

six==1.11.0

tzlocal==1.5.1

uritemplate==3.0.0

urllib3==1.22

wrapt==1.10.11

yarl==1.2.4

All 32 comments

Could you run with https://docs.python.org/3/library/tracemalloc.html enabled?

What objects are leaking?
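For anyone following along, here is a minimal sketch of the kind of tracemalloc run being asked for. It is not taken from the gist; `top_allocation_growth` and `leaky_workload` are hypothetical names, and the "leak" is just a list that keeps growing.

```python
import tracemalloc


def top_allocation_growth(workload, limit=5):
    """Run `workload` and return the `limit` largest allocation diffs by line."""
    tracemalloc.start(10)  # keep up to 10 frames per allocation traceback
    before = tracemalloc.take_snapshot()
    workload()
    after = tracemalloc.take_snapshot()
    tracemalloc.stop()
    # compare_to() sorts by absolute size difference, largest first
    return after.compare_to(before, "lineno")[:limit]


if __name__ == "__main__":
    retained = []  # hypothetical stand-in for a leak: a list that never shrinks

    def leaky_workload():
        for _ in range(1000):
            retained.append(bytearray(1024))

    for stat in top_allocation_growth(leaky_workload):
        print(stat)
```

Note that tracemalloc only sees memory allocated through Python's allocators, so a leak inside a C library would not show up here.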

I've been trying to, but I ran into: https://bugs.python.org/issue33565 :( You wouldn't believe the pain I've had trying to diagnose several leaks in our application.

Python version? Have you tried with older Aiohttp releases?

Python 3.6.5; aiohttp 2.3.10 shows the same leak

FYI: looks like the warnings fix does not solve the leak (entirely)

Ok after getting the warnings fix, no significant leaks are detected by tracemalloc however memory is definitely increasing. I'm guessing a third party library is leaking like openssl.

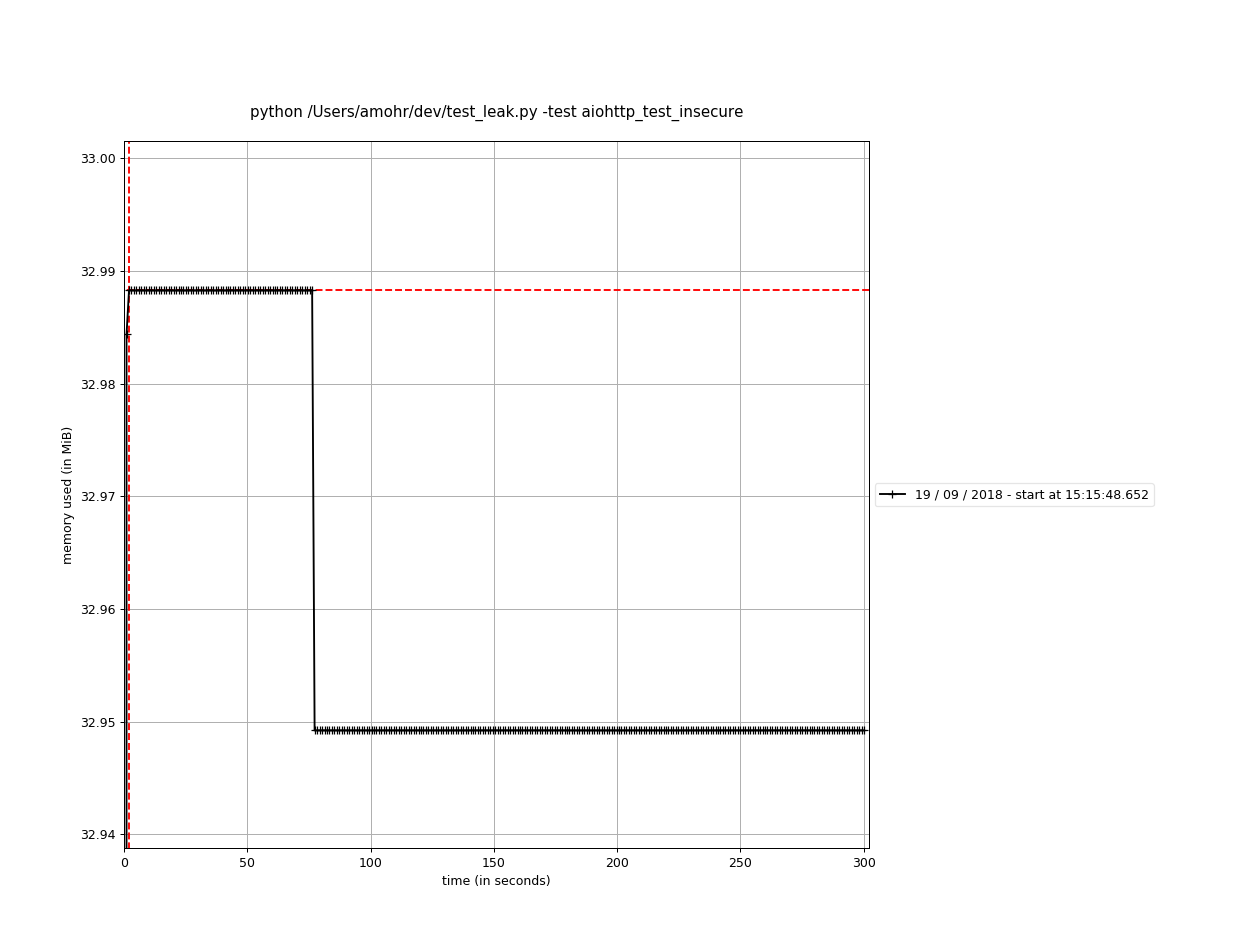

memory usage graph generated (w/o tracemalloc usage of course):

similarly objgraph is not showing any issues
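A rough stdlib-only approximation of the "which object types are growing" check that objgraph does can be sketched as below. This is an illustration, not objgraph's implementation; `type_counts`, `growth` and the `Connection` class are hypothetical names. It only sees objects tracked by the garbage collector, so plain bytes or ints are not counted.

```python
import gc
from collections import Counter


def type_counts():
    """Count live, GC-tracked objects by type name."""
    gc.collect()  # drop collectable garbage so only reachable objects are counted
    return Counter(type(obj).__name__ for obj in gc.get_objects())


def growth(before, after, limit=10):
    """Return (type name, increase) pairs for the types that grew most."""
    delta = after - before  # Counter subtraction keeps positive counts only
    return delta.most_common(limit)


if __name__ == "__main__":
    class Connection:  # hypothetical class standing in for a leaked object
        pass

    before = type_counts()
    leaked = [Connection() for _ in range(500)]
    after = type_counts()
    print(growth(before, after))
```

If a check like this stays flat while process memory keeps climbing, that points away from Python-level objects and toward native allocations.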

I've updated my script and I think I have a testcase against raw asyncio sockets, re-validating now.

@asvetlov I've updated my test script per the gist link above which allows you to run w/ memory_profiler or tracemalloc, I've created tests for botocore/aiobotocore/aiohttp and asyncio (see -test param)

botocore no longer leaks with the warnings fix, however aiohttp still leaks.

I tried creating a raw asyncio http client based on aiohttp's implementation but have been unable to reproduce the leak with it. If you run the script w/ tracemalloc you'll see it does not report any leaks, nor does objgraph. So it sounds like there's a native leak somewhere.

Any idea how to get the leak to reproduce with the native asyncio client in my script, or even better what the leak is?

No idea yet :(

btw this may be unrelated but in a separate project I noticed that simply using PYTHONMALLOC=malloc caused a leak to disappear

I believe I may be running into this same issue. I'm running into massive memory leaks doing SSL requests. tracemalloc, mem_top, and friends don't report anything that odd. If anything, the number of objects and memory used by them seem to decrease as actual memory usage increases.

EDIT: For clarity, I want to mention that I'm not using aiobotocore, just straight aiohttp, and I'm not interacting with AWS.

Well, I was able to fix my issue.

It was as simple as this:

connector = aiohttp.TCPConnector(enable_cleanup_closed=True)

session = aiohttp.ClientSession(connector=connector)

The webserver I'm hitting must have a broken SSL implementation. Dunno if this would fix the issue that @thehesiod is having, however.

Some additional information that may be related to this issue:

I'm having a large memory leak trying to upload binary files (video) to AWS S3.

I've done several tests and I can confirm that memory leak is caused by calling aiobotocore.s3.put_object. What is interesting is memory usage that I have after running a test case:

- Case 1 - 100 files uploaded, File size - 2 MB, Test is over and I measure resident memory:

Memory before GC - 297052

Memory after GC - 215856

- Case 2 - 100 files uploaded, File size - 20 MB, Test is over and I measure resident memory:

Memory before GC - 1.977g

Memory after GC - 98452

The same processing without saving files to S3 has a resulting memory footprint of around 80 MB, and GC doesn't change it much.

Because of this I have 2 basic assumptions:

1) Some native code messes up reference count for byte-arrays preventing them from being released properly.

2) memory leak size is somehow related to the size of the files being processed (calculation of file size, hash, etc.). For some reason resulting memory leak is much smaller for big files (since they don't fit in buffer or some other reason).

I will try to investigate this issue more deeply to find out exactly how the file contents are processed and what may cause these issues.
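The before/after GC measurement described above could be taken along these lines. This is only a sketch under assumptions: it is Linux-only (it reads `/proc/self/statm`), and `rss_kib` and `rss_around_gc` are hypothetical helper names, not something from the commenter's test.

```python
import gc
import os


def rss_kib():
    """Current resident set size in KiB; Linux-only, read from /proc/self/statm."""
    with open("/proc/self/statm") as statm:
        resident_pages = int(statm.read().split()[1])  # 2nd field: resident pages
    return resident_pages * os.sysconf("SC_PAGE_SIZE") // 1024


def rss_around_gc():
    """Return (RSS before, RSS after, unreachable objects found) for a full collection."""
    before = rss_kib()
    unreachable = gc.collect()  # returns the number of unreachable objects found
    after = rss_kib()
    return before, after, unreachable


if __name__ == "__main__":
    before, after, unreachable = rss_around_gc()
    print(f"RSS before GC: {before} KiB, after GC: {after} KiB, "
          f"unreachable objects: {unreachable}")
```

A large drop after `gc.collect()` would suggest the memory was being held by reference cycles rather than lost outright, although the allocator does not always hand freed memory back to the OS, so the numbers are only a rough guide.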

enable_cleanup_closed=True

Doesn't work in my case :(

updated my test script with some new options, and ran with python3.7 (to avoid warning leak) with:

aiobotocore 0.9.4

aiodns 1.1.1

aiohttp 3.3.2.1

async-timeout 3.0.0

attrs 18.1.0

awscli 1.15.59

boto3 1.7.58

botocore 1.10.58

cchardet 2.1.1

certifi 2018.4.16

chardet 3.0.4

colorama 0.3.9

datadog 0.22.0

decorator 4.3.0

docutils 0.14

entrypoints 0.2.3

graphviz 0.9

idna 2.7

jmespath 0.9.3

kiwisolver 1.0.1

memory-profiler 0.54.0

msgpack-python 0.5.6

multidict 4.3.1

nameparser 0.5.7

objgraph 3.4.0

packaging 17.1

pip 18.0

psutil 5.4.6

pyasn1 0.4.4

pycares 2.3.0

PyGithub 1.40

PyJWT 1.6.4

pyparsing 2.2.0

Pypubsub 4.0.0

python-dateutil 2.7.3

python-json-logger 0.1.9

PyYAML 3.13

requests 2.19.1

rsa 3.4.2

schema 0.6.8

setuptools 39.0.1

simplejson 3.16.0

six 1.11.0

ujson 1.35

urllib3 1.23

wrapt 1.10.11

wxPython 4.0.3

yarl 1.2.6

so this seems to definitely be an SSL related leak

FYI:

>>> ssl.OPENSSL_VERSION

'OpenSSL 1.0.2o 27 Mar 2018'

another interesting note is that I had to add a test limiter to the insecure test because it's SOOO much faster than the https test, so some serious https optimization is needed

Do you use uvloop? If yes, which version?

@hellysmile me? no, just regular asyncio loop, you can see in my gist link above

ok, looks like I'm now able to reproduce the leak with my raw asyncio socket test, see:

logged python bug: https://bugs.python.org/issue34745
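For readers who don't want to open the gist, a raw asyncio HTTPS GET looks roughly like the sketch below. This is not the gist's code; `build_request` and `https_get` are hypothetical names, the host is up to you, and `wait_closed()` assumes Python 3.7+.

```python
import asyncio
import ssl


def build_request(host, path="/"):
    """Build a minimal HTTP/1.1 GET request that asks the server to close afterwards."""
    return (
        f"GET {path} HTTP/1.1\r\n"
        f"Host: {host}\r\n"
        "Connection: close\r\n"
        "\r\n"
    ).encode("ascii")


async def https_get(host, path="/", port=443):
    """Open a TLS connection with asyncio streams and return the raw response bytes."""
    context = ssl.create_default_context()
    reader, writer = await asyncio.open_connection(host, port, ssl=context)
    try:
        writer.write(build_request(host, path))
        await writer.drain()
        return await reader.read()  # read until the server closes the connection
    finally:
        writer.close()
        await writer.wait_closed()  # Python 3.7+
```

Calling `https_get` in a loop of a few hundred iterations under a memory profiler is roughly the shape of test being discussed here.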

@thehesiod what about trying with uvloop?

@hellysmile updated gist with uvloop support, still leaks w/ uvloop

Not sure if I hit the wide side of the barn with it, but recently I was tracing memory leak in aiohttp app and came to this: https://github.com/aio-libs/multidict/issues/307

For some reason latest aiohttp still depends on a buggy version. Upgrading multidict fixed the issue for me.

@whale2 multidict memleak is not related to the issue: it was introduced by multidict 4.5.0 (2018-11-19) and finally fixed by 4.5.2 (2018-11-28).

@thehesiod used older multidict version for his measurements.

ya, plus I was able to repro with raw asyncio sockets per my cmt above

looks like a fix has been proposed in https://bugs.python.org/issue34745! When I get a chance I need to try it, or if anyone else gets a chance, please do!

@thehesiod the graph in https://github.com/python/cpython/pull/12386 is the result of a slightly simplified version of your gist, would that do?

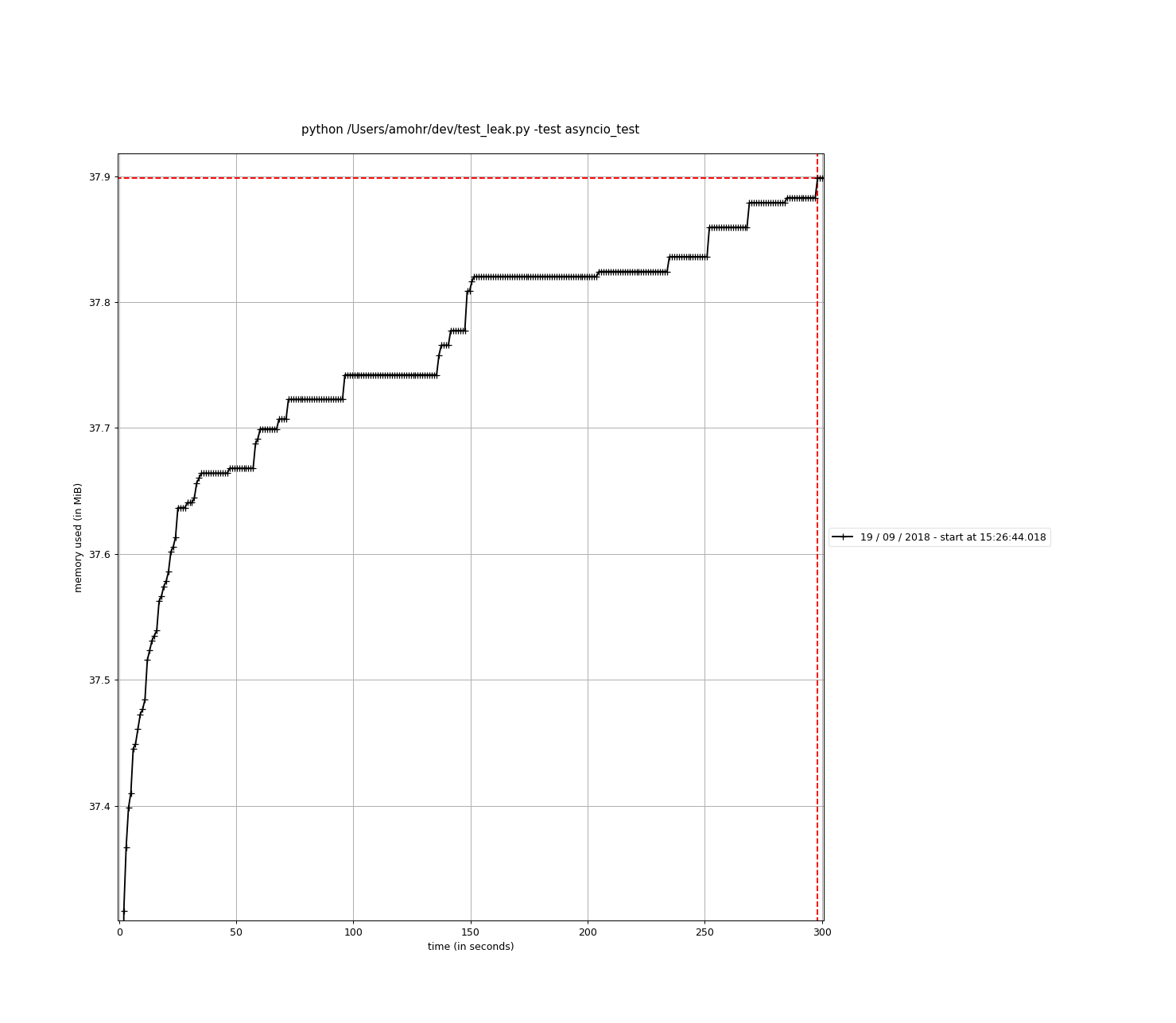

thanks @fantix , just ran my test w/ the aiohttp_test scenario with a build based on your PR (and a ported warnings fix for 3.6) and got the below graph, you rock! So happy I'm going to port this to our prod instances tonight! :)

closing this issue! ty!

Glad it works for you!

@fantix looking at the new graph again, what's a little worrying is that memory is still increasing, though much more slowly. Definitely lower priority than the original issue

@thehesiod yeah, I feel you. I think we should have more of the same refcount-only tests like gevent to guarantee the core of asyncio or uvloop (maybe aiohttp too, didn't check) does not produce circular references.
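One possible shape for such a refcount-only check is sketched below. It assumes CPython (where an object is freed immediately once its reference count hits zero), and `freed_by_refcount_alone`, `Plain` and `SelfReferencing` are hypothetical names for illustration; it is not how gevent, asyncio or uvloop actually test this.

```python
import gc
import weakref


def freed_by_refcount_alone(factory):
    """Return True if the object built by `factory` dies as soon as its last
    reference is dropped, with the cyclic garbage collector switched off."""
    was_enabled = gc.isenabled()
    gc.disable()
    try:
        obj = factory()
        ref = weakref.ref(obj)
        del obj
        return ref() is None  # still alive means a reference cycle kept it around
    finally:
        if was_enabled:
            gc.enable()


class Plain:  # hypothetical class with no cycles
    pass


class SelfReferencing:  # hypothetical class that points back at itself
    def __init__(self):
        self.me = self


if __name__ == "__main__":
    print(freed_by_refcount_alone(Plain))
    print(freed_by_refcount_alone(SelfReferencing))
```

In a real test suite the factory would build and tear down something like a transport or protocol object, and the assertion would fail whenever a cycle sneaks in.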